Developer Guide

FPGA Optimization Guide for Intel® oneAPI Toolkits

Mapping Arrays and Their Accesses to Hardware

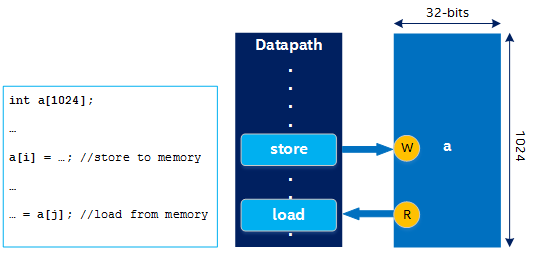

Similar to mapping statements to specialized hardware operations, the compiler maps arrays to hardware memories based on memory access patterns and variable sizes. The datapath interacts with this memory through load/store units (LSUs), which are inferred from array accesses in the source code.
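To make the idea of inferred LSUs concrete, here is a minimal sketch written as an ordinary C++ function so that it can be compiled and run on a CPU. The names `kSize` and `sum_of_squares` are hypothetical and do not come from this guide. The comments describe what a compiler could plausibly infer from each array access if the same statements appeared in a kernel; the actual mapping depends on the compiler and its optimizations.

```cpp
#include <cstddef>

// Hypothetical array size, chosen only for illustration.
constexpr std::size_t kSize = 8;

// Plain C++ stand-in for a kernel body. In a real kernel, the same
// statements would appear inside the kernel function.
int sum_of_squares(const int (&input)[kSize]) {
  // A local array like this is a candidate for an on-chip hardware memory.
  int scratch[kSize];

  for (std::size_t i = 0; i < kSize; ++i) {
    // input[i] is a read access (a load LSU could be inferred).
    // scratch[i] = ... is a write access (a store LSU could be inferred).
    scratch[i] = input[i] * input[i];
  }

  int total = 0;
  for (std::size_t i = 0; i < kSize; ++i) {
    // scratch[i] is a read access (another load LSU could be inferred).
    total += scratch[i];
  }
  return total;
}
```

Each distinct access site in the source is what the text above calls an array access, so this function contains two read sites and one write site.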

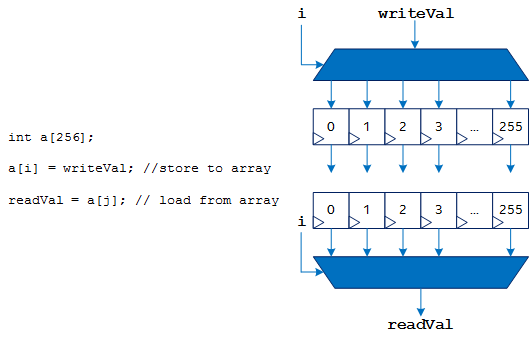

The following figure illustrates a simple example of mapping arrays and their accesses to hardware:

A RAM has a limited number of read and write ports, whereas a datapath can contain many LSUs. When the number of LSUs does not match the number of available read and write ports, the compiler applies techniques such as replication, double-pumping, sharing, and arbitration. For more information, refer to Kernel Memory.
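The port mismatch can be reasoned about with a small back-of-the-envelope model. The sketch below is not the compiler's algorithm; it assumes that every replicate holds a full copy of the array, so each store LSU needs a port on every replicate, while load LSUs are divided among the replicates. The function name `replicates_needed` and its parameters are hypothetical.

```cpp
#include <cstdint>

// Simplified model (an assumption, not the compiler's actual algorithm):
//  - every replicate holds a full copy of the array,
//  - every store LSU occupies one port on every replicate,
//  - load LSUs are split across the replicates.
// Returns the number of replicates needed, or 0 when replication alone
// cannot satisfy the accesses (sharing or arbitration would be required).
std::uint32_t replicates_needed(std::uint32_t load_lsus,
                                std::uint32_t store_lsus,
                                std::uint32_t ports_per_replicate) {
  if (store_lsus > ports_per_replicate) {
    return 0;  // the stores alone do not fit on one replicate
  }
  if (load_lsus == 0) {
    return 1;  // a single copy is enough when nothing reads concurrently
  }
  if (store_lsus == ports_per_replicate) {
    return 0;  // no port is left for reads on any replicate
  }
  const std::uint32_t read_ports = ports_per_replicate - store_lsus;
  // Ceiling division: each replicate serves up to read_ports load LSUs.
  return (load_lsus + read_ports - 1) / read_ports;
}
```

Under this model, one store and three loads on a two-port RAM need three replicates, whereas treating the same RAM as having four ports (as double-pumping effectively does) brings the count down to one.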

FPGAs provide specialized hardware block RAMs that you can configure and combine to match the size of your arrays. Doing so can provide many terabytes per second of on-chip memory bandwidth because each of these memories can interact with the datapath simultaneously.
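The bandwidth claim follows from simple arithmetic: aggregate bandwidth is the number of block RAMs times the ports per block, the port width, and the clock frequency. The sketch below shows that calculation. The function name and every figure in the usage note are illustrative assumptions, not values taken from this guide or from any device datasheet.

```cpp
#include <cstdint>

// Peak aggregate on-chip bandwidth in bytes per second, assuming every
// port of every block RAM transfers one word on every clock cycle.
std::uint64_t aggregate_bandwidth_bytes_per_sec(std::uint64_t block_count,
                                                std::uint64_t ports_per_block,
                                                std::uint64_t port_width_bits,
                                                std::uint64_t clock_hz) {
  const std::uint64_t bits_per_sec =
      block_count * ports_per_block * port_width_bits * clock_hz;
  return bits_per_sec / 8;  // convert bits to bytes
}
```

For example, with a hypothetical 10,000 block RAMs, 2 ports per block, 40-bit ports, and a 300 MHz clock, the function returns 30,000,000,000,000 bytes per second, or 30 TB/s. This is a theoretical peak that is reached only if every memory is accessed on every cycle.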

Arrays can also be implemented directly in your kernel datapath. In this case, the array contents are stored in registers in the datapath when your algorithm is pipelined (as discussed in Pipelining). Storing array contents in registers can improve performance in some cases, but whether to implement an array as registers or as a memory is a design decision.

When you access an array that is implemented as registers, LSUs are not used. The compiler might choose to use a select or a barrel shifter instead.
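The difference between constant and dynamic indexing of a register-based array can be sketched in plain C++. The names `kTaps`, `shift_in`, and `read_dynamic` are hypothetical, and the comments describe hardware that a compiler might plausibly produce, not a guaranteed result.

```cpp
#include <array>
#include <cstddef>

// Hypothetical size, small enough to be a reasonable candidate for registers.
constexpr std::size_t kTaps = 4;

// Shift-register update. After full unrolling, every index is a compile-time
// constant, so each element can be wired directly to its neighbor.
void shift_in(std::array<int, kTaps>& regs, int sample) {
  for (std::size_t i = 0; i + 1 < kTaps; ++i) {
    regs[i] = regs[i + 1];
  }
  regs[kTaps - 1] = sample;
}

// Read with a run-time index. Registers have no address port, so the
// hardware has to choose among all elements, which behaves like a select.
// The loop spells out that choice explicitly.
int read_dynamic(const std::array<int, kTaps>& regs, std::size_t index) {
  int value = 0;  // returned unchanged if index is out of range
  for (std::size_t i = 0; i < kTaps; ++i) {
    if (i == index) {
      value = regs[i];
    }
  }
  return value;
}
```

Starting from an all-zero array and calling `shift_in` with the samples 1 through 5 leaves the contents as 2, 3, 4, 5, so `read_dynamic(regs, 0)` yields 2 and `read_dynamic(regs, 3)` yields 5. The cost of the select grows with the array size, which is one reason the choice between registers and memories is a design decision.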