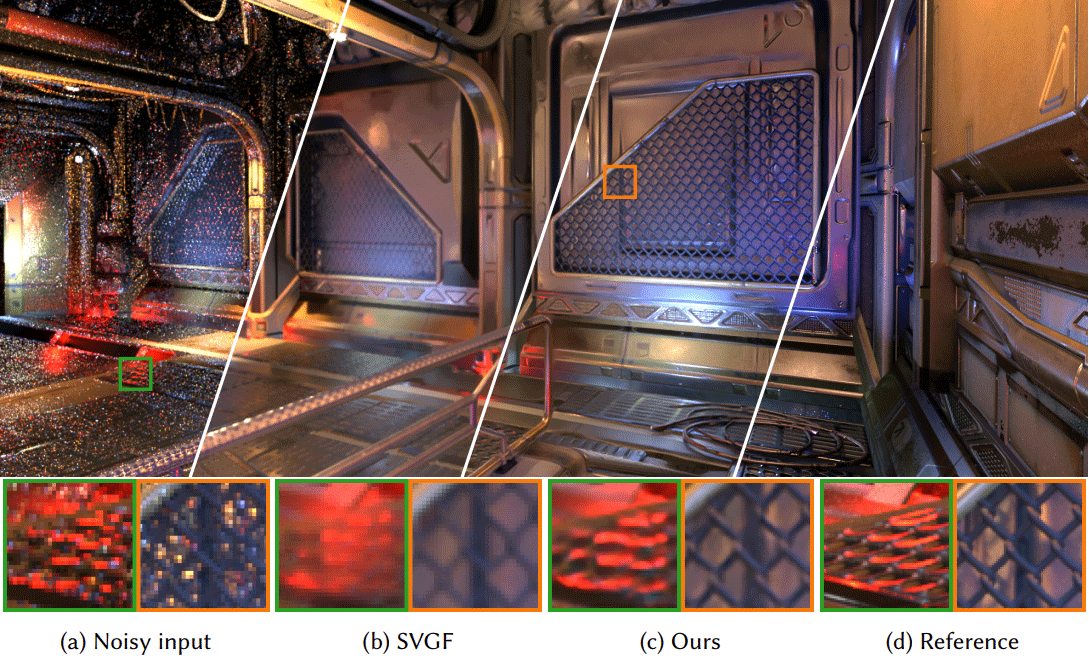

Given noisy, low-resolution input, our network performs spatiotemporal filtering to produce denoised and antialiased output at twice the resolution. Compared to conventional denoisers, our method preserves more detail and contrast and generates higher-resolution output at a similar computational cost.

By Manu Mathew Thomas, Gabor Liktor, Christoph Peters, SungYe Kim, Karthik Vaidyanathan, Angus G. Forbes

Abstract

Recent advances in ray tracing hardware bring real-time path tracing within reach, and ray traced soft shadows, glossy reflections, and diffuse global illumination are now common features in games. Nonetheless, ray budgets are still limited. This results in undersampling, which manifests as aliasing and noise. Prior work addresses these issues separately. While temporal supersampling methods based on neural networks have gained wide use in modern games due to their better robustness, neural denoising remains challenging because of its higher computational cost.

We introduce a novel neural network architecture for real-time rendering that combines supersampling and denoising, thus lowering the cost compared to two separate networks. This is achieved by sharing a single low-precision feature extractor with multiple higher-precision filter stages. To reduce cost further, our network takes low-resolution inputs and reconstructs a high-resolution denoised supersampled output. Our technique produces temporally stable high-fidelity results that significantly outperform state-of-the-art real-time statistical or analytical denoisers combined with TAA or neural upsampling to the target resolution.
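The data flow described above (one shared feature pass, several filter stages that reuse it, and output at twice the input resolution) can be sketched in a few lines. This is only an illustration under loud assumptions, not the paper's network: the learned layers are replaced by a hand-written 3x3 box filter, the mixed-precision aspect and the temporal component are not modeled, and every name (`extract_features`, `filter_stage`, `upsample_2x`, `denoise_and_upsample`) is hypothetical.

```python
# Illustrative data flow only. All functions are hypothetical stand-ins,
# not the architecture from the paper.

def box_mean(img, y, x):
    """Mean of the 3x3 neighbourhood around (y, x), clamped at the borders."""
    h, w = len(img), len(img[0])
    vals = [img[min(max(y + dy, 0), h - 1)][min(max(x + dx, 0), w - 1)]
            for dy in (-1, 0, 1) for dx in (-1, 0, 1)]
    return sum(vals) / 9.0

def extract_features(img):
    """Stand-in for the shared feature extractor: one blend weight per pixel.

    Pixels that deviate strongly from their neighbourhood get a larger weight.
    """
    return [[min(1.0, abs(img[y][x] - box_mean(img, y, x)))
             for x in range(len(img[0]))]
            for y in range(len(img))]

def filter_stage(img, weights):
    """One filter stage: blend each pixel towards its 3x3 mean by its weight."""
    return [[(1.0 - weights[y][x]) * img[y][x] + weights[y][x] * box_mean(img, y, x)
             for x in range(len(img[0]))]
            for y in range(len(img))]

def upsample_2x(img):
    """Nearest-neighbour upsampling to twice the width and height."""
    out = []
    for row in img:
        wide = [v for v in row for _ in (0, 1)]
        out.append(wide)
        out.append(list(wide))
    return out

def denoise_and_upsample(noisy, num_stages=2):
    """Compute features once, reuse them in every stage, then upsample."""
    weights = extract_features(noisy)  # shared across all filter stages
    img = noisy
    for _ in range(num_stages):
        img = filter_stage(img, weights)
    return upsample_2x(img)

# Usage: a flat 4x4 grey image with a single bright outlier pixel.
noisy = [[0.5] * 4 for _ in range(4)]
noisy[1][1] = 1.0
result = denoise_and_upsample(noisy)
```

The point of the sketch is the cost structure: `extract_features` runs once per frame regardless of how many filter stages follow, which mirrors the saving the abstract attributes to sharing one extractor instead of running two separate networks.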

Downloads:

PDF: Temporally Stable Real-Time Joint Neural Denoising and Supersampling (121 MB)

Research Area: ray tracing, denoising.

Published in High Performance Graphics 2022


Intel Graphics Research