Introduction

Red Hat* and Intel are responding to the industry need for a cloud-based platform optimized for data science operations and built with open source components. Their joint solution combines oneAPI-powered Intel AI solutions (that is, AI Tools [formerly Intel® AI Analytics toolkit] and OpenVINO™ toolkits), cnvrg.io*, and Intel Gaudi software integrated into Red Hat* OpenShift* AI.

Solutions for Data Science Productivity

Red Hat OpenShift AI is a comprehensive end-to-end environment to accelerate time-to-market for container-based AI solutions. As a cloud service, Red Hat OpenShift AI gives data scientists and developers a powerful AI and machine learning platform for building intelligent applications. Intel’s integrated toolkits amplify these capabilities.

Red Hat OpenShift AI is designed to solve data science challenges by:

- Choosing and deploying the right machine learning and deep learning tools (for example, open source tooling, Jupyter* Notebooks, TensorFlow*, PyTorch*, Kubeflow*, and commercial partners).

- Reducing the time required to train, test, select, and retrain machine learning models that provide the highest predictive accuracy.

- Improving performance of model training and inference tasks using software and hardware acceleration.

- Reducing reliance on IT operations to provision and manage infrastructure.

- Improving collaboration between data engineers and software developers to build intelligent applications.

What is AI and Machine Learning on Red Hat* OpenShift* AI?

Red Hat OpenShift AI is built on Red Hat OpenShift, an enterprise-grade container-orchestration platform that takes advantage of open source technologies such as Docker*, Kubernetes*, Tekton*, and others.

Red Hat OpenShift AI and Intel® AI tools use the OpenShift platform to help organizations make the most out of their data—curating and ingesting it, creating models, and deploying them into production—using business processes for data governance, quality assessment, and integration.

What is AI and Machine Learning on Intel?

Intel's approach to AI and machine learning is driven by three principles, which are essential to the future of AI:

- Developing intelligent systems.

- Optimizing hardware and software resources.

- Collaborating with industry-leading partners to offer development platforms.

Intel teamed up with Red Hat to integrate its AI portfolio into Red Hat OpenShift AI. The service is available on the Amazon* cloud, which runs on Intel hardware and software, and it includes integration with the AI Tools powered by oneAPI. (AI Tools include essential tools for analyzing, visualizing, and optimizing data sets for machine and deep learning workloads.)

Understanding Red Hat OpenShift AI

Red Hat OpenShift AI combines, in one common platform, the self-service experience that data scientists and developers want with the confidence that enterprise IT demands. It provides a set of widely used open source data science tools for building intelligent applications, enabling developers to take advantage of the latest Intel technologies.

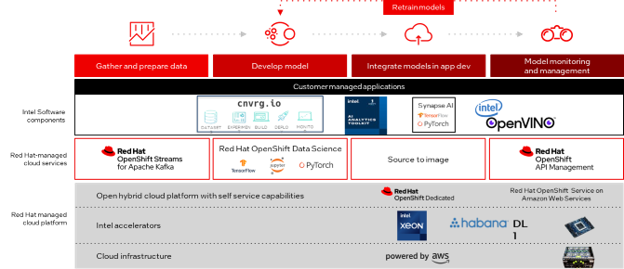

Figure 1: AI workflows using Intel's AI tools, frameworks, and solutions on Red Hat OpenShift AI.

Red Hat OpenShift AI Components

The platform is built on widely used open source AI frameworks—JupyterLab*, PyTorch*, TensorFlow, and more—and integrates with a core set of Intel technologies such as the aforementioned AI Tools, plus the OpenVINO toolkit, Intel® Tiber™ AI Studio (formerly cnvrg.io), and Intel® Gaudi® software using Amazon EC2* DL1 instances.

Collectively, this makes it easier for data scientists to quickly get started without having to worry about managing the underlying infrastructure.

Let’s look at the key details of each Intel technology and how they work with Red Hat OpenShift AI.

AI Tools accelerate end-to-end machine learning and data analytics pipelines with frameworks and libraries optimized for Intel architectures, including:

- Intel® Distribution for Python*, a distribution of the popular Python language, which provides drop-in performance enhancements for your existing Python code with minimal code changes

- Intel® Optimizations for TensorFlow* and PyTorch* to accelerate deep learning training and inference

- Model compression for DL inference with the Intel® Neural Compressor

- Model Zoo for Intel® Architecture, which provides popular pretrained deep learning models to run on Intel® Xeon® Scalable processors, and deep learning reference models on the Intel Gaudi GitHub*

- Optimizations for CPU- and multicore-intensive packages, with Intel-optimized versions of scikit-learn* and XGBoost*, and distributed pandas DataFrame processing in Intel® Distribution of Modin*
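The "drop-in" idea in the list above can be illustrated with a small import helper. This is only a sketch, not part of AI Tools: the helper name `import_first_available` is hypothetical, and it assumes the accelerated package exposes the same API as the stock one, as Modin's `modin.pandas` module aims to do for pandas.

```python
import importlib
from types import ModuleType
from typing import Sequence


def import_first_available(candidates: Sequence[str]) -> ModuleType:
    """Return the first module in `candidates` that can be imported.

    Hypothetical helper: list the accelerated package first and the
    stock package last, so the rest of the code stays unchanged.
    """
    for name in candidates:
        try:
            return importlib.import_module(name)
        except ImportError:
            continue  # Not installed in this environment; try the next one.
    raise ImportError(f"None of these modules are installed: {list(candidates)}")
```

In a notebook, a call such as `pd = import_first_available(["modin.pandas", "pandas"])` would pick Modin when it is installed and fall back to stock pandas otherwise, leaving later `pd.read_csv(...)` or `pd.DataFrame(...)` calls untouched.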

OpenVINO toolkit accelerates edge-to-cloud, high-performance model inference including:

- Support for multiple deep learning frameworks—TensorFlow, Caffe*, PyTorch, MXNet*, Keras*, ONNX*, and more

- Applicability across several DL tasks such as computer vision, speech recognition, and natural language processing

- Easy deployment of model server at scale in OpenShift

- Support for multiple storage options (S3, Azure Blob, GCS, local)

- Configurable resource restrictions and security context with OpenShift resource requirements

- Quantization, filter pruning, and binarization to compress models

- Configurable service options depending on infrastructure requirements
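To give a sense of what quantization means in the list above, here is a minimal pure-Python sketch of symmetric 8-bit quantization. It is not the OpenVINO toolkit's API or its actual algorithm; the function names and the single shared scale are assumptions chosen to keep the illustration short.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # Avoid dividing by zero when every weight is 0 (or the list is empty).
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]
```

Each weight is stored as one small integer plus a single shared scale instead of a full-precision float, which is where the compression comes from. The price is a rounding error of at most about half a scale step per weight.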

Intel Tiber AI Studio will extend Red Hat OpenShift AI enterprise-grade MLOps capabilities later this year with out-of-the-box, end-to-end MLOps tooling, including:

- Advanced MLOps platform to automate the continuous training and deployment of AI and machine learning models

- Management of the entire lifecycle: data preprocessing, experimentation, training, testing, versioning, deployment, monitoring, and automatic retraining

- Enablement to train and deploy on any infrastructure at scale

- Managed Kubernetes deployment on any cloud or on-premises environment

- Open and flexible data science platform, which integrates any open source tool

Intel Gaudi software-based Amazon EC2 DL1 instances for DL workloads will be available through the Red Hat OpenShift AI platform later this year. Intel Gaudi software is designed to accelerate model delivery, reduce time-to-train and cost-to-train, and facilitate building new or migrating existing models to Intel Gaudi software solutions, as well as deploying them in production environments. Intel Gaudi software benefits include:

- Easy access to Intel Gaudi software-based Amazon EC2 DL1 training instances from Red Hat OpenShift AI

- Reduction in total cost of ownership (TCO) with a competitive price/performance ratio

- Streamlined training and deployment for data scientists and developers with Habana GitHub and Habana SynapseAI software stack featuring integrated TensorFlow and PyTorch frameworks, documentation, tools, support, reference models, and developer forum

Red Hat OpenShift AI Benefits for Data Scientists & Development Teams

- Eliminates complex Kubernetes setup tasks – Includes support for a full-featured, managed Red Hat OpenShift AI environment and is ready for rapid development, training, and testing.

- Efficient management of software lifecycles – Through the managed cloud service, Red Hat updates the platform and integrated AI tooling like Jupyter Notebook, PyTorch, and TensorFlow libraries. Kubernetes operators validate security provisions and automate management of components in the container stack, helping to avoid downtime and minimizing manual maintenance tasks.

- Provides specialized components and partner support within Jupyter Notebooks – Data scientists can work with familiar tools or tap into a dynamic technology partner ecosystem for deeper AI and machine learning expertise, including the AI Tools and OpenVINO toolkit.

- Streamlines development of data analytics solutions – Create models and refine them—from initial pilots to containerized deployments—on a shared, consistent platform. Data scientists can work efficiently with their choice of tools and access to a self-service infrastructure.

- Publish models as end points – Using the Source-to-Image (S2I) tool built into Red Hat OpenShift AI, models are container-ready, which makes it easier to integrate them into an intelligent app. Models can be rebuilt and redeployed as part of a continuous integration/continuous delivery (CI/CD) process based on changes to the source notebook.

- Harness the power of hardware acceleration for high-performance AI workloads – Intel AI tools and solutions unlock high-performance training and inference with the power of hardware acceleration via optimized, low-level libraries used by frameworks such as TensorFlow and PyTorch.

- Optimize and deploy DL models – Using the OpenVINO toolkit, deploy performant inference solutions for Intel XPUs including various types of CPUs, GPUs, and special DL inference accelerators.

- Improve productivity and deployment portability using best-in-class AI tools – Scale your code across multiple Intel architectures using the aforementioned tools, all powered by oneAPI, without code changes.
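Once a model is published as an end point, an application typically calls it over HTTP. The sketch below builds such a request with the Python standard library only. The host name, the model name, and the `/v1/models/<name>:predict` path with an `instances` payload (the TensorFlow Serving-style REST convention) are assumptions for illustration; a given deployment may expose a different route or schema.

```python
import json
import urllib.request
from typing import List


def build_predict_request(
    base_url: str, model_name: str, instances: List[List[float]]
) -> urllib.request.Request:
    """Build (but do not send) a JSON prediction request.

    Assumes a TensorFlow Serving-style REST route; adjust the path and
    payload to match the end point that is actually deployed.
    """
    url = f"{base_url.rstrip('/')}/v1/models/{model_name}:predict"
    body = json.dumps({"instances": instances}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

An application would then pass the returned object to `urllib.request.urlopen` and parse the JSON response. Keeping request construction separate from sending makes the payload easy to inspect before any traffic reaches the cluster.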

Conclusion

Red Hat and Intel responded to the industry need for a cloud-based platform that is optimized for data science operations and built with open source components.

The solution: Red Hat OpenShift AI, a collection of open source components that help data scientists build, train, and deploy machine learning models with relative ease and speed.

Red Hat OpenShift AI is a managed cloud service provided as an add-on to the Red Hat OpenShift platform and is integrated with the latest Intel technologies to allow data scientists and application developers to quickly build and deploy intelligent applications across the hybrid cloud.

The platform’s design, including its integration with a variety of Intel AI tools, will enable users to take advantage of the latest Intel technologies and build data science applications using Red Hat OpenShift Cloud Services.

More Resources

- Developer Resources from Intel and Red Hat

- Intel and Red Hat Developer Program

- Intel AI Developer Zone

Acknowledgment

We would like to thank Team Red Hat (Christina Xu, Steven Huels, Will McGrath, Audrey Reznik, Leigh Blaylock, Kristin Anderson, Erin Britton, Jeff DeMoss) and Team Intel (Susan Lansing, Maya Perry, Ryan Loney, Jack Erikson, Navin Samuel, Renuke Mendis, Neil Dey, Tony Mongkolsmai, Raghu K Moorthy, Peter Velasquez, Rachel Oberman, Thomas Dewey) for their contributions to the blog, and Monique Torres, Katia Gondarenko, Dan Zloof, Leigh Rosenwald, and Keenan Connolly for their review and approval help.
