Test with docker-compose

You can easily run multi-container applications on various Intel platforms by importing Docker Compose YAML files hosted in external GitHub repositories. Common docker-compose configuration options such as depends_on, ports, and build are also supported.

Place a single docker-compose file in the root of the repository, because the Container Playground portal uses the first docker-compose.yml it finds in your Git repository.
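As an illustration of the options mentioned above, here is a minimal sketch of a docker-compose.yml placed at the repository root. The service names (web, worker), the ./web build directory, the port number, and the python:3.11-slim image are hypothetical and chosen only for this example; they are not taken from any Intel sample. The top-level version key is omitted as the current Compose specification allows. Whether the portal requires it is not stated here.

```yaml
# docker-compose.yml (at the root of the Git repository)
# All names below are illustrative assumptions, not portal requirements.
services:
  web:
    # build: build the image from the Dockerfile in ./web (hypothetical path)
    build: ./web
    # ports: publish container port 8080 on host port 8080
    ports:
      - "8080:8080"
  worker:
    # Use a prebuilt public image instead of building one
    image: python:3.11-slim
    command: ["python", "-c", "print('worker finished')"]
    # depends_on: start this service only after web has been started
    depends_on:
      - web
```

Note that depends_on in its short form only controls start order. It does not wait for the web service to be ready to accept connections.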

Navigate to Docker Compose

Use your Dashboard view to access the +Import Resources option and select Docker Compose.

Configure Import

Enter the configuration of the source code repository.

GIT Repo URL: Enter the root URL of your source code repository, as shown in your browser.

Private: Check this option to import from a private repository.

Secret: For private repositories, select an existing secret or create one using the +Create New Secret option. Enter your Secret Name, Git account Username, and Git Token.

To generate a token for the secret, navigate to your GitHub account Settings > Developer Settings > Personal Access Tokens > Generate New Token and ensure repo access is enabled. Your one-time token is displayed on the next page.

Click Verify at the bottom right.

Enter a unique Resource Name to identify your docker-compose resource in the Intel® Developer Cloud for the Edge portal, then select Submit to proceed to the next page.

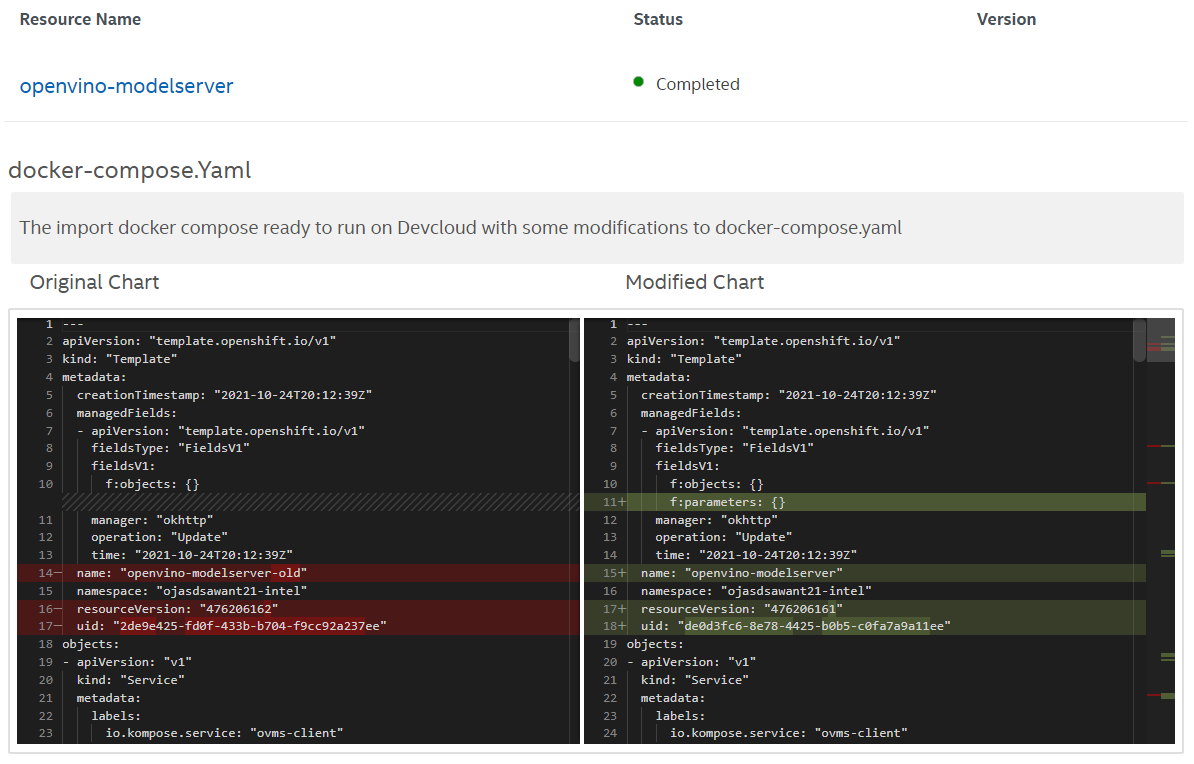

The Container Playground portal shows the changes made to your original docker-compose file that are required for it to run successfully inside your Intel® Developer Cloud for the Edge account.

You must select an existing project, or create one with the +Create New Project option, to associate with your imported resource.

(Optional) Check the Enable Routes option to additionally configure a browser-accessible URL for a service port specified in your docker-compose file.

After assigning a project, use My Library at the bottom of the page to return to the My Library view.

To launch your project, see Select Hardware and Launch Containers.

GIT Repo URL: https://github.com/intel/DevCloudContent-docker-compose

Resource Name: openvino-modelserver

Assign the resource to a new project named dockercompose-example on the changes comparison page.

Use the dashboard view to see the log output of the ovms-server container serving a detection model and the ovms-client container performing multiple gRPC inference requests. The ovms-server container remains in the running state until stopped, and the ovms-client container reaches the completed state.