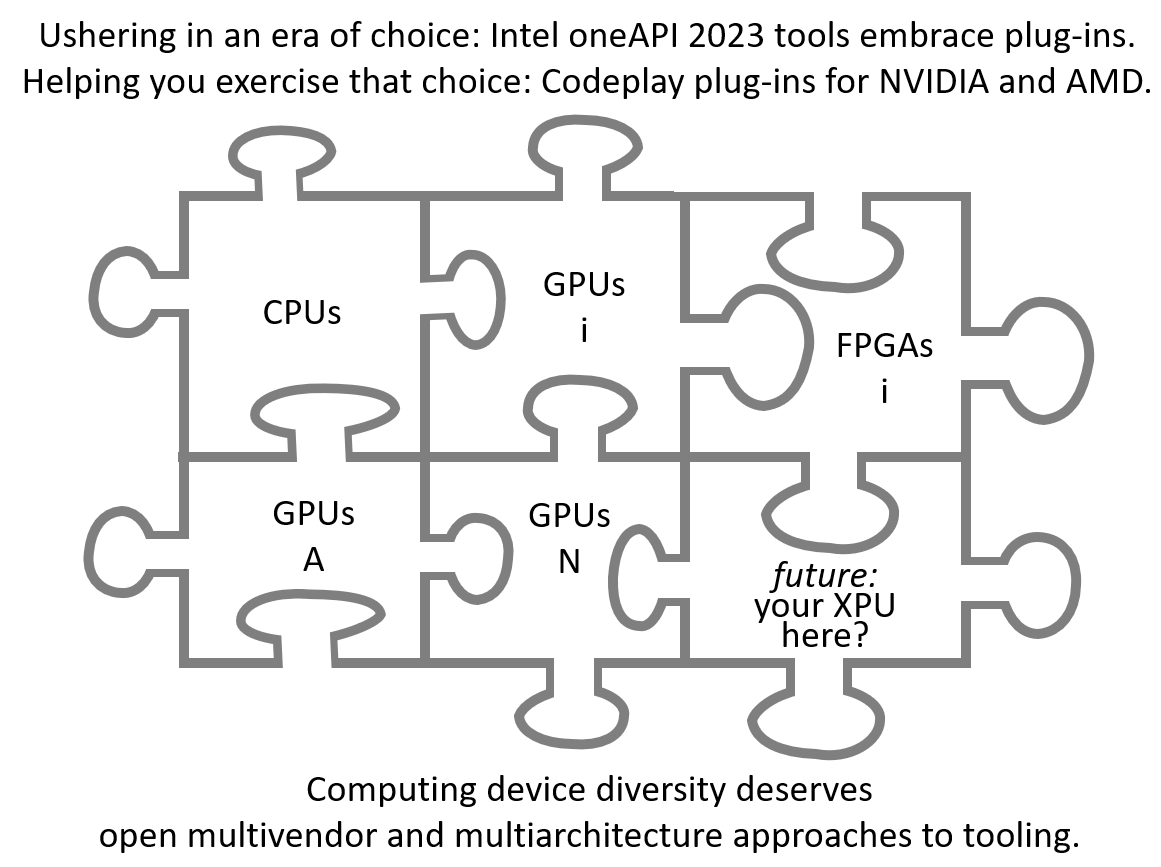

Once a year, I have the privilege of introducing the annual edition of our software development tools. This year, you'll find continued leadership in optimization and standards support, including excellent support for the latest CPUs, GPUs, and FPGAs from Intel. And you'll find an exciting new capability that enables plug-ins which add support for non-Intel devices. This opens the door for "no excuses" support for all of accelerated computing. The first two instances of offering plug-in support come via NVIDIA* and AMD* plug-ins, prebuilt from open source projects and supported by Codeplay* Software. Together, oneAPI tools and plug-ins are making real the dream of an open future for accelerated and heterogeneous computing.

Enabling NVIDIA and AMD GPU support

A bold new plug-in model enables optimized support for NVIDIA and AMD GPUs. The approach provides paths to open source support for hardware, and it also connects to closed source library options should they be preferred (e.g., cuBLAS for NVIDIA). In other words, the goal is “no excuses” access to performance for all vendors and all architectures (not just GPUs). As Intel rolls out oneAPI 2023.0, Codeplay Software offers prebuilt plug-ins for the oneAPI tools that add support for NVIDIA and AMD GPUs, with a number of free and paid technical support options.

Available Now: Intel® oneAPI 2023.

The tools are already preinstalled and ready to use on Intel® Developer Cloud.

These software development tools are available for download from Intel® Developer Zone, as well as from repositories and other channels. Linux* and macOS* tools are available immediately, and the Windows* tools will follow shortly.

For a video overview of the release, please watch the “An early look at the Intel oneAPI & AI Tools for 2023” webinar recording, part of the impressive and ever-growing collection of Tech.Decoded videos.

LLVM is Delivering Strong Value

Intel compilers, both C/C++ and Fortran, benefit from adopting LLVM. A year ago, our C/C++ LLVM implementation achieved feature and performance parity with our older “classic” compiler. Drawing on the LLVM SYCL* project, our LLVM-based C++ compiler has added the latest SYCL 2020 support available in the open source project on GitHub. With this release, our Fortran LLVM implementation reached the milestone of surpassing the features and standards support of our older “classic” compiler. This includes OpenMP* 5.x, F2003, F2008, and F2018 support, including Coarray support and DO CONCURRENT with offload to accelerators. While performance is solid in the new Fortran compiler and accelerator support is unique to the newer LLVM-based compilers, we are eager to hear more feedback from our customers about their experiences.

Community projects including SYCL and OpenMP (with OpenMP target), along with support for GPUs from multiple vendors, are among the benefits that LLVM brings to developers who use our latest tools. The new plug-in capability opens oneAPI tools to expanded support for products from more vendors. We are committed to advancing open approaches to all computing, with an emphasis on the multivendor and multiarchitecture needs of accelerated computing everywhere.

Optimized for Intel's Latest Hardware

Intel is introducing and ramping many exciting new CPU, GPU, and FPGA products; this tools release is ready to help you make the most of them.

In this release, you will find support for 4th Gen Intel® Xeon® Scalable and Intel® Xeon® CPU Max Series processors with Intel® Advanced Matrix Extensions (Intel® AMX), Intel® QuickAssist Technology (Intel® QAT), Intel® AVX-512, and bfloat16. You will also find support for Intel® Data Center GPUs, including the Flex Series with a hardware-based AV1 encoder, and the Max Series with data type flexibility, Intel® Xe Matrix Extensions (Intel® XMX), vector engine, Intel® Xe Link, and other features. Of course, these tools continue to offer optimized support for the installed base of powerful Intel CPUs, GPUs, and FPGAs.

Intel offers outstanding CPUs, GPUs, and FPGAs, and our oneAPI software supports them well. Learn more about Accelerate with Xeon® in an event on January 10, 2023, or visit the website now. Learn more about Intel® Data Center GPUs on their website. Learn more about Intel® FPGAs on their website.

AI Reference Kits

A series of open source AI reference kits, developed in collaboration with Accenture, shows data scientists and developers how to innovate and accelerate using the AI application tools. All AI models are optimized using AI tools powered by oneAPI for faster training and inference performance using fewer compute resources. The AI reference kits use components from Intel's AI software portfolio, including Intel® AI Analytics Toolkit and the Intel® Distribution of OpenVINO™ toolkit. Each kit includes model code, training data, instructions for the machine learning pipeline, libraries, and oneAPI components.

The tips and techniques found in the AI reference kits help highlight performance gains, such as the 122% boost in ‘PyTorch real-time prediction’ shown in the Visual Quality Inspection reference kit. Each kit (there are over a dozen already) is full of useful information, with actual performance results highlighted to show which techniques deliver value.

Join oneAPI to Help Create the Best Future Together

These tools and plug-ins are another important step in our journey to foster an open multivendor and multiarchitecture future for computing.

The oneAPI initiative, and its open specification, are focused on enabling an open, multiarchitecture world with strong support for software developers—a world of open choice without sacrificing performance or functionality.

Please come join the growing community of those helping refine and drive this vision.

Try the tools on your own system or on Intel® Developer Cloud. Get plug-ins from Codeplay. Join the various oneAPI groups to provide your expert advice and guidance on how to meet your needs for a productive and open solution for software development. Your participation is encouraged: come be a part of the discussion, join oneAPI standards discussions and work, or contribute to the numerous open source projects working toward an open future for accelerated and heterogeneous computing. Learn more at oneapi.io.