Processor MMIO Stale Data Vulnerabilities are a class of memory-mapped I/O (MMIO) vulnerabilities that can expose data. The sequences of operations for exposing data range from simple to very complex.

Because most of the vulnerabilities require the attacker to have access to MMIO, many environments are not affected.

System environments using virtualization where MMIO access is provided to untrusted guests may need mitigation.

Intel® Software Guard Extensions (Intel® SGX) may require mitigation.

These vulnerabilities are not transient execution attacks. However, these vulnerabilities may propagate stale data into core fill buffers where the data may subsequently be inferred by an unmitigated transient execution attack.

Mitigation for these vulnerabilities includes a combination of microcode updates and software changes, depending on the platform and usage model. Some of these mitigations are similar to those used to mitigate Microarchitectural Data Sampling (MDS) or Special Register Buffer Data Sampling (SRBDS).

These vulnerabilities have been assigned the Common Vulnerabilities and Exposures (CVE) identifiers and Common Vulnerability Scoring System (CVSS) version 3.1 scores shown in Table 1.

| Name (Acronym) | CVE (CVSS) | Affected Products | Privilege Required | Data Exposure | Mitigation Direction | Software Proposal |
|---|---|---|---|---|---|---|
| Device Register Partial Write (DRPW) | CVE-2022-21166 (5.5 Medium: AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:N/A:N) | | MMIO | fill buffers, uncore buffers | microcode and software (same Simultaneous Multi-Threading restrictions as MDS) | Software buffer overwriting if untrusted software has MMIO access |
| Update to Special Register Buffer Data Sampling (SRBDS Update) | CVE-2022-21127 (5.5 Medium: AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:N/A:N) | | Ring 3 | RDRAND, RDSEED, SGX EGETKEY | microcode | Same as SRBDS |
| Shared Buffers Data Read (SBDR) | CVE-2022-21123 (6.1 Medium: AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:L/A:N) | | MMIO | RDRAND, RDSEED, SGX EGETKEY, fill buffers, uncore buffers | microcode and software | Software buffer overwriting if untrusted software has MMIO access |
| Shared Buffers Data Sampling (SBDS) | CVE-2022-21125 (5.6 Medium: AV:L/AC:H/PR:L/UI:N/S:C/C:H/I:N/A:N) | | Ring 3, MMIO | fill buffers, uncore buffers | microcode and software | Software buffer overwriting if untrusted software has MMIO access |

Background

The uncore portion of a CPU is a section of logic that is shared by physical processor cores and provides several common services. When a processor core reads or writes memory-mapped I/O (MMIO), the transaction is normally done with uncacheable or write-combining memory types and is routed through the uncore. Depending on the target device and implementation, the transaction may travel over the primary bus (high bandwidth) or the sideband bus (low bandwidth) inside the uncore.

This article describes security issues that involve the shared logic in the uncore. The uncore has different microarchitectural implementations on server and client platforms. Malicious actors may use uncore buffers and mapped registers to leak information from the same or different hardware threads within the same physical core or across cores.

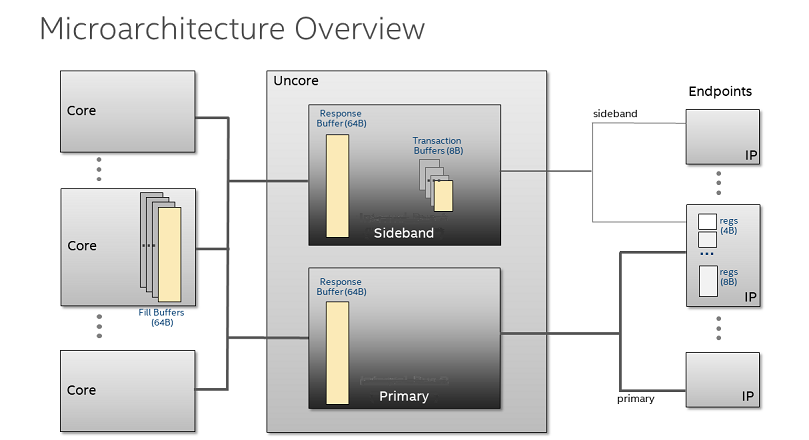

Microarchitecture Overview

Figure 1 illustrates, at a very high level, the location of and connections between these microarchitectural elements, which include:

- Uncore: System on Chip (SoC) logic shared by multiple cores that includes the core interface to the primary and sideband buses. A device or agent connected to the Intel fabric may be attached to the primary bus, the sideband bus, or both.

- Primary bus: Internal high-bandwidth SoC fabric for core/device data transfer. All discrete PCIe devices are connected to this bus.

- Sideband bus: Internal low-bandwidth SoC fabric for core/device data transfer. Agents on this bus include, among others, the input-output memory management unit (IOMMU) and the digital random number generator (DRNG).

- Endpoints: The simplified diagram in Figure 1 shows all endpoints as if they were discrete devices. Endpoints can also be integrated on the same die, in the processor package, or in a companion chipset. Example endpoints include USB, network, display, and storage controllers.

Definitions

Additional key terms used frequently in this article are defined below.

- Non-coherent transaction: A transaction that accesses destinations outside the coherent system memory space, such as configuration, memory-mapped I/O, interrupts, and messages between agents. Refer to An Introduction to the Intel® QuickPath Interconnect for more information.

- Memory Mapped I/O (MMIO): MMIO uses the processor’s physical-memory address space to access I/O devices that respond like memory components. When using MMIO, any processor instruction that references memory can be used to access an I/O port located at a physical-memory address. See Intel SDM Volume 1, section 19.3.1, Memory-Mapped I/O. For the purposes of this article, legacy I/O instructions ({IN/OUT}B, {IN/OUT}W, and {IN/OUT}L) behave like a correspondingly sized memory operation to the same I/O port address.

- Special Register Buffer Data Sampling (SRBDS): Because the entire shared staging buffer for special register reads is copied into the fill buffer of the core doing the read, that fill buffer may contain stale data from previous special register reads. On processors affected by Microarchitectural Fill Buffer Data Sampling (MFBDS) or Intel® Transactional Synchronization Extensions (Intel® TSX) Asynchronous Abort (TAA), an adversary may be able to infer data in the fill buffer entries.

- FB_CLEAR operations: This refers to explicit overwriting of fill buffers. Some processors overwrite fill buffers as part of prior MD_CLEAR[1] functionality. On these processors, VERW instructions[2] and L1D_FLUSH MSR operations perform implicit FB_CLEAR operations. On other processors that explicitly enumerate FB_CLEAR functionality (refer to the IA32_ARCH_CAPABILITIES.FB_CLEAR section), the VERW instruction performs FB_CLEAR operations, and L1D_FLUSH does not.

- Partial Write: Any write that is smaller than 8 bytes or is not 8-byte aligned.
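The partial-write definition can be expressed as a small predicate. This is a minimal sketch, assuming that "8-byte aligned" refers to the write's starting address; the names `is_partial_write`, `io_write_is_partial`, and `LEGACY_IO_WRITE_SIZES` are hypothetical and not part of any Intel interface. Legacy OUT instructions are mapped to their equivalent memory sizes, following the MMIO definition above.

```python
# Legacy I/O writes behave like memory writes of the corresponding size.
LEGACY_IO_WRITE_SIZES = {"OUTB": 1, "OUTW": 2, "OUTL": 4}


def is_partial_write(address: int, size: int) -> bool:
    """True if the write is smaller than 8 bytes or does not start on an 8-byte boundary."""
    if size <= 0:
        raise ValueError("size must be a positive number of bytes")
    return size < 8 or address % 8 != 0


def io_write_is_partial(mnemonic: str, port: int) -> bool:
    """Classify a legacy OUTB/OUTW/OUTL write using its equivalent memory size."""
    return is_partial_write(port, LEGACY_IO_WRITE_SIZES[mnemonic])


print(is_partial_write(0x1000, 8))         # False: aligned 8-byte write
print(is_partial_write(0x1004, 8))         # True: 8 bytes, but misaligned
print(io_write_is_partial("OUTL", 0xCF8))  # True: 4-byte legacy I/O write
```

Because no legacy I/O instruction writes more than 4 bytes, every legacy I/O write is a partial write under this definition.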

VERW Buffer Overwriting Details

The VERW instruction is already defined to return whether a segment is writable from the current privilege level. MD_CLEAR and FB_CLEAR enumerate that the memory-operand variant of VERW (for example, VERW m16) has been extended to also overwrite buffers affected by MMIO Stale Data vulnerabilities.

The register operand variant of VERW is not designed explicitly for this buffer overwriting functionality. The buffer overwriting occurs regardless of the result of the VERW permission check, as well as when the selector is null or causes a descriptor load segment violation. However, for lowest latency, Intel recommends using a selector that indicates a valid writable data segment.

Example usage[3]:

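```asm
sub  $8, %rsp       # reserve 8 bytes of stack for the VERW memory operand
mov  %ds, (%rsp)    # store the DS selector (a valid writable data segment, for lowest latency)
verw (%rsp)         # memory-operand VERW: overwrites the affected buffers
mov  %al, (%rdi)    # the write being protected (for example, an MMIO write to the address in %rdi)
mfence              # MFENCE;LFENCE keep younger operations from putting secret data
lfence              #   into a fill buffer entry before the older write uses it
add  $8, %rsp       # release the stack slot
```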

Note that the VERW instruction updates the ZF bit in the EFLAGS register, so exercise caution when using the above sequence in-line in existing code. Also note that the VERW instruction is not executable in real mode or virtual-8086 mode.

The microcode additions to VERW are designed to overwrite all relevant microarchitectural buffers for a logical processor regardless of what is executing on the other logical processor on the same physical core.

Fill Buffer and Side Band Stale Data

In modern processors, stale data can be copied or moved from one microarchitectural buffer or register to another. This section describes the propagators that move stale data in this way. The Data Vulnerabilities section that follows discusses the Processor MMIO Stale Data Vulnerabilities themselves: operations that result in stale data being directly read into architectural, software-visible state or sampled from a buffer or register.

As mentioned in the Background section, the microarchitecture of Intel® Xeon® server processors[4] differs from that of client processors. As a result, servers are subject to only a subset of the vulnerabilities discussed in this article. Readers interested only in server vulnerabilities should read the Device Register Partial Write (DRPW) section and then proceed to the Mitigation section.

Fill Buffer Stale Data Propagator (FBSDP)

Stale data may propagate from fill buffers (FB) into the non-coherent portion of the uncore on some non-coherent writes. Fill buffer propagation by itself does not make stale data architecturally visible. Stale data must be propagated to a location where it is subject to reading or sampling.

Operations That May Cause Writes into Non-Coherent Fabrics

The main instructions that cause writes into non-coherent fabrics are uncacheable or write-combining stores to MMIO addresses. However, other instructions and operations may also write to non-coherent fabrics, often through a microarchitectural operation known as a special register write. Examples include WRMSR or RDMSR for certain MSRs, writes to PCIe configuration space, legacy I/O instructions, power state transitions, and asynchronous events such as system management interrupts (SMI) and frequency changes.

Sideband Stale Data Propagator (SSDP)

The sideband stale data propagator (SSDP) issue is limited to the client uncore implementation.

The sideband response buffer is shared by all cores. For non-coherent reads that go to sideband destinations, the uncore logic returns 64 bytes of data to the core, including both requested data and unrequested stale data, from a transaction buffer and the sideband response buffer.

As a result, stale data from the sideband response and transaction buffers now resides in a core fill buffer. Such a propagator by itself does not cause a security issue, but it can be leveraged by vulnerabilities described in the Data Vulnerabilities section as part of an attack.
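The following toy model illustrates why a small sideband read can carry unrequested data into a core. It is a sketch under simplifying assumptions: the transaction buffer and the sideband response buffer are merged into a single 64-byte buffer shared by all cores, and the class and method names are hypothetical rather than a description of the actual hardware.

```python
LINE_SIZE = 64  # the uncore returns a full 64-byte line to the core


class SharedSidebandBuffer:
    """Toy model of a sideband response buffer shared by all cores."""

    def __init__(self) -> None:
        self.data = bytearray(LINE_SIZE)

    def transact(self, offset: int, payload: bytes) -> None:
        # Any sideband transaction leaves its bytes behind in the shared buffer.
        if offset < 0 or offset + len(payload) > LINE_SIZE:
            raise ValueError("transaction must fit in one 64-byte line")
        self.data[offset:offset + len(payload)] = payload

    def read_into_fill_buffer(self, offset: int, device_value: bytes) -> bytes:
        """Model a non-coherent sideband read: the device's response lands in the
        shared buffer, and the whole 64-byte line is returned to the reading core."""
        self.transact(offset, device_value)
        return bytes(self.data)


uncore = SharedSidebandBuffer()
uncore.transact(16, b"KEY!")                                  # core 0: earlier transaction with a secret
line = uncore.read_into_fill_buffer(0, b"\x01\x02\x03\x04")   # core 1: 4-byte read
print(line[0:4])    # b'\x01\x02\x03\x04' (requested data)
print(line[16:20])  # b'KEY!' (unrequested stale data, now in core 1's fill buffer)
```

The propagation alone exposes nothing architecturally; an attacker still needs a vulnerability such as MFBDS to read the stale bytes out of core 1's fill buffer.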

Note that the previously disclosed Special Register Buffer Data Sampling (SRBDS) vulnerability is a subset of the possible issues associated with sideband stale data propagator. In SRBDS, the data is propagated from the sideband response buffer to a core fill buffer, where it is attacked with Microarchitectural Fill Buffer Data Sampling (MFBDS).

Primary Stale Data Propagator (PSDP)

The primary stale data propagator (PSDP) is limited to the client uncore implementation.

Similar to the sideband response buffer, the primary response buffer is shared by all cores. For some processors, MMIO primary reads will return 64 bytes of data to the core fill buffer including both requested data and unrequested stale data. This is similar to the sideband stale data propagator. However, unlike the sideband stale data propagator, the primary stale data propagator propagates stale data only from previous read transactions, not from previous write transactions.

As with other propagators, primary response buffer propagation by itself does not make stale data architecturally visible.

Data Vulnerabilities

The vulnerabilities described in this section are used to make stale data architecturally visible. Some are direct operations (reads and writes) and some are sampling operations (transient-execution attacks).

Readers interested only in server[5] vulnerabilities should read the Device Register Partial Write (DRPW) section and then proceed to the Mitigation section.

Device Register Partial Write (DRPW)

Some endpoint MMIO registers incorrectly handle writes that are smaller than the register size. Instead of aborting the write or only copying the correct subset of bytes (for example, 2 bytes for a 2-byte write), more bytes than specified by the write transaction may be written to the register. On processors affected by FBSDP, this may expose stale data from the fill buffers of the core that created the write transaction.

If an endpoint device ignores byte enables, all bytes are copied to the device register, so both the write data and the stale data now reside in the device register. A subsequent read of the same register returns the written data together with the former stale data, as if all of it were valid. Endpoints with this behavior have been found both integrated on silicon and as discrete PCIe devices.
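A byte-level sketch of DRPW for a hypothetical 8-byte device register follows. It assumes the write travels to the endpoint as an 8-byte payload whose unwritten bytes come from stale data in the core's fill buffer entry; the function name and parameters are illustrative only.

```python
REG_SIZE = 8  # hypothetical 8-byte device register


def register_after_write(register: bytes, stale_entry: bytes, offset: int,
                         data: bytes, honors_byte_enables: bool) -> bytes:
    """Return the register contents after a partial write of `data` at `offset`."""
    if len(register) != REG_SIZE or len(stale_entry) != REG_SIZE:
        raise ValueError("register and fill buffer entry must be 8 bytes")
    if offset < 0 or offset + len(data) > REG_SIZE:
        raise ValueError("write must fit inside the register")

    # Payload on the fabric: the written bytes, plus stale bytes everywhere else.
    payload = bytearray(stale_entry)
    payload[offset:offset + len(data)] = data

    if honors_byte_enables:
        # Correct endpoint: only the enabled bytes change.
        updated = bytearray(register)
        updated[offset:offset + len(data)] = data
        return bytes(updated)
    # Affected endpoint: ignores byte enables and stores the whole payload.
    return bytes(payload)


reg = bytes(REG_SIZE)
stale = b"SECRET!!"  # leftover data in the fill buffer entry
print(register_after_write(reg, stale, 0, b"\xaa\xbb", honors_byte_enables=True))
# b'\xaa\xbb\x00\x00\x00\x00\x00\x00'
print(register_after_write(reg, stale, 0, b"\xaa\xbb", honors_byte_enables=False))
# b'\xaa\xbbCRET!!'
```

In the second case, a later read of the register returns `CRET!!` alongside the written bytes, which is exactly the stale data exposure described above.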

Shared Buffers Data Sampling (SBDS)

After propagators have moved data around the uncore and copied stale data into core fill buffers, processors affected by MFBDS can leak data from the fill buffer.

When a fill buffer stale data propagator is followed by the sideband stale data propagator, and then by an MFBDS attack, it is considered an SBDS attack. In addition, any combination that uses the fill buffer stale data propagator or primary stale data propagator before an MFBDS attack is also considered SBDS.
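One way to read the SBDS definition is as a rule over the ordered steps of an attack. The sketch below encodes that reading; the step labels reuse this article's acronyms, while the function name is hypothetical. A chain that uses only SSDP before MFBDS is not classified as SBDS here; the Sideband Stale Data Propagator section relates that path to SRBDS.

```python
PROPAGATORS = {"FBSDP", "SSDP", "PSDP"}


def is_sbds(chain: list[str]) -> bool:
    """Return True if an ordered chain of steps matches this article's SBDS definition.

    `chain` lists propagators in order and ends with the sampling step,
    for example ["FBSDP", "SSDP", "MFBDS"].
    """
    if not chain or chain[-1] != "MFBDS":
        return False  # SBDS always ends with an MFBDS attack
    propagators = chain[:-1]
    unknown = [step for step in propagators if step not in PROPAGATORS]
    if unknown:
        raise ValueError(f"unknown propagator(s): {unknown}")
    # FBSDP followed by SSDP qualifies, as does any chain that uses FBSDP or PSDP.
    return "FBSDP" in propagators or "PSDP" in propagators


print(is_sbds(["FBSDP", "SSDP", "MFBDS"]))  # True
print(is_sbds(["SSDP", "MFBDS"]))           # False: SRBDS-style path
```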

SRBDS Update

The original SRBDS mitigation is subject to a possible race condition: a special sideband transaction may occur between the use of the buffer and the overwriting operation. When this happens, data from RDRAND, RDSEED, and EGETKEY may remain in the buffer even after it has been overwritten.

Shared Buffers Data Read (SBDR)

Shared Buffers Data Read (SBDR) differs from Shared Buffers Data Sampling (SBDS) in that the data is directly read into the architectural, software-visible state. In processors affected by SBDR, any read that is wider than 4 bytes could potentially have stale data returned from a transaction buffer.

Implications of Vulnerabilities

The DRPW and SBDR scenarios require software to access registers with a different size or alignment than is expected by the architectural definitions of these registers. These issues are not likely to occur accidentally during normal execution and are likely to only be triggered by malicious software. These MMIO-based attacks generally require MMIO access to make stale data software-visible. Note that any data copied to fill buffers may be vulnerable to MFBDS attacks, which do not require MMIO access.

The fill buffer, sideband, and primary stale data propagators can occur during normal execution. Data may be propagated into the uncore via the fill buffer stale data propagator, and into the core via the sideband stale data propagator. Confused deputies that may propagate information should be considered. Refer to the Preventing Software from Acting as a Confused Deputy by Propagating Secret Data into Vulnerable Buffers section for more information.

Mitigation

For each vulnerability, there are two broad strategies that software can take:

- Preventing secret data from getting into buffers from which it can be extracted (blocking propagators).

- Preventing untrusted software from extracting data from vulnerable buffers (blocking vulnerabilities).

The first strategy generally involves using FB_CLEAR operations to overwrite secret data before it can be transferred to the affected buffer.

On products not affected by MFBDS or TAA vulnerabilities, there may be some software usage models or configurations where the second strategy can be employed for all untrusted software. In these cases, it is acceptable for secret data to reside in affected buffers without overwriting the buffers, because potential attackers are prevented from extracting any data. In particular, in some environments it may be realistic to disallow direct MMIO access to untrusted software, thereby preventing any malicious actor from exploiting DRPW or SBDR[6].

To simplify mitigation, we assume in this article that system software has already mitigated the MDS family of issues. As with the previous MDS vulnerabilities, in systems with simultaneous multithreading (SMT[7]) enabled, there may be race conditions where malicious software can extract data, or a confused deputy may leak data, on the sibling hyperthread between when data is put into the fill buffers and when it is overwritten. These race conditions might allow attackers to extract secret data. Software running on systems with SMT enabled that is concerned about these race conditions can use scheduling methods to avoid the confused deputy case or ensure that untrusted software does not run on the same core with software from other security domains. Refer to the MDS documentation for more details on these mitigations.

The sections below provide details on each scenario and the specific mitigation sequences that must be used for each. It is assumed that the latest microcode updates will be installed on any affected systems.

Processor Support for Mitigations

Fill Buffer Clearing Operations

The mitigation for FBSDP can involve using MD_CLEAR functionality to overwrite fill buffer values. Some processors may enumerate MD_CLEAR because they overwrite all buffers affected by MDS/TAA, but they do not overwrite fill buffer values. This is because fill buffers are not susceptible to MDS or TAA on those processors.

For processors affected by FBSDP where MD_CLEAR may not overwrite fill buffer values, Intel has released microcode updates that enumerate FB_CLEAR so that VERW does overwrite fill buffer values.

When running with this updated microcode, the VERW instruction will overwrite the fill buffer values for all logical processors on the physical core. The fill buffers will also be overwritten on RSM and when entering or exiting Intel SGX enclaves. For EENTER and ERESUME, the fill buffers will be overwritten after all state loading has completed.

On some processors affected by FBSDP but not affected by MDS, an MSR has been added that allows disabling the fill buffer overwriting functionality in VERW. Virtual Machine Managers (VMMs) can use this MSR to avoid the performance overhead of VERW overwriting the fill buffers when that overwriting is not needed (for example, because the guest has no access to MMIO). This may be useful when the VMM has to hide the MDS_NO enumeration from the guest because the migration pool contains older systems without MDS_NO. For more details, refer to the IA32_ARCH_CAPABILITIES.FB_CLEAR section.

On processors that are affected by MDS and support both L1D_FLUSH and MD_CLEAR operations, both the L1D_FLUSH operation and the VERW instruction flush fill buffers. On processors that enumerate FB_CLEAR, the VERW instruction also flushes fill buffers.
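The rules in this section can be summarized as two predicates. This is a sketch that assumes MDS_NO = 0 identifies the MDS-affected processors referred to above; the dataclass and function names are hypothetical, and the flags are modeled as plain booleans rather than decoded from CPUID or IA32_ARCH_CAPABILITIES.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuEnumeration:
    mds_no: bool     # MDS_NO = 1: not affected by MDS
    md_clear: bool   # MD_CLEAR enumerated
    fb_clear: bool   # FB_CLEAR enumerated
    l1d_flush: bool  # L1D_FLUSH command supported


def verw_clears_fill_buffers(cpu: CpuEnumeration) -> bool:
    # MDS-affected parts overwrite fill buffers as part of MD_CLEAR;
    # on other parts, only an FB_CLEAR enumeration makes VERW do so.
    return (not cpu.mds_no and cpu.md_clear) or cpu.fb_clear


def l1d_flush_clears_fill_buffers(cpu: CpuEnumeration) -> bool:
    # Only MDS-affected parts with MD_CLEAR and L1D_FLUSH clear fill buffers
    # on L1D_FLUSH; MDS_NO parts that enumerate FB_CLEAR do not.
    return not cpu.mds_no and cpu.md_clear and cpu.l1d_flush


mds_affected = CpuEnumeration(mds_no=False, md_clear=True, fb_clear=False, l1d_flush=True)
fb_clear_part = CpuEnumeration(mds_no=True, md_clear=True, fb_clear=True, l1d_flush=True)
md_clear_only = CpuEnumeration(mds_no=True, md_clear=True, fb_clear=False, l1d_flush=True)

for name, cpu in [("MDS-affected", mds_affected), ("FB_CLEAR", fb_clear_part),
                  ("MD_CLEAR only", md_clear_only)]:
    print(f"{name}: VERW={verw_clears_fill_buffers(cpu)}, "
          f"L1D_FLUSH={l1d_flush_clears_fill_buffers(cpu)}")
```

The "MD_CLEAR only" case corresponds to the processors described at the start of this section, which enumerate MD_CLEAR but do not overwrite fill buffers.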

System Management Mode (SMM)

The RSM instruction will overwrite the fill buffer values for all logical processors on the physical core.

RDRAND/RDSEED/EGETKEY Protection

As part of the existing mitigation for SRBDS, Intel released microcode updates for client processors that modify the RDRAND, RDSEED, and SGX EGETKEY instructions to overwrite stale special register data in uncore response and transaction buffers. This type of mitigation is also relevant for processors affected by SBDR or SSDP, as it prevents secret data processed by these instructions from being extracted from those uncore buffers. Microcode updates have been released to add this mitigation to processors that were not affected by SRBDS but are affected by SBDR or SSDP. Additionally, the microcode updates contain a workaround for the issue described in the SRBDS Update section. Note that RDRAND and RDSEED may put the random values into the fill buffers, so it is software’s responsibility to apply FB_CLEAR before switching to an untrusted entity that could perform attacks that leak stale data from fill buffers (such as DRPW, SBDR, and SBDS). For Intel SGX, this is not the responsibility of software running in an enclave: microcode/hardware clears the fill buffers upon EEXIT and upon other enclave exits.

Software Mitigations

For platforms with client uncore implementations, read this entire section. Server[8] implementations only need to read the Preventing Untrusted Software from Propagating Secret Data into Vulnerable Buffers subsection.

Preventing Untrusted Software from Propagating Secret Data into Vulnerable Buffers

For usage models where untrusted software needs fast access to MMIO, the OS and VMM may want to focus on preventing that software from being able to propagate secret data into vulnerable buffers (via FBSDP) rather than preventing the attacks from accessing the data from those vulnerable buffers.

On processors vulnerable to MFBDS, the OS and VMM software should already prevent secret data from being present in the fill buffers when running untrusted software. This is done by the OS/VMM using the MD_CLEAR functionality (by executing either the VERW instruction or the L1D_FLUSH command) to overwrite the fill buffers before transitioning to untrusted software.

Additionally, the OS/VMM software can use core scheduling and kernel rendezvous to ensure that the sibling thread is not running software from a different, potentially malicious security domain, or software that might act as a confused deputy. For more details on the core scheduling policy, see the MDS guidance.

Similar basic techniques can be used by the OS/VMM to ensure that fill buffers do not contain secret data from other security domains when running untrusted software that could potentially employ Processor MMIO Stale Data vulnerabilities to read or infer the fill buffers.

One difference compared to the MFBDS mitigations is that while most software environments can perform an MFBDS attack, many unprivileged software domains are unable to perform SBDR and DRPW attacks. It may not be necessary to overwrite fill buffers when transitioning to software that lacks the privileges needed to perform an attack using these vulnerabilities.

Another difference is that privileged software may accidentally copy fill buffer data to a vulnerable sideband uncore transaction/response buffer, thus acting as a confused deputy. This is discussed in the Preventing Software from Acting as a Confused Deputy by Propagating Secret Data into Vulnerable Buffers section. For this reason, software with strict security requirements may choose to perform a VERW or L1D_FLUSH command before transitioning to privileged software that has not been mitigated against this, even if that was not needed as part of an MFBDS mitigation.

Although the mitigation for MDS required LFENCE or a similar execution-serializing instruction (such as SYSRET) to follow the VERW on some processors, this is not needed when VERW is used exclusively to mitigate vulnerabilities involving FBSDP (meaning the part is not affected by MDS or TAA).

Mitigation Considerations

In bare metal OS environments, it is uncommon for untrusted software to have access to MMIO. In virtualized environments, guests may be given access to devices, and so mitigation may be needed.

Intel Xeon server systems (excluding Intel Xeon E3 processors) are only affected by the DRPW issue. For these and any other processors that enumerate SBDR_SSDP_NO, the fill buffer overwriting described in the Preventing Untrusted Software from Propagating Secret Data into Vulnerable Buffers section is not needed before transitioning to untrusted software that does not have MMIO write access to a DRPW-affected endpoint.

Similarly, on client and Intel Xeon E3 systems that are affected by SBDR, it may not be necessary to overwrite fill buffers before transitioning to untrusted software that does not have access to MMIO addresses.
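The transition rules in this section and the preceding one can be collected into a single decision function. This is a simplified sketch: it ignores SMT races and the confused deputy cases, treats processors that enumerate FBSDP_NO as needing no fill buffer clearing beyond the MDS mitigation, conservatively adds LFENCE whenever the part is affected by MDS or TAA, and uses hypothetical type and function names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Platform:
    mds_or_taa_affected: bool  # fill buffers already need clearing for MFBDS/TAA
    fbsdp_no: bool             # FBSDP_NO = 1: not affected by FBSDP
    sbdr_ssdp_no: bool         # SBDR_SSDP_NO = 1 (for example, Xeon server parts)


@dataclass(frozen=True)
class UntrustedSoftware:
    has_mmio_access: bool
    can_write_drpw_endpoint: bool  # MMIO write access to a DRPW-affected endpoint


def fill_buffer_clear_sequence(p: Platform, target: UntrustedSoftware) -> list[str]:
    """Operations to run before transitioning to `target` (empty list: none needed)."""
    if p.mds_or_taa_affected:
        # Existing MDS mitigation; some processors need LFENCE (or similar) after VERW.
        return ["VERW", "LFENCE"]
    if p.fbsdp_no:
        needed = False  # simplification: no FBSDP, so no fill buffer propagation to block
    elif p.sbdr_ssdp_no:
        needed = target.can_write_drpw_endpoint  # only DRPW can expose the data
    else:
        needed = target.has_mmio_access  # client and Xeon E3 parts affected by SBDR
    # VERW used only for FBSDP does not need a trailing LFENCE.
    return ["VERW"] if needed else []


server = Platform(mds_or_taa_affected=False, fbsdp_no=False, sbdr_ssdp_no=True)
client = Platform(mds_or_taa_affected=False, fbsdp_no=False, sbdr_ssdp_no=False)
nic_guest = UntrustedSoftware(has_mmio_access=True, can_write_drpw_endpoint=False)
print(fill_buffer_clear_sequence(server, nic_guest))  # []
print(fill_buffer_clear_sequence(client, nic_guest))  # ['VERW']
```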

Preventing Software from Acting as a Confused Deputy by Propagating Secret Data into Vulnerable Buffers

On processors affected by FBSDP and either SBDR or SSDP, it is possible that victim software may unintentionally copy secret data from the core’s fill buffers to an uncore response or transaction buffer. Although it is not clear that an attacker will be able to control or cause this situation, customers should evaluate their risk profile to see if the mitigations described in this section are needed.

Note that Intel Xeon server systems (excluding Intel Xeon E3 processors) are not affected by SBDR or SSDP, and thus this section does not apply to them.

On affected processors where security requires mitigating this type of confused deputy attack, software can perform an FB_CLEAR operation before performing writes that go through sideband uncore response and transaction buffers. An MFENCE;LFENCE sequence or a serializing instruction after the write can ensure that younger operations that touch secret data do not put that data into a fill buffer entry before the older write uses that fill buffer entry. These writes include MMIO writes to endpoints on the sideband, I/O writes, and certain operations that perform special register writes to uncore buffers. Refer to the Operations That May Cause Writes into Non-Coherent Fabrics section.

Also note that several factors may still cause secret data to be written out to the non-coherent fabric in the non-valid bytes of a write. The sibling hyperthread, a code fetch, a hardware prefetcher, or an asynchronous event can put secret data into the fill buffer between the FB_CLEAR operation and the partial write to the non-coherent fabric. Finally, software handlers that run between the FB_CLEAR operation and the partial write (such as interrupt or page fault handlers) may cause the kernel thread to be preempted. When the kernel thread is rescheduled, the OS may perform an FB_CLEAR to ensure that any fill buffers are overwritten.

Mitigating PSDP Propagation

After reading secret data from MMIO (such as an encryption key from a TPM device), that data may remain in uncore buffers. On parts affected by PSDP, that stale data may propagate to the fill buffers where it could be attacked by other techniques.

To mitigate this, the OS, VMM, or driver that reads the secret data can reduce the window in which that data remains vulnerable in the uncore buffers by performing an additional read of some non-secret data from a valid MMIO register on the primary bus with the same cache line offset (address mod 64) and at least the same size as the register from which the secret data was read. Note that TPM 2.0 supports encrypted MMIO transactions, and this mode may also be used to mitigate a stale data attack.
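Choosing the dummy register amounts to matching the cache line offset and size. The sketch below assumes the driver or VMM has a table of candidate registers; the `MmioRegister` type, the register names, and the addresses are all hypothetical. It does not perform the read itself, and the caller must still ensure the chosen register is a real, non-aborting register, as the next paragraph explains.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class MmioRegister:
    name: str
    address: int          # physical MMIO address
    size: int             # register size in bytes
    on_primary_bus: bool
    holds_secret: bool


def pick_scrub_register(secret: MmioRegister,
                        candidates: Iterable[MmioRegister]) -> Optional[MmioRegister]:
    """Choose a non-secret primary-bus register with the same address mod 64
    and at least the same size as the register holding the secret."""
    offset = secret.address % 64
    for reg in candidates:
        if (reg.on_primary_bus and not reg.holds_secret
                and reg.address % 64 == offset and reg.size >= secret.size):
            return reg
    return None


secret = MmioRegister("key_reg", 0x1000_0024, 4, on_primary_bus=True, holds_secret=True)
candidates = [
    MmioRegister("status_reg", 0x2000_0024, 2, True, False),     # too narrow
    MmioRegister("sideband_reg", 0x3000_0024, 4, False, False),  # wrong bus
    MmioRegister("id_reg", 0x2000_0064, 4, True, False),         # 0x64 % 64 == 0x24
]
choice = pick_scrub_register(secret, candidates)
print(choice.name if choice else "no suitable register")  # id_reg
```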

Since aborted accesses will not overwrite the buffer, software should use real register reads that are mapped over the primary bus. Ring 0 software can either read the lower configuration space of the device containing secret data or be hardcoded to read the standard configuration addresses of a device that is never disabled (such as SPI). Guest software and Ring 3 software may not have access to these options, so the hypervisor or other Ring 0 software would need to provide appropriate addresses based on the devices exposed to the guest or Ring 3 software.

Preventing Untrusted Software from Using Processor MMIO Stale Data Vulnerabilities to Extract Data

For some systems and usage models, it may be more feasible to prevent malicious actors from observing secrets in the uncore response/transaction buffers than it is to prevent secret data from entering those buffers. Such systems may be able to avoid some of the situations described in the Preventing Software from Acting as a Confused Deputy by Propagating Secret Data into Vulnerable Buffers section. Note that this may not be feasible on systems that are affected by both MFBDS and SSDP.

The easiest way to prevent malicious actors from observing these secrets is to prevent them from having access to MMIOs. In cases where untrusted software must have access to MMIO, a VMM may be able to cause VM exits on the transactions and scrub the data for cases that may be attacks, but the needed VM exits may cause increased performance overhead.
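As an illustration of the VM-exit approach, a VMM that traps guest MMIO might flag accesses of the kind the Implications of Vulnerabilities section associates with DRPW and SBDR. This is a sketch of one possible policy, assuming the VMM knows each exposed device's register layout; it is not a complete defense, and the type and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegisterDef:
    offset: int  # offset within the device's MMIO range
    size: int    # architected access size in bytes


def access_is_suspect(registers: list[RegisterDef], offset: int, size: int,
                      is_write: bool, sbdr_affected: bool) -> bool:
    """Return True if a trapped guest MMIO access warrants emulation or scrubbing."""
    matches = any(r.offset == offset and r.size == size for r in registers)
    if not matches:
        # Size or alignment differs from the register definition (DRPW/SBDR pattern).
        return True
    if not is_write and sbdr_affected and size > 4:
        # On SBDR-affected parts, reads wider than 4 bytes may return stale data.
        return True
    return False


regs = [RegisterDef(0x00, 4), RegisterDef(0x08, 8)]
print(access_is_suspect(regs, 0x00, 4, is_write=True, sbdr_affected=True))   # False
print(access_is_suspect(regs, 0x00, 2, is_write=True, sbdr_affected=False))  # True: partial write
print(access_is_suspect(regs, 0x08, 8, is_write=False, sbdr_affected=True))  # True: 8-byte read
```

Trapping every access in this way is what drives the performance overhead mentioned above.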

Considerations for Nested Hypervisors and Migration Pools

Some environments may have migration pools that include different platforms. In such cases, the VMM must expose the lowest common denominator features to VMs and nested hypervisors in order to ensure compatibility. The VMM is responsible for ensuring that the proper behaviors and security properties are maintained for each platform.

Nested Hypervisors

On platforms affected by FBSDP (platforms that enumerate FBSDP_NO == 0) the VMM is expected to perform an FB_CLEAR operation on certain VM transitions (refer to the Processor Support for Mitigations section) in order to prevent fill buffer data from being exposed. As documented in the Definitions section under the explanation of FB_CLEAR, on some platforms both the L1D_FLUSH command and the VERW instruction will do this, but on other platforms only VERW does.

Supporting migration pools that include platforms affected by FBSDP, some of which perform an FB_CLEAR operation as part of the L1D_FLUSH command and some of which do not, requires the VMM to handle nested VMMs specially. If the primary (L0) VMM is running on a platform that requires VERW in order to perform the FB_CLEAR operation, the primary VMM should take a VMEXIT on access to the IA32_FLUSH_CMD MSR (where the L1D_FLUSH command bit is). It can then execute the VERW instruction and potentially also the L1D_FLUSH command. This will ensure that, even if the nested (L1) VMM only executes the L1D_FLUSH command and not VERW, the fill buffers will be cleared.

Consider the following example of a mixed migration pool:

- System A enumerates the following: RDCL_NO=1, MDS_NO=1, FBSDP_NO=0, L1D_FLUSH=1, MD_CLEAR=1, FB_CLEAR=1. On this system, VERW performs FB_CLEAR but L1D_FLUSH does not.

- System B enumerates the following: RDCL_NO=0, MDS_NO=0, FBSDP_NO=0, L1D_FLUSH=1, MD_CLEAR=1. On this system, both VERW and L1D_FLUSH perform FB_CLEAR.

- A nested (L1) VMM running in this pool may run on either system and so would see the following minimal feature set enumerated to it on all systems in the pool: RDCL_NO=0, MDS_NO=0, FBSDP_NO=0, L1D_FLUSH=1, MD_CLEAR=1.

As a result, this nested VMM expects the L1D_FLUSH command to perform an FB_CLEAR operation, so it does not additionally execute VERW. But when the L1 VMM runs on System A, its L1D_FLUSH does not actually perform an FB_CLEAR. Thus, the L0 VMM on System A must intercept the L1 VMM’s L1D_FLUSH and additionally execute VERW. The L0 VMM on System B does not need to do this, because the L1 VMM’s L1D_FLUSH performs an FB_CLEAR when running on System B.
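The mixed-pool example can be checked mechanically. In this sketch, the pool's lowest common denominator is a logical AND of each flag, because every flag listed is either a *_NO bit or a supported feature, so 1 is always the "better" value. The L1D_FLUSH rule follows the FB_CLEAR definition and assumes MDS_NO = 0 identifies MDS-affected parts; the type and function names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Enumeration:
    rdcl_no: bool
    mds_no: bool
    fbsdp_no: bool
    l1d_flush: bool
    md_clear: bool
    fb_clear: bool


def pool_minimum(systems: list[Enumeration]) -> Enumeration:
    # Lowest common denominator: a flag is exposed only if every system sets it.
    return Enumeration(**{f.name: all(getattr(s, f.name) for s in systems)
                          for f in fields(Enumeration)})


def l1d_flush_clears_fill_buffers(e: Enumeration) -> bool:
    # On MDS-affected parts with MD_CLEAR, L1D_FLUSH also performs FB_CLEAR.
    return not e.mds_no and e.md_clear and e.l1d_flush


def must_intercept_flush_cmd(host: Enumeration, guest_view: Enumeration) -> bool:
    """L0 must trap IA32_FLUSH_CMD (and add VERW) when the L1 VMM's view says
    L1D_FLUSH clears fill buffers but the host's L1D_FLUSH does not."""
    return (not host.fbsdp_no and l1d_flush_clears_fill_buffers(guest_view)
            and not l1d_flush_clears_fill_buffers(host))


system_a = Enumeration(rdcl_no=True, mds_no=True, fbsdp_no=False,
                       l1d_flush=True, md_clear=True, fb_clear=True)
system_b = Enumeration(rdcl_no=False, mds_no=False, fbsdp_no=False,
                       l1d_flush=True, md_clear=True, fb_clear=False)
pool = pool_minimum([system_a, system_b])
print(pool)
print(must_intercept_flush_cmd(system_a, pool))  # True
print(must_intercept_flush_cmd(system_b, pool))  # False
```

The computed pool view matches the minimal feature set listed for the nested VMM, and the intercept decision matches the System A and System B outcomes described above.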

Performance Considerations

On platforms affected by FBSDP (platforms that enumerate FBSDP_NO == 0), a guest OS may use the FB_CLEAR operation to protect kernel and application data from a malicious application that has (or could have) MMIO access. This will incur the performance overhead of fill buffer clearing on these context switches.

However, the guest OS does not know whether or not it has really been given direct hardware access to a device’s MMIO space, or whether it is using an emulated or paravirtualized device, and therefore the guest OS must assume it could have direct access and so protect the data.

The VMM does know whether it has given direct MMIO access to a guest OS. In cases where it has not, the VMM could set the FB_CLEAR_DIS bit to cause the VERW instruction to not perform the FB_CLEAR action. This will significantly reduce the performance overhead for those guests which are performing FB_CLEAR operations on process context switches.

Similarly, guests in migration pools that include platforms affected by MDS or TAA will be told by the VMM that they always run on an affected platform, even when they do not. When such a guest runs on a platform that is not affected by MDS or TAA, and that platform either is not affected by the Processor MMIO Stale Data Vulnerabilities or does not give the guest direct hardware access, the VMM can set the FB_CLEAR_DIS bit to reduce the performance impact.
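The conditions for setting FB_CLEAR_DIS can be written as a single predicate. This is a sketch under the assumption that the VMM already knows the host's vulnerability status and whether it has passed MMIO through to the guest; the `Host` type and function name are hypothetical, and the predicate does not touch the actual MSR.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    mds_affected: bool
    taa_affected: bool
    mmio_stale_data_affected: bool
    fb_clear_dis_available: bool  # the MSR control described earlier is present


def may_set_fb_clear_dis(host: Host, guest_has_direct_mmio: bool) -> bool:
    """Return True if the VMM may disable VERW's fill buffer overwriting for a guest."""
    if not host.fb_clear_dis_available:
        return False
    if host.mds_affected or host.taa_affected:
        return False  # VERW's buffer overwriting is still needed for MDS/TAA
    # Safe when the host is unaffected or the guest cannot reach MMIO directly.
    return not host.mmio_stale_data_affected or not guest_has_direct_mmio


newer = Host(mds_affected=False, taa_affected=False,
             mmio_stale_data_affected=True, fb_clear_dis_available=True)
print(may_set_fb_clear_dis(newer, guest_has_direct_mmio=False))  # True
print(may_set_fb_clear_dis(newer, guest_has_direct_mmio=True))   # False
```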

Intel® Software Guard Extensions (Intel® SGX)

Attestation

When SMT is disabled at boot and the Intel SGX platform TCB is up-to-date, attestations originating from affected platforms will result in the SW_HARDENING_NEEDED attestation response from the attestation verifier. This means that the relying party should evaluate the attesting ISV enclave (by inspecting its SGX Report included in the attestation) to determine whether the enclave contains the necessary mitigations. For example, the ISVPRODID or ISVSVN field in the Report can be used to signal the presence of mitigations.

It is important to note that Intel SGX attestations only reflect the microcode patch that is loaded upon platform reset. Intel SGX attestations do not reflect, for example, a microcode patch loaded by an operating system.

SMT Considerations for Intel® SGX

On processors with SMT enabled that are not affected by MDS or TAA, but are affected by these MMIO vulnerabilities, it is possible for malicious software running on a sibling thread to use these vulnerabilities to propagate an enclave’s fill buffer data into the uncore where it can then be extracted.

The Intel SGX security model does not trust the OS scheduler to ensure that software workloads running on sibling threads mutually trust each other. Intel SGX remote attestation reflects whether Intel® Hyper-Threading Technology (Intel® HT Technology) is enabled or disabled by the BIOS¹⁰. For processors affected by these MMIO stale data vulnerabilities, the remote attestation response will be CONFIGURATION_AND_SW_HARDENING_NEEDED¹¹ if Intel HT Technology is enabled. If Intel HT Technology is disabled, the attestation response will be SW_HARDENING_NEEDED, as stated above. An Intel SGX remote attestation relying party can evaluate the risk of potential cross-thread attacks when Intel HT Technology is enabled on the platform and decide whether to trust an enclave on that platform to protect specific secret information.
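A relying party's policy for these responses might look like the C sketch below. The two hardening status strings are the responses named in this section; treating "OK" as the verifier's success response is an assumption, and `min_mitigated_isvsvn` and `allow_smt` are hypothetical policy knobs reflecting the suggestion that ISVSVN can signal the presence of mitigations. This is an illustration of one possible policy, not an Intel API.

```c
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical relying-party policy settings. */
struct rp_policy {
    uint16_t min_mitigated_isvsvn; /* first enclave ISVSVN built with the mitigations */
    bool allow_smt;                /* accept cross-thread risk when Intel HT is enabled */
};

/* Decide whether to trust an enclave given the verifier's status string and
 * the ISVSVN from the enclave's SGX Report. */
static bool rp_trust_enclave(const char *status, uint16_t isvsvn,
                             const struct rp_policy *p)
{
    bool mitigated = isvsvn >= p->min_mitigated_isvsvn;

    if (strcmp(status, "OK") == 0)        /* assumed success response */
        return true;
    if (strcmp(status, "SW_HARDENING_NEEDED") == 0)
        return mitigated;                 /* SMT disabled, TCB up to date */
    if (strcmp(status, "CONFIGURATION_AND_SW_HARDENING_NEEDED") == 0)
        return mitigated && p->allow_smt; /* Intel HT Technology enabled */
    return false;                         /* any other response: reject */
}
```

Keeping `allow_smt` separate from the ISVSVN check mirrors the two independent questions the relying party has to answer: is the enclave hardened, and is cross-thread risk acceptable for this secret.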

Implications for Software Running in Intel SGX Enclave Mode

Because the Intel SGX security model does not trust the OS, a malicious OS could map MMIO memory into the untrusted memory space of an application that uses one or more Intel SGX enclaves. This could include the region of untrusted memory used for parameter passing to/from ECALLs and OCALLs, or any external buffers that an enclave might use to communicate with its application. If the malicious OS does such mapping, then when the enclave writes to this memory, it could propagate the stale data in its fill buffers into the uncore, where it could later be extracted by malicious software.

The mitigations below assume that Intel HT Technology is disabled to ensure that once the fill buffers are overwritten, a sibling thread cannot repopulate them. The mitigation depends on how the enclave is accessing the non-enclave memory regions.

- Enclaves that only write to memory outside the enclave in the context of ECALLs and OCALLs:

For enclaves that use the Intel SGX SDK and the Edger8r tool included with the SDK to create and manage the ECALL and OCALL interface, Intel has released an update to the SDK and Edger8r tool that prevents fill buffer data exposure through the code generated by the Edger8r tool. Similarly, the Intel SGX SDK will include updates that prevent fill buffer data exposure through the code used by enclaves that use the switchless mode supported by the Intel SGX SDK.

- Enclaves that write to memory outside the enclave using code that isn’t associated with ECALLs or OCALLs:

This includes enclaves that use the Intel SGX SDK but specify the [user_check] attribute in their enclave definition language (EDL)¹² file. For these enclaves, every write to untrusted memory must either be preceded by the VERW instruction and followed by the MFENCE; LFENCE instruction sequence, or be a multiple of 8 bytes in size and aligned to an 8-byte boundary.
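The two options for writes to untrusted memory can be sketched in C. `enclave_write_untrusted` and its helpers are hypothetical names, not Intel SGX SDK functions. The inline assembly assumes GCC or Clang targeting x86 (on other targets the barriers compile to nothing), and the aligned path assumes the compiler emits each naturally aligned `volatile uint64_t` store as a single 8-byte store, which mainstream compilers do but the C standard does not guarantee.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Memory-operand VERW using the current DS selector. On processors with
 * FB_CLEAR (explicit or implied) this also overwrites the fill buffers. */
static inline void fb_clear_verw(void)
{
#if defined(__x86_64__) || defined(__i386__)
    uint16_t sel;
    __asm__ volatile("mov %%ds, %0" : "=r"(sel));
    __asm__ volatile("verw %0" : : "m"(sel) : "cc");
#endif
}

static inline void mfence_lfence(void)
{
#if defined(__x86_64__) || defined(__i386__)
    __asm__ volatile("mfence; lfence" ::: "memory");
#endif
}

/* Hypothetical helper: copy len bytes from enclave memory (src) to
 * untrusted memory (dst) following the rule above. */
static void enclave_write_untrusted(void *dst, const void *src, size_t len)
{
    if ((uintptr_t)dst % 8 == 0 && len % 8 == 0) {
        /* Option 1: only aligned 8-byte stores reach untrusted memory. */
        volatile uint64_t *d = (volatile uint64_t *)dst;
        const unsigned char *s = (const unsigned char *)src;
        for (size_t i = 0; i < len / 8; i++) {
            uint64_t word;
            memcpy(&word, s + 8 * i, sizeof word); /* src may be unaligned */
            d[i] = word;
        }
    } else {
        /* Option 2: VERW before the writes, MFENCE; LFENCE after them. */
        fb_clear_verw();
        memcpy(dst, src, len);
        mfence_lfence();
    }
}
```

Choosing the aligned path whenever possible avoids the VERW cost for the common case of 8-byte-aligned, 8-byte-multiple buffers.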

Intel will work with the developers of other SGX SDKs and runtimes to help ensure that they have similar mitigations.

32-bit Enclaves vs. 64-bit Enclaves

32-bit enclaves running on a 32-bit OS are vulnerable to additional OS manipulation of page table mappings. There is no mitigation for this issue, but remote attestation can determine if an enclave is 32-bit, and thus susceptible to such an attack. 64-bit enclaves are not susceptible to this issue. Attestation cannot be used to determine if the 32-bit enclave is running in a 32-bit OS or in a maliciously configured environment.

System Management Mode (SMM)

Systems enter System Management Mode (SMM) either:

- Asynchronously, in response to some external event that requires intervention by platform firmware, or

- Synchronously, to process some request from the operating system or VMM (for example, an EFI runtime services function call).

Code in SMM may execute MMIO operations that move data between microarchitectural buffers as documented in the preceding sections. This may result in OS/VMM data moving from fill buffers to uncore buffers where it may be vulnerable to some of the techniques described in this paper.

OSes/VMMs concerned about this possibility may use an FB_CLEAR operation to overwrite fill buffers before executing code that is known to synchronously enter SMM.
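Placing an FB_CLEAR operation immediately before a call known to enter SMM synchronously could look like the C sketch below. It compiles in user space for illustration only; `smm_entering_call`, `call_with_fb_clear`, and `example_smm_call` are hypothetical, and a real OS or VMM would wrap its own firmware-call path (for example, EFI runtime services) and typically use a fixed kernel data segment selector rather than reading DS.

```c
#include <stdint.h>

/* Memory-operand VERW; overwrites fill buffers on processors with FB_CLEAR.
 * x86 only (GCC/Clang inline assembly); elsewhere it compiles to nothing. */
static inline void fb_clear_verw(void)
{
#if defined(__x86_64__) || defined(__i386__)
    uint16_t sel;
    __asm__ volatile("mov %%ds, %0" : "=r"(sel));
    __asm__ volatile("verw %0" : : "m"(sel) : "cc");
#endif
}

/* Hypothetical signature for a call known to enter SMM synchronously. */
typedef long (*smm_entering_call)(void *ctx);

/* Overwrite fill buffers first, so MMIO performed by SMM code cannot move
 * this context's stale fill buffer data into uncore buffers. */
static long call_with_fb_clear(smm_entering_call fn, void *ctx)
{
    fb_clear_verw();
    return fn(ctx);
}

/* Placeholder standing in for a real SMM-entering call. */
static long example_smm_call(void *ctx)
{
    return *(const long *)ctx + 1;
}
```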

SMM isolation solutions, such as an SMI Transfer Monitor (STM), that protect the OS and VMM from compromised SMI handlers, should follow the VM and OS guidance in this article to prevent isolated/deprivileged SMM code from performing attacks using these methods.

Enumeration

Intel recommends applying the latest Intel security mitigations and ensuring systems are running the latest firmware/MCU versions available. MCU may still be required for enumeration even when processors are not affected by a particular security issue. Contact your operating system (OS) and virtual machine manager (VMM) vendors to obtain their latest software updates.

Note that on processors where IA32_ARCH_CAPABILITIES is not enumerated (CPUID.(EAX=7H,ECX=0):EDX[29] is 0), all IA32_ARCH_CAPABILITIES bits should be treated as 0.

Note that microcode updates to mitigate these issues may also change enumeration for existing features not described here. For example, they may set SRBDS_CTRL (CPUID (EAX=07H,ECX=0).EDX[SRBDS_CTRL = 9]) on parts that did not previously enumerate it.

| Register Address (Hex) | Register Address (Dec) | Register Name / Bit Fields | Bit Description | Comment |
|---|---|---|---|---|
| 10AH | 266 | IA32_ARCH_CAPABILITIES | Enumeration of Architectural Features (RO) | If CPUID.(EAX=07H, ECX=0):EDX[29]=1. |
| 10AH | 266 | 13 | SBDR_SSDP_NO: The processor is not affected by either the SBDR vulnerability or the SSDP. | |
| 10AH | 266 | 14 | FBSDP_NO: The processor is not affected by FBSDP. | |
| 10AH | 266 | 15 | PSDP_NO: The processor is not affected by vulnerabilities involving PSDP. | |
| 10AH | 266 | 17 | FB_CLEAR: The processor will overwrite fill buffer values as part of MD_CLEAR operations with the VERW instruction. On these processors, L1D_FLUSH does not overwrite fill buffer values. | |
| 10AH | 266 | 18 | FB_CLEAR_CTRL: The processor supports read and write to the IA32_MCU_OPT_CTRL MSR (MSR 123H) and to the FB_CLEAR_DIS bit in that MSR (bit position 3). | On such processors, the FB_CLEAR_DIS bit can be set to cause the VERW instruction to skip the FB_CLEAR action (FB_CLEAR_DIS does not disable the FB_CLEAR action in the L1D_FLUSH operation for processors in which L1D_FLUSH overwrites the fill buffers). |
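The bit positions in this table translate directly into masks. The C sketch below decodes a raw IA32_ARCH_CAPABILITIES value and, as an assumption about the environment, reads the MSR through the Linux msr driver (`/dev/cpu/0/msr`, where the file offset is the MSR address; this usually requires root and the msr module). The struct and function names are hypothetical.

```c
#define _XOPEN_SOURCE 700 /* for pread() */
#include <fcntl.h>
#include <stdbool.h>
#include <stdint.h>
#include <sys/types.h>
#include <unistd.h>

#define MSR_IA32_ARCH_CAPABILITIES 0x10Au
#define ARCH_CAP_SBDR_SSDP_NO  (1ULL << 13)
#define ARCH_CAP_FBSDP_NO      (1ULL << 14)
#define ARCH_CAP_PSDP_NO       (1ULL << 15)
#define ARCH_CAP_FB_CLEAR      (1ULL << 17)
#define ARCH_CAP_FB_CLEAR_CTRL (1ULL << 18)

struct mmio_arch_caps {
    bool sbdr_ssdp_no, fbsdp_no, psdp_no, fb_clear, fb_clear_ctrl;
};

/* Decode the MMIO-related bits of a raw IA32_ARCH_CAPABILITIES value. */
static struct mmio_arch_caps decode_mmio_arch_caps(uint64_t v)
{
    struct mmio_arch_caps c;
    c.sbdr_ssdp_no  = (v & ARCH_CAP_SBDR_SSDP_NO) != 0;
    c.fbsdp_no      = (v & ARCH_CAP_FBSDP_NO) != 0;
    c.psdp_no       = (v & ARCH_CAP_PSDP_NO) != 0;
    c.fb_clear      = (v & ARCH_CAP_FB_CLEAR) != 0;
    c.fb_clear_ctrl = (v & ARCH_CAP_FB_CLEAR_CTRL) != 0;
    return c;
}

/* Linux only: read an MSR on CPU 0. Only meaningful for this MSR when
 * CPUID.(EAX=7H,ECX=0):EDX[29] is 1; otherwise treat every bit as 0.
 * Returns 0 on success, -1 on failure. */
static int read_msr_cpu0(uint32_t msr, uint64_t *value)
{
    int fd = open("/dev/cpu/0/msr", O_RDONLY);
    if (fd < 0)
        return -1;
    ssize_t n = pread(fd, value, sizeof *value, (off_t)msr);
    close(fd);
    return n == (ssize_t)sizeof *value ? 0 : -1;
}
```

Typical use is `read_msr_cpu0(MSR_IA32_ARCH_CAPABILITIES, &v)` followed by `decode_mmio_arch_caps(v)`, falling back to a value of 0 if the read fails.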

Example Enumeration of Processor MMIO Stale Data Vulnerabilities

On systems that do not have updated microcode, software can use a list of affected CPUs to enumerate these issues. Newer processors, and microcode updates for existing affected processors, add new bits to the IA32_ARCH_CAPABILITIES MSR. These bits can be used to enumerate specific variants of the Processor MMIO Stale Data Vulnerabilities and the available mitigation capabilities. If a CPU is on the list of affected processors, you can use the algorithm below to enumerate the Processor MMIO Stale Data Vulnerabilities:

If CPUID(EAX=7H,ECX=0):EDX[ARCH_CAP=29] == 1
    If (IA32_ARCH_CAPABILITIES.SBDR_SSDP_NO [bit 13] == 0 OR
        IA32_ARCH_CAPABILITIES.FBSDP_NO [bit 14] == 0 OR
        IA32_ARCH_CAPABILITIES.PSDP_NO [bit 15] == 0)
        Processor MMIO Stale Data vulnerabilities present
    Else
        Processor MMIO Stale Data vulnerabilities not present
Else
    Processor MMIO Stale Data vulnerabilities present (all IA32_ARCH_CAPABILITIES bits are treated as 0)
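The enumeration algorithm above can be written as a small C function. The affected-processor list lookup is not shown and is passed in as a flag; treating CPUs that are not on the list as unaffected is an assumption of this sketch. The function name is hypothetical.

```c
#include <stdbool.h>
#include <stdint.h>

#define CPUID7_EDX_ARCH_CAP   (1u << 29)
#define ARCH_CAP_SBDR_SSDP_NO (1ULL << 13)
#define ARCH_CAP_FBSDP_NO     (1ULL << 14)
#define ARCH_CAP_PSDP_NO      (1ULL << 15)

/* on_affected_list: CPU model is on the affected-processors list.
 * cpuid7_edx: EDX from CPUID(EAX=7H,ECX=0).
 * arch_caps: IA32_ARCH_CAPABILITIES, ignored unless enumerated. */
static bool mmio_stale_data_present(bool on_affected_list, uint32_t cpuid7_edx,
                                    uint64_t arch_caps)
{
    const uint64_t all_no = ARCH_CAP_SBDR_SSDP_NO | ARCH_CAP_FBSDP_NO |
                            ARCH_CAP_PSDP_NO;

    if (!on_affected_list)
        return false;                       /* assumption of this sketch */
    if (!(cpuid7_edx & CPUID7_EDX_ARCH_CAP))
        arch_caps = 0;                      /* MSR not enumerated: bits are 0 */
    return (arch_caps & all_no) != all_no;  /* any *_NO bit clear => present */
}
```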

Example Mitigation Selection for Processor MMIO Stale Data Vulnerabilities

If Processor MMIO Stale Data vulnerabilities are present (as enumerated in the section above), use the following pseudocode algorithm to decide which mitigation is needed. This pseudocode checks whether a system has the microcode to overwrite fill buffers.

If IA32_ARCH_CAPABILITIES.FB_CLEAR [bit 17] == 1
   OR
   (CPUID(EAX=7H,ECX=0):EDX[MD_CLEAR = bit 10] == 1 AND
    CPUID(EAX=7H,ECX=0):EDX[FLUSH_L1D = bit 28] == 1 AND
    IA32_ARCH_CAPABILITIES.MDS_NO [bit 5] == 0)
    VERW overwrites fill buffers
Else
    Microcode update needed

If (System is also affected by MDS or TAA)
    Mitigate by executing VERW before transitioning to untrusted software (same as MDS)
Else
    Mitigate by executing VERW before VMENTER only for MMIO-capable attackers (guests)
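The mitigation-selection pseudocode maps onto C as follows. This sketch folds the two steps into one return value (reporting a needed microcode update first); the enum and function names are hypothetical, and `arch_caps` is assumed to already be 0 when IA32_ARCH_CAPABILITIES is not enumerated.

```c
#include <stdbool.h>
#include <stdint.h>

#define CPUID7_EDX_MD_CLEAR  (1u << 10)
#define CPUID7_EDX_FLUSH_L1D (1u << 28)
#define ARCH_CAP_MDS_NO      (1ULL << 5)
#define ARCH_CAP_FB_CLEAR    (1ULL << 17)

enum mmio_mitigation {
    MMIO_MITIGATION_NEEDS_UCODE, /* VERW does not yet overwrite fill buffers */
    MMIO_VERW_ON_UNTRUSTED_EXIT, /* same placement as MDS */
    MMIO_VERW_BEFORE_VMENTER,    /* only for MMIO-capable guests */
};

static bool verw_overwrites_fill_buffers(uint32_t cpuid7_edx, uint64_t arch_caps)
{
    if (arch_caps & ARCH_CAP_FB_CLEAR)
        return true;
    /* MDS-affected parts with MD_CLEAR and L1D_FLUSH imply FB_CLEAR. */
    return (cpuid7_edx & CPUID7_EDX_MD_CLEAR) &&
           (cpuid7_edx & CPUID7_EDX_FLUSH_L1D) &&
           !(arch_caps & ARCH_CAP_MDS_NO);
}

static enum mmio_mitigation select_mmio_mitigation(uint32_t cpuid7_edx,
                                                   uint64_t arch_caps,
                                                   bool affected_by_mds_or_taa)
{
    if (!verw_overwrites_fill_buffers(cpuid7_edx, arch_caps))
        return MMIO_MITIGATION_NEEDS_UCODE;
    return affected_by_mds_or_taa ? MMIO_VERW_ON_UNTRUSTED_EXIT
                                  : MMIO_VERW_BEFORE_VMENTER;
}
```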

IA32_ARCH_CAPABILITIES.SBDR_SSDP_NO

Processors that set bit 13 in IA32_ARCH_CAPABILITIES (MSR 10AH) are enumerating SBDR_SSDP_NO. Such processors are not affected by either the Shared Buffers Data Read (SBDR) vulnerability or the sideband stale data propagator (SSDP). This means that the techniques described in this paper cannot directly attack or otherwise infer stale data from the sideband response buffers or sideband transaction buffers.

Because of this, mitigations to prevent secret data from entering the sideband response buffers or sideband transaction buffers are not needed. Specifically, the mitigations described in the Preventing Software from Acting as a Confused Deputy by Propagating Secret Data into Vulnerable Buffers section are not needed.

IA32_ARCH_CAPABILITIES.FBSDP_NO

Processors that set bit 14 in IA32_ARCH_CAPABILITIES (MSR 10AH) are enumerating FBSDP_NO. Such processors are not affected by the fill buffer stale data propagator (FBSDP).

On processors that enumerate FBSDP_NO, mitigations for DRPW are not needed because DRPW attacks require FBSDP propagators. Additionally, processors that enumerate FBSDP_NO, MDS_NO, and TAA_NO do not need the mitigations described in the Preventing Untrusted Software from Using Processor MMIO Stale Data Vulnerabilities to Extract Data section, because no known technique can infer or read the stale bytes in the fill buffer.

IA32_ARCH_CAPABILITIES.PSDP_NO

Processors that set bit 15 in IA32_ARCH_CAPABILITIES (MSR 10AH) are enumerating PSDP_NO. Such processors are not affected by vulnerabilities involving the primary stale data propagator (PSDP). Even processors that do not enumerate PSDP_NO may not need the mitigations described in the Mitigating PSDP Propagation section if they are not affected by any other known vulnerability that can reveal the fill buffer contents, that is, if they enumerate MDS_NO and FBSDP_NO and either enumerate TAA_NO or do not support Intel TSX.
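The rules in the SBDR_SSDP_NO, FBSDP_NO, and PSDP_NO subsections can be combined into one C sketch that reports which mitigation sections still apply. The struct and function names are hypothetical. TAA_NO is taken as bit 8 of IA32_ARCH_CAPABILITIES per the Intel SDM (this article does not state the bit position), and whether Intel TSX is supported is left to the caller.

```c
#include <stdbool.h>
#include <stdint.h>

#define ARCH_CAP_MDS_NO       (1ULL << 5)
#define ARCH_CAP_TAA_NO       (1ULL << 8)  /* per the Intel SDM */
#define ARCH_CAP_SBDR_SSDP_NO (1ULL << 13)
#define ARCH_CAP_FBSDP_NO     (1ULL << 14)
#define ARCH_CAP_PSDP_NO      (1ULL << 15)

struct mmio_mitigation_needs {
    bool confused_deputy;  /* Preventing Software from Acting as a Confused Deputy... */
    bool drpw;             /* DRPW mitigations */
    bool extraction;       /* Preventing Untrusted Software ... to Extract Data */
    bool psdp_propagation; /* Mitigating PSDP Propagation */
};

static struct mmio_mitigation_needs mmio_needs(uint64_t caps, bool tsx_supported)
{
    bool mds_no   = (caps & ARCH_CAP_MDS_NO) != 0;
    bool taa_no   = (caps & ARCH_CAP_TAA_NO) != 0;
    bool fbsdp_no = (caps & ARCH_CAP_FBSDP_NO) != 0;
    bool psdp_no  = (caps & ARCH_CAP_PSDP_NO) != 0;
    struct mmio_mitigation_needs n;

    n.confused_deputy  = (caps & ARCH_CAP_SBDR_SSDP_NO) == 0;
    n.drpw             = !fbsdp_no;             /* DRPW requires FBSDP */
    n.extraction       = !(fbsdp_no && mds_no && taa_no);
    n.psdp_propagation = !psdp_no &&
                         !(mds_no && fbsdp_no && (taa_no || !tsx_supported));
    return n;
}
```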

IA32_ARCH_CAPABILITIES.FB_CLEAR

Processors that set FB_CLEAR, bit 17 in IA32_ARCH_CAPABILITIES (MSR 10AH), will overwrite fill buffer values as part of MD_CLEAR operations, as described in the Fill Buffer Clearing Operations section. On these processors, the VERW instruction will overwrite fill buffer values while L1D_FLUSH may or may not.

Processors that do not enumerate MDS_NO¹³ (meaning they are affected by MDS) but that do enumerate support for both L1D_FLUSH¹⁴ and MD_CLEAR¹⁵ implicitly enumerate FB_CLEAR as part of their MD_CLEAR support. Note that processors not affected by these issues may not explicitly enumerate FB_CLEAR, which may affect how a VMM emulates this enumeration in migration pools.

IA32_ARCH_CAPABILITIES.FB_CLEAR_CTRL

Processors that set bit 18 in IA32_ARCH_CAPABILITIES (MSR 10AH) support reads and writes to the IA32_MCU_OPT_CTRL MSR (123H) and to the FB_CLEAR_DIS bit in that MSR (bit position 3). On such processors, the FB_CLEAR_DIS bit can be set to cause the VERW instruction to skip the FB_CLEAR action (FB_CLEAR_DIS does not disable the FB_CLEAR action in the L1D_FLUSH operation for processors in which L1D_FLUSH overwrites the fill buffers). This may be useful to reduce the performance impact of FB_CLEAR where system software deems it warranted (for example, when performance is more critical, or when the untrusted software has no MMIO access). This bit does not disable fill buffer overwriting on exit from Intel SGX enclaves or during enclave execution. Note that FB_CLEAR_DIS has no impact on enumeration (for example, it does not change FB_CLEAR or MD_CLEAR enumeration), and not all processors that enumerate FB_CLEAR support FB_CLEAR_CTRL.

| Register Address (Hex) | Register Address (Dec) | Register Name / Bit Fields | Bit Description | Comment |
|---|---|---|---|---|
| 123H | 291 | IA32_MCU_OPT_CTRL | Microcode Update Option Control (R/W) | CPUID (EAX=07H,ECX=0).EDX[SRBDS_CTRL = 9]==1 |
| 123H | 291 | 0 | RNGDS_MITG_DIS (R/W): When set to 0 (default), SRBDS mitigation is enabled for RDRAND and RDSEED. When set to 1, SRBDS mitigation is disabled for RDRAND and RDSEED executed outside of Intel SGX enclaves. | |
| 123H | 291 | 1 | RTM_ALLOW: When set to 0, XBEGIN will always abort with EAX code 0. When set to 1, XBEGIN behavior depends on the value of IA32_TSX_CTRL[RTM_DISABLE]. | Read/Write. See below criteria for when RTM_ALLOW may be set. |
| 123H | 291 | 2 | RTM_LOCKED: When 1, RTM_ALLOW is locked at zero; writes to RTM_ALLOW will be ignored. | Read-Only status bit. |
| 123H | 291 | 3 | FB_CLEAR_DIS: When set, causes the VERW instruction to not perform the FB_CLEAR action. | |
| 123H | 291 | 63:4 | Reserved | |
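Setting or clearing FB_CLEAR_DIS is a read-modify-write of IA32_MCU_OPT_CTRL that must leave the other fields in the table above untouched. The C sketch below only computes the new value; the RDMSR/WRMSR themselves (ring 0) are not shown, and the function name is hypothetical.

```c
#include <stdbool.h>
#include <stdint.h>

#define MSR_IA32_MCU_OPT_CTRL     0x123u
#define MCU_OPT_CTRL_FB_CLEAR_DIS (1ULL << 3)
#define ARCH_CAP_FB_CLEAR_CTRL    (1ULL << 18)

/* Compute the IA32_MCU_OPT_CTRL value that sets (disable == true) or clears
 * FB_CLEAR_DIS, preserving all other bits of the current value cur.
 * Returns false, leaving *next untouched, if FB_CLEAR_CTRL is not
 * enumerated, in which case the bit must not be written. */
static bool fb_clear_dis_update(uint64_t arch_caps, uint64_t cur, bool disable,
                                uint64_t *next)
{
    if (!(arch_caps & ARCH_CAP_FB_CLEAR_CTRL))
        return false;
    *next = disable ? (cur | MCU_OPT_CTRL_FB_CLEAR_DIS)
                    : (cur & ~MCU_OPT_CTRL_FB_CLEAR_DIS);
    return true;
}
```

For example, with RNGDS_MITG_DIS and RTM_ALLOW both set (current value 0x3), setting FB_CLEAR_DIS yields 0xB and clearing it again yields 0x3.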

Performance Consideration for Processor MMIO Stale Data Vulnerabilities

Although the underlying hardware issue for the Processor MMIO Stale Data Vulnerabilities differs from those of MDS and SRBDS, they are mitigated using similar techniques. Thus, systems that have already applied mitigations for MDS and SRBDS should experience minimal performance impact. For processors that are not affected by MDS and SRBDS and are applying these mitigation techniques for the first time, the performance impact is expected to be similar to the performance impact experienced on processors with the MDS and SRBDS mitigations.

Any potential performance impact associated with mitigation of the Processor MMIO Stale Data vulnerabilities will be workload-dependent and will vary depending on the processor and platform configuration (hardware, software, OS, and VMM), as well as mitigation technique.

Mitigation requires a combination of microcode/BIOS and software/OS/VMM updates. Note that any potential performance impacts may not be observable until all updates with mitigations are installed.

Affected Processors

Note that the SRBDS Update mitigation affects the same processors that received the original SRBDS mitigation, as well as some newer processors that were not affected by SRBDS but are affected by certain Processor MMIO Stale Data Vulnerabilities.

A list of processors affected by these Processor MMIO Stale Data vulnerabilities can be found in the consolidated Affected Processors table under the MMIO: Device Register Partial Write (DRPW) and MMIO: Shared Buffers Data Sampling (SBDS) and Shared Buffers Data Read (SBDR) columns.

Footnotes

2. For more information on MD_CLEAR functionality, refer to Intel Analysis of Microarchitectural Data Sampling (MDS).

3. Only the memory operand variant of VERW has been extended to also overwrite buffers affected by Processor MMIO Stale Data Vulnerabilities. This use of VERW is the same as the use of VERW to overwrite buffers affected by MDS.

4. This example assumes that the Data Segment selector indicates a writable segment.

5. Except Intel® Xeon® E3 processors. Refer to the Affected Processors table.

6. Except Intel Xeon E3 processors.

7. Note that SBDS only applies to processors affected by MFBDS or TAA.

8. Intel® Hyper-Threading Technology (Intel® HT Technology) is Intel’s implementation of simultaneous multithreading (SMT).

9. Except Intel Xeon E3 processors.

10. The OS can disable SMT, but Intel SGX attestations do not reflect this.

11. Implies that the platform TCB is up-to-date.

12. For more information on the [user_check] attribute, refer to the Intel SGX SDK for Linux* OS developer reference.

13. MDS_NO support enumerated by IA32_ARCH_CAPABILITIES[5].

14. L1D_FLUSH support enumerated by CPUID.(EAX=7H,ECX=0):EDX[L1D_FLUSH=28].

15. MD_CLEAR support enumerated by CPUID.(EAX=7H,ECX=0):EDX[MD_CLEAR=10].