It Is Easier Than Ever to Move to a Nonproprietary Programming Language

Subarnarekha Ghosal, compiler technical consulting engineer, Intel Corporation


It is becoming apparent that future computing systems will be heterogeneous. The Matrix Algebra on GPU and Multicore Architectures (MAGMA) project at the University of Tennessee is developing a dense linear algebra library similar to the Linear Algebra Package (LAPACK), but for heterogeneous architectures such as current CPU and GPU systems. MAGMA is an obvious candidate when you look for a sparse solver code sample that performs well across different architectures. This article describes how to use the Intel® DPC++ Compatibility Tool to migrate MAGMA's CUDA* code to Data Parallel C++ (DPC++).

Migration Steps and Hacks

Migration can be done in two ways. The first is manual file-by-file migration, which is a good choice if you are migrating only a few files. The second is to generate a JSON file that records the build commands of a project built with Make or CMake, and then migrate the whole project from that file. MAGMA has a makefile, so this article focuses on the JSON approach.

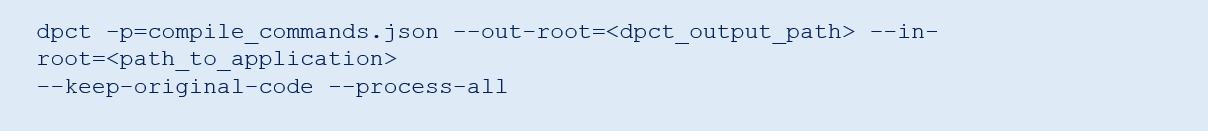

The build options of the input project files (that is, include paths and macro definitions) are collected in a JSON file named compile_commands.json. The file is generated by running the build under intercept-build, for example, intercept-build make (be sure to use Make 4.0 or later). Next, run the Intel DPC++ Compatibility Tool with the dpct command, which has a handful of flags to help with migration. The flags used to migrate the MAGMA code are listed in Table 1.

Table 1. Flags and functions

| Flag | Function |
|---|---|
| -p=compile_commands.json | Specifies the compile_commands.json file. |
| --keep-original-code | Keeps the original CUDA code in the migrated file, followed by the migrated code, so you can see which lines the Intel® DPC++ Compatibility Tool changed. |
| --in-root=<path to input folder> | Specifies the root directory of the source tree to be migrated. |
| --out-root=<path to dpct_output folder> | Specifies a custom path for the output directory. |
| --process-all | By default, the Intel DPC++ Compatibility Tool skips files that contain no syntax or types that need to be migrated, so the skipped files do not appear in the out-root folder. With this option, all files are processed, so the out-root folder replicates the folder structure of the original application. |
| --cuda-include-path=<dir> | Specifies the path to the desired CUDA headers. Use it when the CUDA headers are not in the expected path or when multiple versions of CUDA are installed in the same path. |

For additional flags, see Command Line Options Reference.

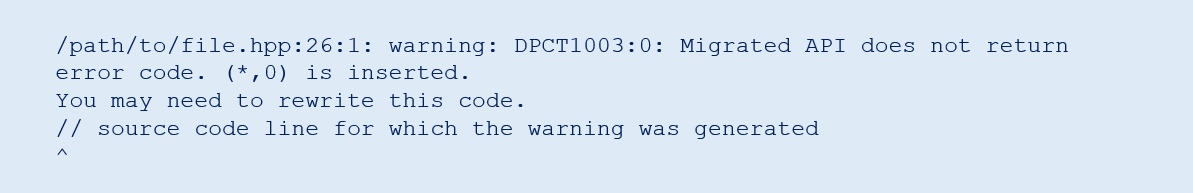

The next step is to interpret the output after you run dpct on the application. The tool annotates places in the code where modifications may be necessary to make the code DPC++ compliant or syntactically correct.

For large projects, redirect the migration logs to a file so that they can be reviewed later. To learn how the tool reports error codes and diagnostics, see Diagnostics Reference.

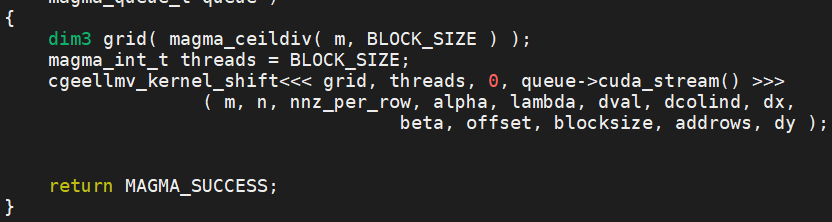

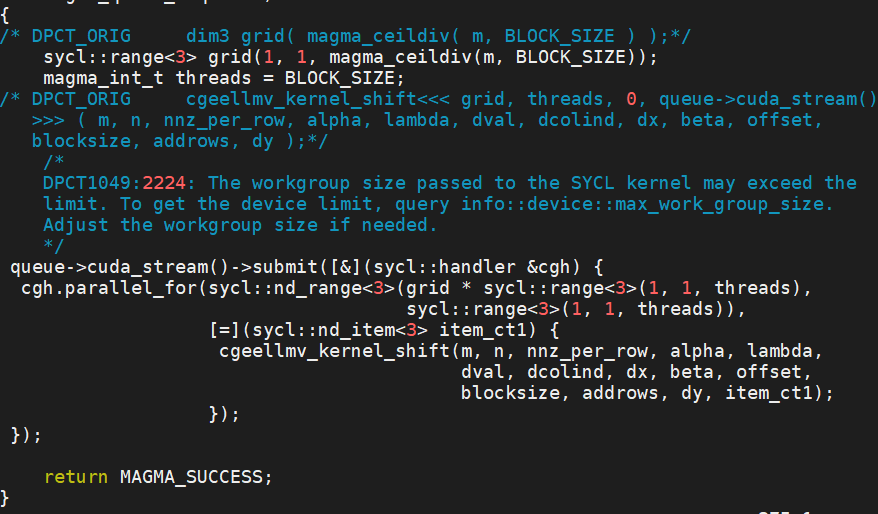

Figures 1 and 2 show a successful migration of a kernel call from the MAGMA library. Since the migration is done with the keep-original-code flag, the original code is also present in the migrated file (Figure 2).

Figure 1. Original CUDA code

Figure 2. Migrated DPC++ code

Some manual effort is required to migrate functions that the tool cannot migrate, but annotations generated by the tool help.

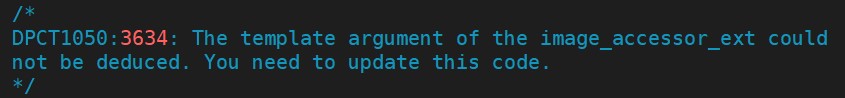

Figure 3. Annotations generated by the tool

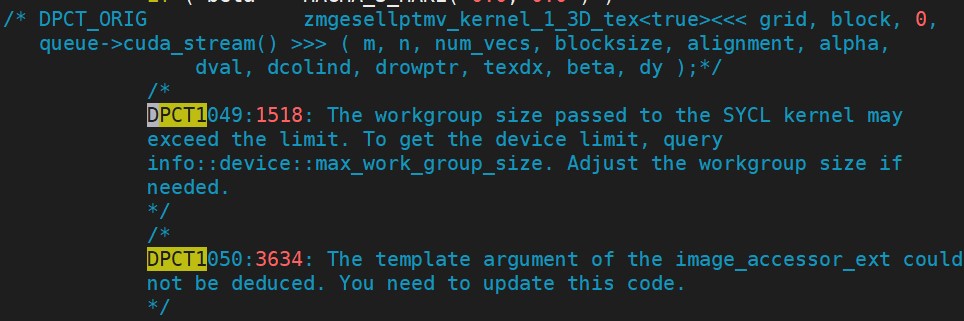

Sometimes, there are multiple annotations for a line of CUDA code.

Figure 4. Multiple annotations generated by the tool
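When a large project is migrated, it can help to see which kinds of annotations occur most often before starting the manual work. The following is a minimal sketch in standard C++, not part of the tool. It assumes that each annotation carries a diagnostic ID of the form DPCT followed by four digits, as listed in the Diagnostics Reference. The function name count_dpct_diagnostics is hypothetical.

```cpp
#include <cassert>
#include <map>
#include <regex>
#include <string>

// Hypothetical helper (not part of the Intel DPC++ Compatibility Tool):
// counts how often each diagnostic ID appears in migrated source text.
// Assumption: IDs look like "DPCT" followed by four digits, e.g. DPCT1003.
std::map<std::string, int> count_dpct_diagnostics(const std::string& text) {
    static const std::regex id_pattern(R"(DPCT\d{4})");
    std::map<std::string, int> counts;
    for (std::sregex_iterator it(text.begin(), text.end(), id_pattern), end;
         it != end; ++it) {
        const std::string id = it->str();
        assert(id.size() == 8);  // "DPCT" plus four digits
        ++counts[id];
    }
    return counts;
}
```

To use it, read a migrated file (or the redirected migration log) into a string and pass it in. The returned map is ordered by ID, so it can be printed directly as a summary.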

Some CUDA libraries have equivalent functions in oneAPI libraries (for example, oneAPI Math Kernel Library, oneAPI Deep Neural Network Library, and oneAPI Video Processing Library). The Intel DPC++ Compatibility Tool can migrate many CUDA library functions to their oneAPI equivalents. The tool calls out the functions that it cannot migrate directly. It is often possible to implement the same functionality manually. For example, the CUDA cusparseDcsrmv function can be manually migrated using a combination of the oneMKL mkl::sparse::set_csr_data and mkl::sparse::gemv functions.
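When a library call such as cusparseDcsrmv is migrated by hand, a small host-side reference is useful for checking that the migrated code still produces the same numbers. The sketch below is plain C++ and does not call oneMKL or cuSPARSE. It computes the non-transposed form of the operation, y = alpha * A * x + beta * y, for a matrix A stored in compressed sparse row (CSR) format. The function name csrmv_reference and the choice of zero-based indexing are assumptions made for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical host-side reference (not the oneMKL or cuSPARSE API).
// Computes y = alpha * A * x + beta * y for a CSR matrix A.
// Assumptions: zero-based indices, no transpose, general matrix.
void csrmv_reference(int rows, int cols, double alpha,
                     const std::vector<double>& values,
                     const std::vector<int>& row_ptr,
                     const std::vector<int>& col_idx,
                     const std::vector<double>& x,
                     double beta, std::vector<double>& y) {
    assert(row_ptr.size() == static_cast<std::size_t>(rows) + 1);
    assert(values.size() == col_idx.size());
    assert(x.size() == static_cast<std::size_t>(cols));
    assert(y.size() == static_cast<std::size_t>(rows));

    for (int i = 0; i < rows; ++i) {
        double sum = 0.0;
        // Nonzeros of row i occupy positions [row_ptr[i], row_ptr[i + 1]).
        for (int k = row_ptr[i]; k < row_ptr[i + 1]; ++k) {
            sum += values[k] * x[col_idx[k]];
        }
        y[i] = alpha * sum + beta * y[i];
    }
}
```

Run the reference on the same CSR arrays that are passed to the migrated code, then compare the two result vectors element by element with a small tolerance, because the device and host may accumulate the sums in a different order.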

The Intel DPC++ Compatibility Tool is evolving continuously with input from users. Known issues with the tool are listed in the Known Issues and Limitations section of the release notes.

The Intel DPC++ Compatibility Tool reduces the time and effort required to migrate CUDA applications to DPC++. It gives helpful annotations and warnings to minimize the manual effort required for sections of code that are not migrated by the tool. Migrating MAGMA from CUDA to DPC++ would have been a tedious job without this tool.

If you are interested in migrating a CUDA application to DPC++, get started with this online training. The Intel DPC++ Compatibility Tool is available as part of Intel® oneAPI Base Toolkit.
