
The OpenVINO™ toolkit quickly deploys applications and solutions that emulate human vision. Based on Convolutional Neural Networks (CNN), the toolkit extends computer vision (CV) workloads across Intel® hardware, maximizing performance. This guide provides steps for building the open-source distribution of the OpenVINO™ toolkit for Raspbian* 32-bit OS using cross-compilation. The steps generally follow the How to Build ARM CPU plugin instructions, with the specific changes required to run everything on the Raspberry Pi 4*.


System requirements

| Note | This guide assumes you have your Raspberry Pi* board up and running with the operating system listed below. |

Hardware

- Raspberry Pi* 4 (Raspberry Pi* 3 Model B+ should work.)

- At least a 16-GB microSD Card

- Intel® Neural Compute Stick 2

- Ethernet Internet connection or compatible wireless network

- Host machine with Docker installed

Target operating system

- Raspbian* Buster, 32-bit
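Before going further, it can help to confirm that the target board really runs the combination listed above. The sketch below assumes the codename is read from VERSION_CODENAME in /etc/os-release and that a 32-bit Raspbian kernel reports armv7l from `uname -m`; `is_supported_target` is a helper name made up for this example.

```shell
# Succeed only for the combination this guide targets:
# codename "buster" on a 32-bit ARM (armv7l) system.
# $1 = codename (VERSION_CODENAME from /etc/os-release)
# $2 = machine name (output of `uname -m`)
is_supported_target() {
    [ "$1" = "buster" ] || return 1
    [ "$2" = "armv7l" ]
}
```

On the Raspberry Pi, one way to call it is `. /etc/os-release && is_supported_target "$VERSION_CODENAME" "$(uname -m)" && echo "target OK"`.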

Setting up your build environment

| Note | This guide contains commands that must be run as root or with sudo to install correctly. |

Make sure your device software is up to date:

sudo apt update && sudo apt upgrade -y

Installing Docker

| Note | The docker-ce packages below come from Docker's own apt repository, which must be configured first. Follow the installation instructions in the official Docker documentation: https://docs.docker.com/engine/install |

sudo apt-get install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

sudo groupadd docker

sudo usermod -aG docker ${USER}

sudo systemctl restart docker
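Group membership added with usermod only takes effect in a new login session, so docker commands may still fail with a permission error until you log out and back in. The sketch below checks whether the current session already has the group; `has_group` is a hypothetical helper, not part of Docker.

```shell
# Succeed if the space-separated group list in $1 contains the group named in $2.
# Padding both sides with spaces avoids matching substrings such as "dockerx".
has_group() {
    case " $1 " in
        *" $2 "*) return 0 ;;
        *) return 1 ;;
    esac
}
```

A possible use is `has_group "$(id -nG)" docker && docker run --rm hello-world`, which only tries to start a container once the session has picked up the docker group.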

Clone the openvino_contrib repository

| Note | This article is based on version 2022.1 of openvino_contrib and the OpenVINO toolkit. |

Download the source code:

git clone --recurse-submodules --single-branch --branch=2022.1 https://github.com/openvinotoolkit/openvino_contrib.git

Go to the arm_plugin directory:

cd openvino_contrib/modules/arm_plugin

Open the Dockerfile.RPi32_buster file in a text editor:

vim dockerfiles/Dockerfile.RPi32_buster

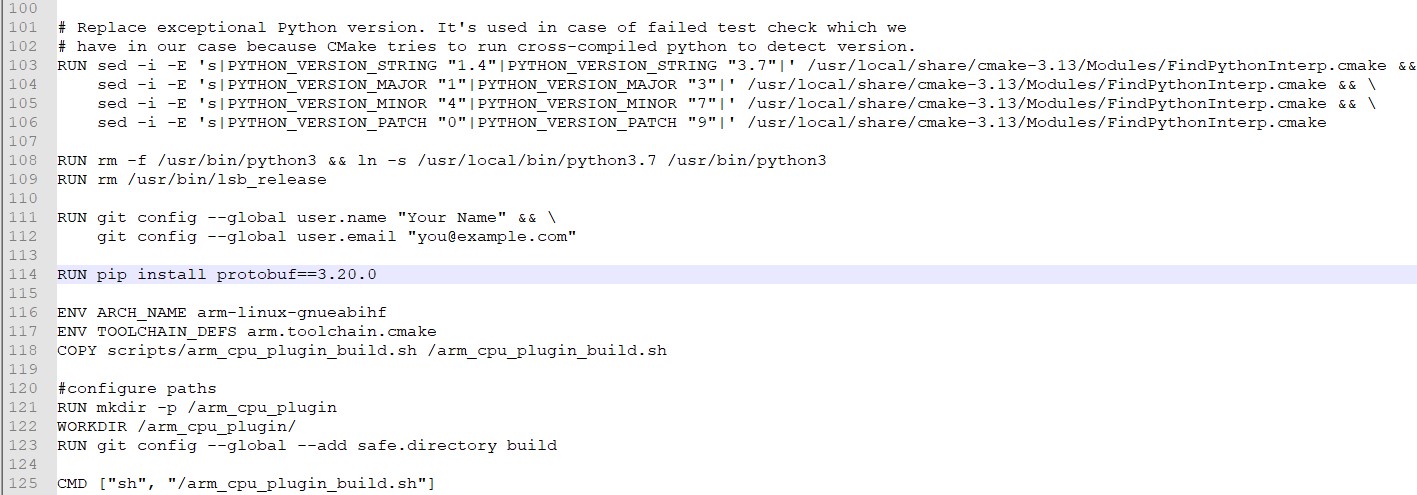

Add the line "RUN pip install protobuf==3.20.0" at line 114, as shown in the image above.

Save the edited file.
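If you prefer not to edit the file by hand, the same insertion could be scripted. This is only a sketch: it assumes GNU sed and that line 114 is still the right position in your checkout, and `insert_line_at` is a hypothetical helper name.

```shell
# Insert TEXT as new line LINENO of FILE, pushing the existing line down.
# A copy of the original file is kept as FILE.bak.
# Nothing is inserted if FILE has fewer than LINENO lines.
# $1 = file, $2 = line number, $3 = text (no backslashes or newlines)
insert_line_at() {
    file=$1
    lineno=$2
    text=$3
    sed -i.bak "${lineno}i\\
${text}" "$file"
}
```

For this guide the call would be `insert_line_at dockerfiles/Dockerfile.RPi32_buster 114 "RUN pip install protobuf==3.20.0"`. Printing the surrounding lines afterwards with `sed -n '112,116p' dockerfiles/Dockerfile.RPi32_buster` lets you compare the result with the image.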

Open the arm_cpu_plugin_build.sh file in a text editor:

vim scripts/arm_cpu_plugin_build.sh

Edit lines 77, 78, 79 and 136 so that they read as shown below. The leading number on each line is its line number in the file, not part of the content:

77 checkSrcTree $OPENCV_HOME https://github.com/opencv/opencv.git 4.5.5-openvino-2022.1 4.x

78 checkSrcTree $OPENVINO_HOME https://github.com/openvinotoolkit/openvino.git 2022.1.0 releases/2022/1

81 checkSrcTree $OMZ_HOME https://github.com/openvinotoolkit/open_model_zoo.git 2022.1.0 releases/2022/1

136 -DENABLE_INTEL_MYRIAD=ON -DCMAKE_BUILD_TYPE=$BUILD_TYPE \

Save the edited file.
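A quick way to confirm the edits were saved is to search the script for the fragments shown above. The sketch below does that with grep; `check_build_script` is a name invented for this example, and the fragments are copied from the edited lines in this guide.

```shell
# Print every expected fragment that is missing from FILE.
# Succeed only if all fragments are present.
check_build_script() {
    file=$1
    missing=0
    for fragment in \
        "opencv.git 4.5.5-openvino-2022.1" \
        "openvino.git 2022.1.0" \
        "open_model_zoo.git 2022.1.0" \
        "-DENABLE_INTEL_MYRIAD=ON"
    do
        # -F matches the fragment literally; -- stops option parsing
        # so that a fragment starting with "-" is not read as a flag.
        if ! grep -qF -- "$fragment" "$file"; then
            echo "missing: $fragment"
            missing=1
        fi
    done
    return "$missing"
}
```

Running `check_build_script scripts/arm_cpu_plugin_build.sh` prints nothing and returns success when all four edits are in place.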

Cross-Compile OpenVINO™ toolkit in Docker container environment

In this step, we run the script that downloads and cross-compiles the OpenVINO™ toolkit and other components, such as OpenCV*, in the Docker container environment:

Go to the ARM CPU plugin directory (skip this step if you are still in it from the previous section):

cd openvino_contrib/modules/arm_plugin

Build a Docker* image:

docker image build -t arm-plugin -f dockerfiles/Dockerfile.RPi32_buster .

Build the plugin in the Docker* container:

The build is performed by the /arm_cpu_plugin_build.sh script, which runs inside the /arm_cpu_plugin directory (the container's default command). All intermediate results and build artifacts are stored in that working directory.

Mount a host directory over the working directory to keep all results outside the container:

mkdir build

docker container run --rm -ti -v $PWD/build:/arm_cpu_plugin arm-plugin

| Note | There are a few environment variables that control /arm_cpu_plugin_build.sh script execution. |

After the build completes, OV_ARM_package.tar.gz is generated in the build folder:

ls build
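Listing the folder only shows that the file exists. To also confirm that the archive is readable before moving it to the Raspberry Pi, something like the following could be used; `archive_ok` is a hypothetical helper name.

```shell
# Succeed if the file in $1 exists, is non-empty, and can be listed
# as a gzip-compressed tar archive.
archive_ok() {
    [ -s "$1" ] && tar -tzf "$1" > /dev/null 2>&1
}
```

For example, `archive_ok build/OV_ARM_package.tar.gz && echo "package looks intact"`.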

Transfer the OV_ARM_package.tar.gz to the target device (Raspberry Pi 4* 32-bit Buster)

There are various ways of transferring the package to the target device (Raspberry Pi 4*), such as secure copy directly to the target device or copying the package to a USB thumb drive.

This article shows how to mount a USB thumb drive on the host machine and copy the build package to it.

Insert the USB thumb drive into a USB port on the host machine, then identify its device name using the command below:

sudo fdisk -l

Once you have identified the device (for example /dev/sda), mount it on /mnt:

sudo mount /dev/sda /mnt

Next, copy the OpenVINO package to the USB thumb drive:

sudo cp -rf build/OV_ARM_package.tar.gz /mnt/
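Because the package is about to leave the host, it may be worth comparing checksums of the original and the copy before removing the drive. A minimal sketch, assuming sha256sum from GNU coreutils is available; `same_sha256` is a made-up helper name.

```shell
# Succeed if the two files have identical SHA-256 digests.
# Fails if either file cannot be read, because its digest is then empty.
same_sha256() {
    a=$(sha256sum "$1" 2>/dev/null | cut -d' ' -f1)
    b=$(sha256sum "$2" 2>/dev/null | cut -d' ' -f1)
    [ -n "$a" ] && [ "$a" = "$b" ]
}
```

A possible sequence is `same_sha256 build/OV_ARM_package.tar.gz /mnt/OV_ARM_package.tar.gz && sudo umount /mnt`, so the drive is only unmounted once the copy matches.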

Verifying the build package

After completing the cross-compilation and successfully copying OV_ARM_package.tar.gz to the target device (Raspberry Pi 4*), verify the package with the steps below.

Install the compilation tool

sudo apt update

sudo apt install cmake -y

Extract the OV_ARM_package.tar.gz package

mkdir ~/openvino_dist/

tar -xvzf OV_ARM_package.tar.gz -C ~/openvino_dist/

Source the setup variables script

source ~/openvino_dist/setupvars.sh

Compile the Sample code

cd ~/openvino_dist/samples/cpp

./build_samples.sh

To verify that the toolkit, the Intel® Neural Compute Stick 2, and the ARM* plugin work on your device, complete the following steps:

- Run the sample application hello_query_device to confirm that all libraries load correctly.

- Download a pre-trained model.

- Select an input for the neural network (for example, an image file).

- Configure the Intel® Neural Compute Stick 2 Linux* USB driver.

- Run benchmark_app with the selected model and input.

Sample applications

The Intel® OpenVINO™ toolkit includes sample applications utilizing the Inference Engine and Intel® Neural Compute Stick 2. One of the applications is hello_query_device, which can be found in the following directory:

~/inference_engine_cpp_samples_build/armv7l/Release

Run the following commands to test hello_query_device:

cd ~/inference_engine_cpp_samples_build/armv7l/Release

./hello_query_device

It should print a list describing the devices available for inference on the system.

Downloading a model

The application needs a model to pass the input through. You can obtain models for the Intel® OpenVINO™ toolkit in IR format by:

- Using the Model Optimizer to convert an existing model from one of the supported frameworks into IR format for the Inference Engine. Note that the Model Optimizer package is not available for Raspberry Pi*.

- Using the Model Downloader tool to download from the Open Model Zoo (public pre-trained models only).

- Downloading the IR files directly from storage.openvinotoolkit.org

For our purposes, downloading directly is easiest. Use the following commands to grab a person-vehicle-bike detection model:

wget https://storage.openvinotoolkit.org/repositories/open_model_zoo/2022.1/models_bin/3/person-vehicle-bike-detection-crossroad-0078/FP16/person-vehicle-bike-detection-crossroad-0078.bin -O ~/Downloads/person-vehicle-bike-detection-crossroad-0078.bin

wget https://storage.openvinotoolkit.org/repositories/open_model_zoo/2022.1/models_bin/3/person-vehicle-bike-detection-crossroad-0078/FP16/person-vehicle-bike-detection-crossroad-0078.xml -O ~/Downloads/person-vehicle-bike-detection-crossroad-0078.xml
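An IR model consists of an .xml file and a .bin file, and both are needed for inference. Note also that wget -O does not create the target directory, so run `mkdir -p ~/Downloads` first if it does not exist. The sketch below checks that both halves of the model arrived; `ir_pair_present` is a hypothetical helper name.

```shell
# Succeed if directory $1 holds non-empty $2.xml and $2.bin files.
ir_pair_present() {
    [ -s "$1/$2.xml" ] && [ -s "$1/$2.bin" ]
}
```

For the model in this guide: `ir_pair_present ~/Downloads person-vehicle-bike-detection-crossroad-0078 || echo "model download incomplete"`.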

| Note | The Intel® Neural Compute Stick 2 requires models optimized for the 16-bit floating point format known as FP16. If your model is in a different format, convert it to FP16 with the Model Optimizer on a separate machine, because the Model Optimizer is not supported on Raspberry Pi*. |

Input for the neural network

The last item needed is input for the neural network. For the model we’ve downloaded, you need an image with three channels of color. Download a sample image to your board:

wget https://cdn.pixabay.com/photo/2018/07/06/00/33/person-3519503_960_720.jpg -O ~/Downloads/person.jpg

Configuring the Intel® Neural Compute Stick 2 Linux USB Driver

Some udev rules must be added to allow the system to recognize Intel® NCS2 USB devices.

| Note | If the current user is not a member of the users group, run the following command and reboot your device. |

sudo usermod -a -G users "$(whoami)"

Set up the OpenVINO™ environment:

source /home/pi/openvino_dist/setupvars.sh

To perform inference on the Intel® Neural Compute Stick 2, install the USB rules by running the install_NCS_udev_rules.sh script:

sh /home/pi/openvino_dist/install_dependencies/install_NCS_udev_rules.sh

The USB driver should be installed correctly now. If the Intel® Neural Compute Stick 2 is not detected when running demos, restart your device and try again.
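If the stick is still not detected, one thing to check is whether it shows up on the USB bus at all. The sketch below searches lsusb output for the USB vendor ID 03e7, which is assumed here to be the ID the Intel® Neural Compute Stick 2 reports; confirm it against your own lsusb output. `has_myriad_usb` is a made-up helper name.

```shell
# Succeed if the lsusb-style text in $1 lists a device with vendor ID 03e7.
# The vendor ID is an assumption of this sketch; adjust it if your stick differs.
has_myriad_usb() {
    printf '%s\n' "$1" | grep -qi 'ID 03e7:'
}
```

On the Raspberry Pi this could be called as `has_myriad_usb "$(lsusb)" || echo "stick not visible on USB"`.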

Running benchmark_app

When the model is downloaded, an input image is available, and the Intel® Neural Compute Stick 2 is plugged into a USB port, use the following command to run the benchmark_app:

cd ~/inference_engine_cpp_samples_build/armv7l/Release

./benchmark_app -i ~/Downloads/person.jpg -m ~/Downloads/person-vehicle-bike-detection-crossroad-0078.xml -d MYRIAD

This runs the application with the selected options. The -d flag tells the program which device to use for inference; specifying MYRIAD activates the MYRIAD plugin, which uses the Intel® Neural Compute Stick 2. After the command executes successfully, the terminal displays inference statistics similar to the output below. You can also use the CPU plugin to run inference on the ARM CPU of your Raspberry Pi 4* device. However, the model used in this example is not supported by the ARM* plugin; refer to the ARM* plugin operation set specification for operation support.

[ INFO ] First inference took 410.75 ms

[Step 11/11] Dumping statistics report

[ INFO ] Count: 388 iterations

[ INFO ] Duration: 60681.72 ms

[ INFO ] Latency:

[ INFO ] Median: 622.99 ms

[ INFO ] Average: 623.40 ms

[ INFO ] Min: 444.03 ms

[ INFO ] Max: 868.18 ms

[ INFO ] Throughput: 6.39 FPS

If the application ran successfully on your Intel® NCS2, OpenVINO™ toolkit and Intel® Neural Compute Stick 2 are set up correctly for use on your device.
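The throughput figure in the report can be cross-checked from the iteration count and the total duration, which is a quick sanity check when comparing runs. A small sketch of that arithmetic using awk; `throughput_fps` is a name chosen for this example.

```shell
# Print iterations per second, to two decimals, for $1 iterations
# completed in $2 milliseconds.
throughput_fps() {
    awk -v n="$1" -v ms="$2" 'BEGIN { printf "%.2f\n", n * 1000 / ms }'
}
```

With the numbers from the report above, `throughput_fps 388 60681.72` prints 6.39, which matches the Throughput line.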

Environment variables

You must update several environment variables before compiling and running OpenVINO toolkit applications. Run the following script to set the environment variables temporarily:

source /home/pi/openvino_dist/setupvars.sh

**(Optional)** The OpenVINO™ environment variables are removed when you close the shell. As an option, you can permanently set the environment variables as follows:

echo "source /home/pi/openvino_dist/setupvars.sh" >> ~/.bashrc

To test your change, open a new terminal. You will see the following:

[setupvars.sh] OpenVINO environment initialized
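Running the echo command above more than once adds the same line to ~/.bashrc each time. If that is a concern, the append could be made idempotent along these lines; `append_once` is a hypothetical helper name.

```shell
# Append the line in $2 to the file in $1 only if the file
# does not already contain that exact line.
append_once() {
    touch "$1" || return 1
    # -x matches whole lines, -F treats the text literally.
    grep -qxF -- "$2" "$1" || printf '%s\n' "$2" >> "$1"
}
```

For this guide the call would be `append_once ~/.bashrc "source /home/pi/openvino_dist/setupvars.sh"`.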

This completes the cross-compilation and build procedure for the open-source distribution of the OpenVINO™ toolkit for Raspbian* OS, and its use with the Intel® Neural Compute Stick 2 and the ARM* plugin.