Introduction

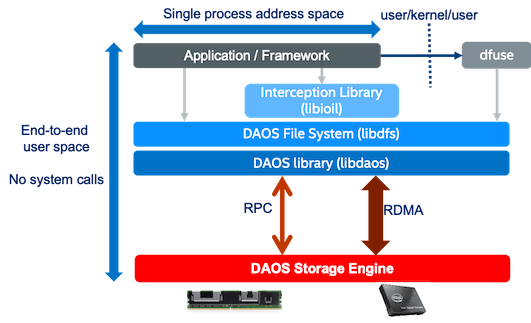

The DAOS File System (DFS) library (libdfs) allows a regular POSIX namespace to be encapsulated into a DAOS container and accessed directly by applications. If modifying the application source code to use the libdfs APIs is not an option, the alternative is to use the DAOS FUSE (dfuse) daemon to expose libdfs transparently. However, FUSE adds overhead of its own, so dfuse is often paired with an interception library (libioil) that avoids some of the FUSE performance bottlenecks by bypassing the operating system (OS) for read/write operations. For more information, please refer to the POSIX Namespace document at daos.io.

This article shows how to use the three code paths available to applications (dfuse, dfuse+libioil, and libdfs) to access data in a POSIX namespace stored in a DAOS container. In the case of libdfs (which corresponds to the direct path), the necessary source code modifications are shown alongside their traditional POSIX equivalents.

Creating the Container

We assume the pool dfs_pool and its container dfs_cont exist for all cases here. To create those, you can run the following:

$ dmg pool create --label=dfs_pool --size=8G

Creating DAOS pool with automatic storage allocation: 8.0 GB total, 6,94 tier ratio

Pool created with 100.00%,0.00% storage tier ratio

--------------------------------------------------

UUID : b405ad74-5136-4325-a549-eea40d76fc66

Service Ranks : 0

Storage Ranks : 0

Total Size : 8.0 GB

Storage tier 0 (SCM) : 8.0 GB (8.0 GB / rank)

Storage tier 1 (NVMe): 0 B (0 B / rank)

$ daos container create --type=POSIX --pool=dfs_pool --label=dfs_cont

Container UUID : 51fe3022-6b32-47a9-8485-1fe669deafe1

Container Label: dfs_cont

Container Type : POSIX

Successfully created container 51fe3022-6b32-47a9-8485-1fe669deafe1

The pool is created with a size of 8 GB, and the type of the container is set to POSIX. The type designates a pre-defined layout of the data in terms of the underlying DAOS object model. Other available types include HDF5 and PYTHON.

If we run a container check, we should see two objects inside the newly created POSIX container:

$ daos container check --pool=dfs_pool --cont=dfs_cont

check container dfs_cont started at: Wed Nov 10 05:49:47 2021

check container dfs_cont completed at: Wed Nov 10 05:49:47 2021

checked: 2

skipped: 0

inconsistent: 0

run_time: 1 seconds

scan_speed: 2 objs/sec

One object corresponds to the POSIX container superblock that holds the metadata, and the other is the root directory (that is, "/") in the namespace. For more information, please refer to the DFS README in the DAOS GitHub repository.

Using the DFS Container with Dfuse

FUSE (Filesystem in Userspace) lets an ordinary user-space process provide a file system that is accessed through the regular kernel interface. FUSE has many applications. For example, it can be used to mount encrypted file systems (see EncFS), remote file systems (see sshfs or s3fs), or specific devices such as iPhones and iPods (see iFuse). A comprehensive list of projects is available on the Arch Linux wiki page for FUSE and on the Wikipedia page for FUSE.

In the case of dfuse, what is provided to the kernel is a remote file system backed by a DAOS container.

The first step to using dfuse is creating the mount point for our POSIX namespace. Let’s use /opt/dfs:

$ mkdir /opt/dfs

Now we can run dfuse. To see error messages while testing, pass the option --foreground. In that mode dfuse keeps the terminal occupied, so open a new terminal for the commands that follow:

$ dfuse --pool=dfs_pool --container=dfs_cont --foreground --mountpoint=/opt/dfs

To check that dfuse is mounted, run:

$ df -h | grep dfuse

dfuse 7.5G 2.2M 7.5G 1% /opt/dfs

NOTE: Caching in dfuse is enabled by default. This can cause parallel applications to fail, as data is not synchronized between parallel caches. It is possible to disable caching in dfuse with the option --disable-caching. For more information, please refer to the Caching section of the DAOS File System documentation.

Let’s create a file by writing something to it (followed by reading its content back):

$ echo "Hello World" > /opt/dfs/hello.txt

$ cat /opt/dfs/hello.txt

Hello World

$

You can run a container check to verify that we have three DAOS objects now, where the extra object is the file we just created:

$ daos container check --pool=dfs_pool --cont=dfs_cont

check container dfs_cont started at: Wed Nov 10 05:56:43 2021

check container dfs_cont completed at: Wed Nov 10 05:56:43 2021

checked: 3

skipped: 0

inconsistent: 0

run_time: 1 seconds

scan_speed: 3 objs/sec

When done, dfuse can be unmounted via fusermount3:

$ fusermount3 -u /opt/dfs

Dfuse+Libioil

To avoid some FUSE performance bottlenecks, we can intercept all the read/write (and lseek) system calls in our application with libioil to bypass the OS.

To showcase how, we created the following C program:

#include <fcntl.h>

#include <unistd.h>

#include <stdio.h>

#include <errno.h>

#include <string.h>

#define CHECK_ERROR(ret, funcname) \

do { \

if (ret < 0) \

{ \

printf("Error on %s(): %s\n", funcname, \

strerror(errno)); \

return -1; \

} \

} while (0)

int main (int argc, char *argv[])

{

int fd;

int ret;

off_t offset;

ssize_t bytes;

char buf[128];

if (argc < 2)

{

printf("USE: %s <file-path>\n", argv[0]);

return -1;

}

fd = open(argv[1], O_RDWR | O_CREAT | O_TRUNC, S_IRWXU);

CHECK_ERROR(fd, "open");

bytes = write(fd, "Hello World", strlen("Hello World"));

CHECK_ERROR(bytes, "write");

offset = lseek(fd, 0, SEEK_SET);

CHECK_ERROR(offset, "lseek");

bytes = read(fd, buf, 128);

CHECK_ERROR(bytes, "read");

printf("data read = %.*s\n", (int)bytes, buf); /* %.*s expects an int precision */

ret = close(fd);

CHECK_ERROR(ret, "close");

return 0;

}

As you can see, the code is straightforward. The program first opens the file passed as an argument (creating it if it does not exist, truncating it if it does). It then writes "Hello World" to it. After that, the program seeks the descriptor to position zero (the beginning of the file), reads the file’s contents, prints them out, and closes the file.

We run perf to visualize the system calls before using the interception library. perf allows us to capture a large set of predefined events:

# perf record -e 'syscalls:*open' -e 'syscalls:*write' -e 'syscalls:*lseek' -e 'syscalls:*read' -e 'syscalls:*close' ./write_and_read /opt/dfs/hello.txt

data read = Hello World

[ perf record: Woken up 28 times to write data ]

[ perf record: Captured and wrote 0.028 MB perf.data (22 samples) ]

# perf script

...

write_and_read 45849 [056] 13442525.391293: syscalls:sys_enter_open: filename: 0x7ffe7f0bd535, flags: 0x00000242, mode: 0x000001c0

write_and_read 45849 [056] 13442525.393287: syscalls:sys_exit_open: 0x3

write_and_read 45849 [056] 13442525.393289: syscalls:sys_enter_write: fd: 0x00000003, buf: 0x004009ca, count: 0x0000000b

write_and_read 45849 [056] 13442525.393832: syscalls:sys_exit_write: 0xb

write_and_read 45849 [056] 13442525.393834: syscalls:sys_enter_lseek: fd: 0x00000003, offset: 0x00000000, whence: 0x00000000

write_and_read 45849 [056] 13442525.393834: syscalls:sys_exit_lseek: 0x0

write_and_read 45849 [056] 13442525.393836: syscalls:sys_enter_read: fd: 0x00000003, buf: 0x7ffe7f0bbf10, count: 0x00000080

write_and_read 45849 [056] 13442525.393837: syscalls:sys_exit_read: 0xb

write_and_read 45849 [056] 13442525.393885: syscalls:sys_enter_write: fd: 0x00000001, buf: 0x7f720d41f000, count: 0x00000018

write_and_read 45849 [056] 13442525.393916: syscalls:sys_exit_write: 0x18

write_and_read 45849 [056] 13442525.393919: syscalls:sys_enter_close: fd: 0x00000003

write_and_read 45849 [056] 13442525.393920: syscalls:sys_exit_close: 0x0

We can see that all the system calls issued from the code (open(), write(), lseek(), read(), and close()) do indeed call the system. Notice the extra write() just before close(), which corresponds to the printf() where the write is to descriptor 1 (standard output) instead of 3.
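The hexadecimal arguments in the trace map directly onto the source code. As a quick cross-check, the following sketch recomputes them. It assumes the Linux flag values used on the x86_64 machine that produced the trace (other platforms may encode the open() flags differently), and the function names are invented for this illustration.

```c
#include <fcntl.h>
#include <string.h>
#include <sys/stat.h>

/* Flags given to open() in write_and_read. On Linux/x86_64 this is
 * O_RDWR (0x2) | O_CREAT (0x40) | O_TRUNC (0x200) = 0x242. */
int trace_open_flags(void)
{
    return O_RDWR | O_CREAT | O_TRUNC;
}

/* Mode given to open(): S_IRWXU is octal 0700, which is 0x1c0. */
unsigned int trace_open_mode(void)
{
    return (unsigned int)S_IRWXU;
}

/* Byte count of the write() to descriptor 3: 11, which is 0xb. */
size_t trace_file_write_len(void)
{
    return strlen("Hello World");
}

/* Byte count of the write() to descriptor 1 issued by printf():
 * "data read = " (12) + "Hello World" (11) + newline (1) = 24 = 0x18. */
size_t trace_stdout_write_len(void)
{
    return strlen("data read = Hello World\n");
}
```

The remaining values follow the same logic: the read() count of 0x80 is the 128-byte buffer size, and its return value of 0xb shows that 11 bytes came back.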

Let’s now add the interception library. All we have to do is to add the path to the libioil.so file to the LD_PRELOAD environment variable:

$ export LD_PRELOAD=<DAOS_INSTALLATION_DIR>/lib64/libioil.so:$LD_PRELOAD

If we run perf again, we get the following:

# perf record -e 'syscalls:*open' -e 'syscalls:*write' -e 'syscalls:*lseek' -e 'syscalls:*read' -e 'syscalls:*close' ./write_and_read /opt/dfs/hello.txt

data read = Hello World

[ perf record: Woken up 25 times to write data ]

[ perf record: Captured and wrote 1.154 MB perf.data (12292 samples) ]

# perf script

...

write_and_read 45944 [054] 13442652.584153: syscalls:sys_enter_open: filename: 0x7ffc4d31650f, flags: 0x00000242, mode: 0x000001c0

write_and_read 45944 [054] 13442652.586117: syscalls:sys_exit_open: 0x3

...

write_and_read 45944 [019] 13442652.720267: syscalls:sys_enter_write: fd: 0x00000001, buf: 0x7f986881c000, count: 0x00000018

write_and_read 45944 [019] 13442652.720298: syscalls:sys_exit_write: 0x18

write_and_read 45944 [019] 13442652.720317: syscalls:sys_enter_close: fd: 0x00000003

write_and_read 45944 [019] 13442652.720318: syscalls:sys_exit_close: 0x0

...

This time, there are no events between open() and close() for descriptor 3. Therefore, the interception library is effectively redirecting those to libdfs.
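The general mechanism behind this kind of redirection is symbol interposition: the dynamic linker resolves write() to the first definition it finds, searching the executable, then any preloaded objects, then libc. The toy sketch below illustrates the idea only and says nothing about how libioil is implemented internally. To stay a single file it defines write() in the program itself rather than in a preloaded shared object, it assumes Linux with glibc defaults, and intercepted_writes is a name made up for the example.

```c
#include <sys/syscall.h>
#include <sys/types.h>
#include <unistd.h>

/* Number of write() calls that went through the wrapper below. */
long intercepted_writes = 0;

/*
 * Our own definition of write(). Because it is found before the one in
 * libc, every call to write() made by this program lands here first.
 */
ssize_t write(int fd, const void *buf, size_t count)
{
    intercepted_writes++;

    /* An interception library would decide at this point whether the
     * descriptor is one it manages. This toy version always forwards
     * the request to the kernel with a raw system call. */
    return (ssize_t)syscall(SYS_write, fd, buf, count);
}
```

With a wrapper like this in place, the application source does not change at all, which is the property that makes the LD_PRELOAD approach attractive when the code cannot be modified.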

One can also check if the interception library is working by exporting the D_IL_REPORT environment variable. For example, without interception we have:

$ D_IL_REPORT=-1 ./write_and_read /opt/dfs/hello.txt

data read = Hello World

With interception:

$ D_IL_REPORT=-1 LD_PRELOAD=<DAOS_INSTALLATION_DIR>/lib64/libioil.so ./write_and_read /opt/dfs/hello.txt

[libioil] Intercepting write of size 11

[libioil] Intercepting read of size 128

data read = Hello World

[libioil] Performed 1 reads and 1 writes from 1 files

For more information, please refer to the Monitoring Activity section of the DAOS File System documentation.

Libdfs

In this section, we will transform the simple C code shown above so it directly uses the API provided by libdfs. Be aware that the example is limited and does not cover the whole API. If you want to learn more, please refer to the daos_fs.h header file in the DAOS GitHub repository.

#include <fcntl.h>

#include <daos.h>

#include <daos_fs.h>

#include <sys/stat.h>

#include <stdio.h>

#include <errno.h>

#include <string.h>

/* DFS calls return 0 on success and a positive errno value on failure. */

#define CHECK_ERROR(ret, funcname) \

do { \

if (ret != 0) \

{ \

printf("Error on %s(): %s\n", funcname, \

strerror(ret)); \

return -1; \

} \

} while (0)

#define CHECK_ERROR_DAOS(ret, funcname) \

do { \

if (ret < 0) \

{ \

printf("Error on %s(): %d\n", funcname, ret); \

return -1; \

} \

} while (0)

int main (int argc, char *argv[])

{

daos_handle_t poh;

daos_handle_t coh;

dfs_t *dfs;

dfs_obj_t *file_obj;

d_sg_list_t sgl_data;

d_iov_t iov_buf;

int ret;

char buf_in[128];

char buf_out[128];

daos_size_t read_size;

if (argc < 4)

{

printf("USE: %s <pool_label> <container_label> <file_name>\n",

argv[0]);

return -1;

}

/** init phase */

ret = daos_init();

CHECK_ERROR_DAOS(ret, "daos_init");

ret = daos_pool_connect(argv[1], NULL, DAOS_PC_RW, &poh, NULL, NULL);

CHECK_ERROR_DAOS(ret, "daos_pool_connect");

ret = daos_cont_open(poh, argv[2], DAOS_COO_RW, &coh, NULL, NULL);

CHECK_ERROR_DAOS(ret, "daos_cont_open");

ret = dfs_mount(poh, coh, O_RDWR, &dfs);

CHECK_ERROR(ret, "dfs_mount");

/** equivalent to open() */

ret = dfs_open(dfs, NULL, argv[3], S_IRWXU | S_IFREG,

O_RDWR | O_CREAT | O_TRUNC, 0, 0, NULL, &file_obj);

CHECK_ERROR(ret, "dfs_open");

/** equivalent to write() */

sgl_data.sg_nr = 1;

sgl_data.sg_nr_out = 0;

sgl_data.sg_iovs = &iov_buf;

strcpy(buf_in, "Hello World");

d_iov_set(&iov_buf, buf_in, strlen(buf_in));

ret = dfs_write(dfs, file_obj, &sgl_data, 0, NULL);

CHECK_ERROR(ret, "dfs_write");

/** equivalent to read() */

d_iov_set(&iov_buf, buf_out, 128);

ret = dfs_read(dfs, file_obj, &sgl_data, 0, &read_size, NULL);

CHECK_ERROR(ret, "dfs_read");

printf("data read = %.*s\n", (int)read_size, buf_out); /* %.*s expects an int precision */

/** equivalent to close() */

ret = dfs_release(file_obj);

CHECK_ERROR(ret, "dfs_release");

/** finish phase */

ret = dfs_umount(dfs);

CHECK_ERROR(ret, "dfs_umount");

ret = daos_cont_close(coh, NULL);

CHECK_ERROR_DAOS(ret, "daos_cont_close");

ret = daos_pool_disconnect(poh, NULL);

CHECK_ERROR_DAOS(ret, "daos_pool_disconnect");

ret = daos_fini();

CHECK_ERROR_DAOS(ret, "daos_fini");

return 0;

}

In the code itself, comments are added to show what parts of the new code correspond to the old code. There are also two new parts: init and finish. Let’s go piece by piece:

Init

ret = daos_init();

CHECK_ERROR_DAOS(ret, "daos_init");

ret = daos_pool_connect(argv[1], NULL, DAOS_PC_RW, &poh, NULL, NULL);

CHECK_ERROR_DAOS(ret, "daos_pool_connect");

ret = daos_cont_open(poh, argv[2], DAOS_COO_RW, &coh, NULL, NULL);

CHECK_ERROR_DAOS(ret, "daos_cont_open");

ret = dfs_mount(poh, coh, O_RDWR, &dfs);

CHECK_ERROR(ret, "dfs_mount");

The init phase has to be executed only once per program run. It is composed of four function calls:

- daos_init(): Initializes the DAOS client library.

- daos_pool_connect(): Connects to the pool.

- daos_cont_open(): Opens the container inside the pool. We assume here that the container exists. If not, one can also call daos_cont_create() to create it here.

- dfs_mount(): Creates a handle that allows file and directory operations against the container.

Open

ret = dfs_open(dfs, NULL, argv[3], S_IRWXU | S_IFREG,

O_RDWR | O_CREAT | O_TRUNC, 0, 0, NULL, &file_obj);

CHECK_ERROR(ret, "dfs_open");

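The function open() is replaced by dfs_open(), which opens the file, creating it if it does not exist and truncating it if it does (O_CREAT | O_TRUNC). The second argument is the parent directory; passing NULL selects the root directory of the container.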

Write

sgl_data.sg_nr = 1;

sgl_data.sg_nr_out = 0;

sgl_data.sg_iovs = &iov_buf;

strcpy(buf_in, "Hello World");

d_iov_set(&iov_buf, buf_in, strlen(buf_in));

ret = dfs_write(dfs, file_obj, &sgl_data, 0, NULL);

CHECK_ERROR(ret, "dfs_write");

The call to write() is replaced by a call to dfs_write(). For dfs_write(), we can’t pass a constant string as input. The input must be passed as a buffer inside a d_sg_list_t data structure (sgl_data). To do that, d_iov_set() is called to set the raw input buffer to a d_iov_t data structure (iov_buf), which is then used inside the d_sg_list_t structure.
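Readers coming from POSIX may find it helpful to compare the d_sg_list_t and d_iov_t pair with the struct iovec array taken by writev(). The sketch below is plain POSIX code, not DAOS code, and is offered only as an analogy; gather_write is a hypothetical helper name.

```c
#include <string.h>
#include <sys/uio.h>

/* Write two separate buffers with a single call.
 * Returns the number of bytes written, or -1 with errno set. */
ssize_t gather_write(int fd, const char *first, const char *second)
{
    struct iovec iov[2];

    /* Each entry describes one buffer, much as d_iov_set() does. */
    iov[0].iov_base = (void *)first;
    iov[0].iov_len  = strlen(first);
    iov[1].iov_base = (void *)second;
    iov[1].iov_len  = strlen(second);

    /* The array plays the role of sg_iovs and the count that of sg_nr. */
    return writev(fd, iov, 2);
}
```

The libdfs example uses a list with a single entry (sg_nr = 1), which corresponds to calling writev() with one iovec.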

Read

d_iov_set(&iov_buf, buf_out, 128);

ret = dfs_read(dfs, file_obj, &sgl_data, 0, &read_size, NULL);

CHECK_ERROR(ret, "dfs_read");

printf("data read = %.*s\n", (int)read_size, buf_out); /* %.*s expects an int precision */

The call to read() is replaced by a call to dfs_read(). As with dfs_write(), we must pass the buffer as a d_sg_list_t variable. In this case, we change the pointer assigned to the iov_buf variable (to buf_out). Since iov_buf is already assigned to sgl_data, we can pass that to dfs_read().
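One difference from the POSIX version is easy to miss: the lseek() call has no counterpart. The fourth argument of both dfs_write() and dfs_read() is the file offset (0 in both calls above), so each call names its own position instead of relying on a shared file pointer. The closest POSIX analogue is pwrite() and pread(), sketched below in plain POSIX code with a hypothetical helper name.

```c
#include <string.h>
#include <unistd.h>

/* Write msg at offset 0 and read it straight back, with no lseek().
 * Returns the number of bytes read, or -1 with errno set. */
ssize_t offset_roundtrip(int fd, const char *msg, char *out, size_t cap)
{
    /* Explicit offset 0, as in dfs_write(dfs, obj, &sgl, 0, NULL). */
    ssize_t n = pwrite(fd, msg, strlen(msg), 0);
    if (n < 0)
        return -1;

    /* Explicit offset 0 again, so the earlier write does not matter. */
    return pread(fd, out, cap, 0);
}
```

Because the offset travels with each request, no seek step is needed between the write and the read.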

Close

ret = dfs_release(file_obj);

CHECK_ERROR(ret, "dfs_release");

The function close() is replaced by dfs_release().

Finish

ret = dfs_umount(dfs);

CHECK_ERROR(ret, "dfs_umount");

ret = daos_cont_close(coh, NULL);

CHECK_ERROR_DAOS(ret, "daos_cont_close");

ret = daos_pool_disconnect(poh, NULL);

CHECK_ERROR_DAOS(ret, "daos_pool_disconnect");

ret = daos_fini();

CHECK_ERROR_DAOS(ret, "daos_fini");

Finally, we execute the cleanup code to close the open handles, in the reverse order of the init phase, and to call daos_fini(). This code segment has to be executed only once at the end of the program.
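The error-checking macros in the example return from main() immediately, so a failure partway through skips the finish phase. One common C pattern for avoiding that is goto-based cleanup that unwinds in reverse order. The sketch below shows only the control flow: step() and undo() are hypothetical stand-ins for the init and finish calls, not DAOS functions, and the recorded letters exist purely so the order can be inspected.

```c
/* Hypothetical stand-ins: step(id) fails when id == fail_at, and
 * undo(tag) records which teardown steps ran and in what order. */
static int  fail_at;
static char order[8];
static int  n_order;

static int  step(int id)   { return id == fail_at ? -1 : 0; }
static void undo(char tag) { order[n_order++] = tag; order[n_order] = '\0'; }

/* Runs four init steps, then tears down only what was set up. */
int run_with_cleanup(int failing_step)
{
    int rc;

    fail_at = failing_step;
    n_order = 0;
    order[0] = '\0';

    rc = step(0);               /* stands in for daos_init()         */
    if (rc) goto out;
    rc = step(1);               /* stands in for daos_pool_connect() */
    if (rc) goto out_fini;
    rc = step(2);               /* stands in for daos_cont_open()    */
    if (rc) goto out_pool;
    rc = step(3);               /* stands in for dfs_mount()         */
    if (rc) goto out_cont;

    /* File operations would go here. */

    undo('m');                  /* dfs_umount()          */
out_cont:
    undo('c');                  /* daos_cont_close()     */
out_pool:
    undo('p');                  /* daos_pool_disconnect() */
out_fini:
    undo('f');                  /* daos_fini()           */
out:
    return rc;
}

/* Returns the recorded teardown order of the last run. */
const char *teardown_order(void)
{
    return order;
}
```

For instance, if the third step fails, only the pool and library teardown steps run, since the container was never opened and the file system was never mounted.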

Summary

In this article, we showed how to use the three code paths available to applications (dfuse, dfuse+libioil, and libdfs) to access data in a POSIX namespace stored in a DAOS container. In the case of libdfs (which corresponds to the direct path), the necessary source code modifications were shown alongside their traditional POSIX equivalents.