Introduction

Traditional applications organize their data between two tiers: memory and storage. Emerging persistent memory technologies introduce a third tier. This tier can be accessed like volatile memory, using processor load and store instructions, but it retains its contents across power loss like storage. Intel® Optane™ DC persistent memory modules are high-capacity non-volatile dual in-line memory modules (NVDIMMs) that fit in this tier.

If you're a software developer who wants to start writing persistent memory (PMEM) aware software, or to make an existing application PMEM aware, but you don't have these persistent memory modules, you can use emulation for development.

This tutorial provides a method for setting up PMEM emulation using regular dynamic random access memory (DRAM) on a physical or virtual machine (VM) with a Linux* kernel version 4.3 or higher. It was developed and tested on a system with an Intel® Xeon® processor E5-2699 v4 (2.2 GHz) on the Intel® Server System R2000WT product family platform, running CentOS* 7.2 with Linux* kernel 4.5.3.
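If you're not sure which kernel you're running, a small check can confirm the 4.3 minimum before you go further. The sketch below is written for POSIX sh; kernel_at_least is a hypothetical helper name, and it compares only the major and minor numbers reported by uname -r, ignoring any distribution suffix.

```shell
# kernel_at_least MAJOR MINOR [RELEASE]
# Hypothetical helper: succeeds if RELEASE (default: the running kernel,
# from uname -r) is at least MAJOR.MINOR. Suffixes such as
# "-327.el7.x86_64" or "-rc1" are ignored.
kernel_at_least() {
  local want_major="$1" want_minor="$2" release="${3:-$(uname -r)}"
  local major minor rest
  major="${release%%.*}"          # text before the first dot
  major="${major%%[!0-9]*}"       # keep only the leading digits
  rest="${release#*.}"            # text after the first dot
  minor="${rest%%[!0-9]*}"        # leading digits of the minor number
  major="${major:-0}"
  minor="${minor:-0}"
  [ "$major" -gt "$want_major" ] ||
    { [ "$major" -eq "$want_major" ] && [ "$minor" -ge "$want_minor" ]; }
}
```

For example, `kernel_at_least 4 3 || echo "kernel $(uname -r) is older than 4.3"` prints a warning on a stock CentOS 7 kernel, which is based on 3.10.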

For information about PMEM programming, visit the Intel® Developer Zone Persistent Memory site. There you'll find articles, sample code, links to industry presentations on the topic of PMEM programming, and information about the open-source Persistent Memory Development Kit (PMDK). This kit includes developer tools and a set of libraries based on the Storage Networking Industry Association (SNIA) NVM Programming Model.

Why Use Emulation?

You don't need to emulate persistent memory during the functional development stage of your project; PMDK, for example, can also work with memory-mapped files on ordinary storage. Beyond that stage, however, you will need emulation to avoid the slow performance of msync(2), which must flush data to a traditional storage device. With PMEM emulation, your code uses processor cache flushing instructions, just as it will when Intel Optane DC persistent memory modules are present.

Emulation in Virtualized Environments

If you will be using PMEM emulation in a VM, follow the instructions in this article inside the VM guest. No work is required on the host system.

Emulating Persistent Memory Using DRAM

Emulation of persistent memory is based on DRAM that the operating system (OS) sees as a PMEM region. Because the emulation is DRAM-based, it is likely to be faster than real persistent memory, and all data is lost when the machine powers down. Here's an overview of the configuration steps we'll follow. If you're using a Linux distribution whose kernel already supports PMEM, you can skip the kernel configuration steps:

- Reserve PMEM in the kernel configuration

- Enable BIOS to treat memory marked as e820-type 12 as PMEM

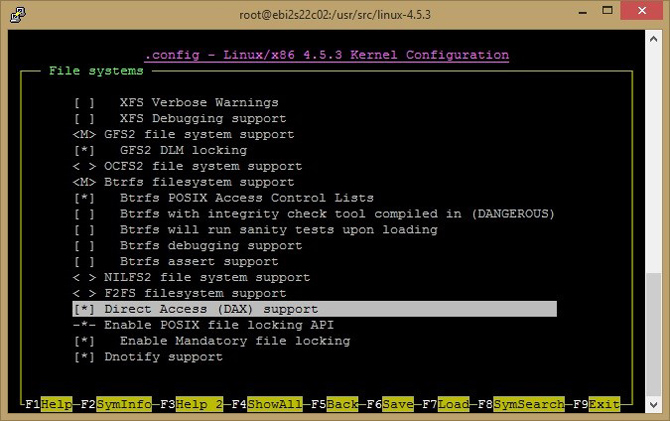

- Enable Direct Access (DAX)

- Identify usable regions in DRAM

- Specify memmap kernel parameters using GRUB file

- On reboot:

- A PMEM region is created

- The kernel offers this space to the PMEM driver

- Linux sees this DRAM region as PMEM and creates pmem devices (/dev/pmem0, /dev/pmem1,…)

- Create file system – ext4 and XFS have been modified to support PMEM

- Mount file system on /dev/pmem

using the direct access (DAX) option

Linux* Kernel Configuration

Support for persistent memory devices and emulation has been present in the Linux kernel since version 4.0; however, a kernel newer than 4.2 is recommended for easier configuration. In the kernel, a PMEM-aware environment is created using DAX extensions to the file system. Some distributions, such as Fedora* 24 and later, have DAX/PMEM support built in. To learn whether your kernel supports DAX and PMEM, use this command:

# grep -E '(DAX|PMEM)' /boot/config-$(uname -r)

The command output should be similar to this:

CONFIG_X86_PMEM_LEGACY_DEVICE=y

CONFIG_X86_PMEM_LEGACY=y

CONFIG_BLK_DEV_RAM_DAX=y

CONFIG_BLK_DEV_PMEM=m

CONFIG_FS_DAX=y

CONFIG_FS_DAX_PMD=y

CONFIG_ARCH_HAS_PMEM_API=y

If your kernel supports DAX and PMEM, you can skip to the "GRUB Configuration" section of this article to configure memory mapping to DRAM. If not, follow the steps below to modify, build, and install your kernel with DAX and PMEM support.
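The grep command above lists every matching option; to get a plain pass/fail answer you could wrap the check in a helper such as the hypothetical check_pmem_kconfig below. Which options count as required is an assumption of this sketch: it checks only the PMEM block driver (CONFIG_BLK_DEV_PMEM), file system DAX (CONFIG_FS_DAX), and support for e820 type 12 memory (CONFIG_X86_PMEM_LEGACY), accepting either built-in (=y) or module (=m).

```shell
# check_pmem_kconfig [CONFIG_FILE]
# Hypothetical helper: reports whether the options assumed necessary for
# DRAM-backed PMEM emulation are set to y or m. Defaults to the config
# file of the running kernel. Returns 0 only if all of them are set.
check_pmem_kconfig() {
  local config="${1:-/boot/config-$(uname -r)}" opt status=0
  [ -r "$config" ] || { echo "cannot read $config" >&2; return 2; }
  for opt in CONFIG_BLK_DEV_PMEM CONFIG_FS_DAX CONFIG_X86_PMEM_LEGACY; do
    # Disabled options appear as "# CONFIG_FOO is not set", so anchor at ^
    if grep -Eq "^${opt}=(y|m)\$" "$config"; then
      echo "ok: $opt"
    else
      echo "missing: $opt"
      status=1
    fi
  done
  return "$status"
}
```

Running `check_pmem_kconfig` with no argument checks the running kernel; any "missing:" line means you should follow the kernel configuration steps below.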

Enable DAX and PMEM in the Kernel

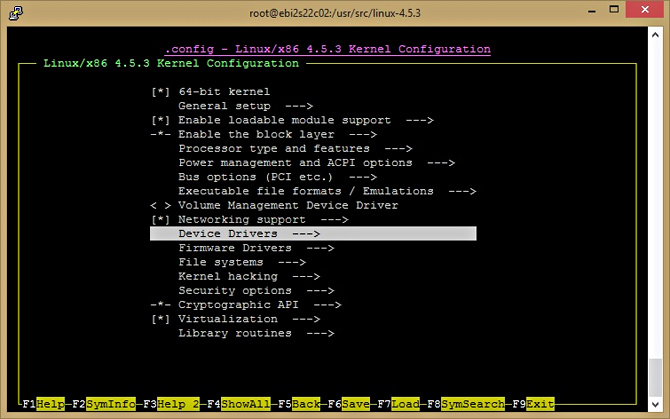

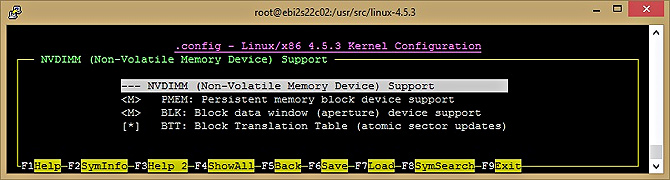

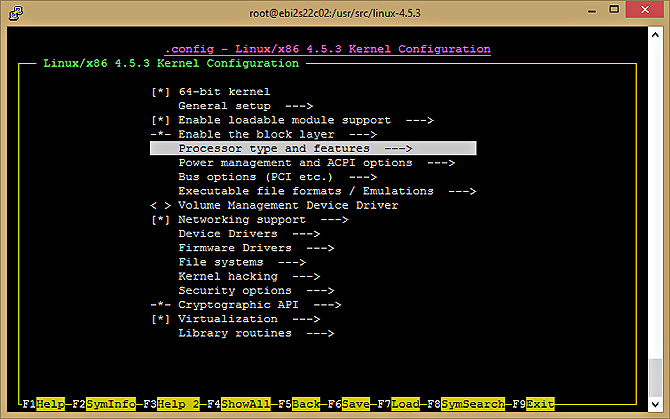

To enable the required drivers, run make nconfig in the kernel source directory and select the options listed below. Figures 1 to 5 show the correct settings for Intel Optane DC persistent memory module support in the Kernel Configuration menu.

$ make nconfig

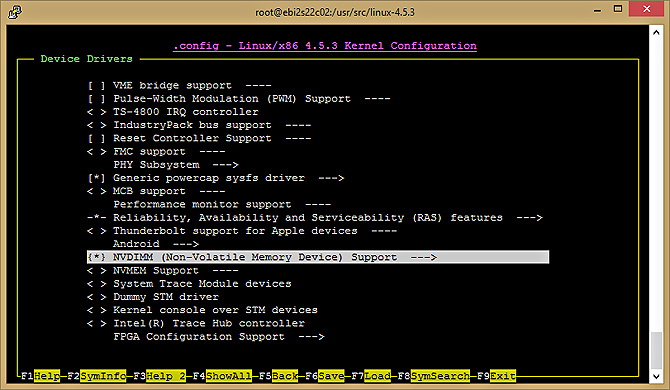

-> Device Drivers -> NVDIMM Support ->

<M>PMEM; <M>BLK; <*>BTT

Figure 1: Set up device drivers.

Figure 2: Set up the NVDIMM device.

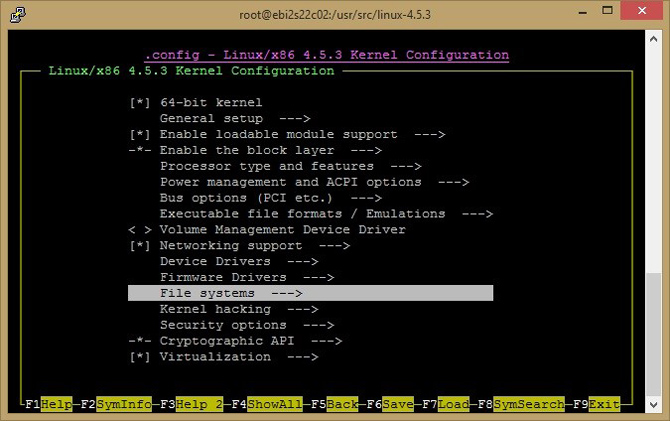

Figure 3: Set up the file system for Direct Access support.

Figure 4: Set up for Direct Access (DAX) support.

Figure 5: NVDIMM Support property.

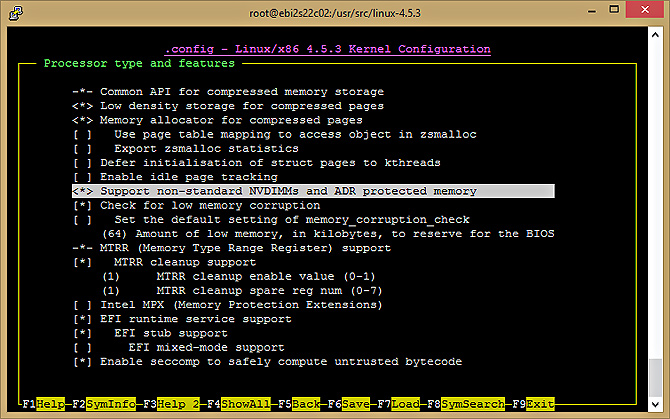

Processor Type and Features Settings

You also need to enable treatment of memory marked using the non-standard e820 type 12 as protected memory. The kernel will offer these regions to the PMEM driver for use as persistent storage.

$ make nconfig

-> Processor type and features

<*>Support non-standard NVDIMMs and ADR protected memory

Figures 6 and 7 show the required change to "Processor type and features" in the Kernel Configuration menu.

Figure 6: Set up the processor to support NVDIMMs.

Figure 7: Enable non-standard NVDIMMs and ADR protected memory.
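If you prefer not to step through the menus, the kernel source tree includes a scripts/config helper that edits .config from the command line. The sketch below wraps it in a hypothetical function, enable_pmem_kconfig. The Kconfig symbol names it uses (LIBNVDIMM, BLK_DEV_PMEM, ND_BLK, BTT, FS_DAX, X86_PMEM_LEGACY) are this sketch's assumption about what the menu entries above correspond to in 4.x kernels; they may differ in other kernel versions.

```shell
# enable_pmem_kconfig KERNEL_SOURCE_DIR
# Hypothetical, non-interactive alternative to the make nconfig steps:
# edits KERNEL_SOURCE_DIR/.config using the kernel's scripts/config.
enable_pmem_kconfig() {
  local src="$1"
  if [ -z "$src" ] || [ ! -x "$src/scripts/config" ]; then
    echo "usage: enable_pmem_kconfig <kernel-source-dir>" >&2
    return 1
  fi
  # <M>PMEM; <M>BLK; <*>BTT under NVDIMM Support, plus file system DAX
  # and "Support non-standard NVDIMMs and ADR protected memory"
  "$src/scripts/config" --file "$src/.config" \
    --enable LIBNVDIMM \
    --module BLK_DEV_PMEM \
    --module ND_BLK \
    --enable BTT \
    --enable FS_DAX \
    --enable X86_PMEM_LEGACY
}
```

After running it, `make olddefconfig` in the same directory resolves any dependent options before you build.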

Build and Install the Kernel

Now you are ready to build and install your kernel.

# make -j$(nproc)   # build in parallel, one job per available CPU

# make modules_install install

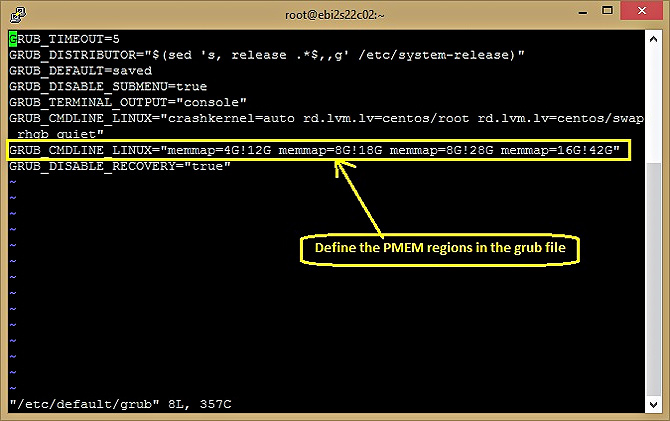

GRUB Configuration

Next, we'll modify the boot configuration file to reserve a memory region for use by the OS as persistent memory. The configuration change is done using GRUB, and the steps vary between Linux distributions.

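First, you must identify available physical addresses to reserve. The Persistent Memory Wiki article how_to_choose_the_correct_memmap_kernel_parameter_for_pmem_on_your_system explains how to do this. Once you've identified an available address range, proceed with the instructions below. The memmap kernel parameter takes this form, where nn is the size of the region to reserve and ss is the offset at which it starts, each with an optional K, M, or G suffix:

memmap=nn[KMG]!ss[KMG]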

For example, memmap=4G!12G reserves 4 GB of memory between the 12th and 16th GB. The example below shows how to edit the GRUB file and build the configuration on a CentOS 7.0 BIOS or EFI-based machine.
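To double-check which physical range a memmap value covers, and to compare it with the reserved ranges the kernel reports at boot, you can do the arithmetic in the shell. This is a minimal POSIX sh sketch: to_bytes and memmap_range are hypothetical helper names, only the K, M, and G suffixes from the syntax above are handled, and the output imitates the [mem start-end] notation of the kernel's e820 messages.

```shell
# to_bytes SIZE: convert "4G", "512M", "64K", or a plain byte count to bytes.
to_bytes() {
  local v="$1" n unit
  n="${v%[KkMmGg]}"          # strip one trailing size suffix, if any
  unit="${v#"$n"}"           # the suffix that was stripped (may be empty)
  case "$n" in ''|*[!0-9]*) return 1 ;; esac
  case "$unit" in
    K|k) echo $((n * 1024)) ;;
    M|m) echo $((n * 1024 * 1024)) ;;
    G|g) echo $((n * 1024 * 1024 * 1024)) ;;
    *)   echo "$n" ;;
  esac
}

# memmap_range NN!SS: print the inclusive physical range that
# memmap=NN!SS reserves, from SS to SS+NN-1.
memmap_range() {
  local arg="$1" sep='!' size start
  case "$arg" in
    *"$sep"*) ;;
    *) echo "memmap_range: expected <size>${sep}<start>, got: $arg" >&2; return 1 ;;
  esac
  size=$(to_bytes "${arg%%"$sep"*}") || return 1
  start=$(to_bytes "${arg#*"$sep"}") || return 1
  [ "$size" -gt 0 ] || return 1
  printf '[mem 0x%016x-0x%016x]\n' "$start" $((start + size - 1))
}
```

For the example above, `memmap_range '4G!12G'` prints `[mem 0x0000000300000000-0x00000003ffffffff]`, the 12th to 16th GB. Quote the argument with single quotes so that an interactive bash does not treat `!` as a history expansion.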

# vi /etc/default/grub

GRUB_CMDLINE_LINUX="memmap=nn[KMG]!ss[KMG]"

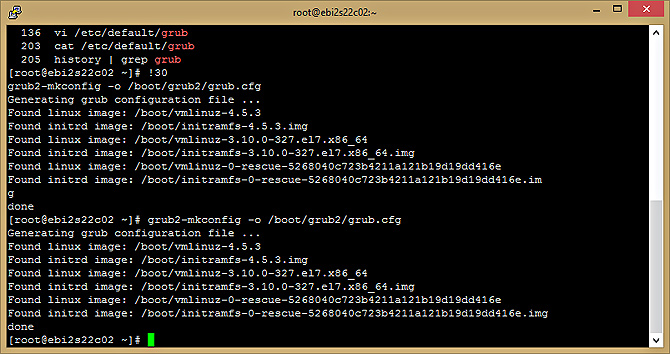

Build the configuration on a BIOS machine:

# grub2-mkconfig -o /boot/grub2/grub.cfg

Build the configuration on an EFI machine:

# grub2-mkconfig -o /boot/efi/EFI/centos/grub.cfg
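The vi edit above can also be scripted. The hypothetical add_memmap_to_grub function below appends one memmap parameter to the existing GRUB_CMDLINE_LINUX line instead of replacing that line, and does nothing if the exact parameter is already present. It is a sketch that assumes GNU sed (for -i) and a double-quoted GRUB_CMDLINE_LINUX value.

```shell
# add_memmap_to_grub FILE VALUE
# Hypothetical helper: appends memmap=VALUE to GRUB_CMDLINE_LINUX in FILE
# (normally /etc/default/grub), keeping the parameters already there.
add_memmap_to_grub() {
  local file="$1" value="$2"
  if [ -z "$file" ] || [ -z "$value" ]; then
    echo 'usage: add_memmap_to_grub FILE SIZE!START' >&2
    return 1
  fi
  grep -q '^GRUB_CMDLINE_LINUX=' "$file" ||
    { echo "no GRUB_CMDLINE_LINUX line in $file" >&2; return 1; }
  # Nothing to do if this exact parameter is already present
  grep -qF "memmap=$value" "$file" && return 0
  # Insert the parameter just before the closing double quote
  sed -i "s|^\(GRUB_CMDLINE_LINUX=\"[^\"]*\)\"|\1 memmap=$value\"|" "$file"
}
```

For example, run `add_memmap_to_grub /etc/default/grub '4G!12G'` as root, then regenerate grub.cfg with the grub2-mkconfig command for your BIOS or EFI machine. To define several regions, call the function once per region.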

Figure 8 shows the memmap change to the GRUB file. Note that this example specifies the mapping of four memory regions. Figure 9 shows the output from running grub2-mkconfig.

Figure 8: Define PMEM regions in the /etc/default/grub file.

Figure 9: Generate the boot configuration file based on the GRUB template.

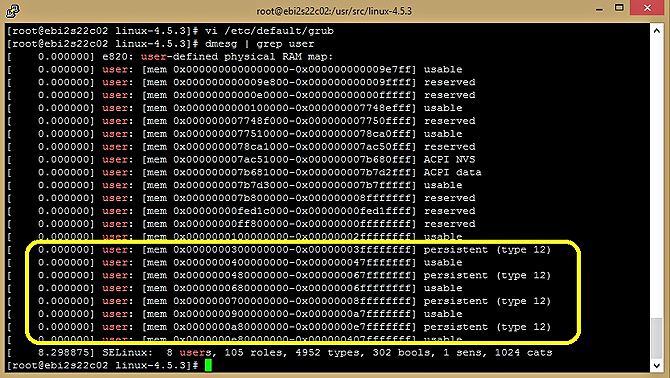

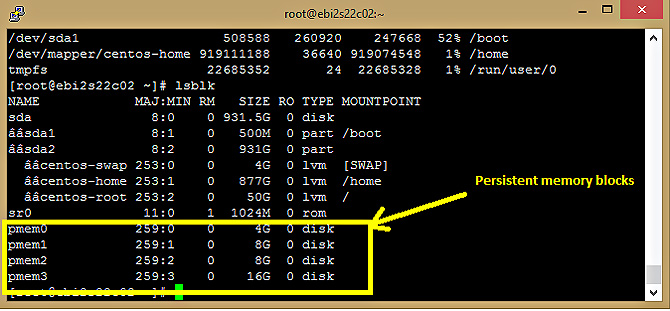

Now reboot the machine. You should then see the emulated devices /dev/pmem0…/dev/pmem3. If the devices you defined don't appear, verify that the memmap setting in the GRUB file is correct (Figure 8), then analyze the dmesg(1) output (Figure 10). The reserved ranges should be identified as "persistent (type 12)".

Figure 10: Persistent memory regions are highlighted as (type 12).
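Both checks can be scripted. In the sketch below, pmem_ranges (a hypothetical name) filters kernel log text for lines marked "persistent (type 12)" and prints their address ranges, assuming each such line contains a [mem start-end] range as in Figure 10. missing_pmem_devices reports which of the expected /dev/pmemN devices do not exist; DEV_DIR is a hypothetical override used only to test it against a scratch directory.

```shell
# pmem_ranges: read kernel log text on stdin, print the address ranges
# of regions the kernel marked as persistent (e820 type 12).
pmem_ranges() {
  grep -F 'persistent (type 12)' | grep -o '\[mem 0x[0-9a-f]*-0x[0-9a-f]*]'
}

# missing_pmem_devices COUNT: print each of pmem0..pmem(COUNT-1) that is
# absent from DEV_DIR (default /dev); fail if any are missing.
missing_pmem_devices() {
  local count="$1" dir="${DEV_DIR:-/dev}" i=0 missing=0
  while [ "$i" -lt "$count" ]; do
    if [ ! -e "$dir/pmem$i" ]; then
      echo "missing: $dir/pmem$i"
      missing=1
    fi
    i=$((i + 1))
  done
  return "$missing"
}
```

With the four regions from Figure 8, you might run `dmesg | pmem_ranges` followed by `missing_pmem_devices 4`.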

Build a DAX-enabled File System

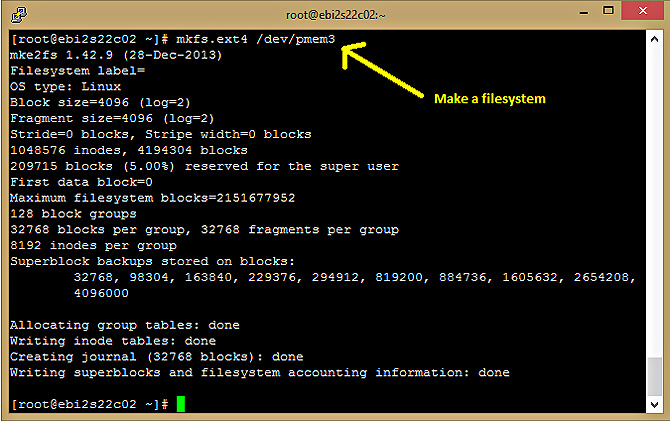

The final step in our process is to build DAX-enabled file systems for our persistent devices. To do this, we create an ext4 or XFS file system on each device and then mount it using the dax option. In the example below, we create an ext4 file system on /dev/pmem3.

# mkdir /mnt/pmemdir

# mkfs.ext4 /dev/pmem3

# mount -o dax /dev/pmem3 /mnt/pmemdir

Now files can be created on the freshly mounted file system for use in creating PMDK pools.
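To confirm that a file system is actually mounted with DAX, you can look for the dax option in /proc/mounts. The hypothetical dax_mounted function below is a minimal sketch of that check. It accepts either dax or dax=always, on the assumption that the option may be displayed either way depending on kernel version. MOUNTS_FILE is a hypothetical override used only for testing.

```shell
# dax_mounted MOUNTPOINT
# Hypothetical helper: succeeds if MOUNTPOINT appears in /proc/mounts
# (or MOUNTS_FILE) with a dax mount option.
dax_mounted() {
  local mnt="$1" file="${MOUNTS_FILE:-/proc/mounts}"
  awk -v m="$mnt" '
    $2 == m {
      # Field 4 holds the comma-separated mount options
      n = split($4, opts, ",")
      for (i = 1; i <= n; i++)
        if (opts[i] == "dax" || opts[i] == "dax=always") found = 1
    }
    END { exit(found ? 0 : 1) }
  ' "$file"
}
```

For the example above, `dax_mounted /mnt/pmemdir && echo "DAX enabled on /mnt/pmemdir"` prints the message only when the dax option is in effect.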

Figure 11: Persistent memory blocks.

Figure 12: Making a file system

Conclusion

Now you know how to set up an emulated environment where you can build a PMEM application without actual PMEM hardware, on a physical or virtual machine. We hope the information helps you get started early on the challenging task of converting your application to use persistent memory. Learn more about persistent memory programming at the Intel Developer Zone Persistent Memory site.

References

- How to emulate Persistent Memory, at pmem.io

- how_to_choose_the_correct_memmap_kernel_parameter_for_pmem_on_your_system, at the Persistent Memory Wiki

About the Authors

Thai Le is a software engineer focusing on cloud computing and performance computing analysis at Intel Corporation.

Usha Upadhyayula is a software ecosystem enabling engineer for Intel memory products.