Intel® Software Innovators Apply Intel® AI-based Inference Technology to Render Alternate Realities in Real Time

Three German Intel® Software Innovators are running AI-driven algorithms on powerful Intel® processors to advance synthetic media technology, rendering deepfakes and other reality-bending illusions in real time. Outfitted with this groundbreaking tech, viewers can stroll through a painterly dreamscape, assume the features of a famous politician, or simply enjoy a brief, entertaining break from the real world.

Perhaps the best-known application of synthetic media, or style transfer, is deepfake technology. For those unfamiliar, a deepfake – the word is a mashup of “deep learning” and “fake” – describes digital media in which one person’s likeness is superimposed onto that of another. The deepfake can even be made to speak and display facial expressions. There are countless deepfake techniques and platforms in the tech world; what the TNG team adds is speed, accelerating the path from camera capture to display so the result appears almost instantly.

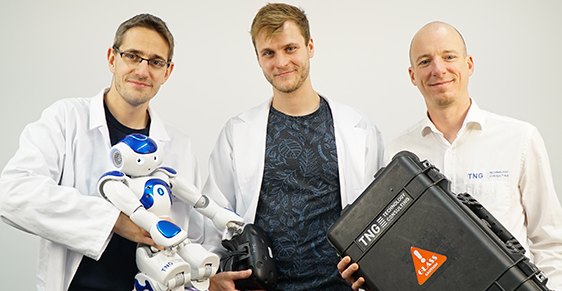

Thomas Endres, Martin Foertsch and Jonas Mayer are active contributors to the Intel® Software Innovator Program, Intel’s community for forward-thinking developers. Participants share thought leadership and technology expertise to inspire their developer peers by speaking and giving demos of their work at industry events. Now expanding to support graphics developers and creators, the Intel Software Innovator Program is adding an Xe Community track with an emphasis on applications and experiences demanding greater speed and performance even as workloads increase.

Evolution of Innovation Through Technology

Synthetic media variants are just the latest apps of interest for Endres and Foertsch, of Munich-based TNG Technology Consultants. The two became Intel Software Innovators in 2014 and are also Intel® Black Belts. “Everything started with the gesture-controlled quadcopters based on Intel® RealSense™ cameras,” recalled Endres about their program relationship. “The program helped us kickstart our efforts. We were first invited to the Intel® Developer Forum, where we were able to showcase the gesture-controlled drones. With time we got more invites to different conferences, so were encouraged to continue our work, which continues to this day.” View their projects on Intel® DevMesh.

“With our innovation hacking, we’ve had some great moments with Intel,” added Foertsch. “From robots we moved on to augmented reality and virtual reality, when we created Project Avatar, a telepresence robotic system. With time we got to know AI and now it’s our main focus of work, starting with computer vision and moving on to natural language. AI is where all the fun comes in.”

The Many Faces of Jonas

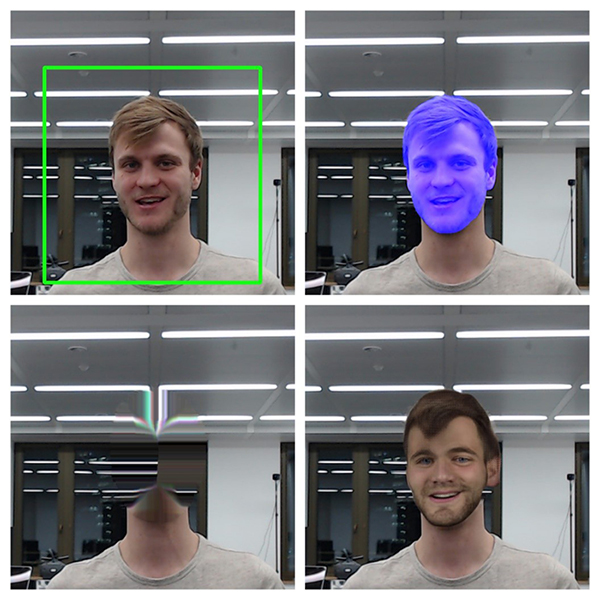

Jonas Mayer, TNG’s main developer on the innovation hacking team, stood in as the human subject for the team’s live-action video demo of its best-known synthetic media application, the deepfake. As Jonas spoke, the technology swapped out his face for those of a Bavarian state minister, German Chancellor Angela Merkel, a TV news personality and so on. As he continued to speak, the mouths of the rotating deepfakes moved in unison.

To render the deepfake, the first step is to capture a raw camera image containing the subject’s face, which the team locates using simple face detection that runs entirely on the Intel® Xeon® CPU. This is enabled by Intel’s optimizations for face detection in OpenCV*, a library of programming functions for real-time computer vision originally developed by Intel. Because detection runs on the CPU, the rest of the system hardware is free for other tasks.

Next, they perform head segmentation, mapping which pixels of the image belong to the subject’s head, and then apply an Intel-optimized OpenCV function to perform ‘inpainting’, a process by which the subject’s head is erased and replaced with a plausible background infill.

Finally, they feed the cut-out head into an auto-encoder that replaces its facial characteristics with those of a different (and usually famous) person, “which is not creepy at all,” said Mayer.
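One common auto-encoder design for face swapping, which the article does not confirm TNG uses, pairs a single shared encoder with one decoder per identity. Encoding person A's face and decoding it with person B's decoder yields B's features with A's pose and expression. The sketch below shows only that data flow with random, untrained weights; the layer sizes and all names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
FACE_PIXELS = 64 * 64   # flattened greyscale crop (assumed size)
LATENT_DIM = 128        # size of the shared face code (assumed)


def dense(n_in, n_out):
    """Random, untrained weights for one fully connected layer."""
    return rng.normal(0.0, 0.01, size=(n_in, n_out))


encoder = dense(FACE_PIXELS, LATENT_DIM)    # shared by both identities
decoder_a = dense(LATENT_DIM, FACE_PIXELS)  # would be trained on person A only
decoder_b = dense(LATENT_DIM, FACE_PIXELS)  # would be trained on person B only


def encode(face):
    return np.tanh(face @ encoder)


def decode(code, decoder):
    return 1.0 / (1.0 + np.exp(-(code @ decoder)))  # sigmoid keeps pixels in [0, 1]


def swap_a_to_b(face_a):
    """Encode A's face, then decode it with B's decoder."""
    return decode(encode(face_a), decoder_b)


face_a = rng.random(FACE_PIXELS)  # stand-in for a normalized face crop
swapped = swap_a_to_b(face_a)
```

A real system would use convolutional layers and train each decoder to reconstruct its own person's face from the shared code.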

Video: How the TNG team creates real-time deepfakes

The World Becomes a Painting via Style Transfer

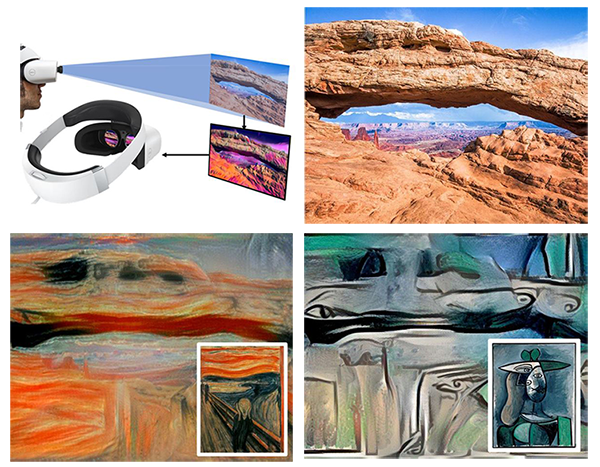

Another example of TNG’s real-time style transfer innovation employs virtual reality glasses that can instantly transform your environment into nearly any kind of 3D work of art. TNG records the scene using a pair of cameras integrated into the glasses. Camera images are forwarded to a powerful workstation, processed using OpenCV, and then stylized to emulate whatever artistic style the system has learned via a convolutional neural net built with TensorFlow*. The system streams the stylized content back to the Windows* Mixed Reality VR goggles.
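The article does not say how the network learns a style. One widely used approach compares Gram matrices of convolutional feature maps, which capture how strongly feature channels fire together regardless of where in the image they fire. The sketch below assumes that approach and uses random arrays in place of real CNN activations; the function names are illustrative.

```python
import numpy as np


def gram_matrix(features):
    """Channel-by-channel correlations of a (channels, height, width) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    return flat @ flat.T / (c * h * w)


def style_loss(generated_features, style_features):
    """Mean squared difference between the two Gram matrices."""
    diff = gram_matrix(generated_features) - gram_matrix(style_features)
    return float(np.mean(diff ** 2))


rng = np.random.default_rng(0)
painting = rng.random((8, 16, 16))  # stand-in for CNN activations of the artwork
camera = rng.random((8, 16, 16))    # stand-in for activations of a stylized frame
loss = style_loss(camera, painting)
```

In real-time systems this kind of loss is typically used only during training, so that at run time each camera frame needs a single forward pass through the stylizing network.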

Dazzled viewers are thus treated to the experience of seeing their own world through the eyes of Edvard Munch or Pablo Picasso. Or they can inhabit their own version of the ’80s music video “Take On Me,” by a-ha.

Putting Style Transfer to Responsible Use

Mindful that deepfake applications of synthetic media technology can be controversial, especially as they grow more sophisticated and harder to detect, the Innovators will use their prototype to show what’s possible but will not market or release their technology – it’s purely a topic for research. On the other hand, parts of their deepfake prototype can have positive, useful applications, such as their face detection/inpainting application. That technology could be repurposed to create dynamic privacy masks to anonymize faces for data protection. It’s all a matter of context and purpose.
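As a simple illustration of the privacy-mask idea, the sketch below pixelates rectangular face regions in a frame. It assumes the boxes come from a face detector in `(x, y, w, h)` form; the `pixelate_boxes` helper and the block size are hypothetical, and a production mask would more likely follow the segmented face outline.

```python
import numpy as np


def pixelate_boxes(frame, boxes, block=8):
    """Return a copy of frame with each (x, y, w, h) box replaced by coarse blocks."""
    out = frame.copy()
    for x, y, w, h in boxes:
        region = out[y:y + h, x:x + w]  # a view into out, so writes land in out
        for by in range(0, region.shape[0], block):
            for bx in range(0, region.shape[1], block):
                tile = region[by:by + block, bx:bx + block]
                # Replace every pixel in the tile with the tile's average color.
                tile[...] = tile.mean(axis=(0, 1), keepdims=True).astype(frame.dtype)
    return out


rng = np.random.default_rng(0)
frame = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)
face_boxes = [(16, 16, 32, 32)]  # hypothetical detector output
anonymized = pixelate_boxes(frame, face_boxes)
```

Because the function works on a copy, the original frame stays available for any processing that still needs the unmasked image.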

“We want people to be inspired by our work,” said Foertsch. To assist in that inspiration, take the TNG Hacking Tour of their various projects here.

Video: A walkthrough of the many types of innovation hacking the TNG team has created

Elevate Your Graphics Skills

Ignite inspiration, share achievements, network with peers and get access to experts by becoming an Intel® Software Innovator. Game devs, media developers, and other creators can apply to be Intel® Software Innovators under the new Xe Community track – opening a world of opportunity to craft amazing applications, games and experiences running on Xe architecture.

To start connecting and sharing your projects, join the global developer community on Intel® DevMesh.