Persistent memory technologies such as Intel® Optane™ DC persistent memory come with several challenges. Remote access is among the most difficult aspects of persistent memory applications because no ready-to-use technology supports remote persistent memory (RPMEM). Remote direct memory access (RDMA), the most commonly used technology for remote memory access, does not address data durability.

This paper proposes solutions for accessing RPMEM based on traditional RDMA. These solutions have been implemented in the Persistent Memory Development Kit (PMDK) librpmem library. This part describes how to set up and configure RDMA-capable NICs (RNICs) and how to use the RDMA-capable network in a replication process. Before reading it, we suggest reading Part 2, "Remote Persistent Memory 101." The other parts in this series include:

- Part 1, "Understanding Remote Persistent Memory," describes the theoretical realm of remote persistent memory.

- Part 4, "Persistent Memory Development Kit-Based PMEM Replication," describes how to create and configure an application that can replicate persistent memory over an RDMA-capable network.

Prepare to Shine – RNIC Setup

RNICs are recommended for use with rpmem because RDMA allows data to be written directly to persistent memory, which yields significant performance gains.

This section gives entry-level knowledge regarding the setup and the configuration of RNICs from three manufacturers: Mellanox*, Chelsio*, and Intel. RNIC configuration is a complex topic and describing it in detail is outside the scope of this paper. For details regarding the setup of RNICs from each manufacturer, see the manuals mentioned in the respective sections below.

The setup described here consists of two separate machines, each equipped with its own RNIC and connected to each other. The configuration steps described below have to be performed on both machines: the initiator and the target.

Mellanox*

Mellanox RNICs support three RDMA-compatible protocols: InfiniBand*, RoCE v1, and RoCE v2. Which protocol is best for a specific application depends on the technical constraints of the network. Mellanox provides exhaustive documentation describing the required configuration steps for all of them. For details, see Mellanox OFED for Linux* User Manual Rev 4.5 or later.

To check whether the machine is equipped with a Mellanox RNIC:

$ lspci | grep Mellanox

5e:00.0 Ethernet controller: Mellanox Technologies MT27700 Family [ConnectX-4]

5e:00.1 Ethernet controller: Mellanox Technologies MT27700 Family [ConnectX-4]

To start working with Mellanox RNICs, first choose the RDMA protocol and perform the following steps:

- Install Mellanox software (see “Installing Mellanox Software” later in this section).

- Configure the port types required for the chosen RDMA protocol (see “Configuring Port Types” later in this section).

- Configure the chosen RDMA protocol:

- RoCE v2 (see “RoCE v2” later in this section); or

- InfiniBand (see “InfiniBand*” later in this section); or

- RoCE v1 (see “RoCE v1” later in this section).

Installing Mellanox Software

Download the software package from Mellanox. Choose the relevant package depending on the machine’s operating system.

# extract the downloaded software

$ tar -xzf MLNX_OFED_LINUX-4.4-1.0.0.0-fc27-x86_64.tgz

$ cd MLNX_OFED_LINUX-4.4-1.0.0.0-fc27-x86_64

# install

$ sudo ./mlnxofedinstall

Reboot the machine.

Configuring Port Types

Mellanox RNIC ports can be individually configured to work as InfiniBand or Ethernet ports. Each of the RDMA protocols requires a specific port type so, prior to configuration of the chosen RDMA protocol, it is necessary to configure the appropriate port type.

Before querying and changing the port type, start the mst (Mellanox Software Tools) service:

$ sudo mst start

Querying port types:

# query RNIC port types

$ sudo mlxconfig -d /dev/mst/mt*_pciconf0 q | grep LINK_TYPE

LINK_TYPE_P1 ETH(2)

LINK_TYPE_P2 ETH(2)

The LINK_TYPE has to match the chosen RDMA protocol. There are two possible LINK_TYPE values:

- ETH(2) - Ethernet (required by RoCE v2)

- IB(1) - InfiniBand (required by InfiniBand and RoCE v1)
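The mapping in the list above can be captured in a small helper so that scripts do not hard-code the numeric values. This is only a sketch: the function name and the protocol spellings it accepts (rocev2, rocev1, infiniband) are hypothetical, while the numeric values come from the mlxconfig output shown in this section.

```shell
# Map an RDMA protocol name to the LINK_TYPE value listed above.
# The accepted spellings are hypothetical; 1 = IB and 2 = ETH are the
# values reported by mlxconfig in this section.
link_type_for_protocol() {
    case "$1" in
        rocev2)            echo 2 ;;  # ETH(2)
        infiniband|rocev1) echo 1 ;;  # IB(1)
        *) echo "unknown protocol: $1" >&2; return 1 ;;
    esac
}
```

The printed value could then be passed to the set command shown next, for example as LINK_TYPE_P1=$(link_type_for_protocol rocev2).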

Setting the port type (for example, to Ethernet):

$ sudo mlxconfig -d /dev/mst/mt*_pciconf0 set LINK_TYPE_P1=2 LINK_TYPE_P2=2

# (...)

Applying... Done!

-I- Please reboot machine to load new configurations.

After changing the port type, a machine reboot is required.

If the machine is equipped with more than one Mellanox RNIC, the system will also have more than one /dev/mst/mt*_pciconf0 device. In this case, the wildcard in the path has to be replaced with the appropriate vendor_part_id, which can be found in the ibv_devinfo(1) command output.
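As a rough illustration of that substitution, the helper below reads ibv_devinfo(1) output on standard input and prints a candidate device path for each distinct vendor_part_id. The function name is hypothetical, and the sketch assumes the device node follows the /dev/mst/mt<vendor_part_id>_pciconf0 pattern used in this section; the result should be checked against the actual contents of /dev/mst.

```shell
# Print one candidate mst device path per distinct vendor_part_id found
# in ibv_devinfo(1) output read from standard input.
# Assumption: the node is named /dev/mst/mt<vendor_part_id>_pciconf0.
mst_paths_from_devinfo() {
    awk '$1 == "vendor_part_id:" { print "/dev/mst/mt" $2 "_pciconf0" }' | sort -u
}
```

A possible invocation is: ibv_devinfo | mst_paths_from_devinfo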

RoCE v2

RoCE v2 requires Priority Flow Control (PFC) to be configured and outbound (egress) packets to be tagged in order to function reliably. The example below creates a separate virtual LAN (VLAN) interface for which all outbound packets are tagged with the chosen PFC priority.

How to choose the appropriate PFC priority is outside the scope of this paper. For details see Mellanox OFED for Linux User Manual Rev 4.5 or later.

# associate InfiniBand Ports to network interfaces

# “Up” next to the network interface means it is connected

$ ibdev2netdev

mlx5_0 port 1 ==> enp94s0f0 (Down)

mlx5_1 port 1 ==> enp94s0f1 (Up)

# enable Priority Flow Control (PFC) with the desired priority

# note that enp94s0f1 is the network interface obtained in the previous step

# command below chooses Priority=4

$ mlnx_qos -i enp94s0f1 --pfc 0,0,0,0,1,0,0,0

# create a VLAN interface (e.g. VLAN_ID=100)

$ sudo modprobe 8021q

$ sudo vconfig add enp94s0f1 100

# set egress mapping for created VLAN to configured PFC priority

$ for i in {0..7}; do sudo vconfig set_egress_map enp94s0f1.100 $i 4 ; done

# assign an IP address to the VLAN

$ sudo ifconfig enp94s0f1.100 up

$ sudo ifconfig enp94s0f1.100 192.168.0.1
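The --pfc argument used above is a list of eight flags, one per priority from 0 to 7, with a 1 at the position of the chosen priority. The following sketch builds that list for a single priority; the function name is hypothetical, and it assumes only one priority is enabled at a time.

```shell
# Build the eight-element --pfc list for mlnx_qos with only the given
# priority (0-7) enabled.
pfc_list() {
    prio=$1
    case "$prio" in
        [0-7]) ;;
        *) echo "priority must be 0-7" >&2; return 1 ;;
    esac
    list=""
    i=0
    while [ "$i" -le 7 ]; do
        if [ "$i" -eq "$prio" ]; then bit=1; else bit=0; fi
        list="${list}${list:+,}${bit}"   # add a comma before all but the first flag
        i=$((i + 1))
    done
    echo "$list"
}
```

For priority 4, the function prints the same list that is passed to mlnx_qos in the example above.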

For RoCE v2, several link speeds are available. It is important to choose the right one to obtain the desired performance. The link speed is negotiated between the machines on both ends of the link, so the link speed has to be set to the same value on both machines.

# query supported speeds

$ ethtool enp94s0f1

# (...)

Supported link modes: 1000baseKX/Full

10000baseKR/Full

# (...)

100000baseCR4/Full

100000baseLR4_ER4/Full

# (...)

# set speed

$ sudo ethtool -s enp94s0f1 speed 100000 autoneg off
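Because both ends of the link must use the same speed, it can help to extract the highest supported speed on each machine and compare the results. The helper below is a sketch under the assumption that ethtool prints the supported modes in a block that starts with "Supported link modes:" and ends at the next line containing a colon; the function name is hypothetical.

```shell
# Read ethtool(8) output on standard input and print the highest speed
# (in Mb/s) listed under "Supported link modes:".
max_supported_speed() {
    awk '
        /Supported link modes:/ { in_modes = 1; sub(/.*Supported link modes:/, "") }
        in_modes && /:/         { in_modes = 0 }   # next "Key: value" line ends the block
        in_modes                { print }
    ' | grep -oE '[0-9]+base' | sed 's/base$//' | sort -n | tail -n 1
}
```

A possible invocation is: ethtool enp94s0f1 | max_supported_speed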

InfiniBand defines several fixed-size MTUs; for example, 1024, 2048, or 4096 bytes. However, when setting the MTU with ifconfig, it is necessary to take the RoCE transport headers into account. The acceptable values are 1500, 2200, and 4200. This value has to be the same on both machines.

# set MTU

$ sudo ifconfig enp94s0f1 mtu 4200
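The relationship between the two sets of MTU values can be written down as a lookup. The pairing used here (1024 to 1500, 2048 to 2200, 4096 to 4200) is an assumption inferred from the order in which the values are listed above, and the function name is hypothetical.

```shell
# Print the interface MTU to pass to ifconfig for a given InfiniBand MTU.
# Assumption: the values pair up in the order they are listed above.
roce_netdev_mtu() {
    case "$1" in
        1024) echo 1500 ;;
        2048) echo 2200 ;;
        4096) echo 4200 ;;
        *) echo "unsupported InfiniBand MTU: $1" >&2; return 1 ;;
    esac
}
```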

Libfabric uses librdmacm for communication management. RDMA_CM has to be configured to use RoCE v2:

$ ibdev2netdev

mlx5_0 port 1 ==> enp94s0f0 (Down)

mlx5_1 port 1 ==> enp94s0f1 (Up)

# set RoCE v2 as the default RoCE mode of RDMA_CM applications

$ sudo cma_roce_mode -d mlx5_1 -m 2


InfiniBand*

The subnet manager (opensm) must be running for each InfiniBand subnet. Since the initiator and the target are in the same subnet, opensm has to run only on one of them.

$ sudo /etc/init.d/opensmd start

Further configuration has to be performed on both nodes:

# associate InfiniBand Ports to network interfaces

# “Up” next to the network interface means it is connected

$ ibdev2netdev

mlx5_0 port 1 ==> ib0 (Down)

mlx5_1 port 1 ==> ib1 (Up)

# assign an IP address to the interface

# note that ib1 is the network interface obtained in the previous step

$ sudo ifconfig ib1 192.168.0.1
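When scripting these steps, the connected port can be picked out of the ibdev2netdev output instead of being read by eye. The sketch below assumes the line format shown above, where the interface name is the fifth field and the state is the last one; the function name is hypothetical.

```shell
# Read ibdev2netdev output on standard input and print
# "<RDMA device> <network interface>" for every port reported as Up.
up_ports() {
    awk '$NF == "(Up)" { print $1, $5 }'
}
```

A possible invocation is: ibdev2netdev | up_ports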

Opensm has to be started after both IB network interfaces in the subnet are powered on.

RoCE v1

RoCE v1 and RoCE v2 run over Converged Ethernet so they impose the same requirements on the Ethernet configuration (PFC and egress mapping) in order to function reliably.

Because it is an Ethernet link layer protocol, RoCE v1 allows communication between any two hosts in the same Ethernet broadcast domain. RoCE v2 is an internet layer protocol so it can be routed between broadcast domains.

PFC setup has to be performed on both nodes:

# enable Priority Flow Control (PFC) with the desired priority

$ echo "options mlx4_en pfctx=0x10 pfcrx=0x10" | sudo tee --append /etc/modprobe.d/mlx4_en.conf

$ sudo service openibd restart

Unloading HCA driver: [ OK ]

Loading HCA driver and Access Layer: [ OK ]

# check PFC

$ cat /sys/module/mlx4_en/parameters/pfcrx

16
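The pfctx and pfcrx values are bitmasks in which bit N enables PFC for priority N, so priority 4 corresponds to 0x10, which sysfs reports as decimal 16. The following sketch computes the mask for a single priority; the function name is hypothetical.

```shell
# Print the PFC bitmask for a single priority (0-7) in the hexadecimal
# form used by the pfctx/pfcrx module options.
pfc_mask() {
    case "$1" in
        [0-7]) printf '0x%02x\n' $((1 << $1)) ;;
        *) echo "priority must be 0-7" >&2; return 1 ;;
    esac
}
```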

Since RoCE v1 runs over InfiniBand, it also requires one opensm instance for each InfiniBand subnet. It can run either on the initiator or on the target:

$ sudo /etc/init.d/opensmd start

After opensm starts, the network interfaces on both machines should be up and ready for further configuration:

# associate InfiniBand Ports to network interfaces (e.g. ib1)

$ ibdev2netdev

mlx5_0 port 1 ==> ib0 (Down)

mlx5_1 port 1 ==> ib1 (Up)

# create a VLAN interface (e.g. VLAN_ID=100)

$ sudo modprobe 8021q

$ sudo vconfig add ib1 100

# set egress mapping to configured PFC priority

$ for i in {0..7}; do sudo vconfig set_egress_map ib1.100 $i 4 ; done

# assign an IP address to the VLAN

$ sudo ifconfig ib1.100 up

$ sudo ifconfig ib1.100 192.168.0.1

How to choose appropriate PFC priority is outside the scope of this paper. For details, see Mellanox OFED for Linux User Manual Rev 4.5 or later.

Libfabric uses librdmacm for communication management. RDMA_CM has to be configured to use RoCE v1:

$ ibdev2netdev

mlx5_0 port 1 ==> ib0 (Down)

mlx5_1 port 1 ==> ib1 (Up)

# set RoCE v1 as the default RoCE mode of RDMA_CM applications

$ sudo cma_roce_mode -d mlx5_1 -m 1


Chelsio*

Chelsio RNICs support RDMA by implementing the iWARP protocol. Chelsio provides documentation describing all required configuration steps. For details, see the User Guide for Chelsio Unified Wire v3.10.0.0 (or later) for Linux.

Installing Chelsio Software

Download the software package from Chelsio. Choose the relevant package depending on the machine’s operating system.

# extract the downloaded software

$ tar -xzf ChelsioUwire-3.10.0.0.tar.gz

$ cd ChelsioUwire-3.10.0.0

# install

$ sudo make install

Configuring

Associate the Chelsio network controller ports to the network interfaces.

# query Chelsio network controller bus and device number (e.g. 03:00)

$ lspci | grep Chelsio

03:00.0 Ethernet controller: Chelsio Communications Inc T520-LL-CR Unified Wire Ethernet Controller

# (...)

# find matching network interfaces (e.g. ens2f4 and ens2f4d1)

$ ls -l /sys/class/net | grep 03:00

lrwxrwxrwx. 1 root root 0 02-11 08:40 ens2f4 -> ../../devices/pci0000:00/0000:00:02.0/0000:03:00.4/net/ens2f4

lrwxrwxrwx. 1 root root 0 02-11 08:40 ens2f4d1 -> ../../devices/pci0000:00/0000:00:02.0/0000:03:00.4/net/ens2f4d1
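Filtering the ls -l listing with grep can also match unrelated columns, such as a timestamp that happens to contain the same digits. A slightly more careful sketch inspects only the symlink targets. The function name and its optional second argument (the directory to scan, which defaults to /sys/class/net) are hypothetical conveniences.

```shell
# List network interfaces whose sysfs symlink target contains the given
# PCI bus:device string (for example 03:00).
ifaces_for_pci() {
    pci=$1
    dir=${2:-/sys/class/net}
    for link in "$dir"/*; do
        [ -L "$link" ] || continue
        case "$(readlink "$link")" in
            *"$pci"*) basename "$link" ;;
        esac
    done
}
```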

Configure the desired link speed and an IP address for the interface.

# query supported speeds

$ ethtool ens2f4

# (...)

Supported link modes: 1000baseT/Full

10000baseT/Full

# (...)

# set speed

$ sudo ethtool -s ens2f4 speed 10000 autoneg off

# set MTU

$ sudo ifconfig ens2f4 mtu 9000

# configure an IP address for the interface

$ sudo ifconfig ens2f4 192.168.0.1

Intel

The RNIC configuration steps for Cornelis* Omni-Path Fabric software are described in the Cornelis Omni-Path Fabric Software documentation, Rev. 11.0 or later. The latest documentation and end user publications are available at Fabric Software Publications.

Installing Cornelis* Omni-Path Fabric Software

Go to the download center and find Cornelis Omni-Path Fabric software. Choose the relevant package of Cornelis Omni-Path Fabric suite (OPA-IFS), depending on the machine’s operating system.

# extract the downloaded software

$ tar xvfz IntelOPA-IFS.RHEL76-x86_64.10.9.1.0.15.tgz

$ cd IntelOPA-IFS.RHEL76-x86_64.10.9.1.0.15

# install

$ sudo ./INSTALL

Reboot the machine.

Configuring

Associate the Cornelis Omni-Path Host Fabric Interface adapter ports to the network interfaces.

# query Intel network controller bus and device number (e.g. 82:00)

$ lspci | grep Omni-Path

82:00.0 Fabric controller: Intel Corporation Omni-Path HFI Silicon 100 Series [discrete] (rev 11)

# find matching network interfaces (e.g. ib0)

$ ls -l /sys/class/net | grep 82:00

lrwxrwxrwx 1 root root 0 Jun 18 2018 ib0 -> ../../devices/pci0000:80/0000:80:01.0/0000:82:00.0/net/ib0

Configure the desired MTU and an IP address for the interface.

# set MTU

$ sudo ifconfig ib0 mtu 65520

# configure an IP address for the interface

$ sudo ifconfig ib0 192.168.0.1

Checking Connectivity

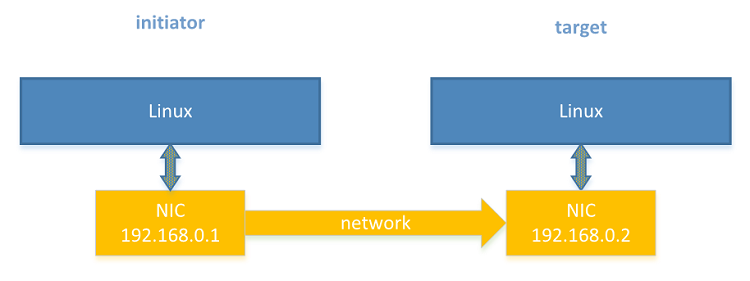

This paper assumes the IP addresses in Figure 1 are assigned to the network interfaces.

Figure 1. Two RDMA machines network

Allow translating the human-friendly name target to the target IP address:

# initiator

$ echo "192.168.0.2 target" | sudo tee --append "/etc/hosts"

# target

# do nothing
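Running the append command more than once leaves duplicate lines in the hosts file. The sketch below adds the entry only when the name is not already mapped. The function name and its optional third argument (the file to edit, which defaults to /etc/hosts) are hypothetical, and writing to /etc/hosts still requires root privileges.

```shell
# Append "<ip> <name>" to a hosts file unless <name> already appears as
# a host name on a non-comment line.
add_host_entry() {
    ip=$1 name=$2 file=${3:-/etc/hosts}
    if awk -v n="$name" '
            $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$file"; then
        return 0   # already present, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}
```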

To check connectivity use the regular ping(8) command:

# initiator

$ ping target

PING target (192.168.0.2) 56(84) bytes of data.

64 bytes from target (192.168.0.2): icmp_seq=1 ttl=64 time=1.000 ms

64 bytes from target (192.168.0.2): icmp_seq=2 ttl=64 time=1.000 ms

64 bytes from target (192.168.0.2): icmp_seq=3 ttl=64 time=1.000 ms

# (...)

# target

# do nothing

Note: A firewall configuration on the target node may prevent pinging from the initiator. Firewall configuration is outside the scope of this paper.

It is also possible to check RDMA connectivity using rping(1), which establishes an RDMA connection between the two nodes. In the following example it also performs RDMA transfers. First, start the rping server on the target and then the rping client on the initiator.

# target

$ rping -s -a 192.168.0.2 -p 9999

# server is waiting for client

# initiator

$ rping -c -Vv -C5 -a 192.168.0.2 -p 9999

ping data: rdma-ping-0: ABCDEFGHIJKLMNOPQRSTUVWXYZ[\]^_`abcdefghijklmnopqr

ping data: rdma-ping-1: BCDEFGHIJKLMNOPQRSTUVWXYZ[\]^_`abcdefghijklmnopqrs

ping data: rdma-ping-2: CDEFGHIJKLMNOPQRSTUVWXYZ[\]^_`abcdefghijklmnopqrst

ping data: rdma-ping-3: DEFGHIJKLMNOPQRSTUVWXYZ[\]^_`abcdefghijklmnopqrstu

ping data: rdma-ping-4: EFGHIJKLMNOPQRSTUVWXYZ[\]^_`abcdefghijklmnopqrstuv

client DISCONNECT EVENT...

When the rping client finishes the transfers, the server will display additional messages:

# target

$ rping -s -a 192.168.0.2 -p 9999

server DISCONNECT EVENT...

wait for RDMA_READ_ADV state 10

If rping finished its connection test successfully, the RDMA connection is ready for use.
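For automated checks, the client output can be inspected rather than read manually. The sketch below counts the "ping data" lines and compares the count with the value passed to -C. It assumes the client is run with -Vv and prints those lines to standard output in the format shown above; the function name is hypothetical.

```shell
# Read rping client output on standard input and succeed only if it
# contains exactly the expected number of "ping data" lines.
rping_ok() {
    expected=$1
    count=$(grep -c '^ping data: rdma-ping-' || true)
    [ "$count" -eq "$expected" ]
}
```

A possible invocation is: rping -c -Vv -C5 -a 192.168.0.2 -p 9999 | rping_ok 5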

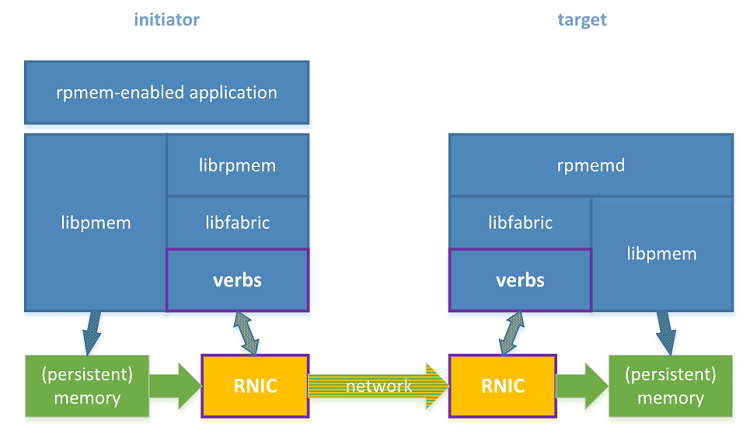

Shift to RDMA-Capable Network

Having configured replication between two machines (described in Replicating to Another Machine), shifting to RDMA-capable NICs (RNICs) is straightforward. This section highlights the differences between using RNICs and regular NICs.

Figure 2. Rpmem software stack with two machines equipped with RDMA.

Configuring Platforms

The rpmem replication between two machines may be subject to limits established via limits.conf(5). Limits are modified on the initiator and on the target separately. For details, see Single Machine Setup.

Network Configuration

RDMA network configuration is described in Prepare to Shine – RNIC Setup.

Rpmem Installation

Just as with the Ethernet NICs, rpmem requires some specific software components installed on the initiator and on the target machine. No changes here.

# initiator

$ sudo dnf install librpmem librpmem-devel

# target

$ sudo dnf install rpmemd

SSH Configuration

SSH configuration is exactly the same, independent of whether NICs or RNICs are used.

If the following steps were already executed for other network interfaces, there is no need to repeat the first step. The second and third steps, however, have to be performed each time a new machine, account, or IP address is used.

# initiator

# 1. Generating an SSH authentication key pair

$ ssh-keygen

# (...)

Enter passphrase (empty for no passphrase): # leave empty

Enter same passphrase again: # leave empty

# (...)

# 2. Allowing to connect to the target via SSH

$ ssh-copy-id user@target

# (...)

# 3. Checking SSH connection

$ ssh user@target

# (...)

[user@target ~] $ # the sample shell prompt on the target

# target

# do nothing

Rpmemd Configuration

The rpmemd configuration does not depend on the type of network interface, so it is the same as before:

# initiator

# do nothing

# target

$ mkdir /dev/shm/target

$ cat > /home/user/target.poolset <<EOF

PMEMPOOLSET

10M /dev/shm/target/replica

EOF
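If several replicas or sizes are needed, the poolset description can be generated instead of typed by hand. This sketch emits the same two-line format as the here-document above; the function name is hypothetical, and it covers only a single-part poolset.

```shell
# Print a single-part poolset description: the PMEMPOOLSET header
# followed by one "<size> <path>" line.
make_poolset() {
    size=$1 part=$2
    printf 'PMEMPOOLSET\n%s %s\n' "$size" "$part"
}
```

A possible invocation is: make_poolset 10M /dev/shm/target/replica > /home/user/target.poolset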

Librpmem Configuration

The key difference between using NICs and RNICs is in the librpmem configuration. The Ethernet network interfaces do not support the verbs fabric provider, so they require enabling the sockets provider. This is not the case with the RNICs so the librpmem library does not require any additional configuration.

# initiator

# do nothing

# target

# do nothing

If the initiator machine was previously used for replication using NICs, it may have sockets provider support enabled. This is not an issue because, if both the verbs provider and the sockets provider are available, librpmem will always pick the verbs provider.

Creating a Memory Pool with a Replica

After updating the configuration, using rpmem replication is no different.

# initiator

$ ./rpmem101 target target.poolset

# target

# do nothing

Inspecting the Results

The expected results are the same:

# initiator

# do nothing

# target

$ tree /dev/shm/

/dev/shm/

└── target

└── replica

hexdump can also be used to verify that the replica has the expected contents:

# initiator

# do nothing

# target

$ hexdump -C /dev/shm/target/replica

00000000 4d 41 4e 50 41 47 45 00 00 00 00 00 00 00 00 00 |MANPAGE.........|

00000010 00 00 00 00 00 00 00 00 5c 06 8e c4 13 0b c9 4b |........\......K|

00000020 ae cf 8b 02 56 8d 47 e1 48 76 64 ed 97 31 6a 4a |....V.G.Hvd..1jJ|

00000030 bc 32 4f eb 15 9a a9 a4 48 76 64 ed 97 31 6a 4a |.2O.....Hvd..1jJ|

*

00000070 bc 32 4f eb 15 9a a9 a4 f7 16 a2 5c 00 00 00 00 |.2O........\....|

00000080 10 73 77 37 f7 07 00 00 02 01 00 00 00 00 3e 00 |.sw7..........>.|

00000090 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 |................|

*

00000ff0 00 00 00 00 00 00 00 00 71 d1 fa bf 29 57 a8 e3 |........q...)W..|

00001000 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 |................|

*

06400000

Proceed to Part 4, "Persistent Memory Development Kit-Based PMEM Replication," which describes how to create and configure an application that can replicate persistent memory over an RDMA-capable network.

Other Articles in This Series

Part 1, "Understanding Remote Persistent Memory," describes the theoretical realm of remote persistent memory.

Part 2, "Remote Persistent Memory 101," depicts examples of setups and practical uses of RPMEM.