The Intel® oneAPI Math Kernel Library (oneMKL) supports a range of different language APIs (C, Fortran, C++ with SYCL), threading models (oneTBB, OpenMP, sequential), and GPU offload models (OpenMP, SYCL).

The Linking Quick Start chapter in the Developer Guide for Intel® oneAPI Math Kernel Library describes how to select the right link line for your combination of requirements.

Once you add static versus dynamic linking, or custom build options to control application footprint, into the equation, the picture gets more complex. The price of this flexibility is a large number of options that can be hard to keep track of.

This is why oneMKL comes with the Command-Line Link Tool and the Link Line Advisor.

In this article, we give a quick overview of the high-level considerations when picking compiler and linker options for use with oneMKL. We also introduce two helper utilities:

- The online Intel® oneAPI Math Kernel Library Link Line Advisor

- The Command-Line Link Tool included with the product distribution

Static and Dynamic Libraries

One key decision for application design, packaging, and distribution is whether you prefer to link libraries statically or dynamically.

Static linking combines all dependencies and libraries into a single executable binary. This simplifies distribution and may lead to slightly faster startup time for some workloads. The drawback of this approach, however, is that the binary and its resource footprint can get rather large if you intend to support a multitude of runtime environments. Any changes and updates in supporting programs or libraries require a rebuild of your binary. This is why pure static linking is usually reserved for embedded use cases and startup or system bootup code requiring consistent, well-defined memory allocation.

Dynamic linking keeps the library functions in separate shared libraries. The main application only contains references to the objects and functions defined in those dynamically loaded libraries. As a result, the executable itself is smaller, and supporting libraries can be updated without a full application rebuild. For these reasons, dynamic linking is the common choice for enterprise and HPC applications.

Dynamic linking has drawbacks as well. When you distribute a dynamically linked executable, you must also distribute the relevant shared objects (*.so on Linux) or dynamic-link libraries (*.dll on Windows) with it.
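To make the difference concrete on Linux, the sketch below rewrites a dynamic oneMKL link line into a static one. It assumes the -Wl,--start-group ... -Wl,--end-group pattern that the Link Line Advisor emits for static linking, which lets the GNU linker rescan the interface, threading, and core archives that reference one another. The function name to_static is hypothetical, and the archive paths are illustrative rather than checked against a particular release.

```python
def to_static(flags: list[str], libdir: str = "${MKLROOT}/lib/intel64") -> list[str]:
    """Hypothetical helper: replace -lmkl_* flags with static archives in a link group."""
    # -lmkl_core -> ${MKLROOT}/lib/intel64/libmkl_core.a, and so on for each oneMKL layer.
    archives = [f"{libdir}/lib{flag[2:]}.a" for flag in flags if flag.startswith("-lmkl_")]
    result = []
    group_emitted = False
    for flag in flags:
        if flag.startswith("-lmkl_"):
            # Emit the whole group once, where the first oneMKL library appeared,
            # because the oneMKL archives reference each other.
            if not group_emitted:
                result += ["-Wl,--start-group", *archives, "-Wl,--end-group"]
                group_emitted = True
        else:
            # Search paths and system/runtime libraries (-liomp5, -lpthread, ...) keep their order.
            result.append(flag)
    return result


dynamic = ("-L${MKLROOT}/lib/intel64 -lmkl_intel_ilp64 -lmkl_intel_thread "
           "-lmkl_core -liomp5 -lpthread -lm -ldl").split()
print(" ".join(to_static(dynamic)))
```

The static result is larger but has no runtime dependency on the oneMKL shared objects; the OpenMP runtime (-liomp5) is still linked dynamically in this sketch.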

The Developer Guides for Intel® oneAPI Math Kernel Library for Linux* and Windows* contain a complete list of all libraries available as dynamic (*.so, *.dll) or static (*.a, *.lib) libraries.

These lists of library files, combined with the feature sets you plan to support, provide all the information needed to decide which *.dll and *.so files are needed for redistribution with your application binary.

Linux:

- Static Libraries in the lib/intel64_lin Directory

- Dynamic Libraries in the lib/intel64_lin Directory

- Static Libraries in the lib/ia32_lin Directory

- Dynamic Libraries in the lib/ia32_lin Directory

Windows:

- Static Libraries in the lib\intel64 Directory

- Dynamic Libraries in the lib\intel64 Directory

- Contents of the redist\intel64 Directory

- Static Libraries in the lib\ia32 Directory

- Dynamic Libraries in the lib\ia32 Directory

- Contents of the redist\ia32 Directory

Note: As the industry has largely shifted to 64-bit architecture over the last decade, Intel® oneMKL 32-bit binaries will be deprecated in the upcoming Intel® oneMKL 2024.0 release and targeted to be removed after a one-year deprecation notice period. If you currently use the 32-bit binaries, please consider upgrading to our 64-bit options. You can share your feedback or concerns on the oneMKL Community Forum or through Priority Support.

C, C++, and Fortran Interfaces

oneMKL supports multiple language APIs and thus can be used with various software development toolchains, compilers, and linkers.

These include Microsoft, PGI, Intel, GNU*, and Clang-based build environments.

Let us assume dynamic linking. If we were, for instance, to use oneMKL with the GNU Fortran compiler, the option string handed to the GNU linker (ld) through the compiler driver would look similar to:

-L${MKLROOT}/lib/intel64 -Wl,--no-as-needed -lmkl_gf_ilp64 -lmkl_gnu_thread -lmkl_core -lgomp -lpthread -lm -ldl

As we can see, the precise parameters can vary quite a bit. In this example, we assumed the ILP64 interface (64-bit integers, selected by mkl_gf_ilp64) and OpenMP threading through the standard GNU OpenMP runtime (libgomp).

The linker option string looks almost identical if we target the GNU C/C++ toolchain or Clang. The main difference is the interface layer: C and C++ code uses -lmkl_intel_ilp64 instead of the gfortran-specific -lmkl_gf_ilp64.
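The pattern behind these GNU link lines can be sketched as a small Python function. It is a hypothetical helper, not part of oneMKL: it assumes dynamic linking with GNU OpenMP threading, as in the example above, and only varies the interface layer (mkl_gf_* for gfortran, mkl_intel_* for C/C++ with GCC or Clang) and the integer size (ILP64 or LP64).

```python
def gnu_link_line(language: str, integers: str = "ilp64") -> str:
    """Hypothetical helper: dynamic oneMKL link line for the GNU toolchain with GNU OpenMP.

    language: "fortran" (gfortran) or "c" (gcc/g++ or Clang).
    integers: "ilp64" (64-bit integers) or "lp64" (32-bit integers).
    """
    prefixes = {"fortran": "mkl_gf", "c": "mkl_intel"}
    if language not in prefixes:
        raise ValueError("language must be 'fortran' or 'c'")
    if integers not in ("ilp64", "lp64"):
        raise ValueError("integers must be 'ilp64' or 'lp64'")
    flags = [
        "-L${MKLROOT}/lib/intel64",
        "-Wl,--no-as-needed",
        f"-l{prefixes[language]}_{integers}",  # interface layer
        "-lmkl_gnu_thread",                    # threading layer built on GNU OpenMP
        "-lmkl_core",                          # computational core
        "-lgomp",                              # GNU OpenMP runtime
        "-lpthread", "-lm", "-ldl",
    ]
    return " ".join(flags)


print(gnu_link_line("fortran"))
print(gnu_link_line("c"))
```

For the ILP64 interface from C or C++, the source must also be compiled with -DMKL_ILP64, as the Link Tool output in the next section shows.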

On Windows* OS, the link line takes a different form. For example, with the ILP64 interface and Intel OpenMP threading:

mkl_intel_ilp64_dll.lib mkl_intel_thread_dll.lib mkl_core_dll.lib libiomp5md.lib

Picking C++ with SYCL (DPC++) as the language API also affects the linker options, though not so much because of the language itself. Instead, SYCL builds add the mkl_sycl library and the SYCL/OpenCL runtimes, and they commonly use oneTBB for host-side threading:

-fsycl mkl_sycl_dll.lib mkl_intel_ilp64_dll.lib mkl_tbb_thread_dll.lib mkl_core_dll.lib tbb12.lib OpenCL.lib

In short, the linker option list depends primarily on the build environment and the selected additional library dependencies and threading layers, not so much on the base language API used.

Threading Layers

This brings us to the available threading layers.

oneMKL allows you to turn off internal threading. To do so on Linux, replace the threading layer and its runtime (-lmkl_tbb_thread, or -lmkl_intel_thread -liomp5) with -lmkl_sequential.

On Windows, replace mkl_tbb_thread_dll.lib tbb12.lib, or mkl_intel_thread_dll.lib libiomp5md.lib, with mkl_sequential_dll.lib.

The table below summarizes typical linker options for each threading model when using the Intel oneAPI DPC++/C++ Compiler with oneMKL (dynamic linking, ILP64 interface):

| Threading layer | Windows (Intel® oneAPI DPC++/C++ Compiler) | Linux (Intel® oneAPI DPC++/C++ Compiler) |
|---|---|---|
| Sequential | mkl_intel_ilp64_dll.lib mkl_sequential_dll.lib mkl_core_dll.lib | -L${MKLROOT}/lib/intel64 -lmkl_intel_ilp64 -lmkl_sequential -lmkl_core -lpthread -lm -ldl |
| OpenMP | mkl_intel_ilp64_dll.lib mkl_intel_thread_dll.lib mkl_core_dll.lib libiomp5md.lib | -L${MKLROOT}/lib/intel64 -lmkl_intel_ilp64 -lmkl_intel_thread -lmkl_core -liomp5 -lpthread -lm -ldl |
| oneTBB | mkl_intel_ilp64_dll.lib mkl_tbb_thread_dll.lib mkl_core_dll.lib tbb12.lib | -L${MKLROOT}/lib/intel64 -lmkl_intel_ilp64 -lmkl_tbb_thread -lmkl_core -lpthread -lm -ldl |
| SYCL sequential | -fsycl mkl_sycl_dll.lib mkl_intel_ilp64_dll.lib mkl_sequential_dll.lib mkl_core_dll.lib OpenCL.lib | -fsycl -L${MKLROOT}/lib/intel64 -lmkl_sycl -lmkl_intel_ilp64 -lmkl_sequential -lmkl_core -lsycl -lpthread -lm -ldl |
| SYCL with oneTBB | -fsycl mkl_sycl_dll.lib mkl_intel_ilp64_dll.lib mkl_tbb_thread_dll.lib mkl_core_dll.lib tbb12.lib OpenCL.lib | -fsycl -L${MKLROOT}/lib/intel64 -lmkl_sycl -lmkl_intel_ilp64 -lmkl_tbb_thread -lmkl_core -lsycl -lpthread -lm -ldl |
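The rule for switching off internal threading can be checked mechanically against the table above. The sketch below is a hypothetical helper that assumes whitespace-separated link lines like those used throughout this article. It swaps the threading layer for the sequential one, drops the OpenMP or oneTBB runtime, and also covers the GNU threading layer from the earlier gfortran example. Applied to the OpenMP, oneTBB, and SYCL with oneTBB rows, it reproduces the Sequential and SYCL sequential rows.

```python
# Threading-layer libraries and their runtimes, taken from the link lines in this article.
THREADING_LAYERS = {
    "linux": {"-lmkl_intel_thread", "-lmkl_tbb_thread", "-lmkl_gnu_thread"},
    "windows": {"mkl_intel_thread_dll.lib", "mkl_tbb_thread_dll.lib"},
}
THREADING_RUNTIMES = {
    "linux": {"-liomp5", "-lgomp"},
    "windows": {"libiomp5md.lib", "tbb12.lib"},
}
SEQUENTIAL = {"linux": "-lmkl_sequential", "windows": "mkl_sequential_dll.lib"}


def make_sequential(link_line: str, os_name: str) -> str:
    """Hypothetical helper: swap in the sequential layer and drop the threading runtime."""
    result = []
    for token in link_line.split():
        if token in THREADING_LAYERS[os_name]:
            result.append(SEQUENTIAL[os_name])  # keep the layer's position in the line
        elif token in THREADING_RUNTIMES[os_name]:
            continue                            # OpenMP/oneTBB runtime no longer needed
        else:
            result.append(token)
    return " ".join(result)


openmp_linux = ("-L${MKLROOT}/lib/intel64 -lmkl_intel_ilp64 -lmkl_intel_thread "
                "-lmkl_core -liomp5 -lpthread -lm -ldl")
print(make_sequential(openmp_linux, "linux"))
```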

Although this is all quite straightforward, it still implies that there are several compiler and linker options to keep track of whenever you want to change the linkage model for your application or introduce new threading models.

This is why oneMKL provides two simple utilities that ensure your build options match your build environment and requirements, no matter which version of oneMKL you use.

The oneMKL Command-Line Link Tool and Link Line Advisor make it easy to always pick the right compiler and linker options for your needs.

Command-Line Link Tool

The Command-Line Link Tool, mkl_link_tool, is part of your oneMKL installation and can be found in the <mkl_directory>/bin/<arch> directory.

If used in interactive mode, it will provide a guided step-by-step selection process for your compiler and linker options:

Intel(R) oneAPI Math Kernel Library (oneMKL) Link Tool v6.3

==========================================================

Choose the tool output by selecting one of the following.

1. Libraries

2. Compiler options

3. Environment variables for application execution

4. All the variants above

5. Compiled files or application

h. Help

q. Quit

----------------------------

Please type a selection or press "Enter" to accept default choice: 4 (All the variants above)

>

The resulting output may, for instance, look something like this:

Configuration

=============

OS: lnx

Architecture: intel64

Compiler: dpcpp

Linking: dynamic

Interface layer: dpcpp_api

Parallel: yes

OpenMP library: tbb

Output

======

Compiler option(s):

-fsycl -DMKL_ILP64 -I"${MKLROOT}/include"

Linking line:

-fsycl -L${MKLROOT}/lib/intel64 -lmkl_sycl -lmkl_intel_ilp64 -lmkl_tbb_thread -lmkl_core -lsycl -lOpenCL -lpthread -lm -ldl

Environment variable(s):

There are no recommended environment variables

In this example, we selected option 4 (All the variants above) and therefore get the most verbose output possible: compiler options, the link line, and recommended environment variables.
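Once the Link Tool has printed its output, the compiler options and the link line can be combined into a single compile-and-link command. The sketch below assumes the icpx compiler driver and copies the two strings from the output above. build_command is a hypothetical helper, and ${MKLROOT} is expected to be set in the environment, for example by sourcing the oneAPI setvars.sh script.

```python
import os
import shlex

# Strings copied from the Link Tool output above.
COMPILE_OPTS = '-fsycl -DMKL_ILP64 -I"${MKLROOT}/include"'
LINK_LINE = ("-fsycl -L${MKLROOT}/lib/intel64 -lmkl_sycl -lmkl_intel_ilp64 "
             "-lmkl_tbb_thread -lmkl_core -lsycl -lOpenCL -lpthread -lm -ldl")


def build_command(source: str, output: str, compiler: str = "icpx") -> list[str]:
    """Hypothetical helper: argv for one compile-and-link step."""
    # Expand ${MKLROOT} from the environment (left as-is if unset);
    # shlex.split then removes the shell quotes around the include path.
    compile_args = shlex.split(os.path.expandvars(COMPILE_OPTS))
    link_args = shlex.split(os.path.expandvars(LINK_LINE))
    # Drop flags already present in the compile options (here only -fsycl).
    link_args = [arg for arg in link_args if arg not in compile_args]
    # Libraries follow the source file so the linker can resolve its references.
    return [compiler, *compile_args, source, "-o", output, *link_args]


if __name__ == "__main__":
    # Print a shell-ready command; pass the list to subprocess.run() to execute it.
    print(shlex.join(build_command("app.cpp", "app")))
```

Keeping the arguments as a list avoids a second round of shell quoting when the command is executed from a build script.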

Link Line Advisor

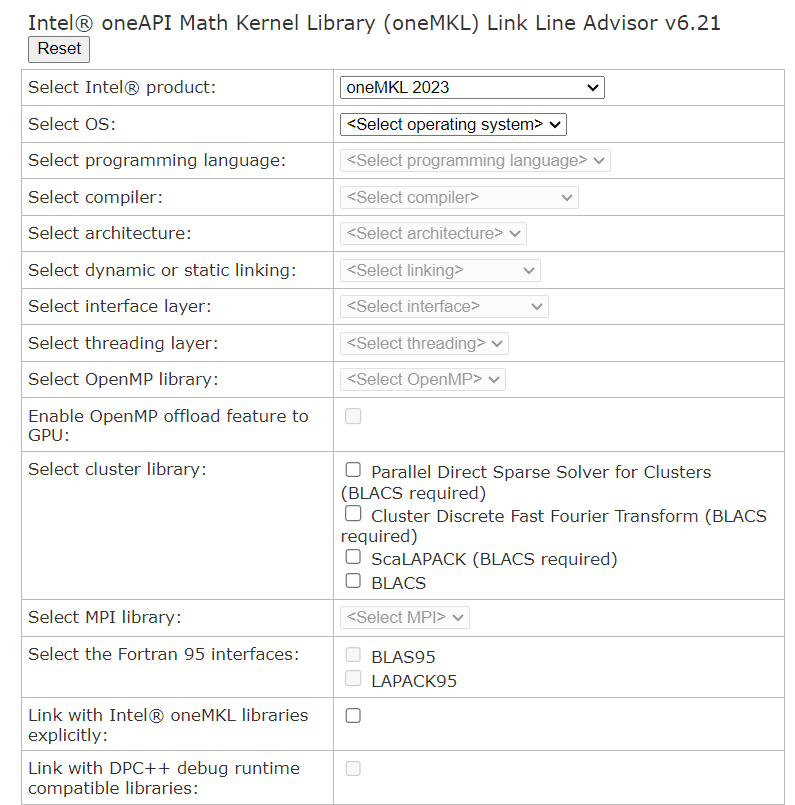

The Link Line Advisor is a web-based online tool with functionality similar to the command-line link tool in interactive mode.

In addition, it lets you check your linker options without a fully configured build environment, and it lets you compare compiler and linker options across all oneMKL distributions released since 2017.

This is especially helpful when all you want to know is whether your application's build options need to change since your last library update.


The Advisor uses a simple input form for all the possible use case variations.

Fig 1. Link Line Advisor Input Form

The resulting compiler, linker, and environment options will then be displayed on the very same page:

Fig 2. Link Line Advisor Output

Give it a try today. Even for a feature-rich math kernel library like oneMKL, there is no reason to be uncertain about the best build options. Verifying that you have all the build dependencies set correctly is easy.

Summary

In this article, we provided a brief overview of Intel oneAPI Math Kernel Library linking options and introduced utilities to help decide on the best combination of options for your build environment.

Check out the Command-Line Link Tool as well as the Link Line Advisor today.

Get The Software

You can install the Intel® oneAPI Math Kernel Library (oneMKL) as a part of the Intel® oneAPI Base Toolkit or the Intel® HPC Toolkit. You can also download a standalone version of the library or test it across Intel® CPUs and GPUs on the Intel® Developer Cloud platform.