Overview

This tutorial describes how to set up Intel® Optane™ DC persistent memory in app-direct mode on a VMware* virtual machine (VM) so that applications can access the persistent memory. Prior knowledge of persistent memory is not required.

Prerequisites

To complete this tutorial, you need the following:

- A 2nd generation Intel® Xeon® Scalable processor-based platform populated with Intel Optane DC persistent memory.

- ipmctl ‒ A utility for configuring and managing Intel Optane DC persistent memory.

- ndctl – A utility for managing the non-volatile memory device subsystem in the Linux* kernel.

- VMware vSphere* Hypervisor (ESXi) version 6.7.0 or higher.

Steps

Configure App-Direct Mode

For virtual machines running on a VMware ESXi* server to access a non-volatile dual in-line memory module (NVDIMM), you need to configure the memory in app-direct mode. You can do this in one of three ways:

- Using the ipmctl management utility at the OS level.

- Using the ipmctl management utility at the Unified Extensible Firmware Interface (UEFI) shell. You can get the UEFI version of ipmctl from the server vendor’s website.

- Using the BIOS options, if available for your system.

This tutorial describes how to use the ipmctl utility at the OS level to configure Intel Optane DC persistent memory. You can also apply the steps at the UEFI level. The BIOS method differs between vendors and platforms; for it, consult the documentation provided by your platform vendor.

You first use the ipmctl utility to configure the persistent memory so that the hypervisor can see it. You then use the ndctl utility inside the VM to create namespaces on which you can create and mount filesystems.

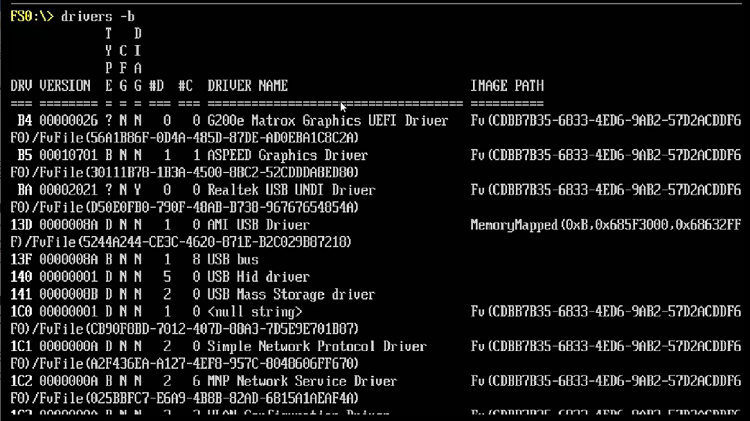

- Boot into your UEFI shell and run drivers -b to list the UEFI drivers (Figure 1).

- Press Enter until you can see the Intel Optane DC persistent memory drivers.

- Check the version of the drivers; it must exactly match the version of your ipmctl tool. If it does not, uninstall the existing drivers first, and then install the drivers whose version matches the ipmctl tool. To uninstall a driver, use the hexadecimal value in the first column (called DRV).

Figure 1. List of the installed UEFI drivers.

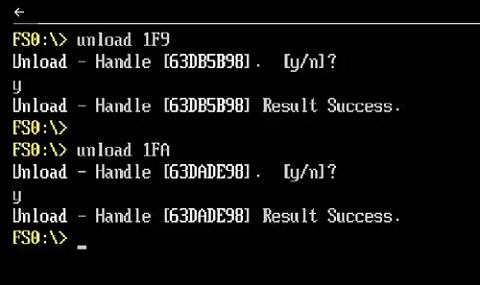

In this example, those values are 1F9 and 1FA. The command to uninstall a driver is unload.

Figure 2. Uninstall the older version of the ipmctl UEFI drivers.

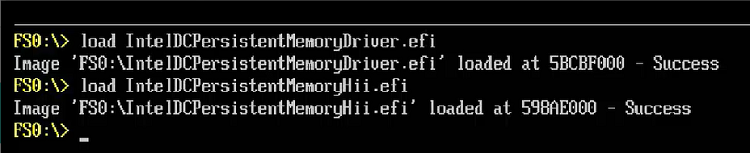

- Load the drivers shipped with your ipmctl tool using the command load.

Figure 3. Load the drivers that shipped with the ipmctl tool.

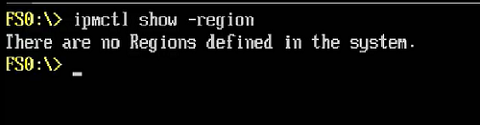

- Once the drivers are installed, run ipmctl show -region to show the available regions in the system.

Figure 4. Running ipmctl to check for the Regions configuration.

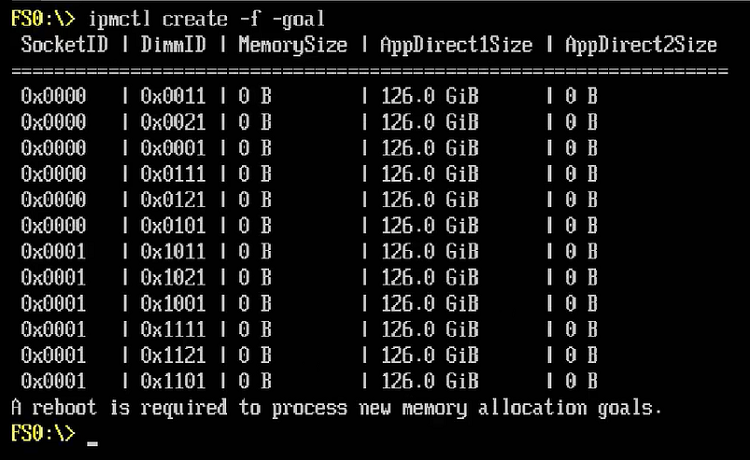

- Configure the entire persistent memory capacity in interleaved app-direct mode by running ipmctl create -f -goal. The goal is applied on the next boot, so reboot the system once the goal is defined.

Figure 5. Configure the memory for app-direct mode using the ipmctl tool.

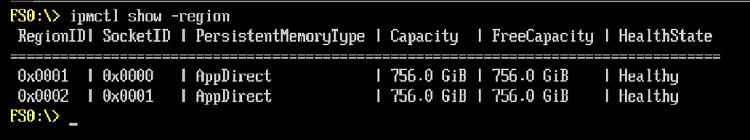

- After rebooting the system, run ipmctl show -region again to check your newly created app-direct regions. You should see one region per CPU socket.

Figure 6. Using the ipmctl tool to show the configured regions.
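The same ipmctl sequence can be scripted. The following is a minimal sketch, assuming ipmctl is installed and the script runs with root privileges. The run helper and the DRY_RUN variable are illustrative names introduced here, not part of ipmctl. The goal is written with an explicit PersistentMemoryType=AppDirect argument, which requests the interleaved app-direct configuration described above.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of ipmctl): print the command when
# DRY_RUN=1, otherwise execute it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Request a goal that places all capacity in interleaved app-direct mode.
# The goal is only applied on the next boot.
configure_app_direct() {
    run ipmctl show -dimm
    run ipmctl create -f -goal PersistentMemoryType=AppDirect
}

# After the reboot, list the regions; expect one region per CPU socket.
show_regions() {
    run ipmctl show -region
}
```

Calling configure_app_direct with DRY_RUN=1 prints the commands without touching the hardware, which is a convenient way to review them before committing to a reboot.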

Install VMware ESXi*

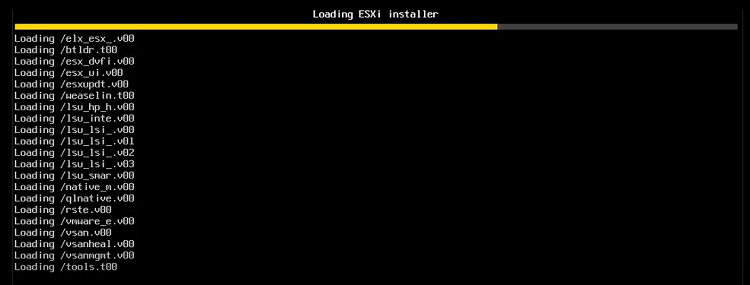

You are now ready to install VMware ESXi on the system. The minimum version required is 6.7.0.

Install VMware ESXi as you would for any other server. Special options are not required during the installation phase.

Figure 7. Install VMware ESXi on the server with Intel® Optane™ DC persistent memory.

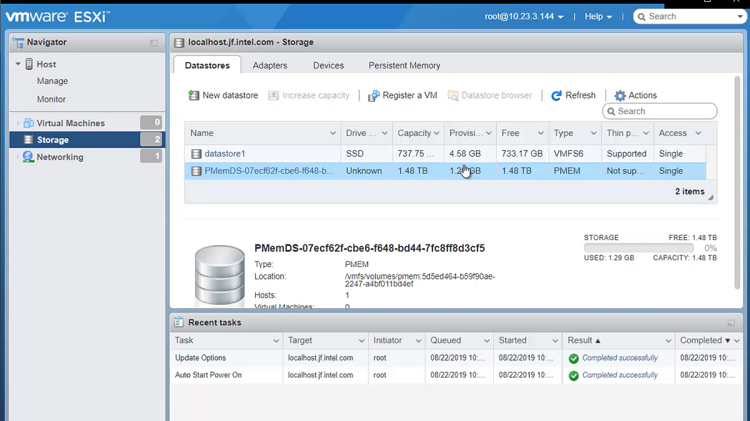

Verify NVDIMM Configuration Under the VMware* Web Console

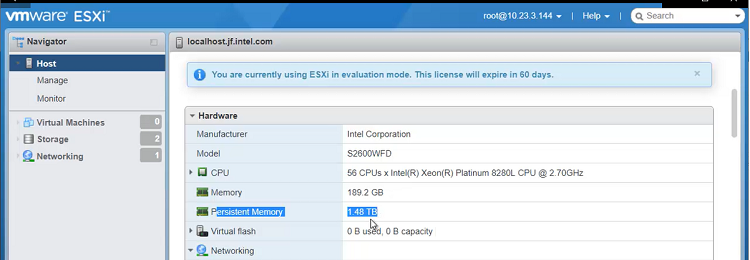

- When the installation is complete, boot the server and access the web console.

- Verify that VMware ESXi is detecting your persistent memory capacity by checking the persistent memory value from the Hardware tab.

A special datastore should also have been created. To verify it, go to Storage in the left-side menu.

Figure 8. Verify that persistent memory is configured correctly in VMware ESXi.

Figure 9. Verify that the special datastore is created for persistent memory.

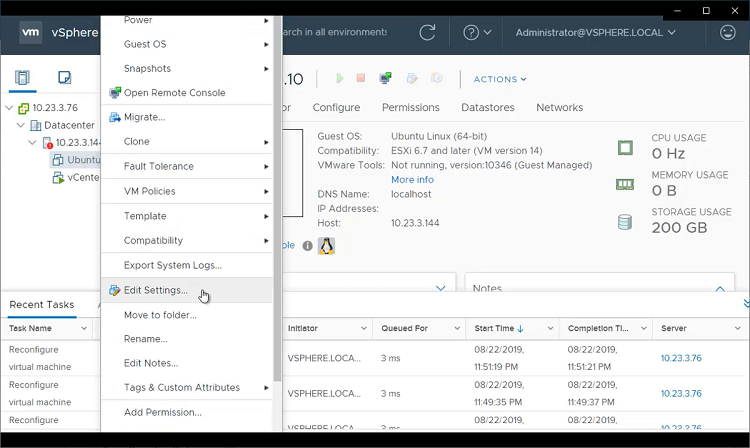

Now that persistent memory is properly configured in VMware ESXi, add it to your virtual machines through VMware vCenter*. As with VMware ESXi, make sure that you are running VMware vCenter version 6.7.0 or higher.

Persistent memory is added to VMs in the form of virtual NVDIMMs, as follows:

- Go to the VM settings. Once there, select Add new device, and then select NVDIMM.

Figure 10. Select VM Settings to add a virtual NVDIMM.

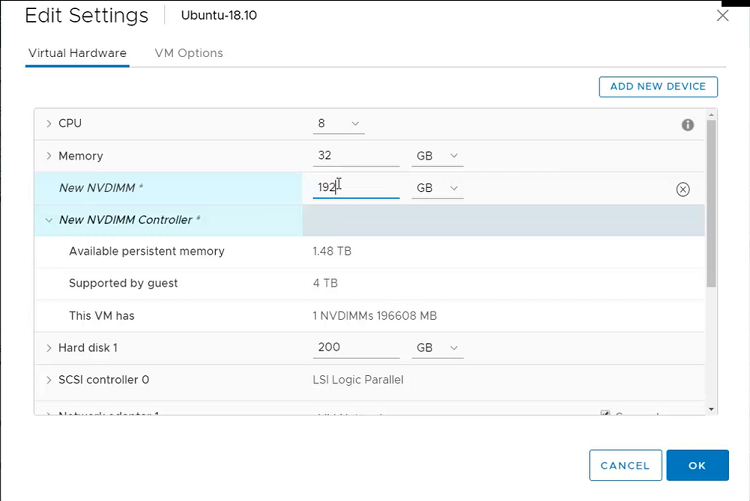

Figure 11. Add a new NVDIMM virtual device.

If you expand the NVDIMM controller, you should see all the available persistent memory capacity.

- Choose a size for the new NVDIMM (192 GB in this example), and then click OK.

Figure 12. Define the size for the new NVDIMM.

Configure the NVDIMM Under the VM

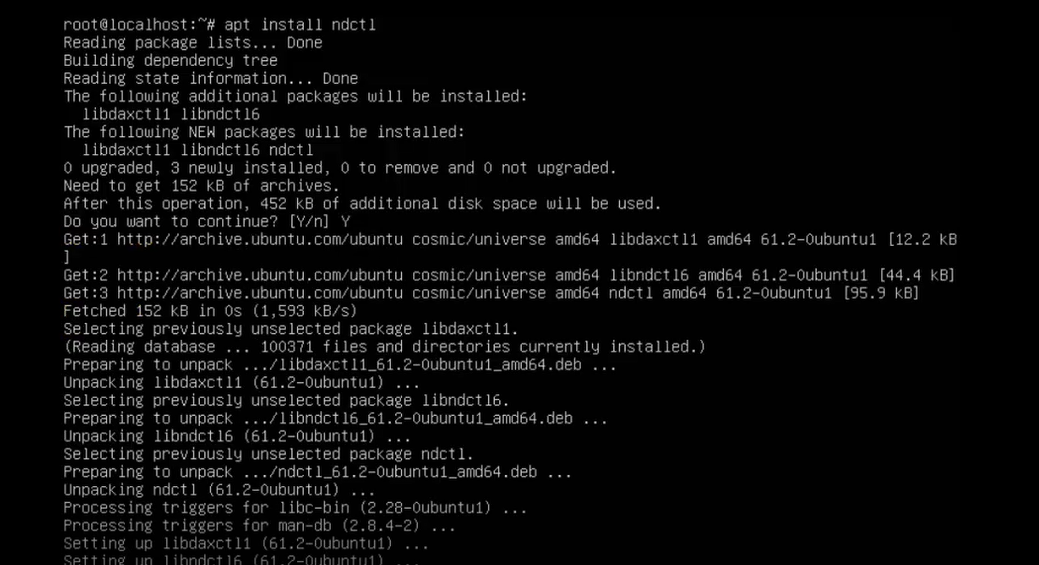

At this point, persistent memory is attached to the VM as a special block device. Boot into your VM and check that /dev/pmem0 exists. If you attached multiple virtual NVDIMMs, you will see multiple persistent memory devices under /dev.
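A quick way to check for the devices is to list the pmem entries under /dev. The following is a small sketch; the list_pmem_devices function name and its optional directory argument are conveniences introduced for this example.

```shell
#!/usr/bin/env bash
# List persistent memory block devices (pmem0, pmem1, ...) in a directory.
# The directory defaults to /dev; the argument exists only for this sketch.
list_pmem_devices() {
    local dir="${1:-/dev}"
    local dev
    for dev in "$dir"/pmem*; do
        # Skip the unexpanded glob pattern when nothing matches.
        [ -e "$dev" ] && echo "$dev"
    done
    return 0
}

list_pmem_devices
```

If the function prints nothing, the VM does not see any virtual NVDIMM, and the VM settings are worth rechecking.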

- For the final step, the ndctl tool must be installed in your VM. Install it using the package manager of your Linux distribution.

Figure 13. Install the ndctl tool in the OS running on your VM.
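The install command depends on the distribution. As a rough sketch, the hypothetical helper below prints the command for a few common package managers, assuming the package is named ndctl in the distribution's repositories (check your distribution if the install fails).

```shell
#!/usr/bin/env bash
# Print the install command for ndctl for a given package manager.
# Assumption: the package is called "ndctl" in the distribution's repos.
ndctl_install_cmd() {
    case "$1" in
        dnf|yum) echo "sudo $1 install ndctl" ;;
        apt-get) echo "sudo apt-get install ndctl" ;;
        zypper)  echo "sudo zypper install ndctl" ;;
        *)       echo "unsupported package manager: $1" >&2; return 1 ;;
    esac
}
```

For example, ndctl_install_cmd dnf prints the command to run on a Fedora-style system.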

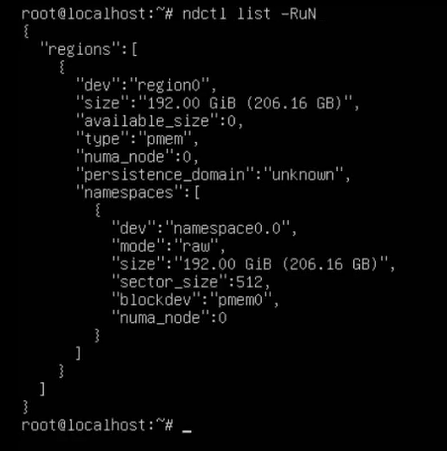

- Run ndctl list -RuN to list the current regions and namespaces configured in the system. You should see at least one namespace within one region.

Figure 14. Check the regions and namespaces configuration by running the ndctl tool under your VM.

As currently configured, the pmem0 device can only be used for regular I/O. This is because the namespace is configured in raw mode, which does not support the dax option. The dax option allows you to memory map persistent memory and access it directly through loads and stores from user space. Without it, you cannot do persistent memory programming.

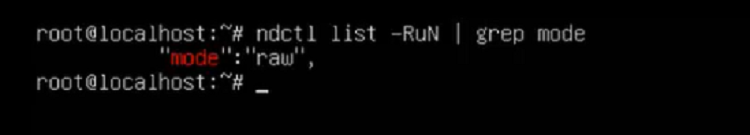

- If you have only one namespace, you can check its mode by running ndctl list -RuN and filtering the output with grep for mode. As you can see, the namespace is in raw mode.

Figure 15. Check the namespace mode by running the ndctl tool.
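The mode check can be wrapped in a small filter. This is a sketch that assumes ndctl prints its usual JSON output with a "mode" key per namespace. The namespace_modes function name is introduced here for illustration.

```shell
#!/usr/bin/env bash
# Read `ndctl list` JSON on stdin and print each "mode" value, one per line.
namespace_modes() {
    # Match "mode":"<value>" (optional spaces around the colon), then keep
    # the fourth double-quote-delimited field, which is the value itself.
    grep -o '"mode" *: *"[a-z]*"' | cut -d'"' -f4
}

# Typical use inside the VM:
#   ndctl list -RuN | namespace_modes
```

With a single namespace in its initial state, the pipeline would be expected to print raw.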

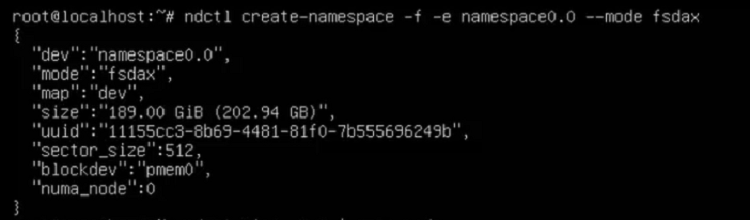

- To change the mode, run ndctl create-namespace -f -e with the namespace name, specifying --mode fsdax.

Figure 16. Change the namespace mode from raw to fsdax.

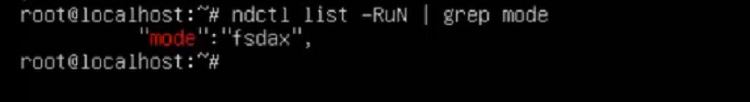

- When the command completes, confirm that your namespace is in fsdax mode by running ndctl list -RuN and filtering the output with grep for mode.

Figure 17. Confirm the namespace mode as fsdax.
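The conversion and the follow-up check can be sketched as one function. The namespace name namespace0.0 is an assumption for a VM with a single virtual NVDIMM; use the name that ndctl list reports on your system. The run helper and DRY_RUN variable are illustrative and not part of ndctl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN=1, otherwise run it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Reconfigure an existing namespace in place as fsdax, then list the
# regions and namespaces again so the new mode can be confirmed.
# Note: reconfiguring a namespace destroys the data stored in it.
to_fsdax() {
    local ns="${1:-namespace0.0}"   # assumed default namespace name
    run ndctl create-namespace -f -e "$ns" --mode fsdax
    run ndctl list -RuN
}
```

Running to_fsdax with DRY_RUN=1 prints the two commands so that the namespace name can be reviewed first.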

If your namespace is in fsdax mode, the namespace configuration is complete. The remaining step is to create a filesystem on the device and mount it.

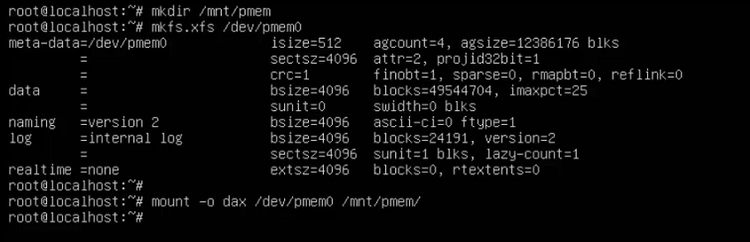

- To create and mount the filesystem:

  - Create a mount point for your persistent memory.

  - Create a filesystem on the device using either ext4 or xfs.

  - Mount the device at that mount point with the dax option.

Figure 18. Mount the pmem0 device for the VMware VMs to access the persistent memory.
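The three sub-steps above can be sketched as follows, using ext4. The device /dev/pmem0 comes from the earlier steps, while the mount point /mnt/pmem0 is an arbitrary name chosen for this example. The run helper and DRY_RUN variable are illustrative. The commands need root privileges, and mkfs erases any existing data on the device.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the command when DRY_RUN=1, otherwise run it.
run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Create a mount point, build an ext4 filesystem on the pmem device,
# and mount it with the dax option.
mount_pmem() {
    local dev="${1:-/dev/pmem0}"
    local mnt="${2:-/mnt/pmem0}"    # assumed mount point for this sketch
    run mkdir -p "$mnt"
    run mkfs.ext4 "$dev"
    run mount -o dax "$dev" "$mnt"
}
```

After a real run, the output of mount should list the device with dax among its options.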

Conclusion

In this tutorial, you set up your Intel Optane DC persistent memory in app-direct mode using the ipmctl tool, and then created virtual NVDIMMs through VMware vCenter to add them to VMs running on VMware ESXi. After completing these steps, your virtual machine should be ready to use persistent memory, and applications can access Intel Optane DC persistent memory directly, without any modifications, as if they were running on a bare metal server.

References

- Get Started with Intel Optane DC Persistent Memory

- ipmctl ‒ A utility for configuring and managing Intel Optane DC persistent memory

- ndctl ‒ A utility library for managing the libnvdimm subsystem in the Linux kernel

- VMware vSphere Hypervisor (ESXi)

- Intel Optane DC Persistent Memory Overview

- Configuring Intel Optane DC Persistent Memory for Best Performance