Code Your Own Mech Robot

Can basic coding concepts be taught in virtual reality? We believe the answer is yes, and in fact we are building the world’s very first VR application to teach computer science basics in a virtual environment. This initiative has been made possible thanks to the generous support of Intel and our vast experience teaching coding at Zenva Academy.

How can we make teaching computer science fun and engaging? Robots that teach kids how to code have been an inspiration. Presenting computer science to the public in a way that’s not intimidating is certainly a challenge. The beauty of virtual reality is that it allows us to create an entire universe without the limitations and constraints that come with traditional teaching methods or physical hardware.

Zenva Sky has taken a turn this week by focusing on the idea of programming a robotic vehicle which will introduce users to progressively more advanced computer science concepts. Keep on reading to find out what changed from our last update and what areas we’ll be developing next!

Code Your Way to the Exit

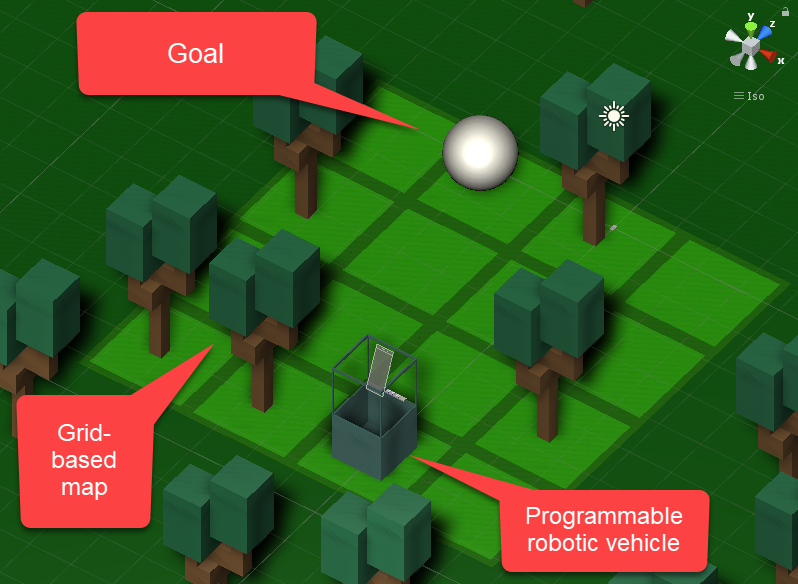

Each challenge / level consists of a grid-based area that a robotic vehicle must navigate in order to reach the goal. It’s important to mention that the models you see below are being used for development purposes – the final art style of Zenva Sky hasn’t been defined yet, and it will most likely look radically different to what you see here.

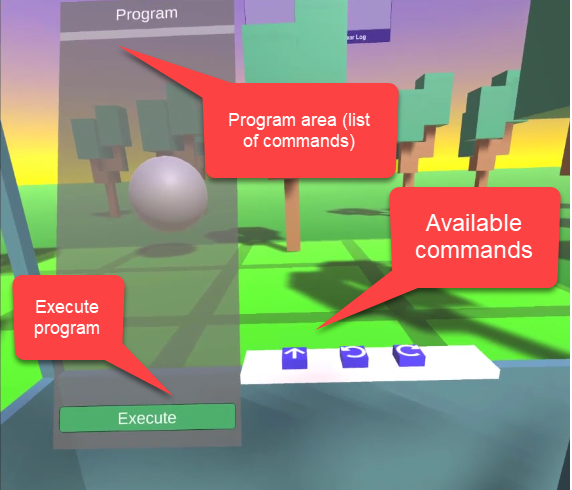

The user is placed inside the vehicle. They can enter different commands into their robot from the cabin's dashboard. Each command is added to the Program panel. The aim of the program is to take the robot all the way to the level's goal.

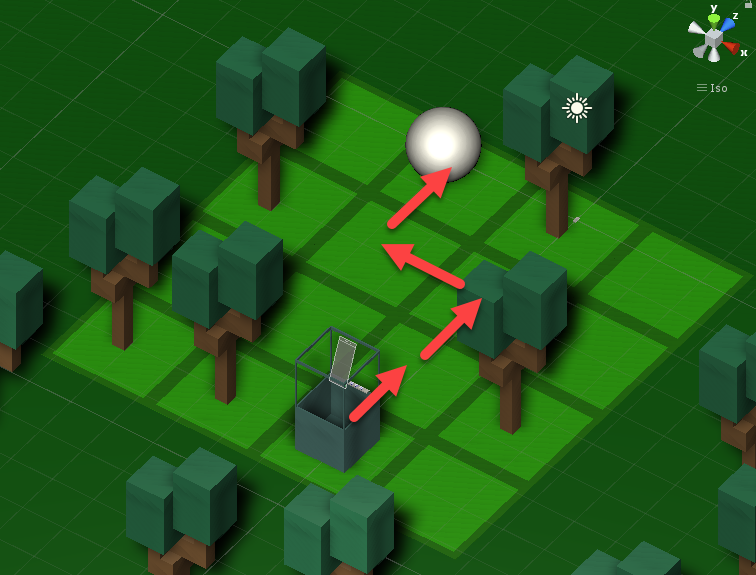

We built the first level, which can be solved by moving and rotating the robot. The solution translates into the following commands:

- Move forward

- Move forward

- Rotate left

- Move forward

- Rotate right

- Move forward
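To make the idea concrete, the command list above can be modeled as a small interpreter over a grid. This is only a minimal sketch, not how Zenva Sky is implemented: the `Robot` class, the command names, the starting cell `(0, 0)`, and the assumption that the robot starts facing north are all hypothetical.

```python
from dataclasses import dataclass

# Headings as (dx, dy) grid steps, listed clockwise: north, east, south, west.
HEADINGS = [(0, 1), (1, 0), (0, -1), (-1, 0)]


@dataclass
class Robot:
    # Hypothetical starting state: cell (0, 0), facing north.
    x: int = 0
    y: int = 0
    heading: int = 0  # index into HEADINGS


def run(robot, commands):
    """Apply each command in order and return the robot."""
    for command in commands:
        if command == "move_forward":
            dx, dy = HEADINGS[robot.heading]
            robot.x += dx
            robot.y += dy
        elif command == "rotate_left":
            robot.heading = (robot.heading - 1) % 4
        elif command == "rotate_right":
            robot.heading = (robot.heading + 1) % 4
        else:
            raise ValueError(f"unknown command: {command}")
    return robot


# The six commands listed for the first level.
LEVEL_ONE = [
    "move_forward",
    "move_forward",
    "rotate_left",
    "move_forward",
    "rotate_right",
    "move_forward",
]

robot = run(Robot(), LEVEL_ONE)
print((robot.x, robot.y), robot.heading)  # (-1, 3) 0
```

Under these assumptions the robot ends three cells ahead and one cell to the left of where it started, facing its original direction.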

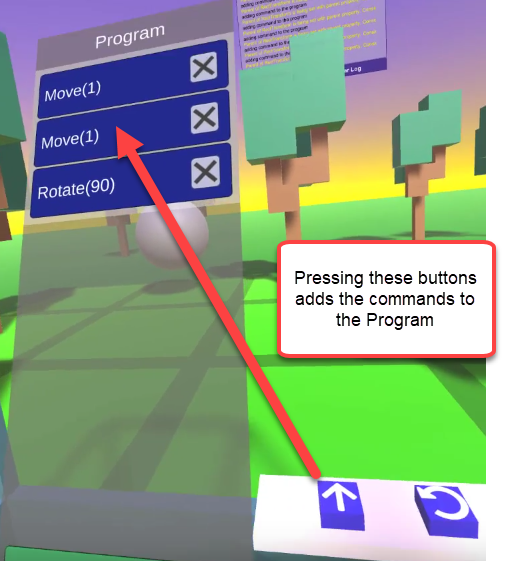

In order to create such a program, the user needs to press the buttons on their dashboard using their hand-tracked Mixed Reality controllers -- just like they would in a real vehicle!

Previously, we were using “laser pointers” for UI interactions. However, it felt more natural in VR to actually press buttons and use your hands to interact with the different elements, without relying on a "mouse cursor" analogy (which is what VR laser pointers are, in a way):

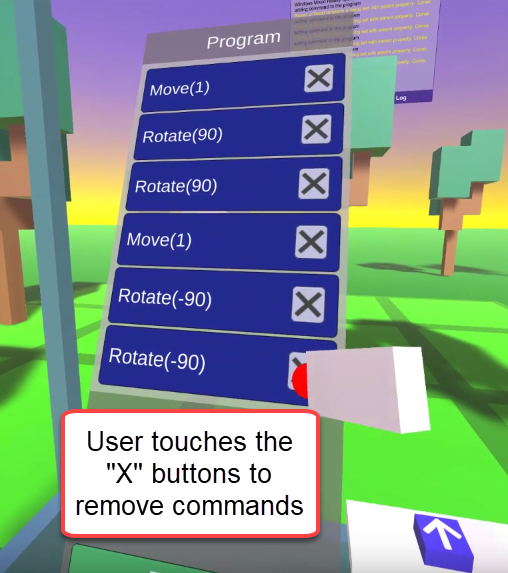

If the user makes a mistake, they can remove the command from the Program by touching the “X” buttons with their hand-tracked controller.

When the Program is ready to go, the user presses the “Execute” button in the Program panel, which will make the vehicle move and execute every command on the list.
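The Program panel behaves like an editable queue: buttons append commands, the “X” buttons delete one entry, and “Execute” runs whatever is left in order. A rough sketch of that behavior follows; the `Program` class and its method names are illustrative assumptions, not the application's actual code.

```python
class Program:
    """Hypothetical model of the Program panel."""

    def __init__(self):
        self.commands = []

    def add(self, command):
        # A dashboard button press appends a command to the list.
        self.commands.append(command)

    def remove_at(self, index):
        # Touching the "X" next to an entry removes just that entry.
        del self.commands[index]

    def execute(self, handler):
        # "Execute" passes every remaining command to the vehicle, in order.
        for command in list(self.commands):
            handler(command)


program = Program()
program.add("move_forward")
program.add("rotate_right")  # entered by mistake
program.add("rotate_left")
program.remove_at(1)         # the user touches the "X" on the second entry

executed = []
program.execute(executed.append)
print(executed)  # ['move_forward', 'rotate_left']
```

Here `handler` stands in for whatever actually moves the vehicle; passing `executed.append` simply records the commands that would run.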

Comfort Considerations

Simulator sickness (aka “motion sickness”) is a problem for many users. These are some of the considerations we’ve taken so far:

- We tested different speeds for the forward movement and opted for a slow one. In the future, we plan to allow the user to adjust the speed.

- While the current vehicle model is not final, it includes frames of reference (poles, in this case) which help prevent simulator sickness. The final vehicle will have plenty of these.

- Rotation movements are discrete – there is no smooth rotation. Smooth rotation is widely reported in VR games to cause more discomfort than larger, discrete turns.

- By using the controller's touchpad buttons, the user can "adjust their seat", slightly moving their position within the cabin.

- Probably the most important measure on this list: we are testing this application with different people in our office space, most of them new to VR, in order to gauge the usability and comfort of our prototype.
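The discrete rotation mentioned in the list above can be sketched as a heading that jumps by a fixed step, with no in-between frames. The function name, the 90-degree step, and the convention that 0 degrees means north with angles growing clockwise are assumptions made for illustration.

```python
def snap_rotate(heading_deg, direction, step_deg=90):
    """Return the new heading after one instant, discrete turn.

    Assumed convention: 0 = north, angles increase clockwise.
    """
    if direction not in ("left", "right"):
        raise ValueError(f"unknown direction: {direction}")
    sign = -1 if direction == "left" else 1
    # The heading changes in a single jump rather than being animated.
    return (heading_deg + sign * step_deg) % 360


heading = 0
heading = snap_rotate(heading, "left")
print(heading)  # 270
heading = snap_rotate(heading, "right")
print(heading)  # 0
```

A smooth turn would instead interpolate the heading over many frames, which is the kind of motion the prototype avoids.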

Next Steps

Since we started this project, having a playable level has been a main concern. If you followed our past updates, you know we hit a few roadblocks with our previous prototype. The current gameplay feels adequate, and finally having that first level complete is a big milestone for us!

The next thing we’ll implement is the ability to activate Boolean gates, so that we can develop a few more challenges that incorporate Boolean logic on top of the existing move and rotate commands.
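The design of these gates hasn't been described yet, so the following is purely a guess at one possible model: a gate whose open or closed state is computed from named switches with a Boolean rule. The `Gate` class and the switch names are hypothetical.

```python
from typing import Callable, Dict, List


class Gate:
    """Hypothetical level gate driven by a Boolean rule over switches."""

    def __init__(self, inputs: List[str], rule: Callable[..., bool]):
        self.inputs = inputs
        self.rule = rule

    def is_open(self, switches: Dict[str, bool]) -> bool:
        # Look up each input switch and apply the gate's rule to them.
        return self.rule(*(switches[name] for name in self.inputs))


and_gate = Gate(["a", "b"], lambda a, b: a and b)
or_gate = Gate(["a", "b"], lambda a, b: a or b)

switches = {"a": True, "b": False}
print(and_gate.is_open(switches))  # False
print(or_gate.is_open(switches))   # True
```

In a level built this way, the robot could only pass the AND gate after activating both switches, while the OR gate would open with either one.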