Challenge

Developing code that is efficient, scalable, and optimized for select targets across today's diverse computer architectures has been an ongoing challenge for developers. High-performance computing (HPC) has recently risen to prominence. It supports demanding workloads spanning technologies such as AI, visual computing, data analytics, and video analytics. HPC also supports emerging technologies that include hybrid cloud and edge computing.

Adapting code development to the individual characteristics of multiple architectural models while maintaining top performance levels often requires accommodating a mix of scalar, vector, matrix, and spatial (SVMS) architectures implemented in CPU, GPU, AI, and FPGA accelerators. Previously, accomplishing this objective required many different languages, libraries, and tools. Frequently, individual programming paths had to be built for each target platform. Code portability and reusability were handicapped by the need for separate software investments to optimize performance on different architectures.

Marcel Breyer, a PhD student at the University of Stuttgart was intrigued by this challenge and motivated to find a solution. He began identifying and applying the tools and technologies best suited to HPC use cases, including C++, OpenMP*, message-passing interface (MPI), CUDA*, SYCL*, and more. His work has provided deeper insights into code portability. It confirmed the effectiveness of the oneAPI programming model for solving many of the difficulties of ensuring cross-platform interoperability and programming heterogeneous systems.

Solution

Marcel's attention has been focused on improving software performance portability across differing hardware architectures, particularly those with GPUs. “I have been exploring the potential of SYCL for accomplishing this goal,” he said. “I enjoy writing code that performs well on different platforms—not code that ‘just works’ but performs poorly.”

The industry-wide ecosystem driving the oneAPI initiative and the toolkits and libraries available through the open source community proved to be a useful complement to his project objectives. The oneAPI industry initiative represents a collaborative ecosystem that empowers developers to unify programming across a mix of CPU and accelerator architectures. It focuses on achieving faster application performance, efficient development processes, and creating innovative techniques for friction-free development.

Build on Prior Projects

Beginning with his bachelor's thesis, Marcel developed ideas to make HPC code more portable by using CUDA. “For my master's thesis,” he said, “I used SYCL rather than CUDA. Plus, I added support for multi-GPU execution using MPI and developed a performance-portable k-nearest neighbors implementation using locality sensitive hashing (LSH) that supports multiple GPUs from different vendors. This approach is quite unique. I also rewrote the code to support the Intel® oneAPI DPC++/C++ Compiler and ComputeCpp*, besides hipSYCL.”

Development Notes and Enabling Technologies

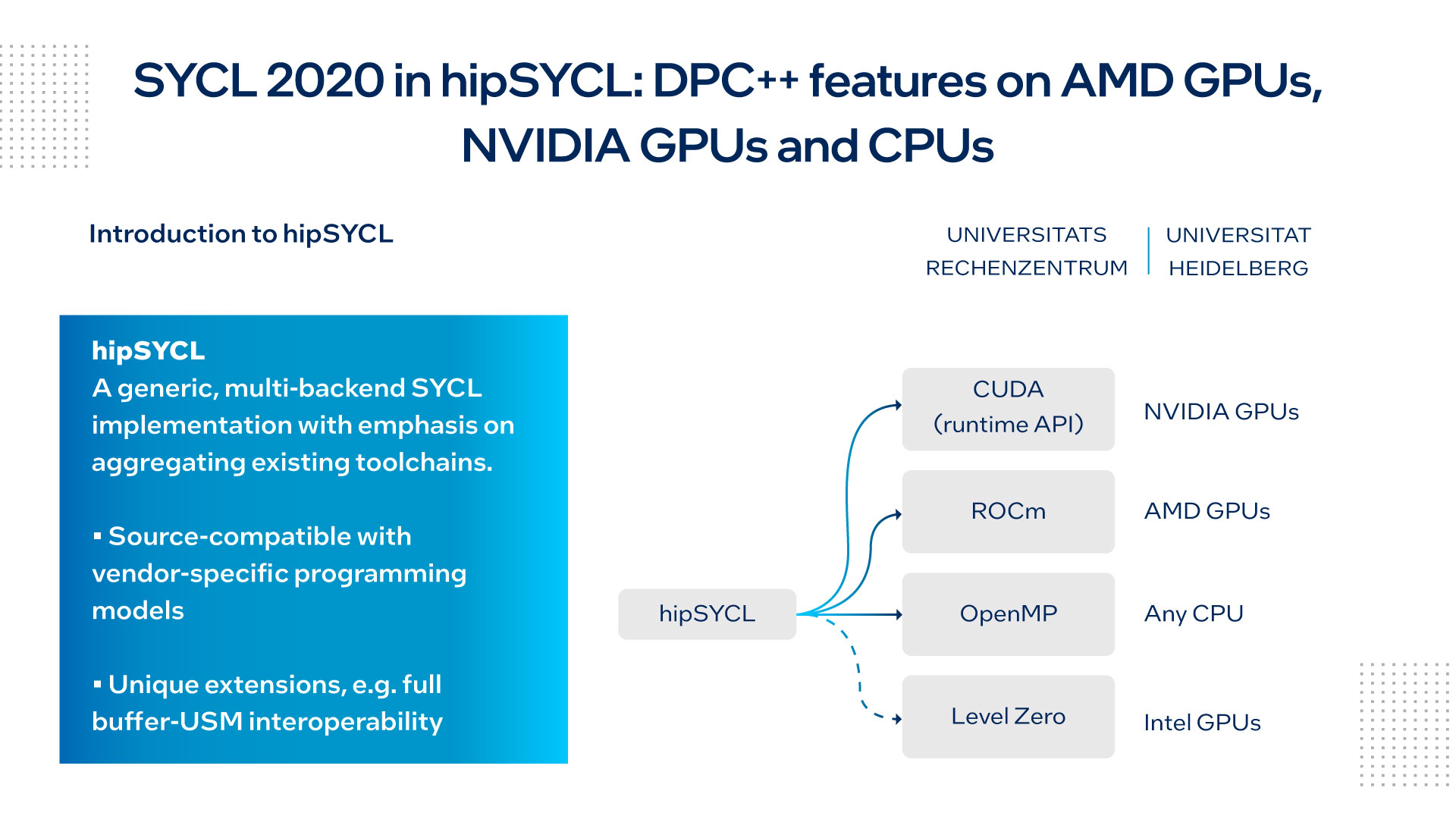

With strong source compatibility for vendor-specific programming models, hipSYCL has gained popularity among open source developers by providing support for CUDA intrinsics or optimized template libraries from Advanced Micro Devices (AMD*). It also offers a useful platform for experimenting with novel SYCL extensions for strengthening and enhancing the language.

Marcel noted, “At this time, hipSYCL hasn’t had support for multi-GPU using only one host process. Therefore, I had to implement the multi-GPU support using MPI (one MPI process per GPU). I discovered that each of the SYCL implementations behaved slightly differently. For example, the DPC++ compiler and ComputeCpp refused code that compiled without issues under hipSYCL.”

Marcel's research demonstrating support for GPUs from different vendors can be viewed on GitHub*: Distributed k-Nearest Neighbors Using Locality Sensitive Hashing and SYCL.

Marcel explained that the main computational tasks are performed on the GPUs. This includes creating hash functions and tables, and calculating the k-Nearest Neighbors. These tasks are evenly distributed among all available GPUs. CPU workloads are focused on auxiliary tasks, such as I/O operations and MPI communications.

Figure 1 provides a view of the toolchains involved in typical hipSYCL implementations.

Figure 1. Aggregate toolchains using hipSYCL

The Intel® DPC++ Compatibility Tool, a tool for streamlining CUDA code migration to DPC++ code (Figure 2), is available as part of the Intel® oneAPI Base Toolkit, which features a collection of useful compilers, libraries, analysis, and debug tools.

Figure 2. Intel DPC++ Compatibility Tool Use Flow

Insights for Other Developers

With the experience gained from his work in this area, Marcel offered this advice to other developers: “If multiple SYCL implementations are to be supported, start developing with the DPC++ compiler. This compiler incorporates more compile-time checks to catch potential bugs as early in the development process as possible.”

Marcel noted that all SYCL implementations perform roughly the same and implement roughly the same feature set for SYCL 1.2.1. “However,” he said, “if you are using CMake* with all three SYCL implementations, be sure that you are using version 3.20.0 or later of CMake and that you have package support for hipSYCL. With ComputeCpp, use it by means of an include.”

“The DPC++ compiler is preinstalled in the Intel® DevCloud,” Marcel continued, “which simplifies getting started developing. Also, I strongly recommend this very good (and free) resource: Data Parallel C++: Mastering DPC++ for Programming of Heterogeneous Systems Using C++ and SYCL.” The PDF version of the book can be downloaded from Open Access.

“The promise of oneAPI to deliver a single programming environment across multiple compute architectures is a vital tool to unlock the promise of heterogeneous computing. Here science communities can leverage investments in code development across multi-hardware platforms helping advance performance gains from different hardware targets and also making future hardware targets more accessible.”1

— Paul Calleja, director of Research Computing Service, University of Cambridge

Conclusion: Ongoing Research

When asked about the key successes for the project, Marcel replied, “I achieved reasonable performance on different hardware platforms with GPUs from different vendors. All three SYCL implementations showed performance gains. Also, I managed near-perfect parallel efficiency with up to eight NVIDIA* GTX 1080 Ti GPUs.”

Marcel identified specific areas for ongoing research and work:

- Include other distance metrics other than the Euclidean distance

- Develop more variants of the base LSH algorithm

- Develop other types of LSH functions

- Find a more efficient implementation for creating the entropy-based hash function

- Investigate performance on multi-node systems

Marcel Breyer's breakthrough technology advances are progressing on several fronts involving hipSYCL and oneAPI. Research and discoveries originate from the oneAPI Academic Center of Excellence at the Heidelberg University Computing Centre. They are part of a cooperative, worldwide initiative within the open source community to build an effective open-programming model for heterogeneous computer architectures.

Jeff McVeigh, Intel vice president of Datacenter XPU Products and Solutions commented, “oneAPI is a true cross-industry initiative that seeks to simplify development of diverse workloads by streamlining code reuse across a variety of architectures through an open and collaborative approach. URZ's research helps to deliver on the cross-vendor promise of oneAPI by expanding advanced DPC++ application support to other architectures.”2

Resources and Recommendations

Distributed k-Nearest Neighbors Using Locality-Sensitive Hashing and SYCL

Find out more on the Intel® DevMesh project page.

hipSYCL on GitHub

Explore the underlying code and long-term potential for this implementation.

oneAPI Centers of Excellence

Learn more about helping the oneAPI ecosystem grow and prosper.

Intel DevCloud

This site offers free access to code from home with cutting-edge Intel® CPUs, GPUs, FPGAs, and preinstalled Intel® oneAPI Toolkits including tools, frameworks, and libraries. Sign up for this free resource and get started in minutes.

2 OneAPI Academic Center of Excellence Established at the Heidelberg University Computing Center. URZ Newsroom. September 2020.