Introduction

The Intel® Intelligent Storage Library (Intel ISA-L) includes support for erasure coding. This article describes how the use of erasure coding can benefit a storage application and explains the Intel ISA-L implementation, which uses Reed Solomon error correction (RS EC). The attached sample application demonstrates the efficiency of RS EC on Intel Architecture CPUs, showing how with Intel ISA-L erasure coding, error correction can be performed on the CPU sufficiently fast enough to not induce a bottleneck or add substantial latency to storage devices. To download the sample application, click on the button at the top of this article.

Historically, RAID storage arrays protected against data loss through mirroring (RAID 1) or calculating parity (RAID 5), which required additional storage and resulted in slower throughput. To address the problems with RAID 5, I/O Host Bus Adapters (HBAs) were given dedicated circuitry to offload parity computation instead of performing the computations on the CPU. However, with the advent of low-latency NVMe storage devices connected directly to the PCIe bus, this offload of parity calculations can no longer be done in the data path, which eliminates any advantage of offloading parity calculations. In addition, the continuing march of Moore’s Law has reduced the computational cost of parity calculations to fractions of a CPU cycle per byte, meaning a single Xeon core can compute tens of gigabytes per second.

The largest change to storage architecture has been driven by the emergence datacenter-scale storage solutions. As applications have scaled from server to rack to datacenter scale, so have the demands on the storage infrastructure that support them. Without access to data, a datacenter is nothing more than an elaborate space heater, so storage availability and fault tolerance have become the most crucial challenges in the of the data center. For this reason, datacenter scale systems are designed for continuous operation and at least 99.999% availability (about five minutes of downtime per year). To achieve this goal, two techniques are pervasive: multi-replication (typically triple-replication) and Reed-Solomon erasure codes (RS EC), both of which ensure there are always copies of the data available despite single or dual failures.

Triple replication has the advantage of simplicity. It is computationally easy to maintain three copies of data, and this technique is used in scalable file systems like Hadoop HDFS, but it does have a drawback: it requires that a system’s raw storage capacity is (at least) 3X the design capacity. By contrast, RS EC has historically been computationally intensive, but is far more flexible and space efficient, enabling raw capacity to be only 1.5X the design capacity. For storage applications requiring large data sets, this difference in the underlying availability algorithm can translate into huge differences in capital and operating expenditures.

In this article, we demonstrate that RS EC efficiency on Intel Architecture CPUs has improved to become a viable choice even for directly PCIe-connected, high-throughput, low latency media like NVMe devices. The Intel ISA-L implementation of RS will be used to demonstrate that it is now possible to keep up with current storage hardware throughput requirements by doing RS error correction in software instead of offloading to dedicated hardware or using drive-based parity computations

Hardware and Software Configuration

| CPU and Chipset | Intel® Xeon® processor E5-2699 v4, 2.2 GHz

|

| Platform | Platform: Intel® Server System R2000WT product family (code-named Wildcat Pass)

|

| Memory | Memory size: 256 GB (16X16 GB) DDR4 2133P Brand/model: Micron – MTA36ASF2G72PZ2GATESIG |

| Storage | Brand and model: 1 TB Western Digital (WD1002FAEX) Intel® SSD Data Center P3700 Series (SSDPEDMD400G4) |

| Operating System | Ubuntu* 16.04 LTS (Xenial Xerus) Linux* kernel 4.4.0-21-generic |

Note: Depending on the platform capability, Intel ISA-L can run on various Intel® processor families. Improvements are obtained by speeding up the computations through the use of the following instruction sets:

- Intel® Advanced Encryption Standard New Instruction (Intel® AES-NI)

- Intel® Streaming SIMD Extensions (Intel® SSE)

- Intel® Advanced Vector Extensions (Intel® AVX)

- Intel® Advanced Vector Extensions 2 (Intel® AVX2)

- Intel Advanced Vector Extensions 512 (Intel AVX 512)

Why Use Intel® Intelligent Storage Acceleration Library?

Intel ISA-L has the capabilities to do RS EC in software instead of offloading to dedicated hardware or using drive-based parity computations. This capability is well suited for high throughput storage applications. This article has the sample application that simulates a RS EC scenario, using a set of memory buffers instead of discrete I/O devices. Memory buffers are used for two reasons: first, to put the focus on the software overhead instead of the media latency involved in I/Os; and second, to allow this example to be run on any system configuration.

The sample application creates up to 24 different buffers in memory to store data; a larger data set of up to 256 MB of data is distributed across these buffers. ISA-L’s RS algorithm implementation is used to calculate error correction codes that are stored and distributed with the data across the buffers. Storage times, i.e., the time to calculate the RS EC are measured and logged. To simulate failure, up to two memory buffers are made inaccessible and the program demonstrates that the data can be completely and correctly recovered from the remaining buffers. Recovery time is also measured and logged. The approach is compared to published results from parity-based (RAID 5) systems.

Prerequisites

Intel ISA-L supports Linux and Microsoft Windows*. A full list of prerequisite packages can be found here.

Building the sample application (for Linux):

- Install the dependencies:

- a c++14 compliant c++ compiler

- cmake >= 3.1

- git

- autogen

- autoconf

- automake

- yasm and/or nasm

- libtool

- boost's "Program Options" library and headers

>sudo apt-get update >sudo apt-get install gcc g++ make cmake git autogen autoconf automake yasm nasm libtool libboost-all-dev - Also needed is the latest versions of ISA-L. The get_libs.bash script can be used to get this. The script will download two library from their official GitHub* repository, build them, and then install them in the `./libs/usr` directory.

>`bash ./libs/get_libs.bash` - Build from the `ex2` directory:

- `mkdir <build-dir>`

- `cd <build-dir>`

- `cmake -DCMAKE_BUILD_TYPE=Release $OLDPWD`

- `make`

Getting Started with the Sample Application

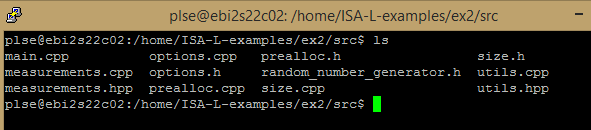

The sample application contains the following:

This example goes through the following steps at a high-level work flow and only focuses on those routines demonstrating the whole data recovery using the Intel® ISA-L RS implementation.

Setup

- In the “main.cpp” file, the program parses the command line and displays the options that are going to be performed.

int main(int argc, char* argv[]) { options options = options::parse(argc, argv);Parsing the option of the command line

- In the options.cpp file, the program parses the command line arguments using `options::parse()`.

Performing storage and recovery

- In the “main.cpp” file, the program performs as many storage/recovery cycles (up to 10,000) as it can until the aggregate storage or recovery time exceeds 1 seconds in a while loop.

- Then it calls the

encode_data()to create the error correction codes for the data.// Create the error correction codes, and store them alongside the data. std::vector encode_data(int m, int k, uint8_t** sources, int len, prealloc_encode prealloc) { // Generate encode matrix gf_gen_cauchy1_matrix(prealloc.encode_matrix.data(), m, k); // Generates the expanded tables needed for fast encoding ec_init_tables(k, m - k, &prealloc.encode_matrix[k * k], prealloc.table.data()); // Actually generated the error correction codes ec_encode_data( len, k, m - k, prealloc.table.data(), (uint8_t**)sources, (uint8_t**)&sources[k]); return prealloc.encode_matrix; } - Out of the 24 buffers for source data, the

iteration()function randomly picks 2 buffers to contain the erroneous_data.// We randomly choose up to 2 buffers to "lose", and return the indexes of those buffers. // Note that we can lose both part of the data or part of the error correction codes indifferently. std::vector generate_errors(int m, int errors_count) { random_number_generator idx_generator(0, m - 1); std::vector errors{idx_generator.get(), 0}; if (errors_count == 2) { do { errors[1] = idx_generator.get(); } while (errors[1] == errors[0]); std::sort(errors.begin(), errors.end()); } return errors; } // We arrange a new array of buffers that skip the ones we "lost" uint8_t** create_erroneous_data(int k, uint8_t** source_data, std::vector errors) { uint8_t** erroneous_data; erroneous_data = (uint8_t**)malloc(k * sizeof(uint8_t*)); for (int i = 0, r = 0; i < k; ++i, ++r) { while (std::find(errors.cbegin(), errors.cend(), r) != errors.cend()) ++r; for (int j = 0; j < k; j++) { erroneous_data[i] = source_data[r]; } } return erroneous_data; } - Finally, the

iteration()function calls therecover_data()function to recover the data and then compare with the original data.// Recover the contents of the "lost" buffers // - m : the total number of buffer, containint both the source data and the error // correction codes // - k : the number of buffer that contain the source data // - erroneous_data : the original buffers without the ones we "lost" // - errors : the indexes of the buffers we "lost" // - encode_matrix : the matrix used to generate the error correction codes // - len : the length (in bytes) of each buffer // Return the recovered "lost" buffers uint8_t** recover_data( int m, int k, uint8_t** erroneous_data, const std::vector& errors, const std::vector& encode_matrix, int len, prealloc_recover prealloc) { for (int i = 0, r = 0; i < k; ++i, ++r) { while (std::find(errors.cbegin(), errors.cend(), r) != errors.cend()) ++r; for (int j = 0; j < k; j++) { prealloc.errors_matrix[k * i + j] = encode_matrix[k * r + j]; } } gf_invert_matrix(prealloc.errors_matrix.data(), prealloc.invert_matrix.data(), k); for (int e = 0; e < errors.size(); ++e) { int idx = errors[e]; if (idx < k) // We lost one of the buffers containing the data { for (int j = 0; j < k; j++) { prealloc.decode_matrix[k * e + j] = prealloc.invert_matrix[k * idx + j]; } } else // We lost one of the buffer containing the error correction codes { for (int i = 0; i < k; i++) { uint8_t s = 0; for (int j = 0; j < k; j++) s ^= gf_mul(prealloc.invert_matrix[j * k + i], encode_matrix[k * idx + j]); prealloc.decode_matrix[k * e + i] = s; } } } ec_init_tables(k, m - k, prealloc.decode_matrix.data(), prealloc.table.data()); ec_encode_data(len, k, (m - k), prealloc.table.data(), erroneous_data, prealloc.decoding); return prealloc.decoding; } - Once the recovery is completed, the program displays the average storage and recovery time.

Execute the sample application

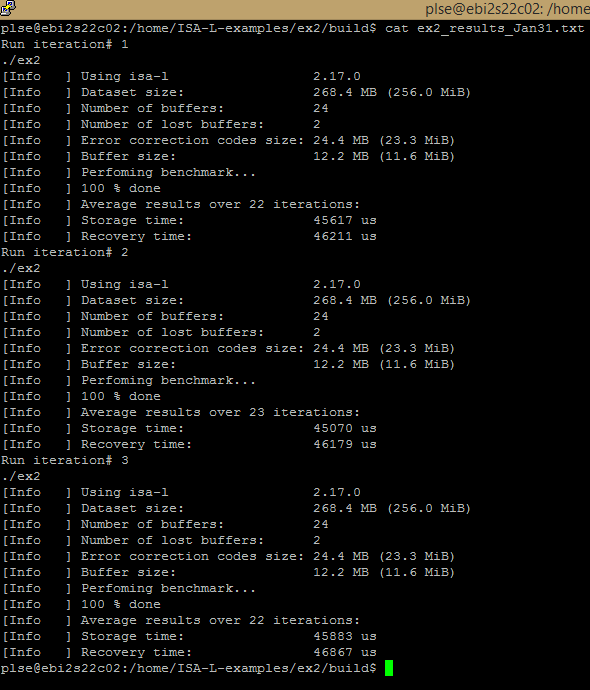

In this example, the program runs using 24 buffers, and 2 of those buffers are lost. Therefore, 2 buffers are used to hold the error correction codes, and the other 22 are holding the data.

Run

From the ex2 directory:

cd <build-bir>/ex2

./ex2 --helpUsage

Usage: ./ex2 [--help] [--data-size <size>] [--buffer-count <n>] [--lost-buffers <n>]:

--help display this message

--data-size size (=256MiB) the amount of data to be distributed among the buffers (size <=256 MiB)

--buffer-count n (=24) the number of buffers to be distributed data across (n <= 24)

--lost-buffers n (=2) the number of buffers that will get inaccessible (n <= 2)

Sizes must be formatted the following way:

- a number

- zero or more white space

- zero or one prefix (k, M)

- zero or one i character (note: 1kiB == 1024B, 1kB == 1000B)

- the character B

For example,

- 1KB

- "128 MiB"

- 42B

Running the example

>./ex2

From the output, the program displays the results of the storage and recovery time applying the Intel ISA-L RS method. With the Intel® Xeon® processor server, the RS computation can be done efficiently and quickly as demonstrated in the example runs.

Notes: 2x Intel Xeon processor E5-2699 v4 (HT off), Intel Speed Step technology enabled, Intel Turbo Boost Technology disabled, 16x16GB DDR4 2133 MT/s, 1 DIMM per channel, Ubuntu 16.04 LTS, Linux kernel 4.4.0-21-generic, 1 TB Western Digital (WD1002FAEX), 1 Intel SSD P3700 Series (SSDPEDMD400G4), 22x per CPU socket. Performance measured by the written sample application in this article.

Conclusion

As demonstrated in this quick tutorial, developers have a how-to guide to incorporate the Intel ISA-L RS EC feature into their storage applications along with the full build instructions. The example shows how to prepare the data and recover it, which can help developers adopt the technology. Intel ISA-L provides the library for storage developers for quick adoption to your specific application run on Intel® architecture.

Other Useful Links

Algorithms Built for Speed - A Brief Introduction to Intel® Intelligent Storage Acceleration Library (ISA-L) - BrightTALK webinar

Additional Intel ISA-L Code Samples

- Accelerate Data Deduplication Using Chunking and Hashing Functions

- Intel® ISA-L: Semi-Dynamic Compression Algorithms

- Intel® ISA-L: Cryptographic Hashes for Cloud Storage

Authors

- Thai Le is a software engineer who focuses on cloud computing and performance computing analysis at Intel.

- Steven Briscoe is an application engineer focusing on cloud computing within the Software Services Group at Intel Corporation (UK).

- Jonathan Stern is an applications engineer and solutions architect who works to support storage acceleration software at Intel.