Introduction

Monster Hunter World: Iceborne* is a massive expansion for Monster Hunter World*, the console and PC title that is Capcom’s best-selling game in history. Unlike ordinary downloadable content (DLC), Iceborne was developed as if it were a brand-new title, to embody the atmosphere of the new “Iceborne” world. Along with new visual expressions for the new world, performance had to be optimized on both high-end CPUs and Intel® integrated GPUs to satisfy a broad range of end users. CAPCOM uses the proprietary “World Engine”, derived from the “MT FRAMEWORK” originally used for the Monster Hunter series, which permitted a wide variety of optimizations. This article covers how we analyzed performance bottlenecks in both high-end and Intel® integrated GPU environments, then introduces practical optimization methods that can be applied to a broader range of titles.

Initial Performance Analysis

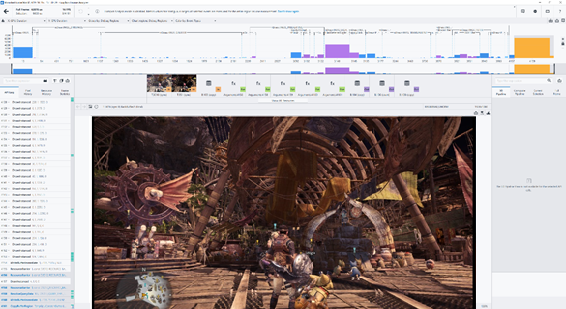

We mainly used Intel® Graphics Performance Analyzers (Intel® GPA) for Intel® Graphics analysis (Figure 1), and Intel® VTune™ Profiler and GPUView, a free profiler that comes with the Windows* Assessment and Deployment Kit (Windows* ADK), for CPU and GPU analysis.

First, we chose the Intel® Core™ i7-1065G7 Processor with Intel® Iris® Plus Graphics as the mobile target system. The “Null Hardware” experiment in Intel® GPA System Analyzer can determine whether the current bottleneck is on the CPU or the GPU. In this case, it was clearly a GPU bottleneck, so we focused on the Frame Analyzer results for the next step.

Figure 1 Intel® GPA Frame Analyzer view, Hotspot mode

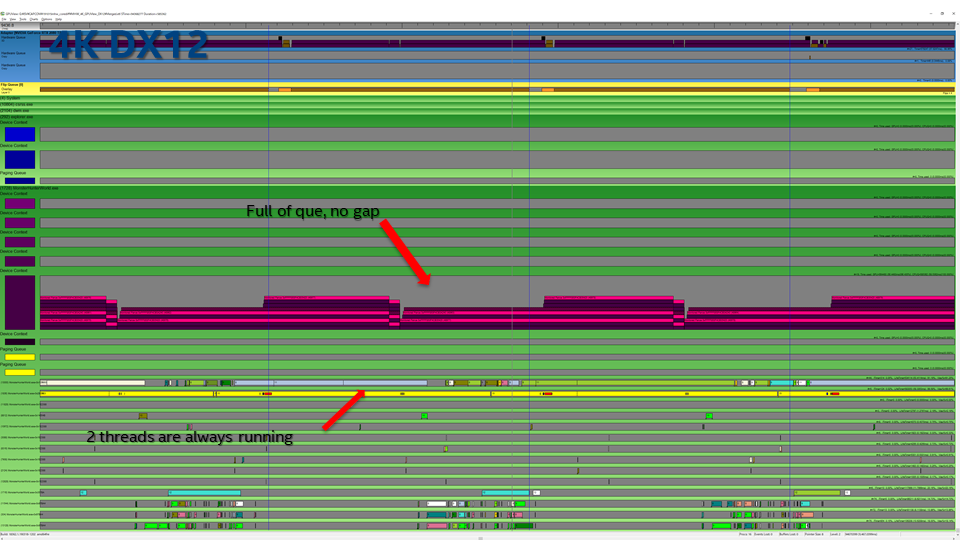

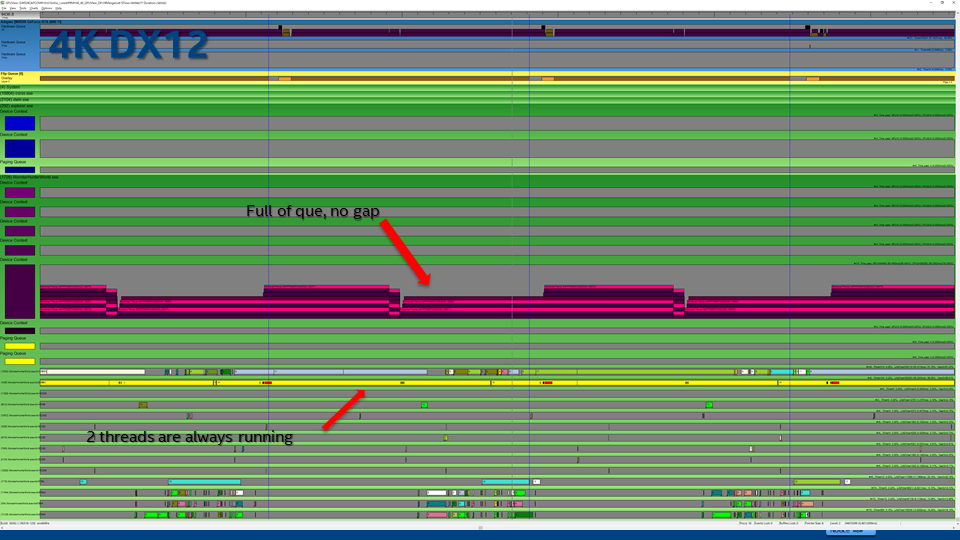

Second, we chose the Intel® Core™ i9-9900K Processor with GeForce RTX* 2080 Ti as the high-end target system. For this environment, we chose GPUView as the initial analysis tool. Although Monster Hunter World: Iceborne* was DLC, CAPCOM enabled DirectX* 12 support in this version for the first time in the Monster Hunter World* series. We therefore analyzed both the DX11 and DX12 APIs at 2K (1920x1080) and 4K (3840x2160) resolutions to cover several user scenarios. Initial assessments showed that DX12 outperformed DX11 at 2K, while DX11 outperformed DX12 at 4K. GPUView results also indicated a CPU bottleneck for both DX11 and DX12 at 2K (Figure 2) and a GPU bottleneck for both DX11 and DX12 at 4K (Figure 3). Based on those results, we focused on Intel® VTune™ Profiler analysis to solve the CPU bottleneck as the next step.

Figure 2 2K DX12, gaps in device context, CPU bottleneck

Figure 3 4K DX12, full queue in device context, GPU bottleneck

Geometry Transformation

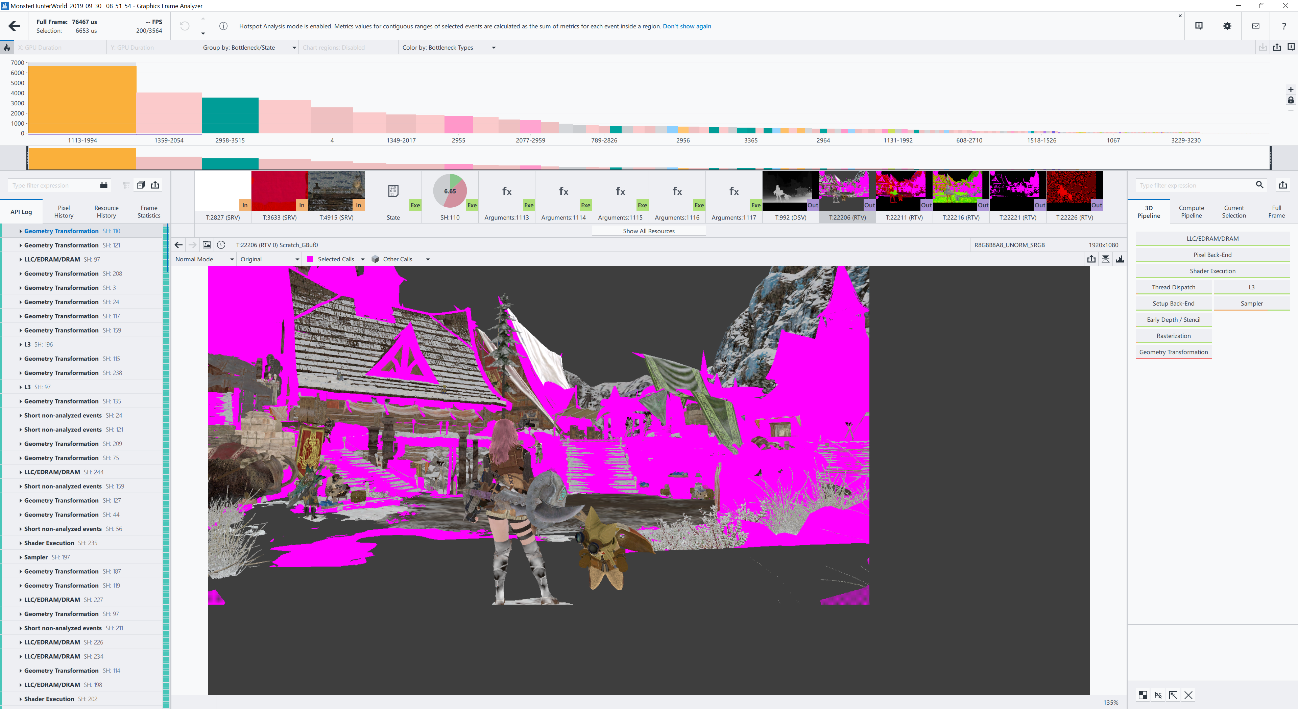

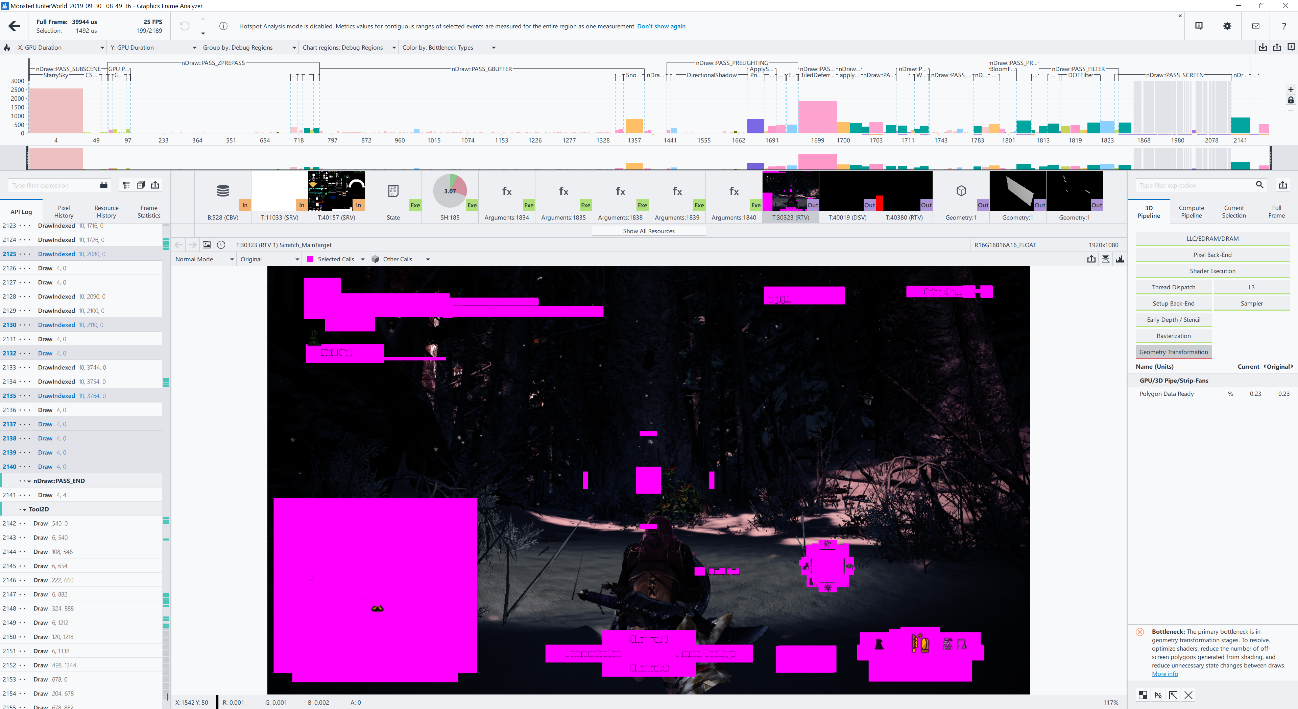

Because this was DLC, we initially focused on evaluating the various new stages, since they could introduce new bottlenecks through new expressions. However, in most cases the top hotspots were geometry transformation for architecture, terrain, and objects, the same tendency as in the original Monster Hunter World* (Figure 4). To address this, CAPCOM had implemented an additional graphics setting, “Max LOD Level”, which applies a more aggressive level of detail (LOD). Selecting “-1” for this option mitigates the geometry bottleneck and improves performance. However, this setting had already been included in the original version, so we needed to look for other optimization items.

Figure 4 Geometry bottleneck for architecture

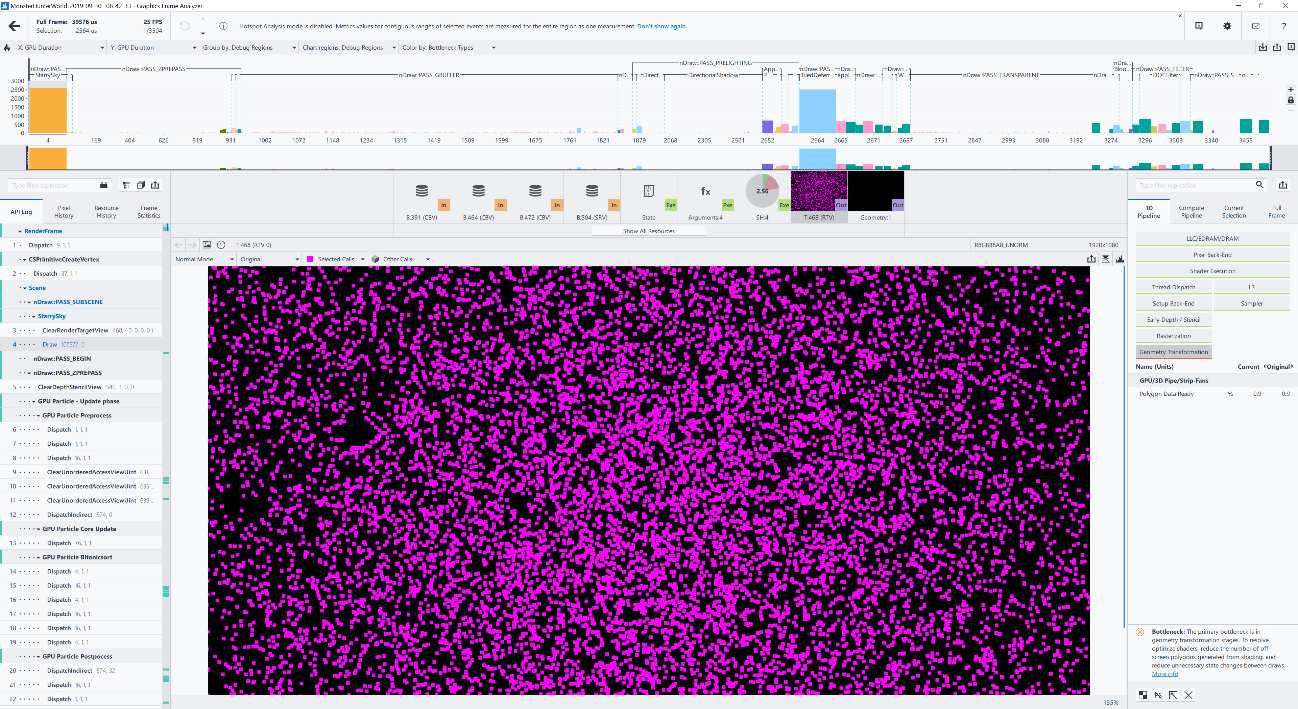

In this case, we found a new geometry bottleneck caused by an additional expression: “StarrySky”, which renders a full set of stars in the skybox. To keep performance consistent between day and night, this draw call is always issued regardless of the time of day, which caused a new geometry bottleneck (Figure 5). To solve this, CAPCOM changed the drawing method from a geometry shader to an instanced vertex buffer built by a compute shader. This improved performance while keeping the quality of the stars intact.

Figure 5 Geometry bottleneck for star

Graphics format

We also found a new bottleneck: one of the buffers after the lighting pass used R16G16B16A16_FLOAT, which is too expensive for an SDR environment (Figure 6). CAPCOM changed this to a lighter format, R11G11B10_FLOAT, which mitigated the bottleneck.

Figure 6 Expensive graphics format
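To make the cost difference concrete, the following is a minimal sketch that compares the memory footprint of one full-screen buffer in the two formats. The helper name `RenderTargetBytes` and the constants are hypothetical and are not part of World Engine; the sketch assumes a single-sample target with no mip levels and ignores any driver padding or compression.

```cpp
#include <cassert>
#include <cstdint>

// Bits per pixel of the two DXGI formats named in the text.
// R16G16B16A16_FLOAT stores four 16-bit channels.
// R11G11B10_FLOAT packs 11 + 11 + 10 bits and has no alpha channel.
constexpr std::uint32_t kBitsRGBA16F = 16 * 4;
constexpr std::uint32_t kBitsR11G11B10F = 11 + 11 + 10;

// Hypothetical helper: size in bytes of one render target,
// assuming no MSAA, no mip chain, and no alignment padding.
inline std::uint64_t RenderTargetBytes(std::uint32_t width,
                                       std::uint32_t height,
                                       std::uint32_t bits_per_pixel) {
    assert(bits_per_pixel % 8 == 0);  // both formats above are byte-aligned
    return static_cast<std::uint64_t>(width) * height * (bits_per_pixel / 8);
}
```

Under these assumptions, a 1920x1080 buffer takes 16,588,800 bytes in R16G16B16A16_FLOAT and 8,294,400 bytes in R11G11B10_FLOAT, so every pass that writes or reads the buffer moves half as much data. The trade-off is the loss of the alpha channel and of some precision per channel.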

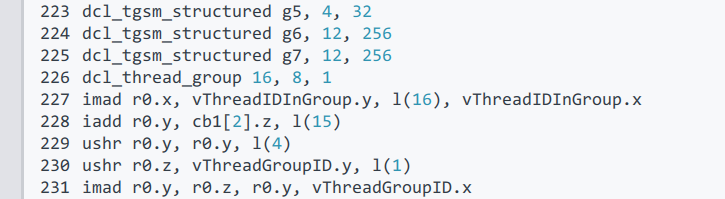

Compute Shader SIMD usage

Like other modern game engines, “World Engine” uses compute shaders for various purposes, such as lighting. We saw a thread dispatch bottleneck in TiledDeferredLighting. The compute shader code can be inspected by choosing the Shader column. In the initial assessment, dcl_thread_group was 16,8,1 on DX12 but 16,16,1 on DX11 (Figure 7). Generally, a thread group size of 8x8 performs well on Gen11. CAPCOM chose an 8x16 dispatch with SIMD16 for both the DX11 and DX12 environments, which improved EU occupancy to 93% on DX12 and 98% on DX11.

Figure 7 Thread Group Size for Compute Shader SIMD usage
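As a rough illustration of how thread group size relates to SIMD width, the sketch below counts the invocations in a group and the number of EU hardware threads needed to run it, assuming each hardware thread executes `simd_width` invocations. The `ThreadGroup` struct and function names are hypothetical; actual occupancy also depends on register and shared memory usage, which this arithmetic does not model.

```cpp
#include <cassert>
#include <cstdint>

// Hypothetical mirror of the three values in dcl_thread_group.
struct ThreadGroup {
    std::uint32_t x, y, z;
};

// Total shader invocations in one thread group.
inline std::uint32_t Invocations(ThreadGroup g) {
    return g.x * g.y * g.z;
}

// EU hardware threads needed for one group when the shader is compiled
// at the given SIMD width (for example 8, 16, or 32); rounds up.
inline std::uint32_t HardwareThreadsPerGroup(ThreadGroup g,
                                             std::uint32_t simd_width) {
    assert(simd_width > 0);
    return (Invocations(g) + simd_width - 1) / simd_width;
}
```

With SIMD16, the original DX11 group of 16x16x1 needs 16 hardware threads per group, while 16x8x1 and 8x16x1 each need 8, and 8x8x1 needs 4. Smaller groups give the thread dispatcher finer-grained units to place on the EUs, which is one plausible reason the smaller sizes reached higher occupancy here.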

Eliminate debug function

Only in the DX12 environment, the full-screen finalizing phase was detected as a top hotspot with an unknown cause. The calls responsible were ResolveQueryData with ‘D3D12_QUERY_TYPE_TIMESTAMP’ and WriteBufferImmediate (Figure 8).

CAPCOM confirmed that these calls are partly debug features and can mostly be eliminated in the production version. However, some of them are still needed in production, because they provide the timestamps used for dynamic resolution. ResolveQueryData was a potential bottleneck, particularly on Intel® Graphics, but the latest graphics driver resolved this. Even so, we advise removing timestamp queries from production code wherever possible, because they still cost performance.

Figure 8 Hotspot by ResolveQueryData

Thread management

For the high-end CPU/GPU environment analysis, as mentioned above, we used a combination of GPUView and Intel® VTune™ Profiler. The initial GPUView log showed that even in the 4K environment, which is GPU-bound, CPU threads other than the main thread were kept running at all times: one thread on DX12 and two threads on DX11 (Figure 9).

Figure 9 GPUView, 4K DX12 result

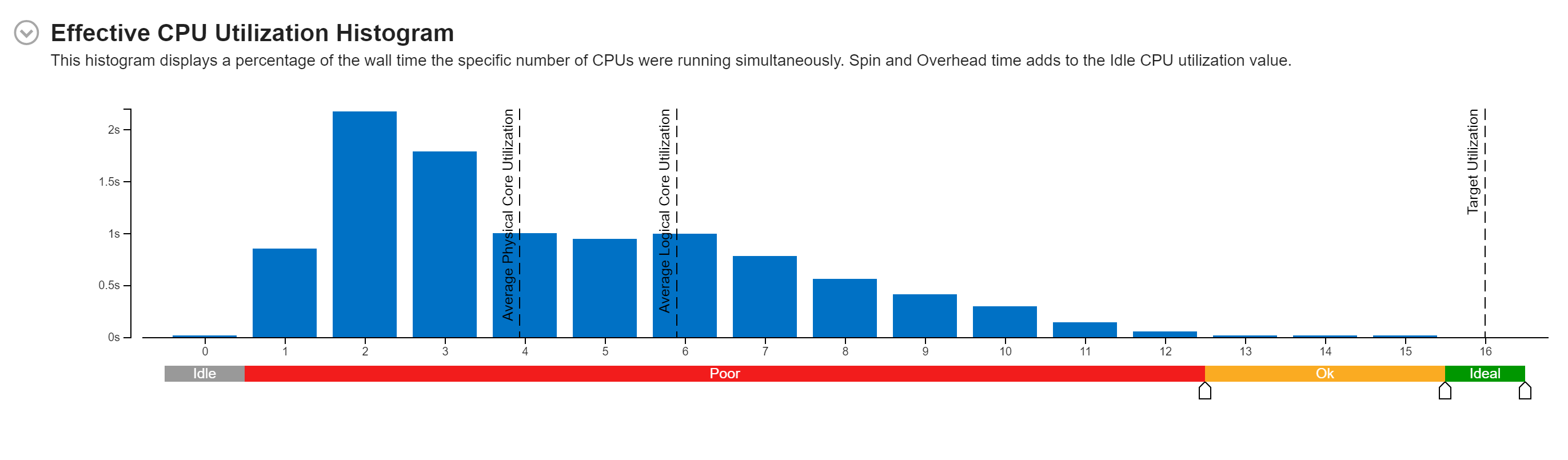

The Effective CPU Utilization Histogram in Intel® VTune™ Profiler also indicated low parallelism. For example, the maximum number of CPU cores in use at 2K DX11 was 2, even though the system has 16 logical cores (Figure 10).

Figure 10 Intel® VTune™ Profiler’s Effective CPU Utilization Histogram

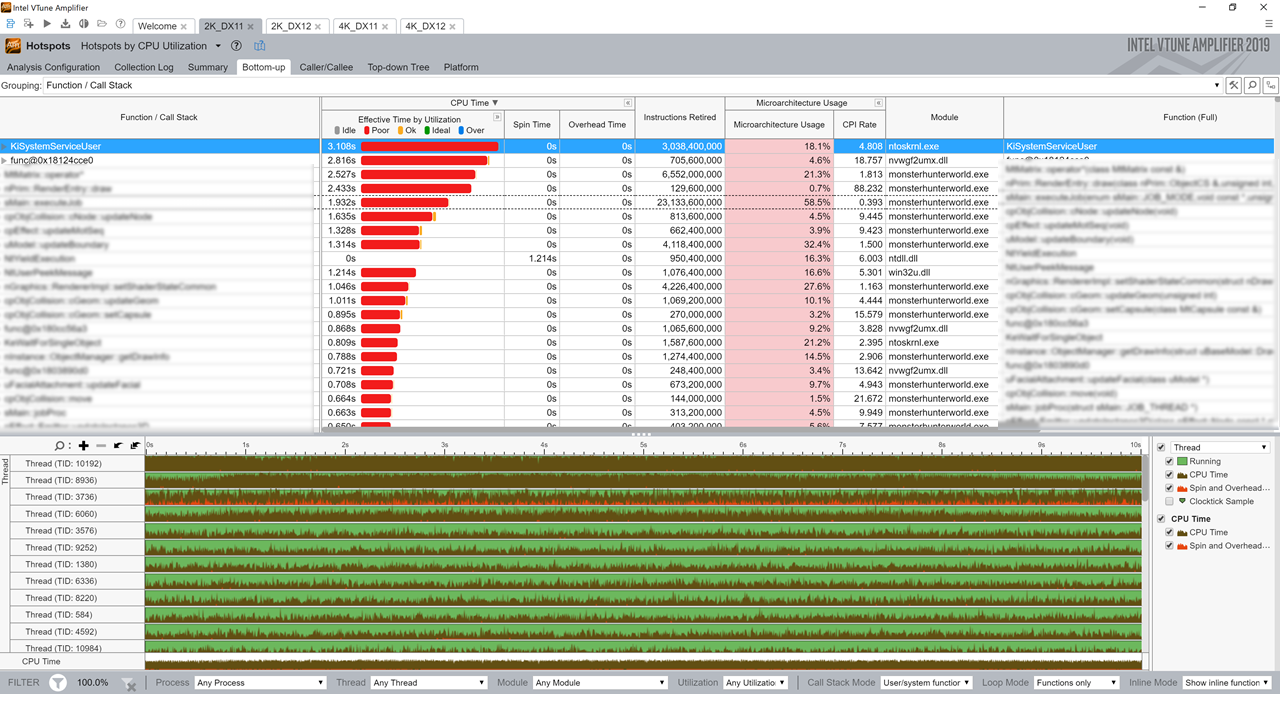

In the Bottom-up view of Intel® VTune™ Profiler (Figure 11), one of the main functions in monsterhunterworld.exe and KiSystemServiceUser in ntoskrnl.exe were always the top hotspots, except in the DX12 4K environment. In the DX12 4K environment, Cfence::GetCompletedValue in d3d12.dll was the top hotspot.

Figure 11 Intel® VTune™ Profiler’s Bottom-up view

Based on those results, CAPCOM made the following fixes and improvements.

- Identified one cause of the low parallelism as an unintended infinite loop in the window procedure.

- To remove the CPU idle phase in the DX12 environment, changed the wait for GPU completion from a spinlock (ID3D12Fence::GetCompletedValue) to a signal-based wait (WaitForSingleObject).

- Refactored the DX12 multithreading algorithm. Specifically, the intermediate drawing commands accumulated in the rendering system are divided by the number of CPU cores (a maximum of 8 on a 10-core system), and each thread executes its own portion.

- Modified dynamic rendering thread priority.

- Inserted _mm_pause into spinlock loops.
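The difference between the two waiting strategies in the list above can be sketched in portable C++. `MockFence` is a hypothetical stand-in and not CAPCOM's code: its atomic counter plays the role of the fence value, `WaitSpin` mirrors polling GetCompletedValue with `_mm_pause` in the loop, and `WaitBlocking` mirrors a signal-based wait. In a real DX12 renderer the blocking path would typically use ID3D12Fence::SetEventOnCompletion followed by WaitForSingleObject; a condition variable is used here only so that the sketch runs without a GPU.

```cpp
#include <atomic>
#include <cassert>
#include <condition_variable>
#include <cstdint>
#include <mutex>
#include <thread>

#if defined(__x86_64__) || defined(__i386__) || defined(_M_X64) || defined(_M_IX86)
#include <immintrin.h>
// Tells the core that this is a spin-wait loop.
inline void CpuRelax() { _mm_pause(); }
#else
// Fallback for non-x86 targets, where _mm_pause is unavailable.
inline void CpuRelax() { std::this_thread::yield(); }
#endif

// Hypothetical stand-in for a GPU fence: a monotonically increasing counter.
class MockFence {
public:
    std::uint64_t GetCompletedValue() const {
        return value_.load(std::memory_order_acquire);
    }

    // Called by the producer ("GPU") when work up to `value` has finished.
    void Signal(std::uint64_t value) {
        {
            std::lock_guard<std::mutex> lock(mutex_);
            assert(value >= value_.load(std::memory_order_relaxed));
            value_.store(value, std::memory_order_release);
        }
        cv_.notify_all();
    }

    // Polling wait: keeps a CPU core busy until the value is reached.
    void WaitSpin(std::uint64_t target) const {
        while (GetCompletedValue() < target) {
            CpuRelax();
        }
    }

    // Blocking wait: the thread sleeps until Signal wakes it.
    void WaitBlocking(std::uint64_t target) {
        std::unique_lock<std::mutex> lock(mutex_);
        cv_.wait(lock, [&] {
            return value_.load(std::memory_order_acquire) >= target;
        });
    }

private:
    std::atomic<std::uint64_t> value_{0};
    std::mutex mutex_;
    std::condition_variable cv_;
};
```

A caller that uses `WaitSpin` appears in a profiler as time spent inside the polling call, whereas a caller that uses `WaitBlocking` yields its core to other threads until the fence is signaled. Where a short spin is still preferable, the pause hint reduces the cost of the loop on cores that share execution resources.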

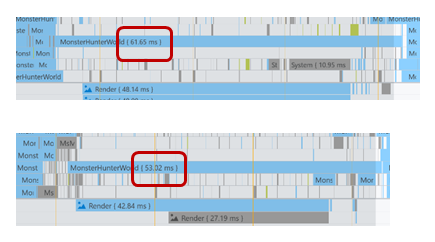

Thanks to those improvements, the main thread became shorter by around 10 ms per frame (Figure 12).

Figure 12 Main thread improvement

Conclusion

Thanks to these optimization efforts, performance on Intel® Iris® Plus Graphics improved enough to make the game more playable at 720p resolution. We also anticipate that it will be playable at 1080p on Intel® Xe Graphics Architecture (code name Tiger Lake). At the same time, performance improved on high-end platforms: it is over 10% better than the initial version, and thanks to the refactored DX12 multithreading algorithm, we also saw a performance benefit of over 10% on 10-core systems (Intel® Core™ i9-10900K Processor) compared with 8-core systems (Intel® Core™ i9-9900K Processor).

As a recap, the following are the major performance optimizations:

- Improving geometry transformation with LODs and other methods

- Reconsidering the graphics format

- Reconsidering compute shader SIMD usage

- Eliminating debug functions

- Refactoring thread management

Monster Hunter World* is a so-called “evergreen” title that will be played by more gamers worldwide in the future. We are happy to have helped make this title more enjoyable to play in many environments.

About the author

Mikio Sakemoto is an application engineer in the Intel® Client XPU Products and Solutions division. He is responsible for software enabling and also works with application vendors in the area of gaming. Prior to his current job, he was a software engineer for various mobile devices, including embedded Linux* and Windows*.