Updated: July 29th, 2021

This guide provides a step-by-step procedure for virtualizing Intel® Software Guard Extensions (Intel® SGX) using the Kernel-based Virtual Machine (KVM) virtualization module in the Linux* kernel with the QEMU* virtual machine monitor. This creates a virtual machine on your Intel SGX capable hardware, which can use Intel SGX and Intel SGX Data Center Attestation Primitives (Intel SGX DCAP) in the guest operating system.

Requirements

To use Intel SGX in a virtual machine, you must meet the following requirements:

- The host system must support Intel SGX.

- Intel SGX must be enabled, either explicitly in the BIOS or via the software enabling procedure.

- If you want to use Flexible Launch Control in guest systems, the hardware must also support the feature.

- You must run Linux kernel version 5.13 or later, on the host and in the guest VMs. This article provides instructions for two options: installing the 5.13 kernel using mainline, and building the kernel from source.

- If you plan to use the system with Intel SGX DCAP, use release 1.11 or later on both the host and the guest VMs. This requirement is based on the Intel SGX driver that is included in the kernel you will be installing for KVM.
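The kernel version requirement can be checked on the host and in each guest with a small shell function. This is a minimal sketch that assumes bash and GNU coreutils (for `sort -V`); the function name `kernel_at_least` is hypothetical and not part of any Intel tool.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed (exit status 0) if kernel version $1 is at least $2.
# Relies on GNU `sort -V`, which compares version strings component by component.
kernel_at_least() {
    local have=${1%%-*} want=$2   # drop suffixes such as "-051304-generic"
    # Whichever version sorts first is the smaller one; if that is $want,
    # then $have >= $want.
    [ "$(printf '%s\n%s\n' "$want" "$have" | sort -V | head -n 1)" = "$want" ]
}
```

For example, `kernel_at_least "$(uname -r)" 5.13 && echo "kernel OK"`. Letting `sort -V` do the comparison avoids hand-parsing the major and minor numbers.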

Intel SGX support in QEMU is still under active development. Significant changes to the software can and do occur during each revision. This software should not be used in production deployments at the current time.

Installation Procedure

This guide assumes you are starting from a clean installation of Ubuntu* 20.04 LTS Server, but the steps here can be adapted to other Linux distributions.

Update the kernel

KVM support for Intel SGX was added to the Linux kernel in version 5.13. At the time of this writing there were no pre-built 5.13 kernel packages for Ubuntu 20.04 LTS, so you’ll be installing that kernel as part of this process.

If your distribution has 5.13 or later kernel packages and you plan to use them instead of building your own, you should verify that Intel SGX support was included in them. If not, you’ll need to build your own.
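One way to verify this is to inspect the configuration file that Ubuntu installs alongside each kernel, normally /boot/config-<version>. The sketch below assumes that location and checks for the upstream Kconfig symbols CONFIG_X86_SGX (core support), CONFIG_X86_SGX_KVM (Intel SGX virtualization), and CONFIG_KVM_INTEL; the function name `sgx_kvm_config_ok` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: check a kernel config file for the options that
# Intel SGX virtualization depends on.
#   CONFIG_X86_SGX      core Intel SGX support (must be built in: "=y")
#   CONFIG_X86_SGX_KVM  Intel SGX virtualization for KVM guests (must be "=y")
#   CONFIG_KVM_INTEL    KVM for Intel processors (built in "=y" or module "=m")
sgx_kvm_config_ok() {
    local config=$1 opt missing=0
    for opt in CONFIG_X86_SGX CONFIG_X86_SGX_KVM; do
        # -x matches whole lines, so CONFIG_X86_SGX=y cannot match CONFIG_X86_SGX_KVM=y
        if ! grep -qx "${opt}=y" "$config"; then
            echo "missing: ${opt}=y" >&2
            missing=1
        fi
    done
    if ! grep -qE '^CONFIG_KVM_INTEL=(y|m)$' "$config"; then
        echo "missing: CONFIG_KVM_INTEL=y or =m" >&2
        missing=1
    fi
    return "$missing"
}
```

Run it as `sgx_kvm_config_ok "/boot/config-$(uname -r)"`. The same check can be pointed at $HOME/build/.config if you build the kernel yourself (Option 2).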

Option 1: Install a Pre-built Kernel Image

Installing a pre-built image is the fastest and easiest way to update the kernel. Under Ubuntu this can be done using mainline, a package for installing Ubuntu’s upstream kernel builds. These kernels are created from unmodified kernel source, and as of this writing, they include support for both Intel SGX and its virtualization.

The mainline package is not part of the base repositories in Ubuntu, so the repository will have to be added before the package can be installed:

sudo add-apt-repository ppa:cappelikan/ppa

sudo apt-get update

Next, install the mainline package:

sudo apt install mainline

The mainline utility can list the available kernel images as well as install them. To install the 5.13.4 kernel, run:

sudo mainline --install 5.13.4

Mainline will install the headers, kernel, and kernel module packages for each image, as well as a meta package to encapsulate them, and these can be viewed and verified using dpkg:

$ dpkg -l | grep linux | grep 5.13.4

ii linux-headers-5.13.4-051304 5.13.4-051304.202107201535 all Header files related to Linux kernel version 5.13.4

iU linux-headers-5.13.4-051304-generic 5.13.4-051304.202107201535 amd64 Linux kernel headers for version 5.13.4 on 64 bit x86 SMP

ii linux-image-unsigned-5.13.4-051304-generic 5.13.4-051304.202107201535 amd64 Linux kernel image for version 5.13.4 on 64 bit x86 SMP

ii linux-modules-5.13.4-051304-generic 5.13.4-051304.202107201535 amd64 Linux kernel extra modules for version 5.13.4 on 64 bit x86 SMP

The installation procedure runs grub to update your boot configuration so that the new kernel boots by default. At this point, you are ready to reboot.

Option 2: Build the Kernel From Source

Building a kernel from source gives you precise control over the kernel’s configuration and its features. The following steps walk you through building a kernel with Intel SGX support on Ubuntu.

Install Prerequisites

Install the software packages that are needed to build the kernel and QEMU:

sudo apt update

sudo apt install fakeroot libelf-dev build-essential libncurses-dev flex bison libssl-dev libfdt-dev libncursesw5-dev pkg-config libgtk-3-dev libspice-server-dev libssh-dev python3 python3-pip

Download and Build the Linux Kernel

Download the source for the latest stable kernel from kernel.org and extract it. At the time of this writing, the latest version in the stable branch was 5.13.4.

wget https://cdn.kernel.org/pub/linux/kernel/v5.x/linux-5.13.4.tar.xz

tar xf linux-5.13.4.tar.xz

cd linux-5.13.4

Prepare for the kernel build. Setting a shell variable that defines the build area makes this process less error-prone.

opt=O=$HOME/build

The O parameter specifies the output location of your kernel configuration and build. This allows you to build multiple kernels from the same source tree without them interfering with one another, and it keeps your source tree clean.

With this convenience shell variable set, you are ready to configure the kernel.

make $opt menuconfig

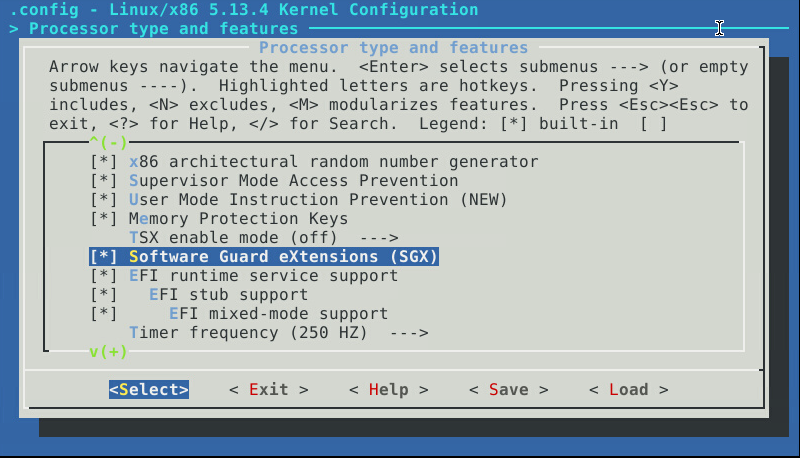

To enable Intel SGX support in KVM guests, you must enable the core functionality in the kernel from the Processor type and features menu. Scroll down to Software Guard eXtensions (SGX) and ensure it is selected. It may be off by default if you are building from a fresh source tree.

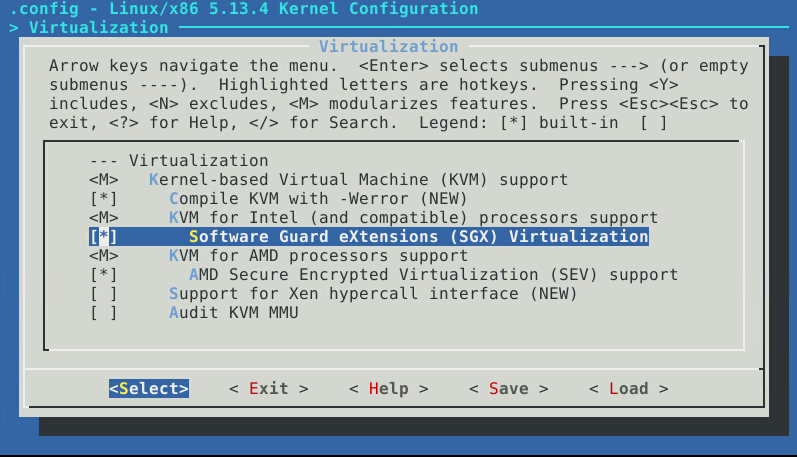

Next, you need to ensure that KVM virtualization is enabled. From the top-level menu, go to the Virtualization menu and ensure the following features are selected.

- Kernel-based virtual machine (KVM) support

- KVM for Intel (and compatible) processors support

- Software Guard Extensions (SGX) Virtualization

The first two may already be enabled as loadable modules (an “M” instead of an asterisk “*”).

The other options in this menu are not required, though many of them may already be set.

Save your settings and exit the kernel configuration screen.

The next step is to compile the kernel. A first-time build from the source tree is a lengthy process that can take a couple of hours, depending on your hardware.

make $opt

You can use make’s -j option to run build jobs in parallel and speed up the process, but specifying more than 2 to 4 jobs can lead to build failures with cryptic error messages. If your build fails, run make $opt clean and try again with a less aggressive setting.
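Following that guidance, a hypothetical helper such as `pick_jobs` can derive the -j value from the CPU count (via `nproc` from GNU coreutils) while capping it at 4. This is only a sketch; lower the cap if you still see failures.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a make -j value equal to the number of online
# CPUs ($1, default `nproc`), but never more than a cap ($2, default 4).
pick_jobs() {
    local cpus=${1:-$(nproc)} cap=${2:-4}
    if [ "$cpus" -lt "$cap" ]; then
        echo "$cpus"
    else
        echo "$cap"
    fi
}
```

Use it as `make $opt -j"$(pick_jobs)"`.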

When the build is finished, install the new kernel. You’ll need to be root to do this.

sudo make $opt INSTALL_MOD_STRIP=1 modules_install

sudo make $opt install

The installation procedure runs grub to update your boot configuration so that the new kernel boots by default. At this point, you are ready to reboot.

Verify the Kernel and Intel SGX Support

After rebooting, log in to verify the new kernel and Intel SGX support. First, check the kernel version with uname. It should be 5.13.4; kernels installed with mainline also report a suffix, such as 5.13.4-051304-generic.

localhost:~$ uname -r

5.13.4

Verify that the Intel SGX driver has loaded by examining the kernel log. You should see a log entry for sgx, which prints the address range for the enclave page cache (EPC) in memory. There will be one line per CPU package.

localhost:~$ dmesg | grep sgx

[ 3.748768] sgx: EPC section 0x2000c00000-0x207f7fffff

[ 3.753699] sgx: EPC section 0x4000c00000-0x407fffffff

If you do not see an “sgx: EPC section” line, then your Intel SGX driver did not load. Possible causes are:

- Intel SGX is not enabled in the system BIOS

- Intel SGX is not supported by your processor

- Intel SGX core functionality was not compiled into your kernel image, or, if you built the kernel from source, it was not selected in the kernel configuration

- An old kernel without the Intel SGX driver booted instead of the new one
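To see how much EPC the host actually has, you can total the ranges from the log. The sketch below is a hypothetical helper that assumes the `sgx: EPC section START-END` format shown above, with an inclusive end address; it uses bash arithmetic, which accepts the 0x-prefixed hexadecimal values directly.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read kernel log lines on stdin and print the size of
# each "sgx: EPC section START-END" range, plus the total, in MiB.
# The END address is treated as inclusive, hence the "+ 1".
epc_sizes_mib() {
    sed -n 's/.*EPC section \(0x[0-9a-fA-F]*\)-\(0x[0-9a-fA-F]*\).*/\1 \2/p' |
    {
        total=0
        while read -r start end; do
            bytes=$(( end - start + 1 ))
            echo "EPC section $start-$end: $(( bytes / 1048576 )) MiB"
            total=$(( total + bytes ))
        done
        echo "Total EPC: $(( total / 1048576 )) MiB"
    }
}
```

Run it as `sudo dmesg | epc_sizes_mib`. For the two sections in the sample log above it reports 2028 MiB and 2036 MiB, or 4064 MiB in total.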

If you see this line:

sgx: IA32_SGXLEPUBKEYHASHx MSRs are not writable

then your system supports Intel SGX, but not Launch Control. You’ll still be able to use Intel SGX in your KVM guests, but Launch Control will not be available to them.
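You can also check which features the running kernel reports through the CPU flags in /proc/cpuinfo: `sgx` for Intel SGX itself and `sgx_lc` for Launch Control. The helper below is a hypothetical sketch that reads cpuinfo-style text on standard input, so it can be tried against sample text as well as the real file.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read /proc/cpuinfo-style text on stdin and report
# whether the "sgx" and "sgx_lc" (Launch Control) CPU flags are present.
sgx_cpu_flags() {
    local flags
    # Use the first "flags" line; pad with spaces so whole words can be matched.
    flags=" $(grep -m1 '^flags' | cut -d: -f2-) "
    case $flags in *" sgx "*) echo "sgx: yes" ;; *) echo "sgx: no" ;; esac
    case $flags in *" sgx_lc "*) echo "sgx_lc: yes" ;; *) echo "sgx_lc: no" ;; esac
}
```

Run it as `sgx_cpu_flags < /proc/cpuinfo`. On a system without usable Launch Control you would expect `sgx_lc: no`.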

Build QEMU-SGX

Clone the qemu-sgx repository.

git clone https://github.com/intel/qemu-sgx

Change into the repository and check out the sgx-v6.0.0-rc3 tag. This is the release that is compatible with the Intel SGX virtualization support in the 5.13 kernel.

cd qemu-sgx

git checkout sgx-v6.0.0-rc3

QEMU 6.x builds with Meson and Ninja, and it specifically requires Meson 0.55 or later, which is not available as a package for Ubuntu 20.04 LTS. To work around this, install Meson and Ninja for Python 3 using pip:

sudo pip3 install meson ninja

The build procedure for QEMU is slightly different from that of most Linux applications. First, you’ll need to install or update some of the submodules in the qemu-sgx repository. The command below takes care of the core components.

scripts/git-submodule.sh update ui/keycodemapdb tests/fp/berkeley-testfloat-3 tests/fp/berkeley-softfloat-3 dtc

You may need to update others as well. If the configuration step fails, it should tell you which components need to be updated manually. Run the scripts/git-submodule.sh command line as prompted in the error message.

Perform these updates only once. It’s recommended that you then freeze the source code repository so that you have a consistent, known-good build environment if a rebuild is necessary. The qemu-sgx repository is an out-of-tree set of patches to the QEMU source code, which means the upstream QEMU maintainers do not validate their updates against the changes needed by Intel SGX. See the --with-git-submodules=ignore option to QEMU’s configure script in Table 1; this option replaces the deprecated --disable-git-update.

To configure QEMU, you need to create a build directory and then run QEMU’s configure script from within it. The following configure options are recommended (and in some cases, required):

Table 1 QEMU configuration options

| Option | Notes |
|---|---|
| --enable-kvm | Not strictly necessary if you are booted into your KVM-kernel, but it’s good practice to specify it for clarity. |
| --enable-spice | Required if you want to use the libvirt package and the virt-viewer application to reach the console. |
| --with-git-submodules=ignore | This prevents the QEMU build system from trying to pull in updated sources from the QEMU Git* repository. |
| --enable-curses --enable-gtk --enable-vnc | These provide additional flexibility for connecting to the console. |

If you enable more options, you may need to install additional prerequisite packages.

The build steps are as follows:

mkdir build

cd build

../configure --with-git-submodules=ignore --enable-kvm --enable-vnc --enable-curses --enable-spice --enable-gtk --target-list=x86_64-softmmu --disable-werror

make

A quick functionality test is to run an empty guest virtual machine (VM) with minimal arguments, and force Intel SGX support on. This virtual machine will not be able to boot, but you will know right away if there is a problem as the VM will not start.

sudo x86_64-softmmu/qemu-system-x86_64 -nographic -enable-kvm -cpu host,+sgx -object memory-backend-epc,id=mem1,size=8M,prealloc=on -sgx-epc id=epc1,memdev=mem1

If Intel SGX is properly enabled on the host, you should see output that is similar to the following when the virtual machine starts up:

Press Ctrl-A, then C, to get the QEMU monitor’s command prompt, and then type quit to exit.

If the virtual machine ran properly, you are ready to install QEMU. It will go into /usr/local/bin unless you changed the install prefix when you ran configure.

sudo make install

Using Intel SGX in the QEMU Virtual Machine

There are two parts to enabling Intel SGX in a guest VM. You must:

- Enable the Intel SGX feature, and

- define an enclave page cache (EPC).

Enable Intel SGX in the VM

Adding +sgx to the -cpu option to QEMU enables Intel SGX in the VM. Depending on what CPU model you choose for your VM, QEMU may auto-enable SGX, but there’s less confusion if you explicitly add the sgx parameter.

An easy way to enable Intel SGX support is to pass the host CPU through to the VM with -cpu host rather than define a specific CPU model. Being explicit with QEMU options like this helps to eliminate confusion.

If your hardware supports Intel SGX Launch Control, then you can enable (or disable) Launch Control with the sgxlc parameter. By default, the virtual machine inherits the host machine’s configuration, but continuing with the theme of clarity, it is recommended that you explicitly define it.

The following options enable Intel SGX in the VM, but disable Launch Control:

-cpu host,+sgx,-sgxlc

To enable both Intel SGX and Launch Control:

-cpu host,+sgx,+sgxlc

If for some reason you want to disable SGX in the VM entirely, use the following:

-cpu host,-sgx

Allocate an Enclave Page Cache

The current version of the kvm-sgx kernel divides the EPC among the guests. You specify how much EPC to provide to each guest, and this reduces the total amount of EPC that is available to other guests (and to the host system itself, if you choose to run Intel SGX applications on the host as well). For example, if you have 96 MB of EPC on your host and you assign 16 MB to each virtual machine, you can run at most six VMs at one time. This behavior may change in future releases of the kvm-sgx kernel.
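The arithmetic is integer division, optionally after setting aside some EPC for enclaves on the host. A hypothetical helper, with all sizes in MB:

```shell
#!/usr/bin/env bash
# Hypothetical helper: how many guests fit if the host EPC is split evenly.
#   $1 = total host EPC (MB)
#   $2 = EPC assigned to each guest (MB)
#   $3 = EPC kept back for enclaves on the host (MB, default 0)
max_guests() {
    local total=$1 per_guest=$2 host_reserve=${3:-0}
    echo $(( (total - host_reserve) / per_guest ))
}
```

With the numbers from the example, `max_guests 96 16` prints 6; keeping 32 MB for the host (`max_guests 96 16 32`) leaves room for 4 guests.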

To define an EPC range, you must allocate a custom QEMU memory object and assign it a unique ID, then provide the memory ID to the -sgx-epc option. The following QEMU options create and assign an 8-MB EPC to the VM:

-object memory-backend-epc,id=mem1,size=8M,prealloc=on -sgx-epc id=epc1,memdev=mem1

You can define multiple EPC segments in this manner. See the README file for the qemu-sgx repository for more information on defining EPC segments.
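Because each segment needs its own memory object and matching IDs, it can help to generate the options instead of typing them. The following is a sketch of a hypothetical helper; it assumes that additional segments are expressed as further -object/-sgx-epc pairs with unique IDs, as described above, so confirm the exact syntax against the qemu-sgx README for your tag.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print qemu-sgx options, one argument per line.
#   $1    = "lc" (+sgxlc) or "nolc" (-sgxlc)
#   $2... = size of each EPC segment, e.g. 8M 16M
# Assumption: each extra segment is another -object/-sgx-epc pair with unique IDs.
sgx_qemu_opts() {
    local lc=$1 i=1 size
    shift
    case $lc in
        lc)   printf '%s\n' -cpu host,+sgx,+sgxlc ;;
        nolc) printf '%s\n' -cpu host,+sgx,-sgxlc ;;
        *)    echo "usage: sgx_qemu_opts lc|nolc SIZE..." >&2; return 1 ;;
    esac
    for size in "$@"; do
        printf '%s\n' -object "memory-backend-epc,id=mem$i,size=$size,prealloc=on" \
                      -sgx-epc "id=epc$i,memdev=mem$i"
        i=$(( i + 1 ))
    done
}
```

Printing one argument per line lets you load the output into a bash array and pass it to QEMU unchanged, for example `mapfile -t sgx_opts < <(sgx_qemu_opts nolc 8M)` followed by `sudo x86_64-softmmu/qemu-system-x86_64 -nographic -enable-kvm "${sgx_opts[@]}"`.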

Integrating with Libvirt

While it is possible to run the qemu-system-x86_64 binary directly on the command line, the lengthy command lines and complex options can make that unwieldy. Libvirt provides a complete management suite that greatly simplifies virtual machine creation, execution, and maintenance. A usage guide to libvirt is beyond the scope of this tutorial.

Run the following to install libvirt:

sudo apt install libvirt-clients virt-manager

The installation process should add you to the libvirt group. The default configuration for libvirt requires that non-root users be in this group to create and manage global VMs.

At this point you will need to log out and then log in again so that the new group membership takes effect.

The libvirt installation may also install Ubuntu’s QEMU package into /usr/bin. To use the qemu-system-x86_64 binary that you installed into /usr/local/bin, you’ll need to specify the path to the QEMU emulator manually after creating a virtual machine. Do not overwrite or replace the distribution-managed package, as any package updates could create conflicts with your custom-built binary.

Configure AppArmor

AppArmor is a mandatory access control (MAC) system that is installed in Ubuntu 20.04 by default. MAC systems constrain application capabilities at a finer level of granularity than traditional Unix* file permissions. The default AppArmor configuration for libvirt does not allow execution of programs in /usr/local/bin.

If you are using AppArmor, you’ll need to add the following line to /etc/apparmor.d/local/usr.sbin.libvirtd:

/usr/local/bin/* PUx,

Reload the AppArmor profile for libvirtd by running apparmor_parser:

sudo apparmor_parser -r /etc/apparmor.d/usr.sbin.libvirtd
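If you script this step, appending the rule only when it is missing keeps the file clean when the script runs more than once. A minimal sketch; `add_rule_once` is a hypothetical name, and it must run with write access to the profile (for example from a root shell opened with `sudo -i`).

```shell
#!/usr/bin/env bash
# Hypothetical helper: append a line to a file only if that exact line is not
# already present, so the step is safe to repeat.
add_rule_once() {
    local file=$1 rule=$2
    # -x: whole-line match; -F: treat the rule as a literal string, not a regex
    grep -qxF -- "$rule" "$file" 2>/dev/null || printf '%s\n' "$rule" >> "$file"
}
```

For example, `add_rule_once /etc/apparmor.d/local/usr.sbin.libvirtd '/usr/local/bin/* PUx,'`, then reload the profile with apparmor_parser as shown above.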

Configure QEMU in libvirt

The Intel SGX build of QEMU requires access to the following devices:

- /dev/sgx_enclave to launch enclaves

- /dev/sgx_provision to launch the provisioning certification enclave (PCE)

- /dev/sgx_vepc to assign EPC memory pages

By default, libvirt’s cgroup device controller denies access to these device files. Edit /etc/libvirt/qemu.conf and change the cgroup_device_acl list to include all three (if the setting is commented out, uncomment it first):

cgroup_device_acl = [

"/dev/null", "/dev/full", "/dev/zero",

"/dev/random", "/dev/urandom",

"/dev/ptmx", "/dev/kvm",

"/dev/rtc","/dev/hpet",

"/dev/sgx_enclave", "/dev/sgx_provision", "/dev/sgx_vepc"

]

QEMU also needs to read and write to the /dev/sgx_vepc device, which is owned by root with file mode 600. This means you must configure QEMU to run as root. To do that, edit /etc/libvirt/qemu.conf and set the user parameter:

user = "root"
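After editing qemu.conf, a quick text check can confirm that the three device nodes and the root user appear in the uncommented part of the file. This hypothetical helper does not parse libvirt’s configuration syntax; it only looks for the quoted device paths and a `user = "root"` line, skipping lines that start with #.

```shell
#!/usr/bin/env bash
# Hypothetical helper: text check of qemu.conf (default /etc/libvirt/qemu.conf)
# for the SGX device paths and user = "root". Comment lines are ignored.
check_qemu_conf() {
    local conf=${1:-/etc/libvirt/qemu.conf} dev rc=0 active
    active=$(grep -v '^[[:space:]]*#' "$conf")
    for dev in /dev/sgx_enclave /dev/sgx_provision /dev/sgx_vepc; do
        if ! grep -qF "\"$dev\"" <<<"$active"; then
            echo "not listed: $dev" >&2
            rc=1
        fi
    done
    if ! grep -qE '^[[:space:]]*user[[:space:]]*=[[:space:]]*"root"' <<<"$active"; then
        echo 'user = "root" is not set' >&2
        rc=1
    fi
    return "$rc"
}
```

Run `check_qemu_conf` after each edit; it prints any missing item on standard error and returns a non-zero status.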

By default, libvirt also tries to use a security driver for QEMU, choosing either Security-Enhanced Linux (SELinux) or AppArmor, whichever is available. This is controlled by the security_driver parameter. If you don’t want to use a security driver, set this parameter to "none". Configuring SELinux profiles for libvirt’s security driver is outside the scope of this document. To use AppArmor, set the parameter to "apparmor":

security_driver = [ "apparmor" ]

If you are using AppArmor for the security driver, you’ll need to modify /etc/apparmor.d/libvirt/TEMPLATE.qemu to read:

#include <tunables/global>

profile LIBVIRT_TEMPLATE flags=(attach_disconnected) {

#include <abstractions/libvirt-qemu>

/usr/local/bin/* PUx,

}

After making these changes, you’ll need to restart the libvirtd service:

sudo systemctl restart libvirtd

Install a Test VM

Verify that your login session includes the libvirt group by running the groups command:

localhost:~$ groups

adm cdrom sudo dip plugdev lxd libvirt

If you want to be able to execute virt-manager or use the graphics consoles for your virtual machines, you’ll need a graphical desktop as well. If you haven’t already, install the desktop environment for Ubuntu, and (optionally) a VNC server, if you’ll be working remotely. Note that this step is strictly one of convenience; you can create and manage virtual machines in a command-line environment.

sudo apt install ubuntu-desktop

Unfortunately, libvirt does not have direct support for the Intel SGX enabled version of QEMU. To add Intel SGX support to a virtual machine, you’ll first need to create the VM and then edit the XML by hand.

You have three main options for creating a VM.

Option 1: Create a blank VM on the command line

This method works entirely from the command line, though it does require a few manual steps. First, generate a unique UUID with the uuidgen command.

uuidgen

Next, create a disk image to hold the VM. The QEMU copy-on-write format (qcow2) is arguably the most versatile, and it supports features such as VM snapshots and encryption. The following creates a 20 GB disk image.

localhost:~$ qemu-img create -f qcow2 testvm.qcow2 20G

Formatting 'testvm.qcow2', fmt=qcow2 size=21474836480 cluster_size=65536 lazy_refcounts=off refcount_bits=16

Finally, create an XML file, which we’ll call testvm.xml, that defines a minimal VM. This configuration is for 4 GB RAM and a single virtual disk which boots in UEFI mode. It does not include a graphical console (you can add this later in virt-manager). Note that you’ll need to set the UUID and the source file path to the disk image you created above. This must be an absolute path. Also, the <nvram> path must be unique to the VM.

<domain type='kvm' xmlns:qemu='http://libvirt.org/schemas/domain/qemu/1.0'>

<name>testvm</name>

<uuid>UUID_FROM_UUIDGEN_GOES_HERE</uuid>

<memory>4194304</memory>

<currentMemory>4194304</currentMemory>

<vcpu>1</vcpu>

<os>

<type arch='x86_64' machine='pc-i440fx-2.8'>hvm</type>

<loader readonly='yes' type='pflash'>/usr/share/OVMF/OVMF_CODE.fd</loader>

<nvram>/var/lib/libvirt/qemu/nvram/testvm_VARS.fd</nvram>

</os>

<features>

<acpi/>

<apic/>

<pae/>

</features>

<clock offset='utc'/>

<on_poweroff>destroy</on_poweroff>

<on_reboot>restart</on_reboot>

<on_crash>restart</on_crash>

<devices>

<emulator>/usr/local/bin/qemu-system-x86_64</emulator>

<disk type='file' device='disk'>

<driver name='qemu' type='qcow2'/>

<source file='/PATH/TO/testvm.qcow2'/>

<target dev='vda' bus='virtio'/>

<address type='pci' domain='0x0000' bus='0x00' slot='0x05' function='0x0'/>

</disk>

<controller type='ide' index='0'>

<address type='pci' domain='0x0000' bus='0x00' slot='0x01' function='0x1'/>

</controller>

<memballoon model='virtio'>

<address type='pci' domain='0x0000' bus='0x00' slot='0x04' function='0x0'/>

</memballoon>

</devices>

</domain>
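If you keep the XML above as a template, for example in a file named testvm.xml.in (a hypothetical name), the two placeholders can be filled in with sed. This sketch assumes the placeholder strings exactly as written above and a disk path that contains no | or & characters, which would confuse the sed replacement.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy the template, replacing the UUID and disk-path
# placeholders. libvirt needs an absolute disk path, so relative ones are rejected.
fill_testvm_xml() {
    local template=$1 out=$2 uuid=$3 disk=$4
    case $disk in
        /*) ;;
        *) echo "disk path must be absolute: $disk" >&2; return 1 ;;
    esac
    sed -e "s|UUID_FROM_UUIDGEN_GOES_HERE|$uuid|" \
        -e "s|/PATH/TO/testvm.qcow2|$disk|" \
        "$template" > "$out"
}
```

For example, `fill_testvm_xml testvm.xml.in testvm.xml "$(uuidgen)" "$(realpath testvm.qcow2)"`. The <nvram> path must also be unique if you create more than one VM from the same template.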

Next, define the VM in libvirt using the XML source file:

localhost:~$ virsh define testvm.xml

Domain testvm defined from testvm.xml

You can verify that the domain has been created by listing the known libvirt domains.

localhost:~$ virsh list --all

Id Name State

----------------------------------------------------

- testvm shut off

Run it as a final validation step. This definition does not provide a console, nor is there an OS loaded, so it will simply start and run in the background.

localhost:~$ virsh start testvm

Domain testvm started

Stop the VM by running the following:

localhost:~$ virsh destroy testvm

Domain testvm destroyed

Option 2: Create a blank VM using virt-manager

Once your desktop environment is running, you can run virt-manager and create a virtual machine. This method is arguably the easiest, as the GUI steps you through the options.

Option 3: Create and install an OS in the VM with virt-install

This method allows you to create and install a VM from a source image such as an ISO image. The virt-install command is part of the virtinst package.

sudo apt install virtinst

Add Intel SGX Support to the Test VM

To add Intel SGX support to your newly created virtual machine (or domain, in libvirt’s terminology), you’ll need to edit its XML by hand:

virsh edit testvm

The first step is to edit the opening <domain> stanza as follows:

<domain type='kvm' xmlns:qemu='http://libvirt.org/schemas/domain/qemu/1.0'>

This allows you to encode custom QEMU arguments in the XML definition for the domain.

Next, you need to delete the <cpu> stanza if your XML definition includes one. This is necessary because you’ll be providing custom arguments to QEMU’s -cpu option.

Inside the <devices> stanza, set the <emulator> element to the path of your QEMU binary in /usr/local/bin:

<devices>

<emulator>/usr/local/bin/qemu-system-x86_64</emulator>

<!-- Your XML file will have several items inside here -->

</devices>

The required arguments for QEMU are encoded in a <qemu:commandline> stanza, with each argument getting its own <qemu:arg> element. Place this stanza directly inside the <domain> element, for example just before the closing </domain> tag.

<qemu:commandline>

<qemu:arg value='-cpu'/>

<qemu:arg value='host,+sgx,+sgxlc'/>

<qemu:arg value='-object'/>

<qemu:arg value='memory-backend-epc,id=mem1,size=16M,prealloc=on'/>

<qemu:arg value='-sgx-epc'/>

<qemu:arg value='id=epc1,memdev=mem1'/>

</qemu:commandline>

This XML corresponds to the following QEMU command-line options:

-cpu host,+sgx,+sgxlc -object memory-backend-epc,id=mem1,size=16M,prealloc=on -sgx-epc id=epc1,memdev=mem1
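The mapping from command-line options to XML is mechanical: each option and each value becomes its own <qemu:arg>. A hypothetical helper can generate the stanza from the same options you would pass to QEMU directly; this sketch assumes the arguments contain no characters that need XML escaping (&, <, >, or ').

```shell
#!/usr/bin/env bash
# Hypothetical helper: wrap each argument in a <qemu:arg> element.
# Assumes no argument contains &, <, > or ' (no XML escaping is done).
qemu_commandline_xml() {
    local arg
    echo "<qemu:commandline>"
    for arg in "$@"; do
        printf "  <qemu:arg value='%s'/>\n" "$arg"
    done
    echo "</qemu:commandline>"
}
```

Running `qemu_commandline_xml -cpu host,+sgx,+sgxlc -object memory-backend-epc,id=mem1,size=16M,prealloc=on -sgx-epc id=epc1,memdev=mem1` prints the stanza shown above, ready to paste into the domain with virsh edit.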

Test the Intel SGX Enabled VM

Start the VM to test it. If it does not start, you may need to look at the QEMU logs in /var/log/libvirt/qemu to get complete error messages.

localhost:~$ virsh start testvm

Domain testvm started

Troubleshooting

If your VM does not start, see the following table for a list of common errors and their possible causes:

| Error Message | Possible Cause(s) and Resolution |
|---|---|
| -sgx-epc: invalid option | Libvirt is running the distribution QEMU from /usr/bin. Make sure the <emulator> element is set to /usr/local/bin/qemu-system-x86_64. |
| /usr/local/bin/qemu-system-x86_64: Permission denied | Your MAC is preventing access to the executable in /usr/local/bin. If you are using AppArmor, check that you added the /usr/local/bin/* PUx, rule to /etc/apparmor.d/local/usr.sbin.libvirtd and reloaded that profile, and that /etc/apparmor.d/libvirt/TEMPLATE.qemu contains the same rule. |
| invalid object type: memory-backend-epc | Your kernel may be too old. In 5.13 the virtual EPC device is /dev/sgx_vepc, which is a change from earlier release candidates, so make sure you are running a 5.13 or later kernel. Alternatively, libvirt’s security settings are preventing access to /dev/sgx_vepc. Check that: (1) /dev/sgx_vepc is in the cgroup_device_acl list in qemu.conf; (2) QEMU is configured to run as uid 0 in qemu.conf; (3) your MAC is either disabled or has an exception or profile that allows access to /dev/sgx_vepc. |
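Several of these errors come down to missing device nodes on the host. A hypothetical helper that reports which of the three SGX devices exist can narrow things down; the optional root-directory argument is there only so the function can be exercised against a test directory.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which SGX device nodes exist under ${1:-}/dev.
# Returns non-zero if any of the three is missing.
check_sgx_devices() {
    local root=${1:-} dev rc=0
    for dev in sgx_enclave sgx_provision sgx_vepc; do
        if [ -e "$root/dev/$dev" ]; then
            echo "found:   /dev/$dev"
        else
            echo "missing: /dev/$dev"
            rc=1
        fi
    done
    return "$rc"
}
```

Run `check_sgx_devices` with no argument to check the real /dev. A missing /dev/sgx_vepc usually points to a kernel that is older than 5.13 or was built without CONFIG_X86_SGX_KVM.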

Assuming the VM launches properly, you are now ready to create a real guest image and start using Intel SGX in your virtualized environment.

Summary

With Intel SGX-enabled KVM and QEMU, it is possible to virtualize Intel SGX on Intel SGX-capable hardware. Each guest operating system gains access to the Intel SGX hardware features, and the guests can be configured independently of one another, whether that be EPC size, access to Flexible Launch Control, or even access to Intel SGX as a whole. Further, these virtual machines can be managed through libvirt, making it possible to integrate Intel SGX-enabled guests into existing deployments.

Further Reading

See the kvm-sgx and qemu-sgx project pages on GitHub* for technical information and the latest updates on the Intel SGX virtualization project.