Introduction

This article describes the concept of TCP Segmentation Offload (TSO) and discusses some best-known methods for testing the feature in Open vSwitch* (OVS) with the Data Plane Development Kit (DPDK). It also describes the benefits the feature brings to OVS DPDK. It is written for users of OVS DPDK who want to know more about how to test TSO, particularly with Intel® Ethernet Controller 710 Series network cards.

At this time, TSO in OVS with DPDK is available on both the OVS master branch and the 2.13 release branch. Users can download the OVS master branch or the 2.13 branch as a zip file. Installation steps for the master branch and 2.13 branch are available as well.

TSO

TCP Segmentation Offload is often also referred to as Large Send Offload (LSO). Segmentation refers to the splitting up of large chunks of data into smaller segments. Offload refers to the practice of moving this workload off the CPU and onto the network card. Offloading this work saves CPU cycles and generally improves packet processing performance.

TSO has been available in OVS DPDK since the 2.13 release.
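To give a sense of how much work segmentation involves, the sketch below estimates how many segments a single large send is split into. It assumes IPv4 and TCP headers of 20 bytes each with no options, so the payload per segment is the MTU minus 40 bytes; the function name and the 64 KB payload are illustrative only.

```shell
#!/bin/sh
# Estimate how many TCP segments are needed to carry a payload.
# Assumption: 20-byte IPv4 header + 20-byte TCP header, no options.
segments_for() {
    payload=$1
    mtu=$2
    mss=$((mtu - 40))                      # payload bytes per segment
    echo $(( (payload + mss - 1) / mss ))  # round up
}

segments_for 65536 1500   # prints 45
```

With TSO enabled, this splitting is done by the network card instead of the CPU.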

Test Environment

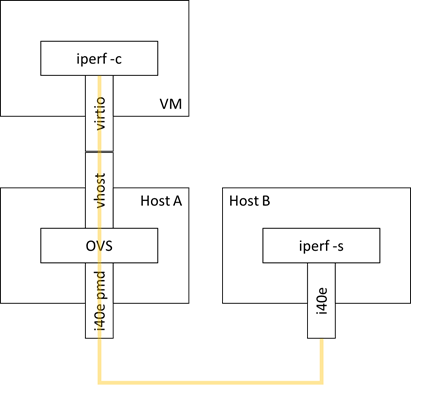

The following describes how to set up an OVS DPDK configuration on host A with one physical dpdk port and one dpdkvhostuserclient port attached to a virtual machine (VM). The VM runs an iperf client process. The server process is run on another host B with a network interface that is connected back-to-back with the network interface used for the dpdk port. If only one host is available, it is possible to use two NICs connected back-to-back on the same host instead. Figure 1 shows the test environment configuration.

Figure 1: Open vSwitch* with the Data Plane Development Kit configuration using one physical port and one dpdkvhostuserclient port. The iperf tool is being used to generate traffic and measure bandwidth. The iperf client process is run on the virtual machine on host A, and the iperf server process is run on host B, which is connected back-to-back with host A.

The setup used in this article consists of the following hardware and software components:

| Component | Details |
| --- | --- |
| Processor | Intel(R) Xeon(R) Gold 6140 CPU @ 2.30GHz |
| Kernel | 5.4.0 |
| OS | Ubuntu* 18.04 |
| Data Plane Development Kit | 19.11 patched with https://patches.dpdk.org/patch/64136/ |
| Open vSwitch* | branch-2.13 |

Configuration Steps

- Build OVS with DPDK, as described in the installation docs.

- Enable TSO before launching the daemon.

$ ovs-vsctl --no-wait set Open_vSwitch . other_config:userspace-tso-enable=true

- Configure the switch as described in the Test Environment section, with one physical dpdk port and one dpdkvhostuserclient port. Set their MTUs to 1500 using the mtu_request option, and configure flow rules between the two ports.

$ ovs-vsctl add-br br0

$ ovs-vsctl set Bridge br0 datapath_type=netdev

$ ovs-vsctl add-port br0 dpdk0

$ ovs-vsctl set Interface dpdk0 type=dpdk mtu_request=1500

$ ovs-vsctl add-port br0 vhost0

$ ovs-vsctl set Interface vhost0 type=dpdkvhostuserclient

$ ovs-vsctl set Interface vhost0 options:vhost-server-path=/path/to/sock mtu_request=1500

$ ovs-ofctl add-flow br0 in_port=1,action=output:2

$ ovs-ofctl add-flow br0 in_port=2,action=output:1

- Launch the switch.

- Launch the VM. The sample QEMU command line below shows the offloads that need to be enabled.

$ taskset 0xF0 qemu-system-x86_64 -cpu host -enable-kvm -m 2048M -object memory-backend-file,id=mem,size=2048M,mem-path=/dev/hugepages,share=on -numa node,nodeid=0,memdev=mem -mem-prealloc -smp 4 -drive file=/path/to/image -chardev socket,id=char0,path=/path/to/sock,server -netdev type=vhost-user,id=mynet1,chardev=char0,vhostforce -device virtio-net-pci,mac=00:00:00:00:00:01,netdev=mynet1,csum=on,gso=off,host_tso4=on,host_tso6=on,host_ecn=off,host_ufo=off,mrg_rxbuf=on,guest_csum=on,guest_tso4=on,guest_tso6=on,guest_ecn=off,guest_ufo=off,rx_queue_size=1024,tx_queue_size=1024

- On host B, configure the interface and launch the iperf server process.

$ ethtool -L $IFACE combined 1

$ ip link set dev $IFACE up

$ ip addr add 192.168.20.2/24 dev $IFACE

$ ifconfig $IFACE mtu 1500

$ taskset -c 8 iperf3 -s -i 1

- On the VM on host A, configure the interface and launch the iperf client process.

$ ifconfig $IFACE up

$ ethtool -K $IFACE sg on

$ ethtool -K $IFACE tso on

$ ip link set dev $IFACE up

$ ip addr add 192.168.20.1/24 dev $IFACE

$ taskset 0x2 iperf3 -c 192.168.20.2

More information about the iperf tool as well as information about its usage can be found in the documentation.
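Before starting the iperf client, it can be useful to confirm that the offloads requested with ethtool -K in the VM actually took effect. The following is a minimal sketch that reads the output of ethtool -k (lowercase, which lists feature states) and succeeds only if both scatter-gather and TCP segmentation offload are reported as on. The helper name offloads_enabled is hypothetical and not part of any tool.

```shell
#!/bin/sh
# Read `ethtool -k <iface>` output on stdin and return success only if
# both scatter-gather and tcp-segmentation-offload are reported as "on".
# offloads_enabled is a hypothetical helper name used for illustration.
offloads_enabled() {
    awk -F': ' '
        /^tcp-segmentation-offload:/ { tso = $2 }
        /^scatter-gather:/           { sg = $2 }
        END { exit !(tso ~ /^on/ && sg ~ /^on/) }
    '
}

# Example use inside the VM:
# ethtool -k "$IFACE" | offloads_enabled && echo "SG and TSO are enabled"
```

Matching on a leading "on" also accepts states such as "on [fixed]", while "off [requested on]" is treated as disabled.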

Performance Tuning

General performance tuning guidelines for OVS DPDK can be found in the documentation.

For this particular test setup, careful CPU affinitization can significantly improve performance. The table below lists how each process can be affinitized.

| Process | Affinitization Method |
| --- | --- |
| OVS DPDK | pmd-cpu-mask |
| QEMU | taskset |
| iperf (client and server) | taskset |

We suggest that you affinitize each of the above processes to separate cores.
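Both pmd-cpu-mask and taskset accept a hexadecimal CPU mask in which bit N selects core N. The sketch below builds such a mask from a list of core IDs; the function name cores_to_mask and the cores used (2 and 3) are placeholders, not values taken from the test setup.

```shell
#!/bin/sh
# Build a hexadecimal CPU mask from a list of core IDs (bit N = core N).
# cores_to_mask is a hypothetical helper name used for illustration.
cores_to_mask() {
    mask=0
    for core in "$@"; do
        mask=$((mask | (1 << core)))
    done
    printf '0x%x\n' "$mask"
}

cores_to_mask 2 3    # prints 0xc

# Example use with OVS DPDK (cores 2 and 3 are placeholders):
# ovs-vsctl set Open_vSwitch . other_config:pmd-cpu-mask="$(cores_to_mask 2 3)"
```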

One final and critical component that needs to be affinitized is the set of interrupt requests (IRQs) of the NIC used by the iperf server process. It has been observed that, for 710 Series NICs, the iperf server uses the NIC queue whose number matches the ID of the core on which the server process is running, provided the number of queues is greater than or equal to that core ID. By default, IRQs for that queue also land on the same core, which causes contention between the server process and the NIC IRQs. To resolve this, set the IRQ affinity for the given NIC queue by writing to the appropriate /proc/irq/<irq number>/smp_affinity file.

The following steps can be followed to pin IRQs for a 710 Series NIC:

- Find out which queue number the iperf server process is using. As previously mentioned, it is typically the same as the ID of the core on which the process is running. However, ethtool counters can be used to confirm:

$ ethtool -S $IFACE | grep rx- | grep packets

- Find out the IRQ number for that queue (e.g., queue 8) by checking the /proc/interrupts file:

$ cat /proc/interrupts | grep enp24s0f0-TxRx-8

195: 0 …. 0 0 IR-PCI-MSI 12582921-edge i40e-enp24s0f0-TxRx-8

- Set the desired affinity (e.g., core 2, which corresponds to mask 0x4) by writing to /proc/irq/195/smp_affinity:

$ echo "0000004" > /proc/irq/195/smp_affinity
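The last two steps above can be sketched as a pair of small helpers. This is only an illustration under stated assumptions: the function names irq_for_queue and core_mask are hypothetical, the IRQ name is assumed to end in <interface>-TxRx-<queue> as in the sample output above, and the mask calculation assumes a core ID below 32 so that the value fits in a single 32-bit group of the smp_affinity file.

```shell
#!/bin/sh
# Print the IRQ number whose name ends in "<iface>-TxRx-<queue>".
# $1 = interface name, $2 = queue number,
# $3 = interrupts file (optional, defaults to /proc/interrupts).
irq_for_queue() {
    awk -v name="$1-TxRx-$2" '
        { n = length($NF) - length(name) + 1 }
        n >= 1 && substr($NF, n) == name { sub(":", "", $1); print $1; exit }
    ' "${3:-/proc/interrupts}"
}

# Print the hexadecimal smp_affinity mask selecting a single core.
# Assumption: core ID is below 32.
core_mask() {
    printf '%x\n' $((1 << $1))
}

# Example use (run as root; interface, queue and core are placeholders):
# irq=$(irq_for_queue enp24s0f0 8)
# core_mask 2 > "/proc/irq/$irq/smp_affinity"
```

Matching on the end of the name keeps queue 8 from also matching queue 18, which a plain substring search for "TxRx-8" would not distinguish in the other direction (for example, "TxRx-1" against "TxRx-18").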

Conclusion

In this article, we briefly described the concept of TSO, how it can be tested in OVS DPDK with a 710 Series network card, and how to tune the test setup for optimal performance.

Additional Information

Download 710 Series data sheet

Have a question? Feel free to follow up with a query on the Open vSwitch discussion mailing list or the DPDK users mailing list.

To learn more about OVS with DPDK, check out the material available on Intel® Network Builders.

About the Author

Ciara Loftus is a network software engineer with Intel. Her work is primarily focused on accelerated packet processing and software switching solutions in user space running on Intel® architecture.