Executive Overview

- Users struggle with workload placement because they are required to declare resources up front.

- Intel offers Intent-Driven Orchestration, which lets you state your intent instead of declaring a set of resources, a declaration that means little in heterogeneous clusters.

- Intent-Driven Orchestration enables the management of applications through their service-level objectives while minimizing developer and administrator overhead.

Intro

Typically in Kubernetes*, we have to define workload deployments with many properties that are not directly related to the workload’s objectives. These include defining resources and choosing server types, operating systems, and many other aspects. We propose a new approach for the open-source system in which orchestration is driven by intent, or in other words, by objectives for our workloads. A framework uses these objectives to take away the work of configuring that whole other set of resources, making interaction with the system as simple as pushing a button to switch driving modes in a car.

Intent-Driven Orchestration

An intention is a determination to act in a certain way. The Intent-Driven Orchestration framework makes it as easy as possible to control cloud server platforms. Ultimately, intents are defined by objectives. In the push-button car analogy from the intro, the Intent-Driven Orchestration framework and the underlying server platform are our car.

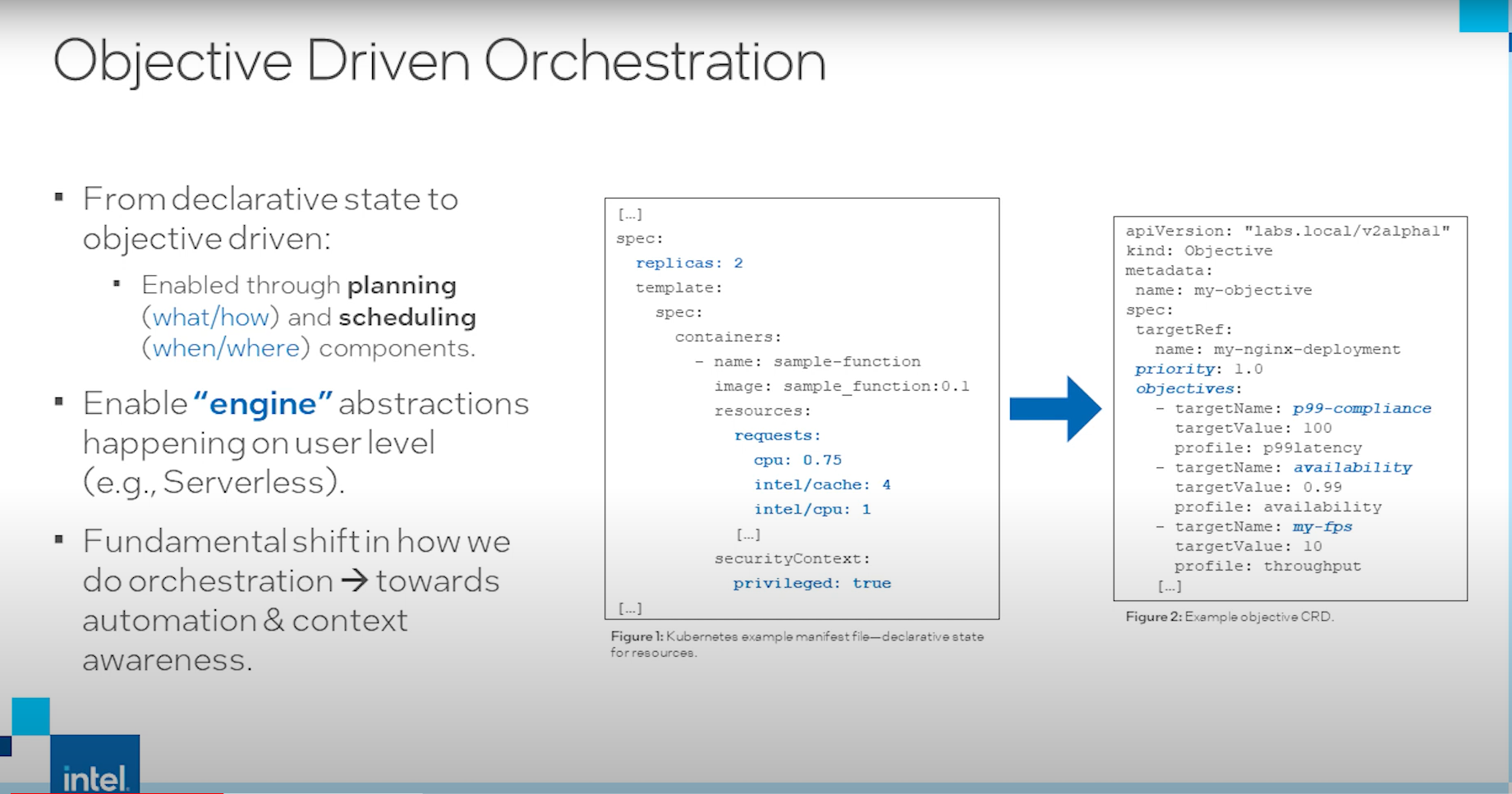

Here, we see an example of a workload that needs a configuration regarding resources, such as the number of cores, cache, and CPU shares. On the right, we see our new approach, with a set of objectives for our workload, such as latency, availability, and throughput. In this case, we do not define the actual resources we want to use; we only define our targets. The framework then allocates and manages resources automatically to fulfill the KPIs.
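To make the contrast concrete, here is a minimal Python sketch of the two styles. It is not the framework's API: the class names, field names, and all numbers are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ResourceDeclaration:
    """Classic style: the user states what the workload should consume."""
    cpu_cores: int
    cache_mb: int
    cpu_shares: int


@dataclass(frozen=True)
class Objective:
    """Intent style: the user states what the workload should achieve."""
    name: str
    target: float
    unit: str
    higher_is_better: bool

    def is_met(self, observed: float) -> bool:
        # Latency-like KPIs must stay at or below the target,
        # throughput-like KPIs must stay at or above it.
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


# Hypothetical resource declaration (illustrative values).
declared = ResourceDeclaration(cpu_cores=4, cache_mb=16, cpu_shares=1024)

# Hypothetical intent: objectives only, no resources (illustrative values).
intent = [
    Objective("p95_latency", 400.0, "ms", higher_is_better=False),
    Objective("availability", 99.9, "%", higher_is_better=True),
    Objective("throughput", 1000.0, "requests/s", higher_is_better=True),
]

# Made-up KPI readings, used to see which objectives still need attention.
observed = {"p95_latency": 490.0, "availability": 99.95, "throughput": 1200.0}
unmet = [o.name for o in intent if not o.is_met(observed[o.name])]
print(unmet)
```

The intent list says nothing about cores or cache. Finding resources that satisfy the unmet objective is left to the orchestration layer.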

Objective-Driven Orchestration

From declarative state to objective-driven:

- Enabled through planning (what/how) and scheduling (when/where) components

- Enables “engine” abstractions at the user level (e.g., serverless)

- Fundamental shift in how we do orchestration ➡ towards automation and context awareness

The framework is implemented as a Kubernetes operator, where the objectives are defined as resources and assessed by the planning component. The planning component continuously watches the current state of our KPIs and the system telemetry. It uses this data to generate a new action plan that helps us reach the desired state. The actions are realized through actuators that interact with specific resources. All of this data accumulates in the knowledge base, allowing us to make better decisions over time.
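The observe, plan, actuate, and record cycle described above can be sketched as a small loop. This is a toy simulation under stated assumptions, not the operator's code: `ToySystem`, its latency model, the `scale_out` action, and the numbers are all hypothetical.

```python
class ToySystem:
    """Hypothetical stand-in for a workload plus its telemetry."""

    def __init__(self, replicas: int = 2) -> None:
        self.replicas = replicas

    def p95_latency_ms(self) -> float:
        # Assumed model: latency shrinks as replicas are added.
        return 980.0 / self.replicas

    def apply(self, action: str) -> None:
        # Actuator: turns a planned action into a resource change.
        if action == "scale_out":
            self.replicas += 1


def plan(observed_ms: float, target_ms: float) -> str:
    # Trivial planner: the only action it knows is adding a replica.
    return "scale_out"


def reconcile(system: ToySystem, target_ms: float,
              knowledge_base: list, max_rounds: int = 10) -> bool:
    """Run observe -> plan -> actuate until the target is met."""
    for _ in range(max_rounds):
        observed = system.p95_latency_ms()       # watch KPI / telemetry
        if observed <= target_ms:
            return True                          # desired state reached
        action = plan(observed, target_ms)       # generate the next action
        system.apply(action)                     # realize it via the actuator
        knowledge_base.append((observed, action))  # remember what was done
    return False


system = ToySystem(replicas=2)
knowledge_base: list = []
reached = reconcile(system, target_ms=400.0, knowledge_base=knowledge_base)
print(reached, system.replicas, knowledge_base)
```

In this sketch the knowledge base is just a list of observations and actions. It stands in for the store that lets later decisions draw on earlier outcomes.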

Our framework can be extended at two specific points. One possibility is to extend it on the actuator side by writing a new plugin for a new resource type. Plugins can be adjusted on the fly inside our planning component. The other extension point is the planner itself. The default planner uses an A* search to find the path to our desired state. In addition, a planning plugin can enable other algorithms, including machine learning ones, and combine them for different workloads.
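To give a feel for how an A* search can find a path to a desired state, here is a small self-contained sketch. Only the use of A* comes from the text above. The state (replicas, cores per replica), the two actions, their costs, the limits, and the latency model are assumptions made up for this example.

```python
import heapq
from itertools import count

TARGET_MS = 400.0
MAX_REPLICAS, MAX_CORES = 8, 2   # hypothetical limits that keep the search finite


def latency_ms(state):
    replicas, cores = state
    return 1600.0 / (replicas * cores)   # assumed toy latency model


def is_goal(state):
    return latency_ms(state) <= TARGET_MS


def successors(state):
    """Yield (action, next_state, cost) for each hypothetical actuator."""
    replicas, cores = state
    if replicas < MAX_REPLICAS:
        yield "scale_out", (replicas + 1, cores), 3.0
    if cores < MAX_CORES:
        yield "add_core", (replicas, cores + 1), 1.0


def heuristic(state):
    # Admissible: a non-goal state needs at least one action of cost >= 1.
    return 0.0 if is_goal(state) else 1.0


def a_star(start):
    tie = count()   # tie-breaker so the heap never compares states or paths
    frontier = [(heuristic(start), next(tie), 0.0, start, [])]
    best_cost = {start: 0.0}
    while frontier:
        _, _, cost, state, path = heapq.heappop(frontier)
        if is_goal(state):
            return path, cost
        if cost > best_cost.get(state, float("inf")):
            continue   # stale queue entry, a cheaper route was found already
        for action, nxt, step in successors(state):
            new_cost = cost + step
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                heapq.heappush(
                    frontier,
                    (new_cost + heuristic(nxt), next(tie), new_cost, nxt, path + [action]),
                )
    return None, float("inf")


plan, cost = a_star((1, 1))
print(plan, cost)
```

Each actuator plugin would contribute its own actions to `successors`. That is one way to picture why actuators are a natural extension point, though the real plugin interface may look quite different.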

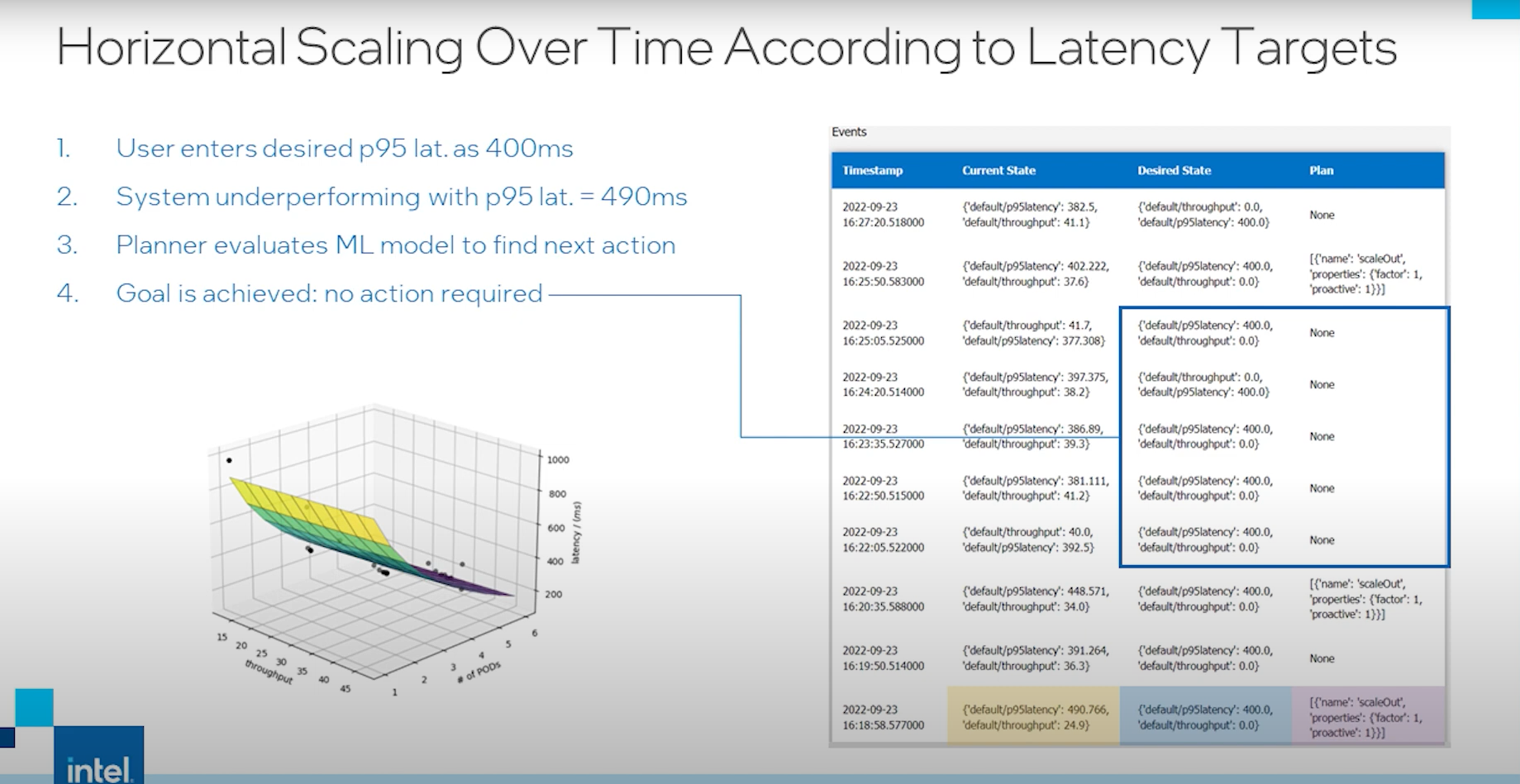

- User enters desired p95 latency as 400ms

- System is underperforming with a p95 latency of 490ms

- Planner evaluates the ML model to find the next action

- Goal is achieved: no action required

- Maintain target
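As a worked example of the comparison in the steps above, the sketch below computes a p95 latency from raw samples and checks it against the 400ms target. The sample values are made up so that the p95 comes out at 490ms, and the nearest-rank percentile method is our choice for illustration; the framework may compute its KPIs differently.

```python
def percentile_nearest_rank(samples, pct):
    """Return the pct-th percentile (integer pct in 1..100), nearest-rank method."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100   # integer ceil(pct * n / 100)
    return ordered[rank - 1]


TARGET_P95_MS = 400   # desired p95 latency entered by the user

# 20 made-up request latencies in ms: 300..470 in steps of 10, then 490 and 900.
latencies_ms = [300 + 10 * i for i in range(18)] + [490, 900]

observed_p95 = percentile_nearest_rank(latencies_ms, 95)
gap_ms = observed_p95 - TARGET_P95_MS
needs_action = gap_ms > 0   # True means the planner has to look for a next action

print(observed_p95, gap_ms, needs_action)
```

With 20 samples, the nearest-rank p95 is the 19th smallest value, so a single slow outlier (the 900ms request) does not dominate the KPI.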

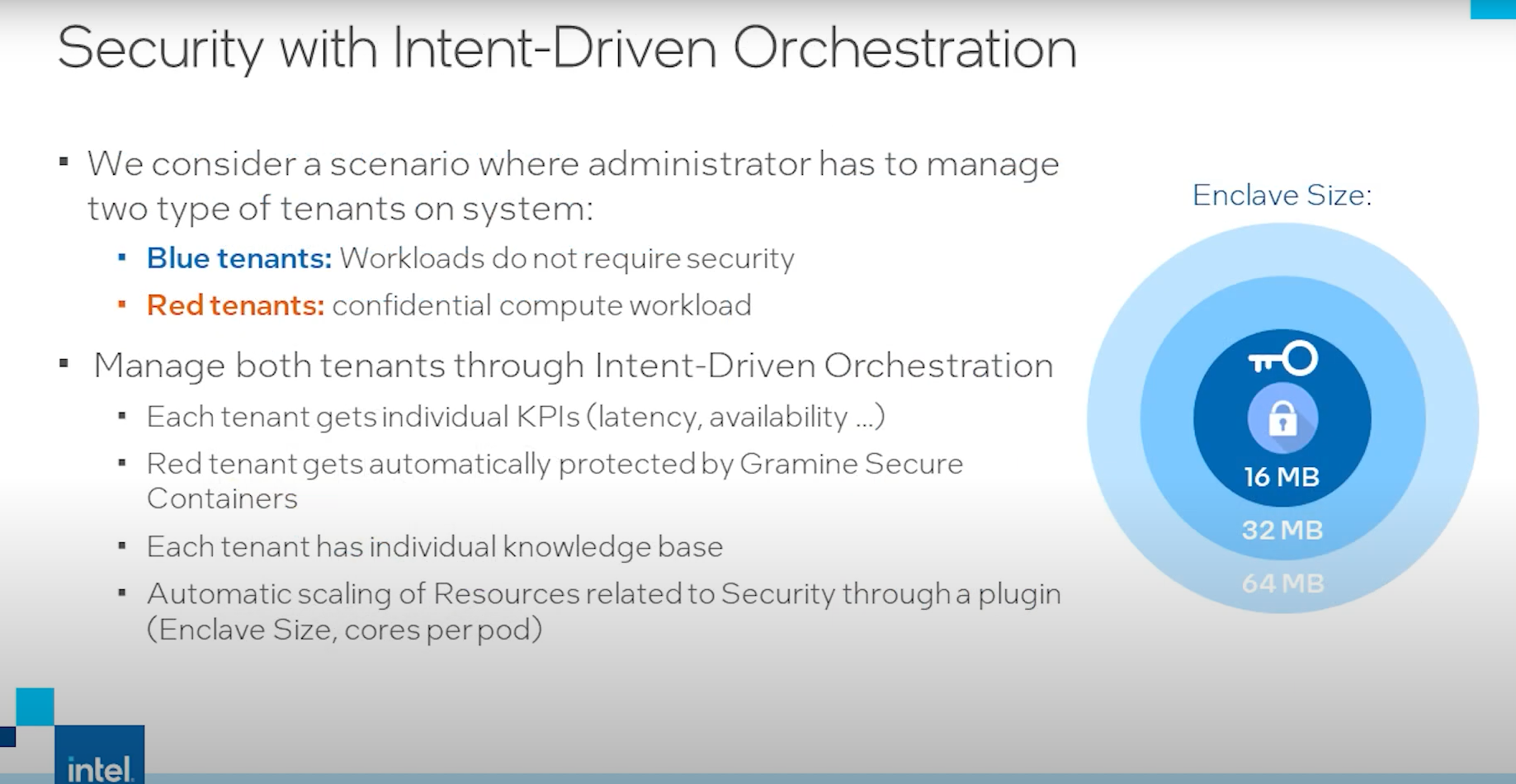

This approach can be applied to any resource. We can also use it to control certain performance-related characteristics of workloads that use secure enclaves. In the demo, we show two tenants (a blue nonsecure tenant and a red secure tenant), and both workloads can enter their targets in the knowledge base. For the secure tenant, we can use Intent-Driven Orchestration to automatically tweak the enclave size. This has an actual performance impact that helps us reach KPI targets faster.

This post offers a high-level understanding of Intent-Driven Orchestration and how it can help you with application management. Head to the links below to learn more.