- Performance Improvement Opportunities with NUMA Hardware

- NUMA Hardware Target Audience

- Modern Memory Subsystem Benefits for Database Codes, Linear Algebra Codes, Big Data, and Enterprise Storage

- Memory Performance in a Nutshell

- Data Persistence in a Nutshell

- Hardware and Software Approach for Using NUMA Systems

There is little conceptual difference between storing data in a computer and storing things in a home.

Important Characteristics of Movable Objects

- Space: A kitchen shelf holds less than a basement.

- Placement: Some things are on hand, while others are deeply buried on a back shelf in the distant corner of the basement. Cooking implements are stored in the kitchen, near where they are used; the lawn mower is stored in the garden shed, because it is used near there. Christmas tree lights spend most of the year on the shelf in the basement but are moved to the living room just in time, only to return to the basement a few weeks later.

- Latency: The dishwasher takes just as long to wash one plate as it does a full load, so we fill it before turning it on unless we need something in it right now.

- Bandwidth: We carry laundry in a basket because we can carry more during one trip.

- Density: Balloons can be stored and transported inflated or deflated, and – unless they are filled with helium which is not available at the destination – we usually buy, store, and move packages of deflated balloons, inflating them near the play location.

- Removal vs. storage: Sometimes you need to decide whether to throw something away and make a new one when needed, or store it in the attic where it takes up space and hinders access to other things.

Whether you are getting the guest room ready or running the computer application predicting tomorrow’s weather, you are trying to get something done before you need it done, at as low a cost as possible, given the circumstances.

You try to minimize the space required to store things, the time moving things, the idle time waiting for things to arrive, and the difficulties involved in using things once they arrive.

Important Characteristics of Computer Data and Storage

Space and Placement

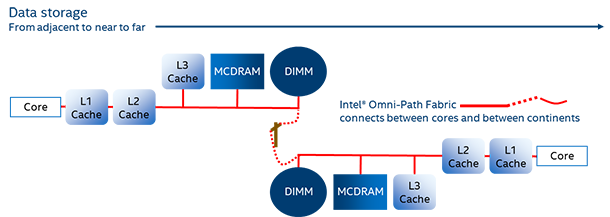

A typical modern computer can store a few hundred bytes of data in registers within each core of the processor. The data in the registers may be explicitly moved to or from more distant devices, or may be created by the core and lost when overwritten. The movement starts with reading or writing a register from/to an L1 (Level one) cache. Each core usually has a private L1 cache that can contain tens of thousands of bytes (32 KB is a typical size).

Other private and shared caches usually sit on the path between the L1 cache and main memory (although non-temporal loads and stores can bypass them). These caches range in size from the private 256-KB caches and many-MB shared caches in processors to the many-GB caches held in Multi-Channel DRAM (MCDRAM), High-Bandwidth Memory (HBM), or dual in-line memory modules (DIMMs).

The MCDRAM and HBM memories of Intel® Xeon Phi™ processors can be used as caches for more distant DIMMs, and these caches contain on the order of 16 to 60 GB of data.

The main memory of a personal computer or server tends to be in the 4-GB to 1500-GB range.

Future 3D XPoint™ DIMMs may make it practical for main memory to hold terabytes; 6 TB (6000 GB) is predicted. 3D XPoint DIMMs will probably have lower bandwidth than double data rate (DDR) DIMMs, so their contents may be cached in MCDRAM, HBM, or DDR DIMMs to compensate. Such DDR DIMM caches could be about 10% of the capacity of main memory, or about 600 GB, a far cry from the 4-KB main memory of machines from the early 1970s.
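The capacity arithmetic above can be checked in a few lines. This is only a sketch: it assumes decimal units (the article equates 6 TB with 6000 GB) and treats 4 KB as 4000 bytes, and the variable names are illustrative.

```python
# Assumption: decimal units throughout (1 GB = 10**9 bytes, 1 KB = 10**3 bytes).
MAIN_MEMORY_GB = 6000          # predicted 3D XPoint main memory (6 TB)
CACHE_PERCENT = 10             # cache sized at about 10% of main memory
EARLY_1970S_MEMORY_KB = 4      # main memory of an early-1970s machine

cache_gb = MAIN_MEMORY_GB * CACHE_PERCENT // 100
growth_factor = (cache_gb * 10**9) // (EARLY_1970S_MEMORY_KB * 10**3)

print(f"DIMM cache size: {cache_gb} GB")
print(f"That is {growth_factor:,} times the 4-KB main memory")
```

Under these assumptions the cache alone is 150 million times larger than that early main memory.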

The fundamental difference between caches and main memory is:

- Caches look somewhere else for data they do not hold.

- Main memory is the end of the line – if asked for data it does not have, it does not look elsewhere for it.
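The distinction above can be sketched as a toy model. The class names, the FIFO eviction policy, and the tiny capacities are illustrative assumptions, not a description of any real hardware: a `Cache` forwards a miss to the next level, while `MainMemory` has nowhere else to look.

```python
class MainMemory:
    """End of the line: answers only from what it holds."""

    def __init__(self, contents):
        self.contents = dict(contents)

    def read(self, address):
        # There is no further level to consult, so a missing address
        # simply fails (KeyError) instead of being fetched from elsewhere.
        return self.contents[address]


class Cache:
    """Holds a few entries and asks the next level for anything it lacks."""

    def __init__(self, next_level, capacity):
        self.next_level = next_level
        self.capacity = capacity
        self.lines = {}  # address -> value, kept in insertion order
        self.hits = 0
        self.misses = 0

    def read(self, address):
        if address in self.lines:
            self.hits += 1
            return self.lines[address]
        self.misses += 1
        value = self.next_level.read(address)  # look somewhere else
        if len(self.lines) >= self.capacity:
            # Evict the oldest entry (FIFO, chosen only for brevity).
            self.lines.pop(next(iter(self.lines)))
        self.lines[address] = value
        return value


memory = MainMemory({addr: addr * 10 for addr in range(8)})
l2 = Cache(memory, capacity=4)
l1 = Cache(l2, capacity=2)

values = [l1.read(addr) for addr in (0, 1, 0, 2, 0)]
print(values, "L1 hits:", l1.hits, "L2 hits:", l2.hits)
```

The last read of address 0 misses in the small L1 model (it was evicted) but hits in the larger L2 model, so the request never reaches main memory.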

Data can survive in registers, caches, and main memory while the computer has power.

Beyond the main memory are devices where data can survive when a computer loses power. These devices can usually hold more data than main memory. Hard drives, solid-state drives (SSDs), and removable media such as DVDs are all examples of such devices for persisting data.

If these devices were faster than main memory, you would use them as main memory. You do not, because the processor cannot access data on them as quickly as it can access data in main memory; both latency and bandwidth hold it back.

3D XPoint technology, and any DIMMs built using it, may keep contents across power failures, so you can use them as both main memory and as a persistent data store. 3D XPoint technology may be used in Optane SSDs.

Latency, Bandwidth, and Density

Latency is how long it takes from when you start a request for data until the data arrives.

It is hard to measure latency in many situations because both the compiler and the hardware reorder many operations, including requests to fetch data. They also reorder instructions to do other things while waiting for data to arrive. They may even predict what the fetched data is going to be and act on that prediction, so the arrival of the data simply makes the results of this prediction visible. Of course, if the prediction is wrong, all that work must be redone. Because of this, latency matters most when the compiler and hardware cannot find useful work to do while waiting.

Bandwidth is the rate at which the data arrives, however long that is after it is requested. The usual example involves sending a ship carrying 1 million DVDs across the ocean every day. The latency might be six days, but the bandwidth is tens of GB per second.
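The ship example can be worked through numerically. This sketch assumes a single-layer DVD capacity of 4.7 GB, which the article does not state; the constant and function names are illustrative.

```python
DVDS_PER_SHIP = 1_000_000
DVD_CAPACITY_GB = 4.7            # assumption: single-layer DVD
SECONDS_PER_DAY = 24 * 60 * 60
CROSSING_DAYS = 6                # latency from the example above


def ship_bandwidth_gb_per_s(dvds_per_day, gb_per_dvd):
    """Sustained bandwidth when one ship arrives every day."""
    return dvds_per_day * gb_per_dvd / SECONDS_PER_DAY


bandwidth = ship_bandwidth_gb_per_s(DVDS_PER_SHIP, DVD_CAPACITY_GB)
latency_seconds = CROSSING_DAYS * SECONDS_PER_DAY

print(f"Latency:   {latency_seconds:,} seconds")
print(f"Bandwidth: {bandwidth:.1f} GB/second")
```

With these assumptions the bandwidth comes out at roughly 54 GB/second, even though each individual DVD takes more than half a million seconds to arrive.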

As for density, many data transfers between the core and memory devices move 4 bytes, 8 bytes, or more at a time, but not all of that data may be used at the destination.
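One way to quantify density is the fraction of transferred bytes that the destination actually uses. The sketch below assumes a 64-byte transfer granularity (a common cache-line size, not a figure from this article) and uses hypothetical helper names.

```python
def useful_fraction(bytes_used, bytes_transferred):
    """Share of a transfer that the destination actually consumes."""
    if bytes_transferred <= 0:
        raise ValueError("bytes_transferred must be positive")
    return bytes_used / bytes_transferred


def effective_bandwidth(raw_gb_per_s, bytes_used, bytes_transferred):
    """Raw bandwidth scaled down by the useful share of each transfer."""
    return raw_gb_per_s * useful_fraction(bytes_used, bytes_transferred)


# Assumption: each transfer moves 64 bytes, but the code reads one 8-byte value.
fraction = useful_fraction(bytes_used=8, bytes_transferred=64)
effective = effective_bandwidth(100.0, bytes_used=8, bytes_transferred=64)

print(f"Useful fraction:     {fraction:.3f}")
print(f"Effective bandwidth: {effective:.1f} GB/second of a raw 100 GB/second")
```

In this scenario only one eighth of the raw bandwidth carries data the application wants, which is why laying out data so that neighboring bytes are used together matters.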

Size, Latency, and Bandwidth of Memory Subsystem Components

Assuming you have a large processor (about 16 cores), the following table summarizes, for 2016, the approximate totals of data present in and moving through the system.

| Memory | Size | Latency | Bandwidth |
|---|---|---|---|
| L1 cache | 32 KB | 1 nanosecond | 1 TB/second |
| L2 cache | 256 KB | 4 nanoseconds | 1 TB/second; sometimes shared by two cores |
| L3 cache | 8 MB or more | 10x slower than L2 | >400 GB/second |
| MCDRAM | | 2x slower than L3 | 400 GB/second |
| Main memory on DDR DIMMs | 4 GB-1 TB | Similar to MCDRAM | 100 GB/second |
| Main memory on Cornelis* Omni-Path Fabric | Limited only by cost | Depends on distance | Depends on distance and hardware |
| I/O devices on memory bus | 6 TB | 100x-1000x slower than memory | 25 GB/second |
| I/O devices on PCIe bus | Limited only by cost | From less than milliseconds to minutes | GB-TB/hour; depends on distance and hardware |
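To see how latency and bandwidth combine, the table's figures can be fed into a deliberately simple model: time = latency + bytes / bandwidth. This is a sketch under stated assumptions. It ignores the overlap, prefetching, and reordering described earlier. It also reads "10x slower than L2" as 40 ns, and it takes MCDRAM and DDR as 80 ns each. The names `LEVELS` and `transfer_time_ns` are illustrative.

```python
# (latency in ns, bandwidth in bytes per ns); 1 TB/second = 1000 bytes/ns.
# Assumption: relative latencies in the table are converted to absolute values.
LEVELS = {
    "L1 cache": (1.0, 1000.0),
    "L2 cache": (4.0, 1000.0),
    "L3 cache": (40.0, 400.0),         # 10x slower than L2
    "MCDRAM": (80.0, 400.0),           # 2x slower than L3
    "DDR main memory": (80.0, 100.0),  # latency similar to MCDRAM
}


def transfer_time_ns(level, num_bytes):
    """Estimated time to fetch num_bytes from one level, with no overlap."""
    latency_ns, bytes_per_ns = LEVELS[level]
    return latency_ns + num_bytes / bytes_per_ns


for size in (8, 1_000_000):
    print(f"Fetching {size:,} bytes:")
    for level in LEVELS:
        print(f"  {level:<16} {transfer_time_ns(level, size):>10.3f} ns")
```

For an 8-byte fetch the estimate is almost entirely latency, while for a megabyte the bandwidth term dominates, so in this model MCDRAM beats DDR on large transfers despite the similar latency.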

Summary

The previous article, Modern Memory Subsystem Benefits for Database Codes, Linear Algebra Codes, Big Data, and Enterprise Storage, aligned new memory subsystem hardware technologies with the needs of applications. This article provides a deeper understanding of hardware capabilities when used for the ordinary variables and heap-allocated data of an application: data that did not exist before the application started and evaporates when it ends. The next article, Data Persistence in a Nutshell, introduces the use of non-volatile memory in place of files for keeping data from one execution of an application to the next.

About the Author

Bevin Brett is a Principal Engineer at Intel Corporation, working on tools to help programmers and system users improve application performance. He was born and raised in New Zealand, where he earned a B.Sc (Hons) in Mathematics before moving to Australia and then New Hampshire, pursuing first an education and then a career in software engineering.