Abstract

This article showcases the implementation of object detection on a PC video stream using the Intel® Distribution of OpenVINO™ toolkit on Intel® processors. The functional problem tackled is the identification of hand gestures and skeletal input to enrich a game’s user experience. This work involved experiments using a video feed from an Intel® RealSense™ Depth Camera D435, together with an API and functions from the Intel® RealSense™ SDK 2.0. A 3D gesture recognition and tracking application, called XRDrive Sim, was implemented in the Python* programming language. XRDrive Sim consists of a 3D augmented steering wheel controlled by hand gestures. The steering wheel is used to navigate the roads of the virtual environment in 3D space.

Why XRDrive Sim

XRDrive Sim uses artificial intelligence (AI) to provide immersive experiences, support immersive driving lessons, and help improve vehicle safety standards.

Computer Vision: What It Is and How It’s Used in XRDrive Sim

Computer vision is the science and technology of machines that see. As a scientific discipline, computer vision is concerned with building artificial systems that obtain information from images or multi-dimensional data. A significant part of artificial intelligence deals with planning or deliberation for systems that perform mechanical actions, such as moving a robot through an environment. This type of processing typically needs input data from a computer vision system, which acts as a vision sensor and provides high-level information about the environment and the robot. Other areas sometimes classed as artificial intelligence, including pattern recognition and learning techniques, are also used in computer vision.

XRDrive uses hand gesture recognition to control an augmented steering wheel for navigating the roads of the virtual environment in 3D space. Gesture recognition has long been an interesting problem in the computer vision community, particularly because segmenting a foreground object from a cluttered background in real time is challenging. The most obvious reason is the semantic gap between a human and a computer looking at the same image. Humans can easily figure out what’s in an image, but for a computer, images are just three-dimensional matrices. Because of this, computer vision problems remain a challenge.
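Depth data makes this segmentation much easier than color alone. The hand is usually the object closest to the camera, so a simple depth range can separate it from a cluttered background. The minimal sketch below assumes a single-channel depth map in millimeters, which is the D400 series default depth unit. It also assumes a hypothetical working range of 200 to 600 mm. The function name and thresholds are illustrative only and are not taken from XRDrive Sim.

```python
import numpy as np


def segment_hand_by_depth(depth_mm: np.ndarray,
                          near_mm: int = 200,
                          far_mm: int = 600) -> np.ndarray:
    """Return a boolean foreground mask for pixels between near_mm and far_mm.

    depth_mm is an (H, W) array of depth values in millimeters. RealSense
    cameras report 0 where depth is unknown; those pixels are excluded
    automatically because 0 < near_mm.
    """
    if depth_mm.ndim != 2:
        raise ValueError("expected a single-channel (H, W) depth map")
    return (depth_mm >= near_mm) & (depth_mm <= far_mm)


if __name__ == "__main__":
    # Synthetic 4x4 depth map: a "hand" at 400 mm in front of a wall at 1500 mm.
    depth = np.full((4, 4), 1500, dtype=np.uint16)
    depth[1:3, 1:3] = 400
    depth[0, 0] = 0  # a pixel with no depth reading
    mask = segment_hand_by_depth(depth)
    print(int(mask.sum()))  # number of foreground (hand) pixels
```

A fixed depth range is the simplest possible choice. A real pipeline might instead track the nearest blob or adapt the range per user.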

XRDrive uses the DeepHandNet prediction network for accurate 3D hand and human pose estimation from a single depth map. DeepHandNet is well suited for XRDrive Sim since it is trained on a hand dataset obtained using the depth sensors from the Intel RealSense Depth Camera.

The Evolution of Hand Gestures

Using cameras to recognize hand gestures started very early, along with the development of the first wearable data gloves. At that time, there were many hurdles in interpreting camera-based gestures [4]. Most researchers initially used gloves for interaction. Vision-based hand gesture recognition for 2D graphical interfaces came next, using color extraction through optical flow and feature point extraction from the captured hand image. New ideas and algorithms then became available for 3D applications involving moving machine parts or humans. This evolution has led to low-cost interface devices for interacting with objects in a virtual environment using hand gestures.

With the emergence of Extended Reality (XR), which includes virtual reality and augmented reality, operators no longer rely on the keyboard and mouse as before; they now use mobile controllers or hand gestures. Computer vision can recognize gestures so that the computer can be controlled remotely by gestures alone. Because users control the computer by moving their bodies, they do not have to remain seated. Moreover, they can play a game or teach others in a much more immersive way by moving their hands, head, and body.

Audience

The code in this repository is written for computer vision and machine learning students and researchers interested in developing hand gesture applications using the Intel® RealSense™ D400 Series depth modules. The convolutional neural network (CNN) implementation provided is intended as a reference for training networks on annotated ground-truth data. Researchers may instead prefer to import captured datasets into other frameworks, such as TensorFlow* and Caffe*, and then optimize the resulting models using the Model Optimizer from the Intel Distribution of OpenVINO toolkit. The OpenVINO toolkit Model Optimizer [1] was used because it is a cross-platform command-line tool that enables rapid deployment of deep learning models for optimal execution on end-point target devices.

This project provides Python* code that demonstrates hand gesture control using a PC camera or depth data from Intel® RealSense™ Depth Cameras. Additionally, this project showcases the utility of CNNs as a key component of real-time hand tracking pipelines using the Intel Distribution of OpenVINO toolkit. Two demo Jupyter* Notebooks are provided, showing hand gesture control from a webcam and from a depth camera. Vimeo* demo videos showing XRDrive functionality are also available.

XRDrive Sim Hardware and Software

XRDrive is an inference-based application that runs on a laptop, accelerated by power-efficient Intel processors and Intel processor graphics.

Hardware

- ASUS ZENBOOK* UX430

- 8th generation Intel® Core™ i7 processor

- 16 GB DDR4 RAM

- 512 GB SSD storage

- Intel RealSense D435 Depth Camera

Software

- Ubuntu* 18.04 LTS

- ROS2 [3]

- Intel Distribution of OpenVINO toolkit

- Jupyter Notebook

- MXNet*

- Pyrealsense2

- PyMouse

Experimental Setup Process

- Environment setup

- Define hand gestures

- Data collection

- Train the deep learning model

- Model optimization

- Run the XRDrive Sim demo

Environment Setup

- Install the Robot Operating System 2 (ROS2) (Robotic Operating System, 2018)

  - ROS2 Overview

  - Linux* Development Setup

- Install the Intel Distribution of OpenVINO toolkit

  - Overview of Intel® Distribution of OpenVINO™ toolkit

  - Linux Installation Guide for Intel Distribution of OpenVINO toolkit

Note: Use root privileges to run the installer when installing the core components.

- Install the OpenCL* Driver for GPU

cd /opt/intel/computer_vision_sdk/install_dependencies
sudo ./install_NEO_OCL_driver.sh

- Install the Intel RealSense SDK 2.0

Note: The 2018 Release 2 of the Intel Distribution of OpenVINO toolkit (used for this article) uses OpenCV* 3.4.2 by default with its Inference Engine [2]. When OpenCV is built with Inference Engine support, explicitly selecting the Inference Engine backend is not necessary. Also, the Inference Engine backend is the only available option (and is enabled by default) when the loaded model is in the Intel Distribution of OpenVINO toolkit Model Optimizer format.
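In OpenCV's DNN module, the backend and target are chosen on the loaded network object. The sketch below shows those explicit calls on a Model Optimizer IR using cv2.dnn.readNet, setPreferableBackend, setPreferableTarget, setInput, and forward, which are standard OpenCV DNN calls. Several details are assumptions rather than details from the XRDrive Sim code:

- the IR file names (hypothetical deephandnet.xml and deephandnet.bin);
- the [0, 1] input scaling;
- the helper function names.

```python
import numpy as np


def to_nchw_blob(image: np.ndarray, scale: float = 1.0 / 255.0) -> np.ndarray:
    """Convert an (H, W) or (H, W, C) image into a float32 (1, C, H, W) blob.

    Roughly what cv2.dnn.blobFromImage produces when only a scale factor is
    applied (no resizing, mean subtraction, or channel swap).
    """
    if image.ndim == 2:
        image = image[:, :, np.newaxis]  # add a channel axis for depth/gray input
    blob = image.astype(np.float32) * scale
    return np.ascontiguousarray(blob.transpose(2, 0, 1)[np.newaxis, ...])


def load_ir_network(xml_path: str, bin_path: str, use_gpu: bool = False):
    """Load a Model Optimizer IR with OpenCV DNN and select the Inference Engine backend."""
    import cv2  # imported lazily so to_nchw_blob works without OpenCV installed

    net = cv2.dnn.readNet(xml_path, bin_path)
    # Explicit backend selection; optional when OpenCV is built with Inference Engine support.
    net.setPreferableBackend(cv2.dnn.DNN_BACKEND_INFERENCE_ENGINE)
    # DNN_TARGET_OPENCL runs on Intel processor graphics; DNN_TARGET_CPU on the CPU.
    net.setPreferableTarget(cv2.dnn.DNN_TARGET_OPENCL if use_gpu else cv2.dnn.DNN_TARGET_CPU)
    return net


def predict(net, image: np.ndarray) -> np.ndarray:
    """Run one forward pass on a preprocessed image and return the raw network output."""
    net.setInput(to_nchw_blob(image))
    return net.forward()
```

Usage would be along the lines of net = load_ir_network("deephandnet.xml", "deephandnet.bin"), followed by scores = predict(net, hand_image), where hand_image is a preprocessed hand crop.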

Define Hand Gestures

Once defined, the hand gestures control both the keyboard and the mouse: keyboard controls are assigned to the left hand, and mouse controls are assigned to the right hand. The standard set has ten key features:

- Closed hand

- Open hand

- Thumb only

- Additional index finger

- Additional middle finger

- Folding first finger

- Folding second finger

- Folding middle finger

- Folding index finger

- Folding thumb
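Later paragraphs refer to these features by their position in the list (1 to 10), so a lookup table makes that numbering explicit. The sketch below assumes, hypothetically, that a classifier outputs one score per feature in this order. HAND_FEATURES and decode_feature are illustrative names, not the XRDrive Sim label encoding.

```python
from typing import Sequence, Tuple

# The ten key features, numbered 1-10 in the order listed above.
HAND_FEATURES = (
    "Closed hand",               # 1
    "Open hand",                 # 2
    "Thumb only",                # 3
    "Additional index finger",   # 4
    "Additional middle finger",  # 5
    "Folding first finger",      # 6
    "Folding second finger",     # 7
    "Folding middle finger",     # 8
    "Folding index finger",      # 9
    "Folding thumb",             # 10
)


def decode_feature(scores: Sequence[float]) -> Tuple[int, str]:
    """Return (1-based feature number, feature name) for the highest score.

    Assumes one score per feature, in the order of HAND_FEATURES.
    """
    if len(scores) != len(HAND_FEATURES):
        raise ValueError(f"expected {len(HAND_FEATURES)} scores, got {len(scores)}")
    best = max(range(len(scores)), key=lambda i: scores[i])
    return best + 1, HAND_FEATURES[best]
```

For example, a score vector whose largest value is in the sixth position decodes to (6, "Folding first finger").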

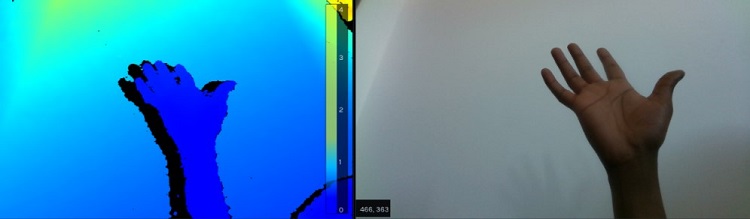

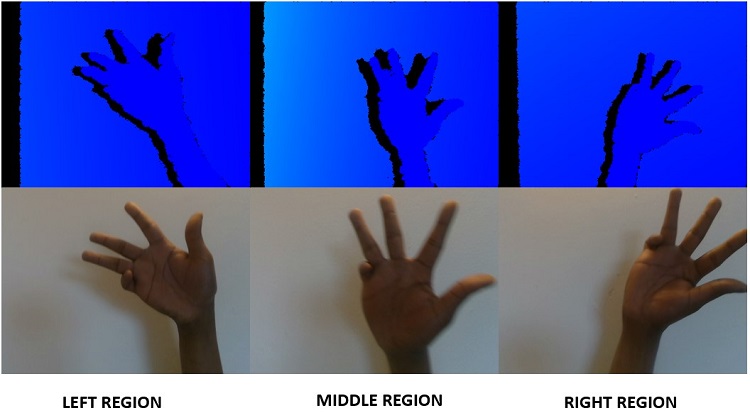

The left-hand area is divided into three regions: left, middle, and right. Using features 6, 7, 8, 9, and 10 from the list above as inputs allows 5 * 3 = 15 different gestures in each region and 15 * 3 = 45 gestures across all regions.

Figure 1. Left, middle, and right regions of the left side. These are left-side images; images are flipped horizontally when captured.

Using features 3, 4, and 5 as controls allows 3 * 3 = 9 controls and 15 * 9 = 135 different gestures, which cover the whole range of keys (numbers, lowercase letters, uppercase letters, special keys, and controls).
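One way to picture the three regions is as equal-width vertical thirds of the captured frame. The sketch below makes that assumption; the actual region boundaries in XRDrive Sim are not specified. It also shows the horizontal flip mentioned in the figure caption, done with NumPy slicing. mirror_frame and region_of are hypothetical helper names.

```python
import numpy as np

REGIONS = ("left", "middle", "right")


def mirror_frame(frame: np.ndarray) -> np.ndarray:
    """Flip a frame horizontally (same result as cv2.flip(frame, 1))."""
    return frame[:, ::-1]


def region_of(x: float, frame_width: int) -> str:
    """Map a horizontal pixel coordinate to one of three equal-width regions."""
    if not 0 <= x < frame_width:
        raise ValueError("x must lie inside the frame")
    # 3 * x / width is in [0, 3); min() guards against float rounding at the edge.
    index = min(int(3 * x / frame_width), 2)
    return REGIONS[index]
```

With a 640-pixel-wide frame, a hand centered at x = 320 falls in the middle region.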

When the right hand is used as a mouse, the ten features cover all mouse inputs. The center finger acts as the mouse cursor, and the computer's mouse cursor tracks the center of the right hand.
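Cursor tracking can be sketched as "take the centroid of the right-hand mask and scale it to screen coordinates." The sketch below makes three assumptions:

- the hand has already been segmented into a boolean mask;
- the mask centroid stands in for "the center of the right hand";
- frame-to-screen mapping is linear.

The cursor move uses PyMouse's screen_size and move methods. hand_center, to_screen, and move_cursor are hypothetical names, not XRDrive Sim functions.

```python
from typing import Optional, Tuple

import numpy as np


def hand_center(mask: np.ndarray) -> Optional[Tuple[float, float]]:
    """Return the (x, y) centroid of a boolean hand mask, or None if it is empty."""
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        return None
    return float(xs.mean()), float(ys.mean())


def to_screen(center: Tuple[float, float],
              frame_size: Tuple[int, int],
              screen_size: Tuple[int, int]) -> Tuple[int, int]:
    """Linearly scale a point from frame to screen coordinates; sizes are (width, height)."""
    (x, y), (fw, fh), (sw, sh) = center, frame_size, screen_size
    sx = int(round(x / max(fw - 1, 1) * (sw - 1)))
    sy = int(round(y / max(fh - 1, 1) * (sh - 1)))
    return sx, sy


def move_cursor(mask: np.ndarray) -> None:
    """Move the system cursor to the hand center (needs PyMouse and a display)."""
    from pymouse import PyMouse  # imported lazily so the helpers above work headless

    mouse = PyMouse()
    center = hand_center(mask)
    if center is not None:
        h, w = mask.shape
        mouse.move(*to_screen(center, (w, h), mouse.screen_size()))
```

In practice, some smoothing of the centroid across frames would likely be needed to keep the cursor from jittering.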

Data Collection

Training DeepHandNet required a significant amount of data, which was self-collected from a variety of sources and includes 2,000 hand images.
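Collecting depth images for such a dataset with pyrealsense2 could look like the sketch below. rs.pipeline, rs.config, enable_stream, wait_for_frames, get_depth_frame, and get_data are standard pyrealsense2 calls. The following are assumptions for illustration, not the project's capture code:

- the 640 x 480 at 30 fps stream settings;
- the 1000 mm clipping range;
- both helper functions.

```python
import numpy as np


def depth_to_uint8(depth_mm: np.ndarray, max_mm: int = 1000) -> np.ndarray:
    """Clip depth to [0, max_mm] millimeters and rescale to 0-255 for saving as an image."""
    clipped = np.clip(depth_mm.astype(np.float32), 0, max_mm)
    return (clipped * (255.0 / max_mm)).round().astype(np.uint8)


def capture_depth_frames(count: int, width: int = 640, height: int = 480, fps: int = 30):
    """Yield up to `count` depth frames (uint16 arrays) from a connected RealSense camera."""
    import pyrealsense2 as rs  # imported lazily; requires the RealSense SDK and a camera

    pipeline = rs.pipeline()
    config = rs.config()
    config.enable_stream(rs.stream.depth, width, height, rs.format.z16, fps)
    pipeline.start(config)
    try:
        for _ in range(count):
            frames = pipeline.wait_for_frames()
            depth = frames.get_depth_frame()
            if depth:
                # Copy so the array stays valid after the frame is released.
                yield np.asanyarray(depth.get_data()).copy()
    finally:
        pipeline.stop()
```

Each yielded frame can be passed through depth_to_uint8 and written to disk with any image writer, such as OpenCV's cv2.imwrite.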

Train the Deep Learning Model

Train the model to recognize hand gestures in real-time.

- The model is trained with 64x64 pixel images on the 8th generation Intel Core i7 CPU.

- Data preprocessing is done using OpenCV and NumPy to generate masked hand images. To preprocess the data, run xr_preprocessing_data.ipynb.

- To recreate the model, run xrdrive_train_model.ipynb. It reads the preprocessed hand and mask datasets and trains the model for 400 epochs.
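A masked-hand preprocessing step of this kind can be sketched as "zero the background, crop to the hand, resize to 64 x 64." The sketch below assumes single-channel 2D image and mask arrays. It uses a NumPy nearest-neighbor resize as a dependency-free stand-in for cv2.resize. It is not the contents of xr_preprocessing_data.ipynb, and the function names are hypothetical.

```python
import numpy as np


def crop_to_mask(image: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Zero out background pixels and crop to the bounding box of the mask."""
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        raise ValueError("mask is empty")
    masked = np.where(mask, image, 0)
    return masked[ys.min():ys.max() + 1, xs.min():xs.max() + 1]


def resize_nearest(image: np.ndarray, size: int = 64) -> np.ndarray:
    """Nearest-neighbor resize of a 2D image to size x size."""
    h, w = image.shape
    rows = np.arange(size) * h // size  # source row for each output row
    cols = np.arange(size) * w // size  # source column for each output column
    return image[rows[:, None], cols[None, :]]


def preprocess(image: np.ndarray, mask: np.ndarray, size: int = 64) -> np.ndarray:
    """Produce one size x size masked hand image for training."""
    return resize_nearest(crop_to_mask(image, mask), size)
```

Cropping to the mask before resizing keeps the hand at a similar scale in every training image, regardless of where it appeared in the frame.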

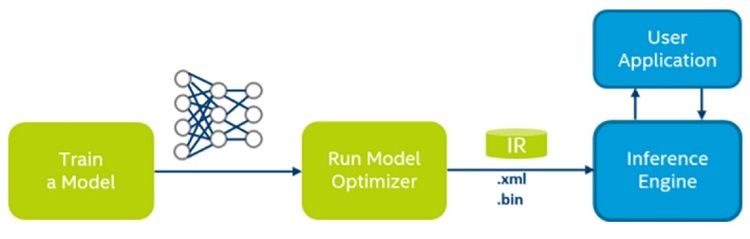

Model Optimization

This step uses the OpenVINO toolkit Model Optimizer to convert the trained MXNet* model of DeepHandNet into the Intermediate Representation (IR) used by the Inference Engine and to optimize it.

The schematic below illustrates the typical OpenVINO toolkit workflow for deploying a trained deep learning model; in our case, the model was trained with MXNet.

[Figure: Typical OpenVINO toolkit workflow for deploying a trained deep learning model]

To convert an MXNet* model, go to the <INSTALL_DIR>/deployment_tools/model_optimizer directory.

For an MXNet model stored as model-file-symbol.json and model-file-0390.params, run the Model Optimizer launch script mo.py (or its MXNet-specific variant, mo_mxnet.py), specifying the path to the input model file.

- Optimize DeepHandNet: set the Intel Distribution of OpenVINO toolkit environment, then run:

python3 mo_mxnet.py --input_model chkpt-0390.params
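The checkpoint file names follow MXNet's save_checkpoint convention, which writes prefix-symbol.json and prefix-NNNN.params (zero-padded epoch number). The sketch below builds and runs the same Model Optimizer command from Python. --input_model and --output_dir are standard Model Optimizer options. The wrapper functions are hypothetical helpers, not part of the XRDrive Sim repository.

```python
import subprocess
from pathlib import Path
from typing import List, Tuple


def mxnet_checkpoint_files(prefix: str, epoch: int) -> Tuple[str, str]:
    """Return the (symbol, params) file names written by MXNet's save_checkpoint."""
    return f"{prefix}-symbol.json", f"{prefix}-{epoch:04d}.params"


def model_optimizer_command(params_file: str, output_dir: str = ".") -> List[str]:
    """Build the Model Optimizer command line for an MXNet checkpoint."""
    return ["python3", "mo_mxnet.py",
            "--input_model", params_file,
            "--output_dir", output_dir]


def run_model_optimizer(mo_dir: str, params_file: str, output_dir: str = ".") -> None:
    """Run the Model Optimizer from its install directory; raises CalledProcessError on failure."""
    cmd = model_optimizer_command(str(Path(params_file).resolve()),
                                  str(Path(output_dir).resolve()))
    subprocess.run(cmd, cwd=mo_dir, check=True)
```

For the DeepHandNet checkpoint above, mxnet_checkpoint_files("chkpt", 390) gives the pair chkpt-symbol.json and chkpt-0390.params. Both files need to be present for the conversion.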

Run the XRDrive Sim Demo

Download the code from the GitHub* repository for XRDrive Sim.

Preparation:

- Set the Intel Distribution of OpenVINO toolkit ENV:

export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/opt/intel/computer_vision_sdk/deployment_tools/inference_engine/samples/build/intel64/Release/lib

- Launch Jupyter Notebook in root mode from Anaconda*:

jupyter notebook --allow-root

- Run xr_preprocessing_data.ipynb to preprocess the data, or download the XRDrive Sim preprocessing data.

- Run xrdrive_train_model.ipynb to train DeepHandNet, or download the pretrained DeepHandNet model.

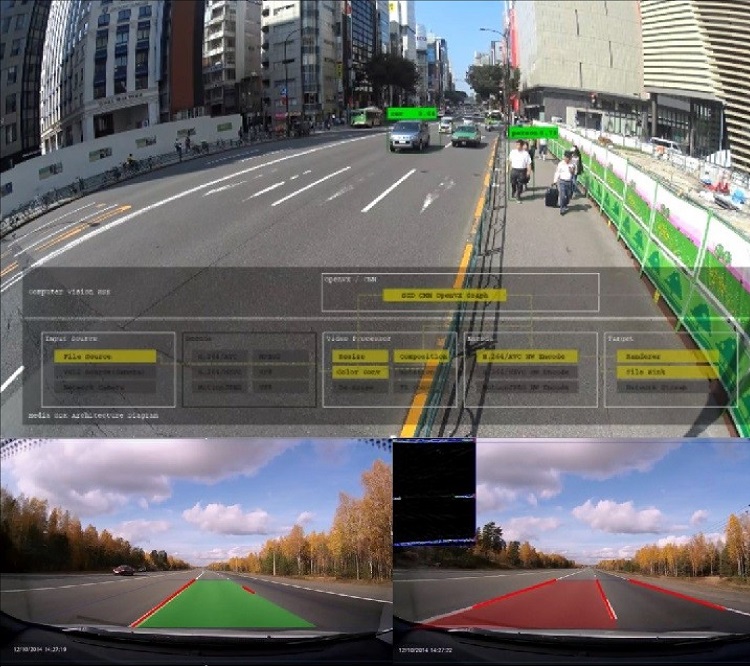


Conclusion

The development of XRDrive Sim, with its rich hand-gesture-based interface for driving school training simulations, was a tedious process requiring expertise in computer vision and machine learning. We addressed this by developing a deep neural network model that recognizes hand gestures with high accuracy, using the MXNet deep learning framework.

The hand gesture model was optimized using the Intel Distribution of OpenVINO toolkit’s Model Optimizer to deploy the model in a scalable fashion. Deploying deep learning networks from the training environment to embedded platforms for inference poses several technical challenges. To overcome those challenges, we paired the Intel Distribution of OpenVINO toolkit Inference Engine with an OpenCV backend. The team then used this interface to control the mouse and keyboard in a 3D racing game on Ubuntu 18.04. The XRDrive Sim experimental results indicate that the model enables successful gesture recognition with a very low computational load, making a gesture-based interface practical on Intel processors.

References

- [1] Model Optimizer Developer Guide

- [2] Inference Engine Developer Guide

- [3] ROS2 OpenVINO Toolkit

- [4] Premaratne, P. (2014). Human Computer Interaction Using Hand Gestures. Shanghai, China.