Window: Summary - Memory Usage

Use the Summary window as the starting point for performance analysis with Intel® VTune™ Profiler. To access this window, select the Memory Usage viewpoint and click the Summary sub-tab in the result tab.

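Depending on the analysis type, the Summary window provides the following application-level statistics in the Memory Usage viewpoint: Analysis Metrics, System Bandwidth, Bandwidth Utilization Histogram, Top Memory Objects by Latency, Top Tasks, Latency Histogram, and Collection and Platform Info.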

Click the Copy to Clipboard button to copy the content of the selected summary section to the clipboard.

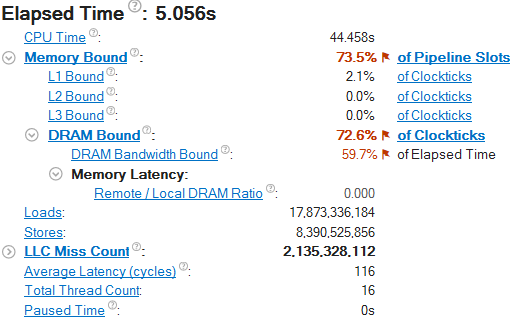

Analysis Metrics

The Summary window displays a list of memory-related CPU metrics that help you estimate overall memory usage during application execution. For a metric description, hover over the corresponding question mark icon to read the pop-up help.

Memory Bound metrics are measured either in Clockticks or in Pipeline Slots. Metrics measured in Clockticks are less precise than metrics measured in Pipeline Slots because they may overlap, so their sum at a given level does not necessarily match the parent metric value. Such metrics are still useful for identifying the dominant performance bottleneck in the code.
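The overlap effect can be illustrated with a toy model. The sketch below is not how VTune Profiler computes its metrics. It only shows why overlapping stall intervals can make child values add up to more than the parent. The interval data and the names `total_covered`, `l1_stalls`, and `dram_stalls` are hypothetical.

```python
def total_covered(intervals):
    """Return the length of the union of half-open [start, end) cycle intervals."""
    covered = 0
    current_end = None
    for start, end in sorted(intervals):
        if current_end is None or start >= current_end:
            # Disjoint from everything seen so far: count the whole interval.
            covered += end - start
            current_end = end
        elif end > current_end:
            # Partial overlap: count only the part not yet covered.
            covered += end - current_end
            current_end = end
    return covered


# Hypothetical stall intervals (in clockticks) attributed to two child metrics.
l1_stalls = [(0, 100), (300, 350)]
dram_stalls = [(60, 200)]

sum_of_children = total_covered(l1_stalls) + total_covered(dram_stalls)
parent = total_covered(l1_stalls + dram_stalls)

print(sum_of_children)  # 290
print(parent)           # 250
```

In this model the two children overlap for 40 clockticks, so their sum exceeds the parent by exactly that amount.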

Hover over a value flagged as a performance issue to read the recommendation for further analysis. For example, a high Memory Bound value typically indicates that a significant fraction of the execution pipeline slots could be stalled due to demand memory loads and stores. For further details, you may switch to the Bottom-up window and explore metric data per memory object.

A high DRAM Bandwidth Bound metric value indicates that your system spent a significant amount of time heavily utilizing the DRAM bandwidth. The calculation of this metric relies on the accurate maximum system DRAM bandwidth measurement provided in the System Bandwidth section below.

System Bandwidth

This section provides various system bandwidth-related properties detected by the product. Depending on the number of sockets on your system, the following types of system bandwidth are measured:

| Property | Description |
|---|---|
| Max DRAM System Bandwidth | Maximum DRAM bandwidth measured for the whole system (across all packages) by running a micro-benchmark before the collection starts. If the system has already been actively loaded at the moment of collection start (for example, with the attach mode), the value may be less accurate. |
| Max DRAM Single-Package Bandwidth | Maximum DRAM bandwidth for a single package measured by running a micro-benchmark before the collection starts. If the system has already been actively loaded at the moment of collection start (for example, with the attach mode), the value may be less accurate. |

These values are used to define the default High, Medium, and Low bandwidth utilization thresholds for the Bandwidth Utilization Histogram and to scale over-time bandwidth graphs in the Bottom-up view. By default, the system bandwidth is measured automatically for Memory Access analysis results. To enable this functionality for custom analysis results, make sure to select the Evaluate max DRAM bandwidth option.

Bandwidth Utilization Histogram

This histogram shows how much time the system bandwidth was utilized by a certain value (Bandwidth Domain) and provides thresholds to categorize bandwidth utilization as High, Medium, and Low. You can set the thresholds by moving the sliders at the bottom.
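As a rough illustration of the categorization described above, the following sketch sums elapsed time per utilization category. It is a minimal model, not the product's implementation. The function name, the sample data, and the 30% and 70% cutoffs are assumptions chosen for the example, whereas in the product the thresholds derive from the measured maximum bandwidth and the slider positions.

```python
def utilization_histogram(samples, max_bandwidth_gbs, low_cutoff=0.3, high_cutoff=0.7):
    """Sum elapsed time per bandwidth utilization category.

    samples: iterable of (duration_seconds, bandwidth_gb_per_s) pairs.
    low_cutoff, high_cutoff: hypothetical thresholds, expressed as
    fractions of the maximum bandwidth.
    """
    time_per_category = {"Low": 0.0, "Medium": 0.0, "High": 0.0}
    for duration, bandwidth in samples:
        utilization = bandwidth / max_bandwidth_gbs
        if utilization < low_cutoff:
            category = "Low"
        elif utilization < high_cutoff:
            category = "Medium"
        else:
            category = "High"
        time_per_category[category] += duration
    return time_per_category


# Hypothetical samples: (seconds spent, observed DRAM bandwidth in GB/s).
samples = [(1.0, 5.0), (2.0, 40.0), (0.5, 90.0), (1.5, 75.0)]
histogram = utilization_histogram(samples, max_bandwidth_gbs=100.0)
print(histogram)  # {'Low': 1.0, 'Medium': 2.0, 'High': 2.0}
```

Moving a slider corresponds to changing `low_cutoff` or `high_cutoff` in this model: the samples stay the same, but time shifts between categories.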

If you switch to the Bottom-up window and group the grid data by ../Bandwidth Utilization Type/.., you can identify functions or memory objects with high bandwidth utilization in the specific bandwidth domain.

If you select the Interconnect domain, you will be able to check whether the performance of your application is limited by the bandwidth of Interconnect links (inter-socket connections). Then, you may switch to the Bottom-up window and identify code and memory objects with NUMA issues.

Single-Package domains are displayed for systems with two or more CPU packages. The histogram for these domains shows the distribution of elapsed time per maximum bandwidth utilization among all packages. Use this data to identify situations where your application utilizes bandwidth on only a subset of CPU packages. In this case, the whole-system bandwidth utilization represented by domains like DRAM may be low, whereas performance is in fact limited by bandwidth utilization.
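The situation described above can be shown with simple arithmetic. The sketch below uses hypothetical numbers and a hypothetical function name, and it only illustrates the comparison between whole-system utilization and the utilization of the busiest package. It does not reproduce how the product derives its histogram.

```python
def system_and_peak_package_utilization(per_package_gbs, max_system_gbs, max_single_package_gbs):
    """Return (whole-system utilization, utilization of the busiest package).

    per_package_gbs: observed DRAM bandwidth per CPU package, in GB/s.
    max_system_gbs / max_single_package_gbs: maximum bandwidth values,
    analogous to those reported in the System Bandwidth section.
    """
    system = sum(per_package_gbs) / max_system_gbs
    peak_package = max(per_package_gbs) / max_single_package_gbs
    return system, peak_package


# Hypothetical two-package system where nearly all traffic hits package 0.
system, peak_package = system_and_peak_package_utilization(
    per_package_gbs=[45.0, 2.0],
    max_system_gbs=100.0,
    max_single_package_gbs=50.0,
)
print(f"system: {system:.0%}, busiest package: {peak_package:.0%}")
# system: 47%, busiest package: 90%
```

Here the system-wide figure looks moderate, while one package runs close to its limit.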

Interconnect bandwidth analysis is supported by the VTune Profiler for Intel microarchitecture code name Ivy Bridge EP and later.

To learn bandwidth capabilities, refer to your system specifications or run appropriate benchmarks to measure them; for example, Intel Memory Latency Checker can provide maximum achievable DRAM and Interconnect bandwidth.

Top Memory Objects by Latency (Linux* Targets Only)

If you enabled the Analyze memory object configuration option for the Memory Access analysis, the Summary window in the Memory Usage viewpoint displays memory objects (variables, data structures, arrays) that introduced the highest latency to the execution of your application.

Memory objects identification is supported only for Linux targets and only for processors based on Intel microarchitecture code name Sandy Bridge and later.

Only metrics based on DLA-capable hardware events are applicable to the memory objects analysis. For example, the CPU Time metric is based on the Clockticks event, which is not DLA-capable, so it cannot be applied to memory objects. Examples of applicable metrics are Loads, Stores, LLC Miss Count, and Average Latency.

Clicking an object in the table opens the Bottom-up window with the grid data grouped by Memory Object/Function/Allocation Stack. The selected hotspot object is highlighted.

Top Tasks

This section provides a list of tasks that took the most time to execute, where tasks are either code regions marked with Task API, or system tasks enabled to monitor Ftrace* events, Atrace* events, Intel Media SDK programs, OpenCL™ kernels, and so on.

Clicking a task type in the table opens the grid view (for example, Bottom-up or Event Count) grouped by the Task Type granularity. See Task Analysis for more information.

Latency Histogram

This histogram shows a distribution of loads per latency (in cycles).
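To make the idea of a distribution of loads per latency concrete, the following sketch buckets load latencies into fixed-width bins. The bin width of 50 cycles, the sample values, and the function name are assumptions for illustration only. The product chooses its own binning.

```python
from collections import Counter


def latency_histogram(latencies_cycles, bin_width=50):
    """Count loads per latency bin; keys are the lower bound of each bin in cycles."""
    counts = Counter((latency // bin_width) * bin_width for latency in latencies_cycles)
    return dict(sorted(counts.items()))


# Hypothetical per-load latencies, in cycles.
loads = [4, 12, 48, 60, 210, 215, 330]
load_histogram = latency_histogram(loads)
print(load_histogram)  # {0: 3, 50: 1, 200: 2, 300: 1}
```

A distribution with many loads in the high-latency bins suggests that a large share of accesses is served from distant levels of the memory hierarchy.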

Collection and Platform Info

This section provides the following data:

| Field | Description |
|---|---|
| Application Command Line | Path to the target application. |
| Operating System | Operating system used for the collection. |
| Computer Name | Name of the computer used for the collection. |
| Result Size | Size of the result collected by the VTune Profiler. |
| Collection start time | Start time (in UTC format) of the external collection. Explore the Timeline pane to track the performance statistics provided by the custom collector over time. |
| Collection stop time | Stop time (in UTC format) of the external collection. Explore the Timeline pane to track the performance statistics provided by the custom collector over time. |
| Collector type | Type of the data collector used for the analysis. The following types are possible: |
| CPU Information | |
| Name | Name of the processor used for the collection. |
| Frequency | Frequency of the processor used for the collection. |
| Logical CPU Count | Logical CPU count for the machine used for the collection. |
| Physical Core Count | Number of physical cores on the system. |
| User Name | User launching the data collection. This field is available if you enabled the per-user event-based sampling collection mode during the product installation. |
| GPU Information | |
| Name | Name of the Graphics installed on the system. |
| Vendor | GPU vendor. |
| Driver | Version of the graphics driver installed on the system. |
| Stepping | Microprocessor version. |
| EU Count | Number of execution units (EUs) in the Render and GPGPU engine. This data is Intel® HD Graphics and Intel® Iris® Graphics (further: Intel Graphics) specific. |
| Max EU Thread Count | Maximum number of threads per execution unit. This data is Intel Graphics specific. |
| Max Core Frequency | Maximum frequency of the Graphics processor. This data is Intel Graphics specific. |
| Graphics Performance Analysis | GPU metrics collection is enabled on the hardware level. This data is Intel Graphics specific. NOTE: Some systems disable collection of extended metrics such as L3 misses, memory accesses, sampler busyness, SLM accesses, and others in the BIOS. On some systems you can set a BIOS option to enable this collection. The presence or absence of the option and its name are BIOS vendor specific. Look for the Intel® Graphics Performance Analyzers option (or similar) in your BIOS and set it to Enabled. |